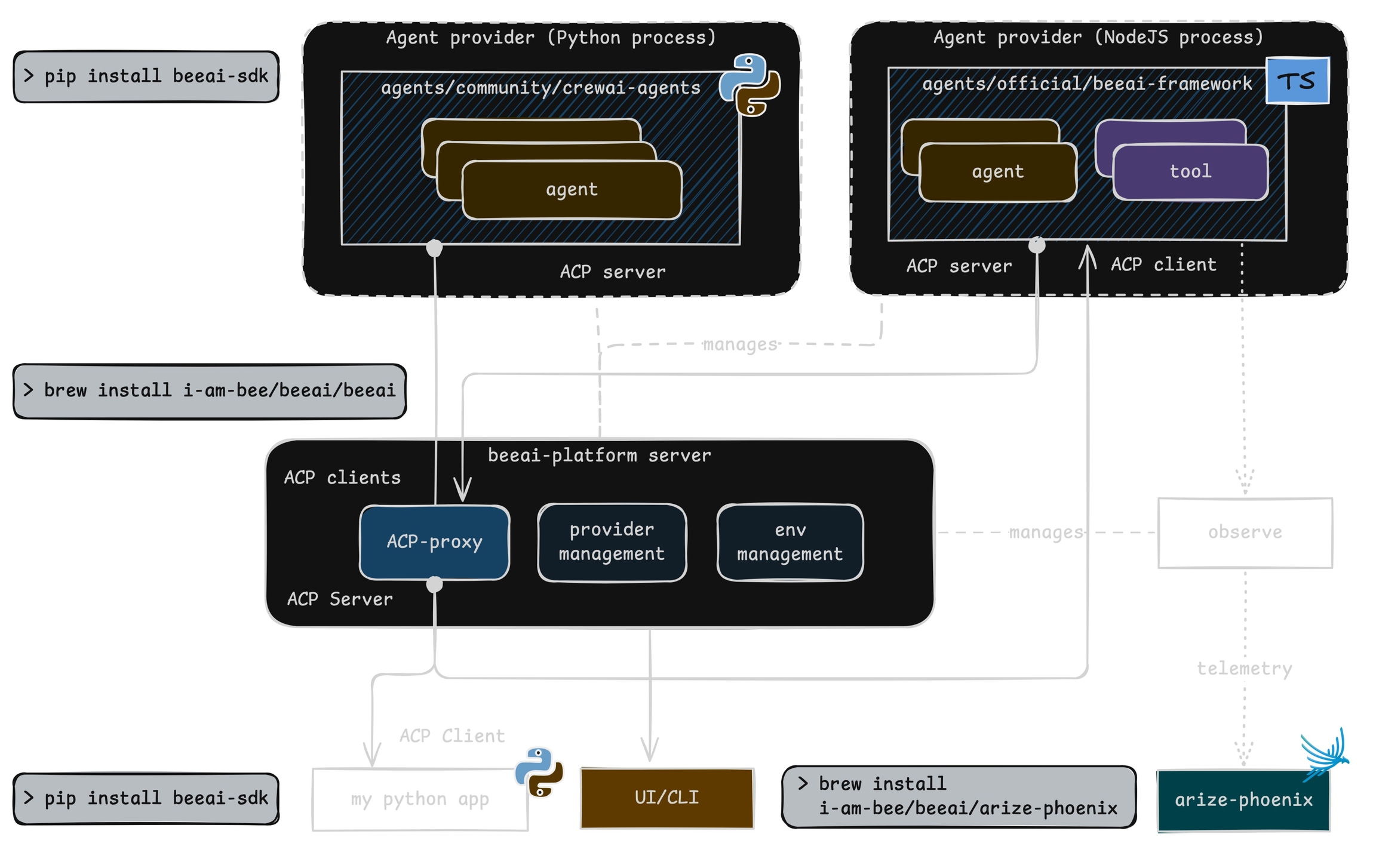

BeeAI is an open-source platform that enables developers to discover, run, and compose AI agents from any framework, facilitating the creation of interoperable multi-agent systems.

Auto-instrument and observe BeeAI agents

This module provides automatic instrumentation for the BeeAI framework. It integrates with the @opentelemetry/sdk-trace-node package to collect and export telemetry data.

Install packages:

```shell
npm install --save beeai-framework \
  @arizeai/openinference-instrumentation-beeai \
  @arizeai/openinference-semantic-conventions \
  @opentelemetry/sdk-trace-node \
  @opentelemetry/resources \
  @opentelemetry/exporter-trace-otlp-proto \
  @opentelemetry/semantic-conventions \
  @opentelemetry/instrumentation
```

To instrument your application, import and enable BeeAIInstrumentation. Create the instrumentation.js file:

```javascript
import {
  NodeTracerProvider,
  SimpleSpanProcessor,
  ConsoleSpanExporter,
} from "@opentelemetry/sdk-trace-node";
import { diag, DiagConsoleLogger, DiagLogLevel } from "@opentelemetry/api";
import { resourceFromAttributes } from "@opentelemetry/resources";
import { OTLPTraceExporter } from "@opentelemetry/exporter-trace-otlp-proto";
import { ATTR_SERVICE_NAME } from "@opentelemetry/semantic-conventions";
import { SEMRESATTRS_PROJECT_NAME } from "@arizeai/openinference-semantic-conventions";
import * as beeaiFramework from "beeai-framework";
import { registerInstrumentations } from "@opentelemetry/instrumentation";
import { BeeAIInstrumentation } from "@arizeai/openinference-instrumentation-beeai";

const COLLECTOR_ENDPOINT = "your-phoenix-collector-endpoint";

const provider = new NodeTracerProvider({
  resource: resourceFromAttributes({
    [ATTR_SERVICE_NAME]: "beeai-project",
    [SEMRESATTRS_PROJECT_NAME]: "beeai-project",
  }),
  spanProcessors: [
    // Print spans to the console as well as exporting them to Phoenix
    new SimpleSpanProcessor(new ConsoleSpanExporter()),
    new SimpleSpanProcessor(
      new OTLPTraceExporter({
        url: `${COLLECTOR_ENDPOINT}/v1/traces`,
        // (optional) if connecting to Phoenix with Authentication enabled
        headers: { Authorization: `Bearer ${process.env.PHOENIX_API_KEY}` },
      }),
    ),
  ],
});

provider.register();

const beeAIInstrumentation = new BeeAIInstrumentation();
beeAIInstrumentation.manuallyInstrument(beeaiFramework);

registerInstrumentations({
  instrumentations: [beeAIInstrumentation],
});

console.log("👀 OpenInference initialized");
```

Sample agent built using BeeAI with automatic tracing:

```javascript
// Load the instrumentation before any beeai-framework module
import "./instrumentation.js";
import { ToolCallingAgent } from "beeai-framework/agents/toolCalling/agent";
import { TokenMemory } from "beeai-framework/memory/tokenMemory";
import { DuckDuckGoSearchTool } from "beeai-framework/tools/search/duckDuckGoSearch";
import { OpenMeteoTool } from "beeai-framework/tools/weather/openMeteo";
import { OpenAIChatModel } from "beeai-framework/adapters/openai/backend/chat";

const llm = new OpenAIChatModel(
  "gpt-4o",
  {},
  { apiKey: 'your-openai-api-key' }
);

const agent = new ToolCallingAgent({
  llm,
  memory: new TokenMemory(),
  tools: [
    new DuckDuckGoSearchTool(),
    new OpenMeteoTool(), // weather tool
  ],
});

async function main() {
  const response = await agent.run({ prompt: "What's the current weather in Berlin?" });
  console.log(`Agent 🤖 : `, response.result.text);
}

main();
```

Phoenix provides visibility into your BeeAI agent operations by automatically tracing all interactions.

Add the following at the top of your instrumentation.js to see OpenTelemetry diagnostic logs in your console while debugging:

```javascript
// Skip this import if instrumentation.js already imports these names
import { diag, DiagConsoleLogger, DiagLogLevel } from "@opentelemetry/api";

// Enable OpenTelemetry diagnostic logging
diag.setLogger(new DiagConsoleLogger(), DiagLogLevel.INFO);
```

If traces aren't appearing, a common cause is an outdated beeai-framework package. Check the diagnostic logs for version or initialization errors and update your package as needed.

You can specify a custom tracer provider for BeeAI instrumentation in multiple ways. In each snippet, customTracerProvider stands for a tracer provider you have already created.

Pass it to the constructor:

```javascript
const beeAIInstrumentation = new BeeAIInstrumentation({
  tracerProvider: customTracerProvider,
});
beeAIInstrumentation.manuallyInstrument(beeaiFramework);
```

Set it on the instance with setTracerProvider:

```javascript
const beeAIInstrumentation = new BeeAIInstrumentation();
beeAIInstrumentation.setTracerProvider(customTracerProvider);
beeAIInstrumentation.manuallyInstrument(beeaiFramework);
```

Pass it to registerInstrumentations:

```javascript
const beeAIInstrumentation = new BeeAIInstrumentation();
beeAIInstrumentation.manuallyInstrument(beeaiFramework);

registerInstrumentations({
  instrumentations: [beeAIInstrumentation],
  tracerProvider: customTracerProvider,
});
```

Instrument and observe BeeAI agents

Phoenix provides seamless observability and tracing for BeeAI agents through the Python OpenInference instrumentation package.

```shell
pip install openinference-instrumentation-beeai beeai-framework
```

Connect to your Phoenix instance using the register function.

```python
from phoenix.otel import register

# Configure the Phoenix tracer
tracer_provider = register(
    project_name="beeai-agent",  # Default is 'default'
    auto_instrument=True,  # Auto-instrument your app based on installed OI dependencies
)
```

Sample agent built using BeeAI with automatic tracing:

```python
import asyncio

from beeai_framework.agents.react import ReActAgent
from beeai_framework.agents.types import AgentExecutionConfig
from beeai_framework.backend.chat import ChatModel
from beeai_framework.backend.types import ChatModelParameters
from beeai_framework.memory import TokenMemory
from beeai_framework.tools.search.duckduckgo import DuckDuckGoSearchTool
from beeai_framework.tools.search.wikipedia import WikipediaTool
from beeai_framework.tools.tool import AnyTool
from beeai_framework.tools.weather.openmeteo import OpenMeteoTool

llm = ChatModel.from_name(
    "ollama:granite3.1-dense:8b",
    ChatModelParameters(temperature=0),
)

tools: list[AnyTool] = [
    WikipediaTool(),
    OpenMeteoTool(),
    DuckDuckGoSearchTool(),
]

agent = ReActAgent(llm=llm, tools=tools, memory=TokenMemory(llm))

prompt = "What's the current weather in Las Vegas?"


async def main() -> None:
    response = await agent.run(
        prompt=prompt,
        execution=AgentExecutionConfig(
            max_retries_per_step=3, total_max_retries=10, max_iterations=20
        ),
    )
    print("Agent 🤖 : ", response.result.text)


asyncio.run(main())
```

Phoenix provides visibility into your BeeAI agent operations by automatically tracing all interactions.
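Because `auto_instrument=True` depends on which OpenInference packages are installed, a quick pre-flight check can help when no traces show up. The following is a minimal sketch using only the standard library; the helper names `installed_versions` and `missing_packages` are hypothetical, and the package list is simply copied from the pip commands in this guide.

```python
from importlib.metadata import PackageNotFoundError, version

# Distribution names taken from the pip commands in this guide.
REQUIRED_PACKAGES = [
    "beeai-framework",
    "openinference-instrumentation-beeai",
    "arize-phoenix-otel",
]


def installed_versions(packages):
    """Map each distribution name to its installed version, or None if missing."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


def missing_packages(packages):
    """Return the names that are not installed in the current environment."""
    return [name for name, ver in installed_versions(packages).items() if ver is None]


if __name__ == "__main__":
    # Print one line per package so a missing dependency is easy to spot.
    for name, ver in installed_versions(REQUIRED_PACKAGES).items():
        print(f"{name}: {ver or 'NOT INSTALLED'}")
```

Running this before the agent script shows at a glance whether the instrumentation package is present in the active environment.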

Sign up for Phoenix:

Sign up for an Arize Phoenix account at https://app.phoenix.arize.com/login

Click Create Space, then follow the prompts to create and launch your space.

Install packages:

```shell
pip install arize-phoenix-otel
```

Set your Phoenix endpoint and API Key:

From your new Phoenix Space

Create your API key from the Settings page

Copy your Hostname from the Settings page

In your code, set your endpoint and API key:

```python
import os

os.environ["PHOENIX_API_KEY"] = "ADD YOUR PHOENIX API KEY"
os.environ["PHOENIX_COLLECTOR_ENDPOINT"] = "ADD YOUR PHOENIX HOSTNAME"

# If you created your Phoenix Cloud instance before June 24th, 2025,
# you also need to set the API key as a header:
# os.environ["PHOENIX_CLIENT_HEADERS"] = f"api_key={os.getenv('PHOENIX_API_KEY')}"
```

Launch your local Phoenix instance:

```shell
pip install arize-phoenix
phoenix serve
```

For details on customizing a local terminal deployment, see Terminal Setup.

Install packages:

```shell
pip install arize-phoenix-otel
```

Set your Phoenix endpoint:

```python
import os

os.environ["PHOENIX_COLLECTOR_ENDPOINT"] = "http://localhost:6006"
```

See Terminal for more details.
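To see what an exporter would be pointed at, the environment variables can be turned into a URL and headers. This is a minimal sketch, not how `register` resolves its configuration: it assumes the `/v1/traces` path and `Bearer` header used in the JavaScript exporter example in this guide, and `phoenix_export_settings` is a hypothetical helper name.

```python
import os

# Local default used in this guide when no endpoint is configured.
DEFAULT_ENDPOINT = "http://localhost:6006"


def phoenix_export_settings(env=None):
    """Build an OTLP traces URL and headers from Phoenix environment variables.

    Assumption: the traces path is '<endpoint>/v1/traces', as in the
    JavaScript exporter example; this helper is for inspection only.
    """
    env = os.environ if env is None else env
    endpoint = env.get("PHOENIX_COLLECTOR_ENDPOINT", DEFAULT_ENDPOINT).rstrip("/")
    settings = {"url": f"{endpoint}/v1/traces", "headers": {}}
    api_key = env.get("PHOENIX_API_KEY")
    if api_key:
        # Only needed when Phoenix has authentication enabled.
        settings["headers"]["Authorization"] = f"Bearer {api_key}"
    return settings


if __name__ == "__main__":
    print(phoenix_export_settings()["url"])
```

Passing a plain dict instead of `os.environ` makes it easy to try different endpoint values without changing the process environment.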

Pull the latest Phoenix image from Docker Hub:

```shell
docker pull arizephoenix/phoenix:latest
```

Run your containerized instance:

```shell
docker run -p 6006:6006 arizephoenix/phoenix:latest
```

This exposes Phoenix on localhost:6006.
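Before running an agent, it can be useful to confirm that something is actually listening on the published port. The sketch below is a plain TCP check using the standard library; it does not verify that the listener is Phoenix, and the helper names `parse_host_port` and `is_listening` are hypothetical.

```python
import socket
from urllib.parse import urlparse


def parse_host_port(endpoint):
    """Split an endpoint such as 'http://localhost:6006' into (host, port)."""
    parsed = urlparse(endpoint)
    if not parsed.hostname:
        raise ValueError(f"endpoint has no host: {endpoint!r}")
    # Fall back to the scheme's default port when none is given.
    port = parsed.port or (443 if parsed.scheme == "https" else 80)
    return parsed.hostname, port


def is_listening(endpoint, timeout=2.0):
    """Return True if a TCP connection to the endpoint's host and port succeeds."""
    host, port = parse_host_port(endpoint)
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    endpoint = "http://localhost:6006"
    print(endpoint, "reachable" if is_listening(endpoint) else "not reachable")
```

If the check reports the port as not reachable, confirm that the container is running and that the `-p 6006:6006` mapping was included.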

Install packages:

```shell
pip install arize-phoenix-otel
```

Set your Phoenix endpoint:

```python
import os

os.environ["PHOENIX_COLLECTOR_ENDPOINT"] = "http://localhost:6006"
```

For more info on using Phoenix with Docker, see Docker.

Install packages:

```shell
pip install arize-phoenix
```

Launch Phoenix:

```python
import phoenix as px

px.launch_app()
```