AI Observability and Evaluation

Running Phoenix for the first time? Select a quickstart below.

Check out a comprehensive list of example notebooks for LLM Traces, Evals, RAG Analysis, and more.

Add instrumentation for popular packages and libraries such as OpenAI, LangGraph, Vercel AI SDK and more.

Join the Phoenix Slack community to ask questions, share findings, provide feedback, and connect with other developers.

Phoenix is a comprehensive platform designed to enable observability across every layer of an LLM-based system, empowering teams to build, optimize, and maintain high-quality applications and agents efficiently.

During the development phase, Phoenix offers essential tools for debugging, experimentation, evaluation, prompt tracking, and search and retrieval.

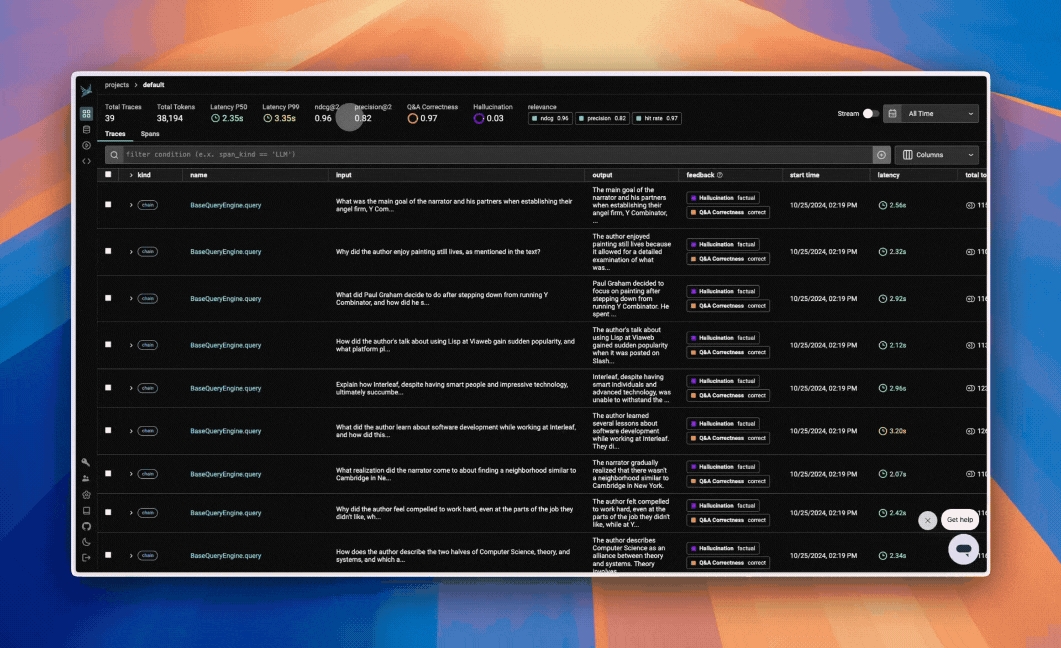

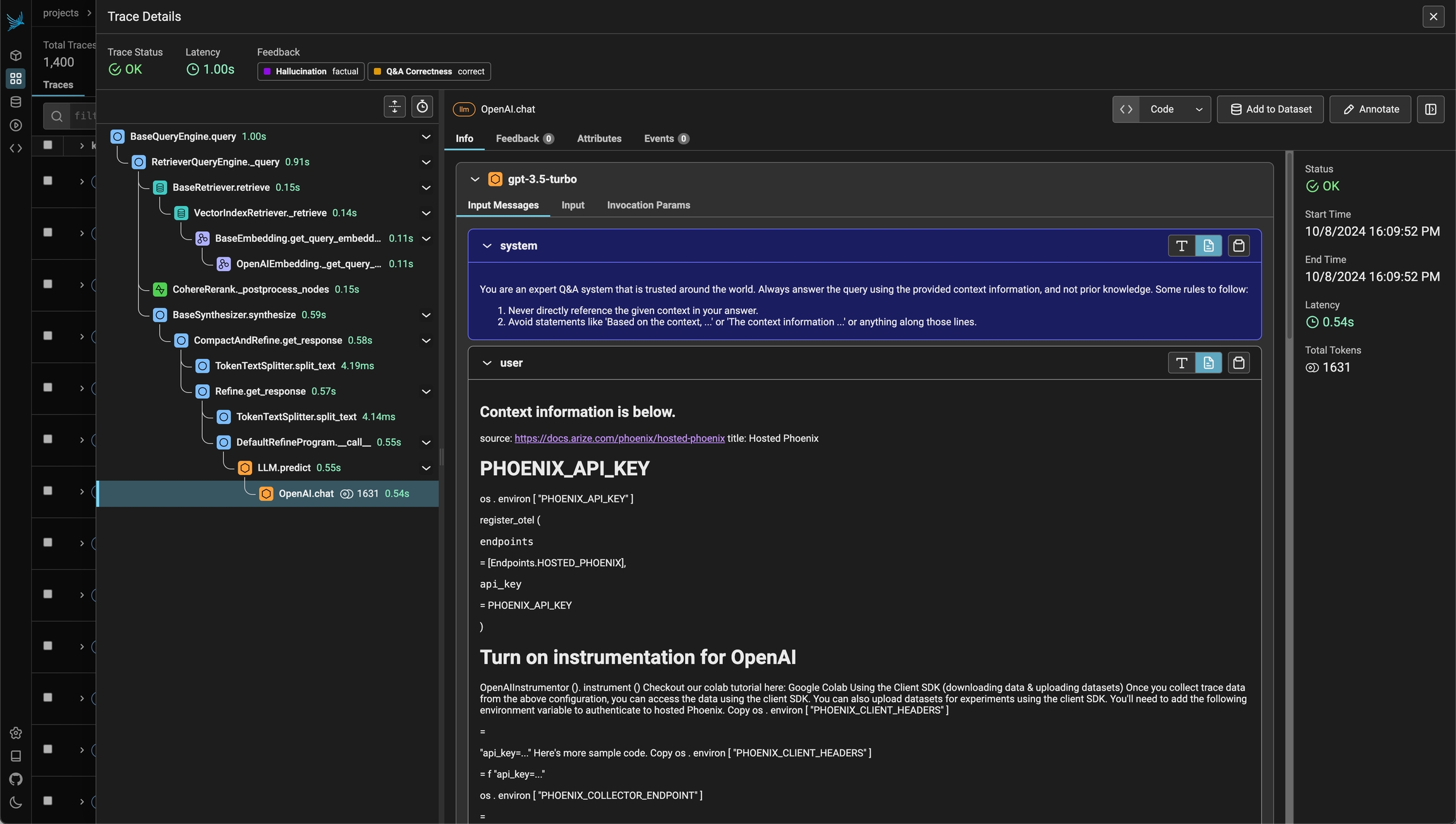

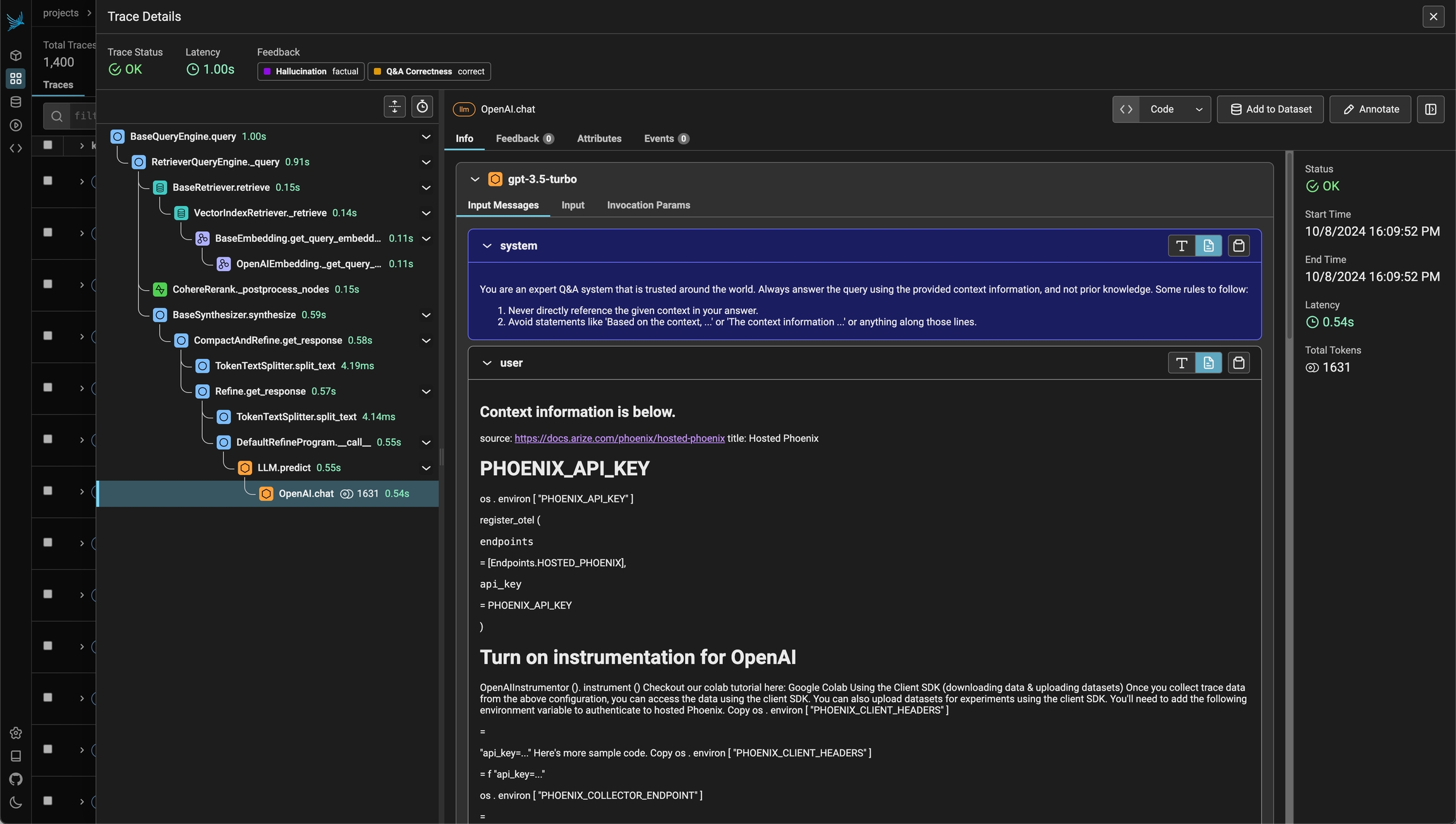

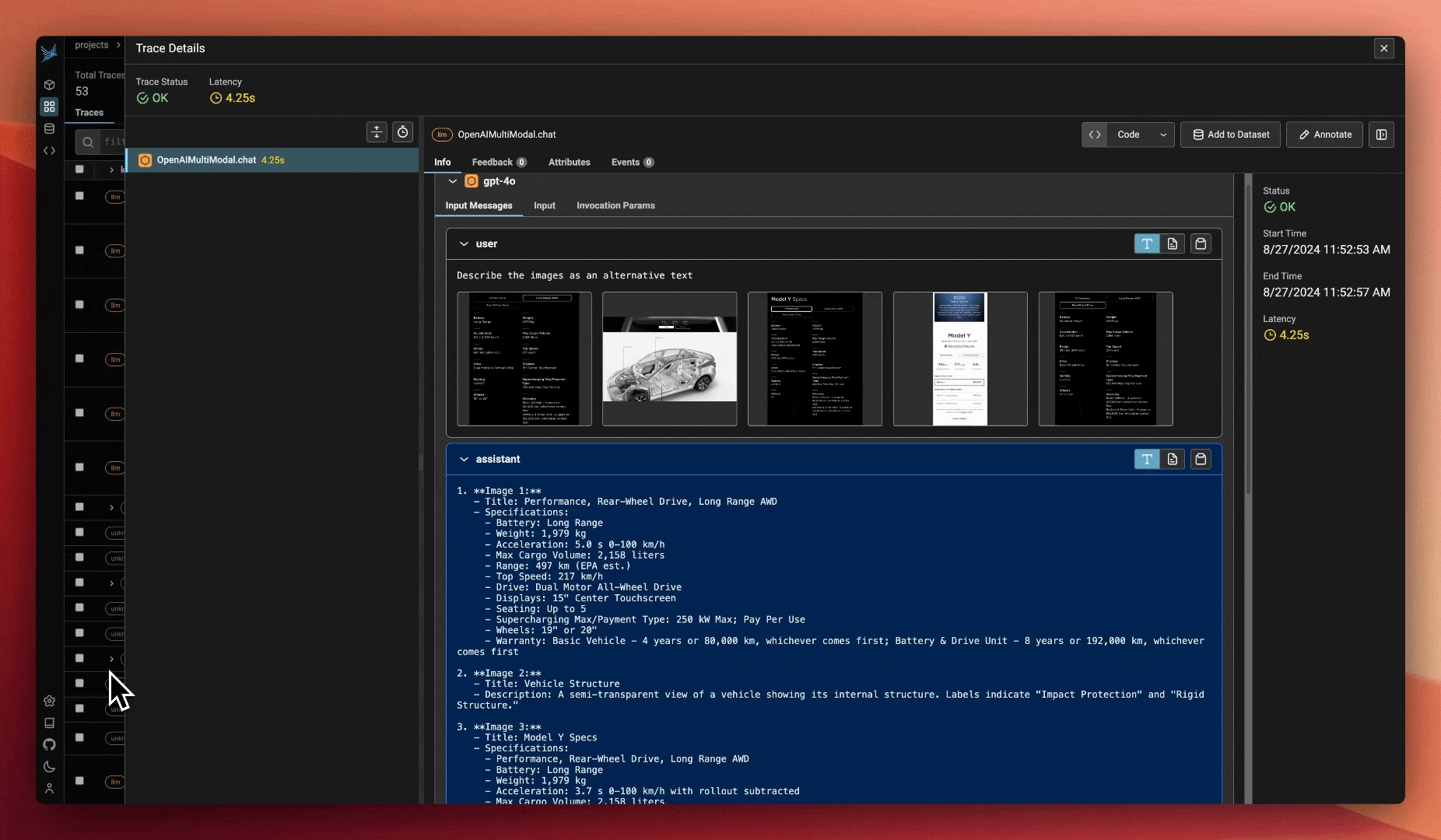

Phoenix's tracing and span analysis capabilities are invaluable during the prototyping and debugging stages. By instrumenting application code with Phoenix, teams gain detailed insights into the execution flow, making it easier to identify and resolve issues. Developers can drill down into specific spans, analyze performance metrics, and access relevant logs and metadata to streamline debugging efforts.

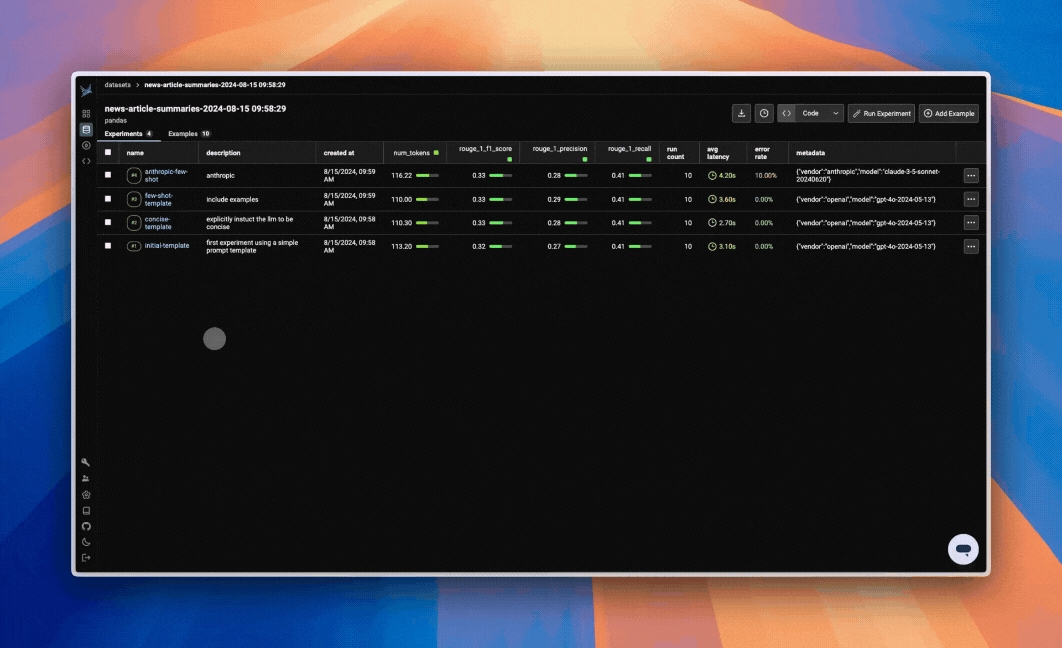

Leverage experiments to measure prompt and model performance. Typically during this early stage, you'll focus on gathering a robust set of test cases and evaluation metrics to test initial iterations of your application. Experiments at this stage may resemble unit tests, as they're geared towards ensuring your application performs correctly.

Either as a part of experiments or as a standalone feature, evaluations help you understand how your app is performing at a granular level. Typical evaluations might be correctness evals compared against a ground-truth dataset, or LLM-as-a-judge evals that detect hallucinations or judge the relevance of RAG output.
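As a rough illustration of a correctness eval against a ground-truth dataset, the sketch below scores a stand-in application by exact match. It is plain Python rather than Phoenix's evaluation API; `fake_app`, `exact_match`, `run_experiment`, and the test cases are hypothetical names invented for this example.

```python
from typing import Callable, Dict, List

# Hypothetical ground-truth test cases: each pairs an input with the expected answer.
TEST_CASES = [
    {"question": "2 + 2", "expected": "4"},
    {"question": "capital of France", "expected": "Paris"},
    {"question": "largest planet", "expected": "Jupiter"},
]


def fake_app(question: str) -> str:
    """Stand-in for an LLM application; answers from a tiny lookup table."""
    answers = {"2 + 2": "4", "capital of France": "paris", "largest planet": "Saturn"}
    return answers.get(question, "")


def exact_match(output: str, expected: str) -> bool:
    """Correctness eval: case-insensitive exact match against the ground truth."""
    return output.strip().lower() == expected.strip().lower()


def run_experiment(app: Callable[[str], str], cases: List[Dict[str, str]]) -> dict:
    """Run every test case through the app; collect per-case labels and an overall score."""
    results = []
    for case in cases:
        output = app(case["question"])
        label = "correct" if exact_match(output, case["expected"]) else "incorrect"
        results.append({"question": case["question"], "output": output, "label": label})
    accuracy = sum(r["label"] == "correct" for r in results) / len(results)
    return {"results": results, "accuracy": accuracy}


report = run_experiment(fake_app, TEST_CASES)
```

Here two of the three cases match the ground truth, so `report["accuracy"]` is 2/3. Swapping in a different `app` callable and re-running gives a directly comparable score, which is the unit-test-like workflow described above.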

Prompt engineering is critical to how a model behaves. While there are other methods, such as fine-tuning, to change behavior, prompt engineering is the simplest way to get started and often has the best ROI.

Instrument prompt and prompt variable collection to associate iterations of your app with the performance measured through evals and experiments. Phoenix tracks prompt templates, variables, and versions during execution to help you identify improvements and degradations.

Phoenix's search and retrieval optimization tools include an embeddings visualizer that helps teams understand how their data is being represented and clustered. This visual insight can guide decisions on indexing strategies, similarity measures, and data organization to improve the relevance and efficiency of search results.

In the testing and staging environment, Phoenix supports comprehensive evaluation, benchmarking, and data curation. Traces, experimentation, prompt tracking, and the embeddings visualizer remain important in the testing and staging phase, helping teams identify and resolve issues before deployment.

With a stable set of test cases and evaluations defined, you can now easily iterate on your application and view performance changes in Phoenix right away. Swap out models, prompts, or pipeline logic, and run your experiment to immediately see the impact on performance.

Phoenix's flexible evaluation framework supports thorough testing of LLM outputs. Teams can define custom metrics, collect user feedback, and leverage separate LLMs for automated assessment. Phoenix offers tools for analyzing evaluation results, identifying trends, and tracking improvements over time.

Phoenix assists in curating high-quality data for testing and fine-tuning. It provides tools for data exploration, cleaning, and labeling, enabling teams to curate representative data that covers a wide range of use cases and edge conditions.

Add guardrails to your application to prevent malicious and erroneous inputs and outputs. Guardrails will be visualized in Phoenix, and can be attached to spans and traces in the same fashion as evaluation metrics.

In production, Phoenix works hand-in-hand with Arize, which focuses on the production side of the LLM lifecycle. The integration ensures a smooth transition from development to production, with consistent tooling and metrics across both platforms.

Phoenix and Arize use the same collector frameworks in development and production. This allows teams to monitor latency, token usage, and other performance metrics, setting up alerts when thresholds are exceeded.

Phoenix's evaluation framework can be used to generate ongoing assessments of LLM performance in production. Arize complements this with online evaluations, enabling teams to set up alerts if evaluation metrics, such as hallucination rates, go beyond acceptable thresholds.

Phoenix and Arize together help teams identify data points for fine-tuning based on production performance and user feedback. This targeted approach ensures that fine-tuning efforts are directed towards the most impactful areas, maximizing the return on investment.

Phoenix, in collaboration with Arize, empowers teams to build, optimize, and maintain high-quality LLM applications throughout the entire lifecycle. By providing a comprehensive observability platform and seamless integration with production monitoring tools, Phoenix and Arize enable teams to deliver exceptional LLM-driven experiences with confidence and efficiency.

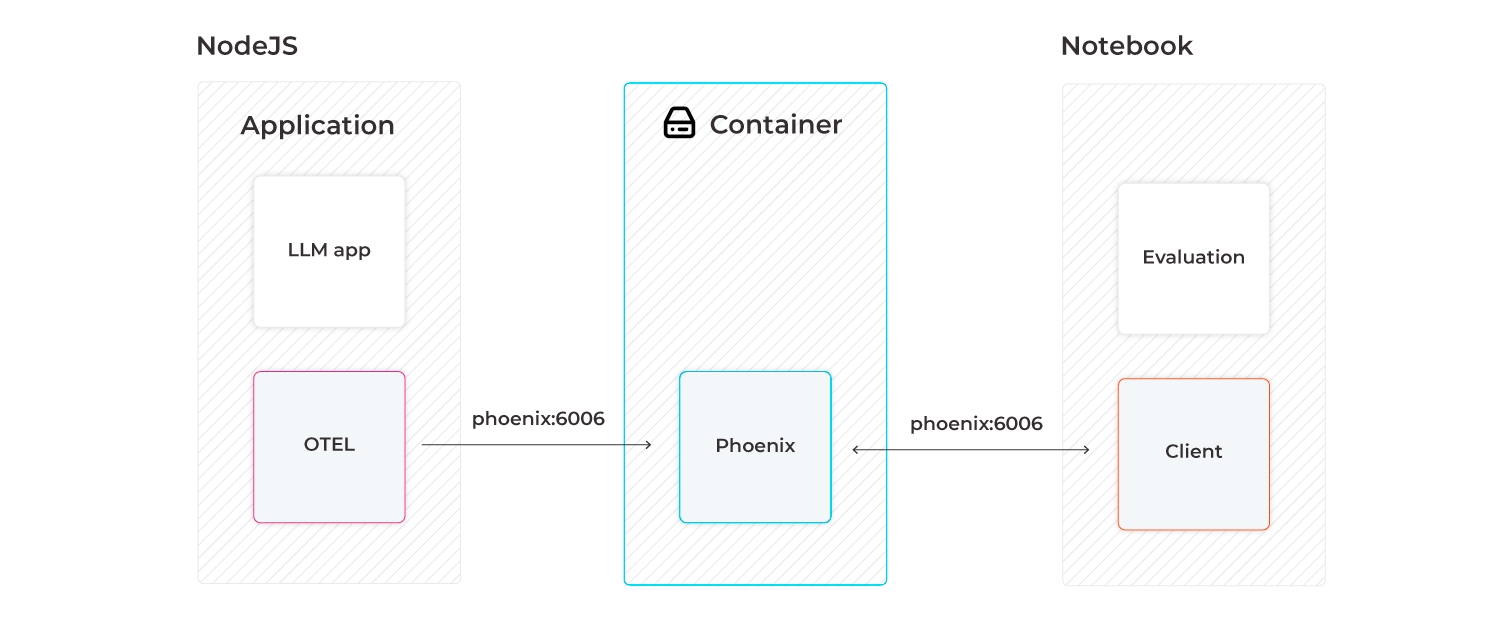

The Phoenix app can be run in various environments such as Colab and SageMaker notebooks, and can also be served from the terminal or a Docker container.

If you're using Phoenix Cloud, be sure to set the proper environment variables to connect to your instance:

To start Phoenix in a notebook environment, run:

This will start a local Phoenix server. You can initialize the Phoenix server with various kinds of data (traces, inferences).

If you want to start a Phoenix server to collect traces, you can also run Phoenix directly from the command line:

This will start the Phoenix server on port 6006. If you are running your instrumented notebook or application on the same machine, traces should automatically be exported to http://127.0.0.1:6006, so no additional configuration is needed. However, if the server is running remotely, you will have to modify the environment variable PHOENIX_COLLECTOR_ENDPOINT to point to that machine (e.g. http://<my-remote-machine>:<port>).
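For the remote case, one way to set the variable from Python is sketched below. The host name is a placeholder, and the port assumes the remote server listens on 6006 as described above. Setting the variable before any instrumentation is initialized is the safer ordering.

```python
import os

# Placeholder host: replace with the machine that runs your Phoenix server.
# Port 6006 assumes the remote server uses the port mentioned in the text.
os.environ["PHOENIX_COLLECTOR_ENDPOINT"] = "http://my-remote-machine:6006"

endpoint = os.environ["PHOENIX_COLLECTOR_ENDPOINT"]
```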

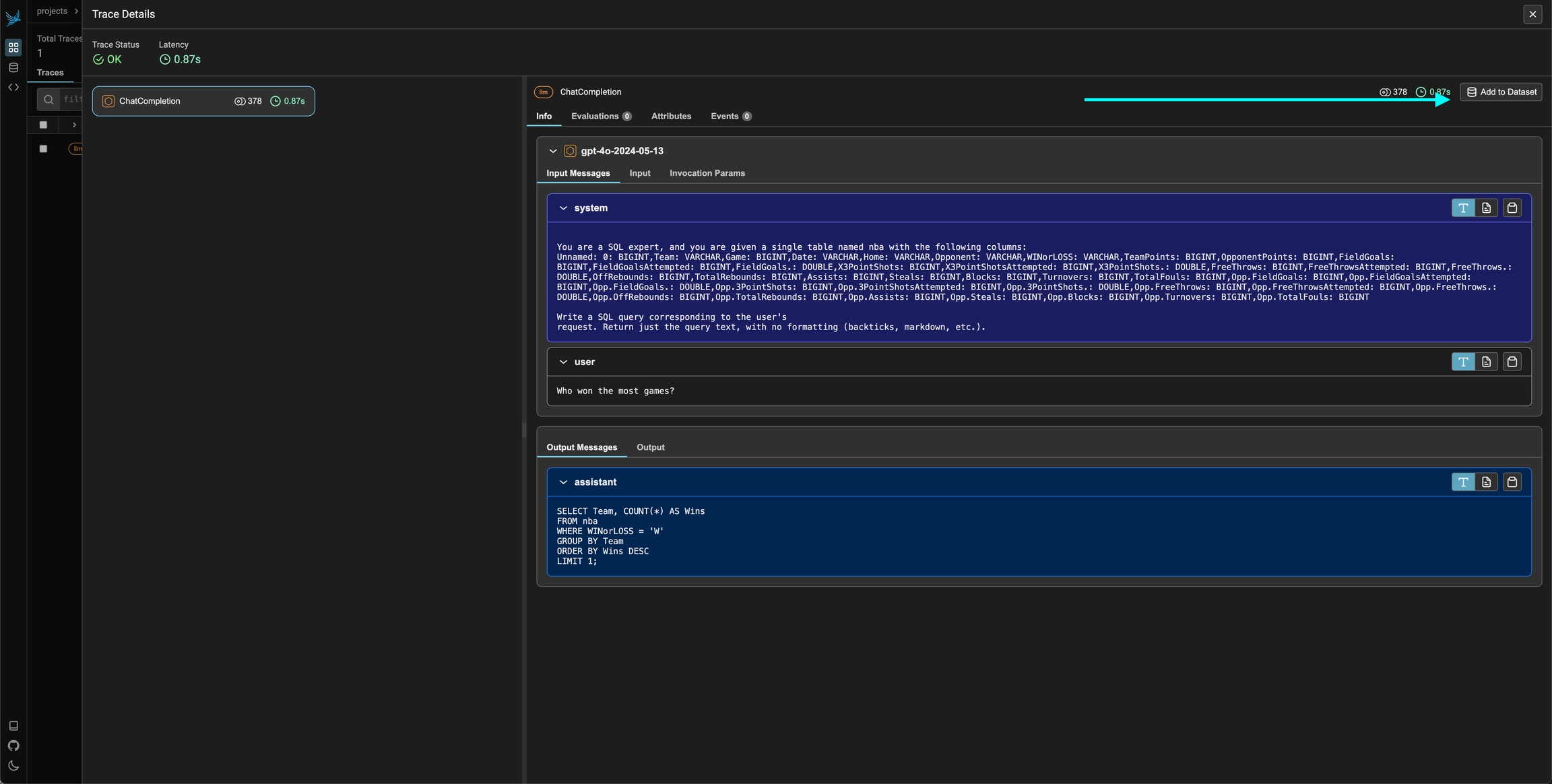

Tracing the execution of LLM applications using Telemetry

Phoenix traces AI applications via OpenTelemetry and has first-class integrations with LlamaIndex, LangChain, OpenAI, and others.

LLM tracing records the paths taken by requests as they propagate through multiple steps or components of an LLM application. For example, when a user interacts with an LLM application, tracing can capture the sequence of operations, such as document retrieval, embedding generation, language model invocation, and response generation to provide a detailed timeline of the request's execution.

Using Phoenix's tracing capabilities can provide important insights into the inner workings of your LLM application. By analyzing the collected trace data, you can identify and address various performance and operational issues and improve the overall reliability and efficiency of your system.

Application Latency: Identify and address slow invocations of LLMs, Retrievers, and other components within your application, enabling you to optimize performance and responsiveness.

Token Usage: Gain a detailed breakdown of token usage for your LLM calls, allowing you to identify and optimize the most expensive LLM invocations.

Runtime Exceptions: Capture and inspect critical runtime exceptions, such as rate-limiting events, that can help you proactively address and mitigate potential issues.

Retrieved Documents: Inspect the documents retrieved during a Retriever call, including the score and order in which they were returned to provide insight into the retrieval process.

Embeddings: Examine the embedding text used for retrieval and the underlying embedding model to allow you to validate and refine your embedding strategies.

LLM Parameters: Inspect the parameters used when calling an LLM, such as temperature and system prompts, to ensure optimal configuration and debugging.

Prompt Templates: Understand the prompt templates used during the prompting step and the variables that were applied, allowing you to fine-tune and improve your prompting strategies.

Tool Descriptions: View the descriptions and function signatures of the tools your LLM has been given access to in order to better understand and control your LLM’s capabilities.

LLM Function Calls: For LLMs with function call capabilities (e.g., OpenAI), you can inspect the function selection and function messages in the input to the LLM, further improving your ability to debug and optimize your application.

By using tracing in Phoenix, you can gain increased visibility into your LLM application, empowering you to identify and address performance bottlenecks, optimize resource utilization, and ensure the overall reliability and effectiveness of your system.

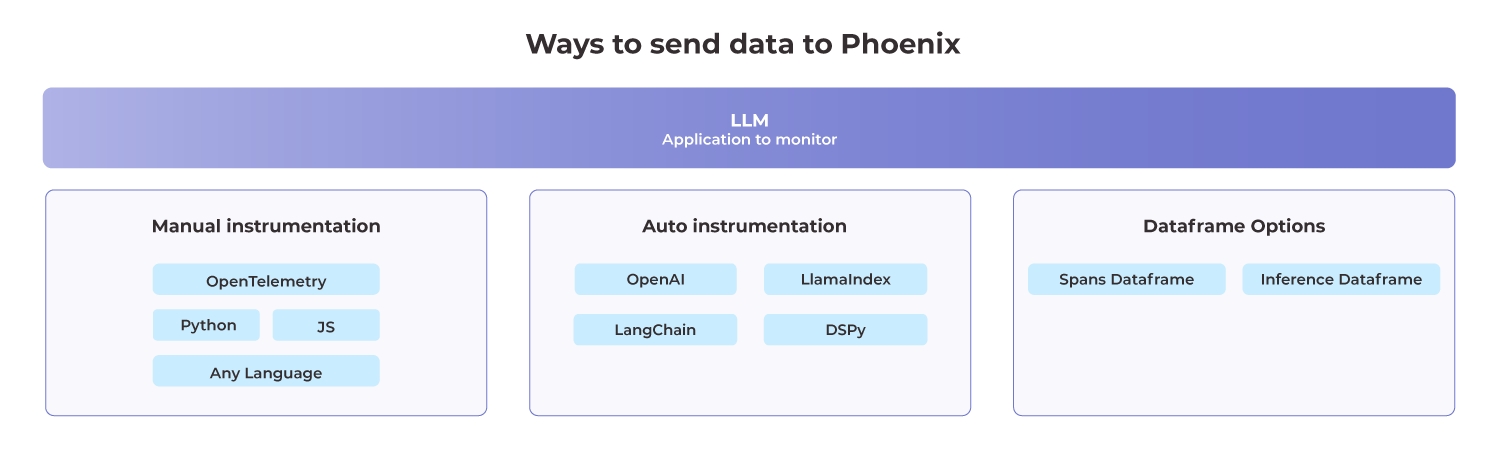

Phoenix supports three main options to collect traces:

This example uses options 1 and 2.

To collect traces from your application, you must configure an OpenTelemetry TracerProvider to send traces to Phoenix.

Functions can be traced using decorators:

Input and output attributes are set automatically based on my_func's parameters and return value.

OpenInference libraries must be installed before calling the register function.

You should now see traces in Phoenix!

Phoenix supports three main options to collect traces:

This example uses options 2 and 3.

Grab your API key from the Keys option on the left bar.

In your code, configure environment variables for your endpoint and API key:

To collect traces from your application, you must configure an OpenTelemetry TracerProvider to send traces to Phoenix.

In a new file called instrumentation.ts (or .js if applicable):

Remember to add your environment variables to your shell environment before running this sample! Uncomment one of the authorization headers above if you plan to connect to an authenticated Phoenix instance.

Now, import this file at the top of your main program entrypoint, or invoke it with the Node CLI's require flag:

Our program is now ready to trace calls made by an LLM library, but it will not do anything just yet. Let's choose an instrumentation library to collect our traces, and register it with our Provider.

Update your instrumentation.ts file, registering the instrumentation. Steps will vary depending on whether your project is configured for CommonJS or ESM style module resolution.

Finally, in your app code, invoke OpenAI:

You should now see traces in Phoenix!

Tracing is a critical part of AI Observability and should be used both in production and development.

This section contains details on Tracing features:

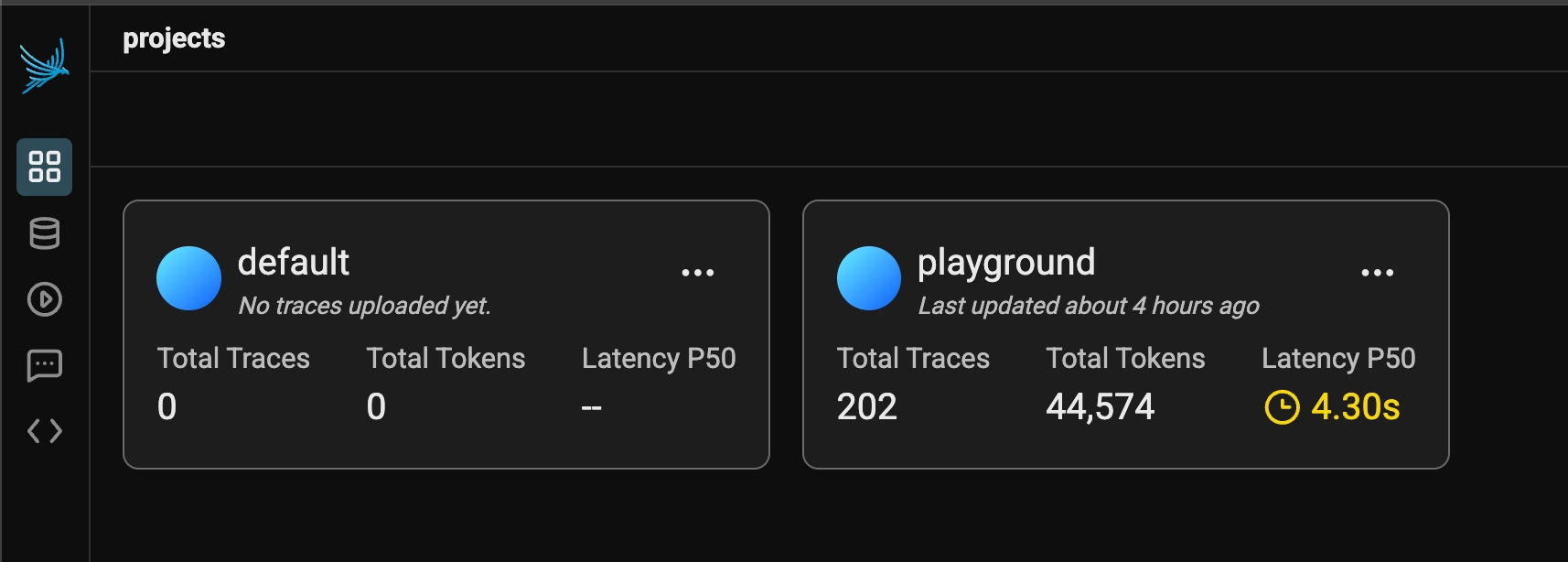

Use projects to organize your LLM traces

Projects provide organizational structure for your AI applications, allowing you to logically separate your observability data. This separation is essential for maintaining clarity and focus.

With Projects, you can:

Segregate traces by environment (development, staging, production)

Isolate different applications or use cases

Track separate experiments without cross-contamination

Maintain dedicated evaluation spaces for specific initiatives

Create team-specific workspaces for collaborative analysis

Projects act as containers that keep related traces and conversations together while preventing them from interfering with unrelated work. This organization becomes increasingly valuable as you scale - allowing you to easily switch between contexts without losing your place or mixing data.

The Project structure also enables comparative analysis across different implementations, models, or time periods. You can run parallel versions of your application in separate projects, then analyze the differences to identify improvements or regressions.

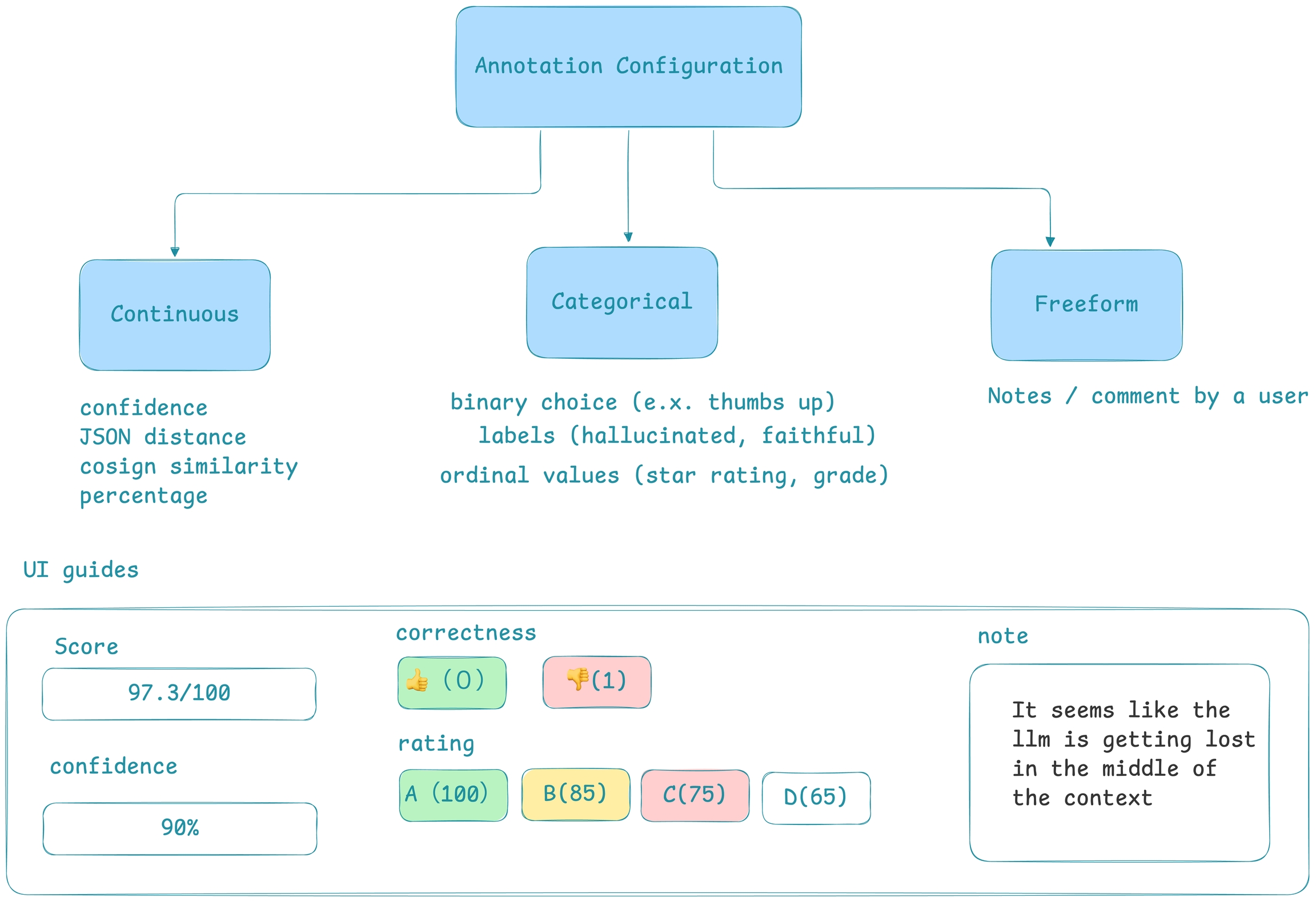

To improve your LLM application iteratively, it's vital to collect feedback, annotate data during human review, and establish an evaluation pipeline so that you can monitor your application. In Phoenix, this type of feedback is captured in the form of annotations.

Phoenix gives you the ability to annotate traces with feedback from the UI, your application, or wherever you would like to perform evaluation. Phoenix's annotation model is simple yet powerful - given an entity such as a span that is collected, you can assign a label and/or a score to that entity.

Navigate to the Feedback tab in this demo trace to see how LLM-based evaluations appear in Phoenix:

Learn more about the concepts in Concepts: Annotations

Configure Annotation Configs to guide human annotations.

Learn how to run evals in Running Evals on Traces

Learn how to log annotations via the client from your app or in a notebook

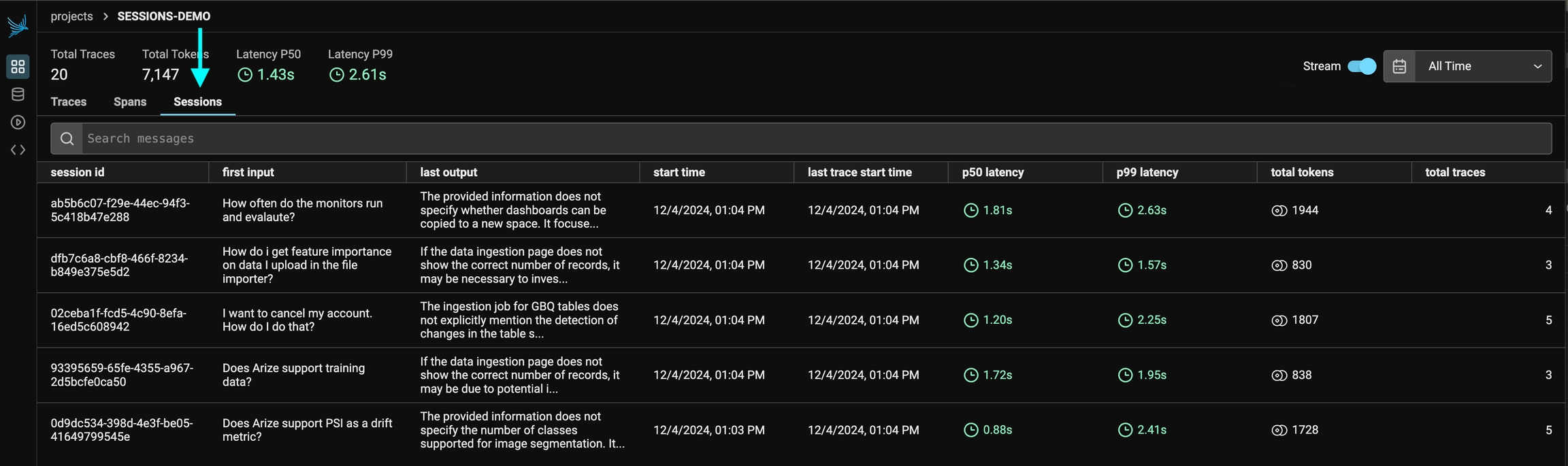

Track and analyze multi-turn conversations

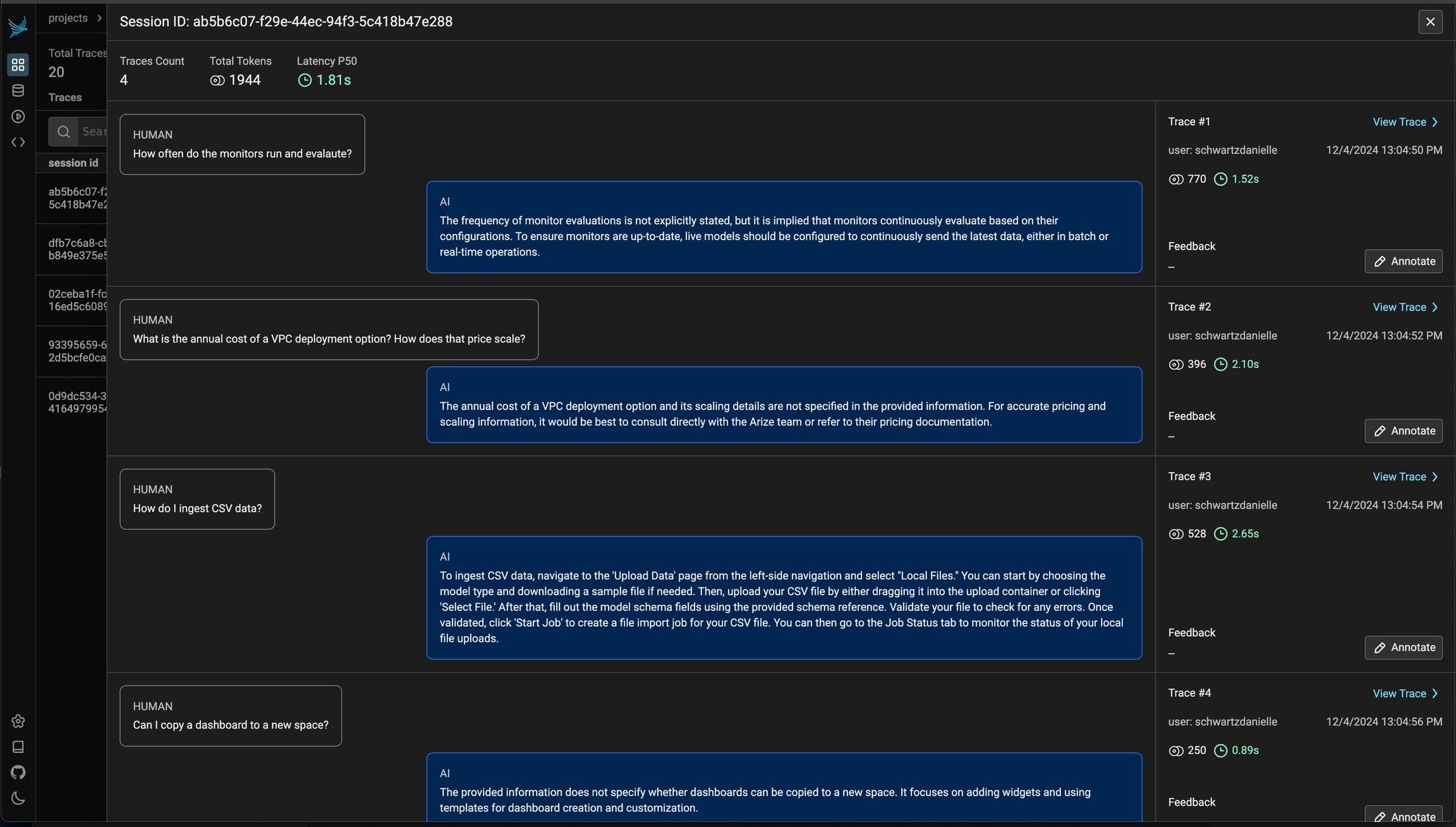

Sessions enable tracking and organizing related traces across multi-turn conversations with your AI application. When building conversational AI, maintaining context between interactions is critical - Sessions make this possible from an observability perspective.

With Sessions in Phoenix, you can:

Track the entire history of a conversation in a single thread

View conversations in a chatbot-like UI showing inputs and outputs of each turn

Search through sessions to find specific interactions

Track token usage and latency per conversation

This feature is particularly valuable for applications where context builds over time, like chatbots, virtual assistants, or any other multi-turn interaction. By tagging spans with a consistent session ID, you create a connected view that reveals how your application performs across an entire user journey.

Check out how to Setup Sessions

Guides on how to use traces

How to set custom attributes and semantic attributes to child spans and spans created by auto-instrumentors.

Create and customize spans for your use-case

How to query spans to construct DataFrames to use for evaluation

How to log evaluation results to annotate traces with evals

Learn how to use the phoenix.otel library

Learn how you can use basic OpenTelemetry to instrument your application.

Learn how to use Phoenix's decorators to easily instrument specific methods or code blocks in your application.

Set up tracing for your TypeScript application.

Learn about Projects in Phoenix, and how to use them.

Understand Sessions and how they can be used to group user conversations.

phoenix.otel is a lightweight wrapper around OpenTelemetry primitives with Phoenix-aware defaults.

These defaults are aware of environment variables you may have set to configure Phoenix:

PHOENIX_COLLECTOR_ENDPOINT

PHOENIX_PROJECT_NAME

PHOENIX_CLIENT_HEADERS

PHOENIX_API_KEY

PHOENIX_GRPC_PORT

phoenix.otel.register

The phoenix.otel module provides a high-level register function to configure OpenTelemetry tracing by setting a global TracerProvider. The register function can also configure headers and whether to process spans one by one or in batches.

If the PHOENIX_API_KEY environment variable is set, register will automatically add an authorization header to each span payload.

There are two ways to configure the collector endpoint:

Using environment variables

Using the endpoint keyword argument

If you're setting the PHOENIX_COLLECTOR_ENDPOINT environment variable, register will automatically try to send spans to your Phoenix server using gRPC.

Specifying the endpoint directly

When passing in the endpoint argument, you must specify the fully qualified endpoint. If the PHOENIX_GRPC_PORT environment variable is set, it will override the default gRPC port.

The HTTP transport protocol is inferred from the endpoint

The GRPC transport protocol is inferred from the endpoint

Additionally, the protocol argument can be used to enforce the OTLP transport protocol regardless of the endpoint. This might be useful in cases such as when the gRPC endpoint is bound to a different port than the default (4317). The valid protocols are "http/protobuf" and "grpc".
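The precedence described above (an explicit protocol always overrides whatever the endpoint suggests) can be sketched as follows. This is not the phoenix.otel implementation: `resolve_protocol` is a hypothetical helper, and the inference rule used when no protocol is given (default gRPC port means gRPC, anything else means HTTP) is an assumption made for illustration.

```python
from typing import Optional
from urllib.parse import urlparse

VALID_PROTOCOLS = ("http/protobuf", "grpc")  # the two values named in the text
DEFAULT_GRPC_PORT = 4317  # default gRPC port named in the text


def resolve_protocol(endpoint: str, protocol: Optional[str] = None) -> str:
    """Return the OTLP transport protocol to use for an endpoint.

    An explicit `protocol` always wins. Otherwise the protocol is guessed
    from the endpoint with an ASSUMED heuristic, not Phoenix's actual rule.
    """
    if protocol is not None:
        if protocol not in VALID_PROTOCOLS:
            raise ValueError(f"protocol must be one of {VALID_PROTOCOLS}, got {protocol!r}")
        return protocol
    # Assumed heuristic: the default gRPC port implies gRPC, anything else HTTP.
    if urlparse(endpoint).port == DEFAULT_GRPC_PORT:
        return "grpc"
    return "http/protobuf"


inferred_http = resolve_protocol("http://localhost:6006/v1/traces")
inferred_grpc = resolve_protocol("http://localhost:4317")
# A gRPC endpoint bound to a non-default port needs the explicit override.
forced_grpc = resolve_protocol("http://localhost:9999", protocol="grpc")
```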

register can be configured with different keyword arguments:

project_name: The Phoenix project name (or use the PHOENIX_PROJECT_NAME env. var)

headers: Headers to send along with each span payload (or use the PHOENIX_CLIENT_HEADERS env. var)

batch: Whether or not to process spans in batch

Once you've connected your application to your Phoenix instance using phoenix.otel.register, you need to instrument your application. You have a few options to do this:

Using OpenInference auto-instrumentors. If you've used the auto_instrument flag above, then any instrumentor packages in your environment will be called automatically. For a full list of OpenInference packages, see https://arize.com/docs/phoenix/integrations

While the spans created via Phoenix and OpenInference create a solid foundation for tracing your application, sometimes you need to create and customize your own LLM spans.

Phoenix and OpenInference use the OpenTelemetry Trace API to create spans. Because Phoenix supports OpenTelemetry, this means that you can perform manual instrumentation, no LLM framework required! This guide will help you understand how to create and customize spans using the OpenTelemetry Trace API.

First, ensure you have the API and SDK packages:

For full documentation on the OpenInference semantic conventions, please consult the specification.

Configuring an OTel tracer involves some boilerplate code that the instrumentors in phoenix.trace take care of for you. If you're manually instrumenting your application, you'll need to implement this boilerplate yourself:

This snippet contains a few OTel concepts:

A resource represents an origin (e.g., a particular service, or in this case, a project) from which your spans are emitted.

Span processors filter, batch, and perform operations on your spans prior to export.

Your tracer provides a handle for you to create spans and add attributes in your application code.

The collector (e.g., Phoenix) receives the spans exported by your application.

To create a span, you'll typically want it to be started as the current span.

You can also use start_span to create a span without making it the current span. This is usually done to track concurrent or asynchronous operations.

If you have a distinct sub-operation you'd like to track as a part of another one, you can create nested spans to represent the relationship:

When you view spans in a trace visualization tool, child will be tracked as a nested span under parent.

It's common to have a single span track the execution of an entire function. In that scenario, there is a decorator you can use to reduce code:

Use of the decorator is equivalent to creating the span inside do_work() and ending it when do_work() is finished.

To use the decorator, you must have a tracer instance in scope for your function declaration.

Sometimes it's helpful to access whatever the current span is at a point in time so that you can enrich it with more information.

Attributes let you attach key/value pairs to a span so it carries more information about the current operation that it's tracking.

Notice above that the attributes have a specific prefix operation. When adding custom attributes, it's best practice to vendor your attributes (e.g., mycompany.) so that your attributes do not clash with semantic conventions.
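One way to apply the vendoring advice consistently is to route custom attributes through a small helper before setting them on a span. The sketch below uses only the standard library; `vendor_attributes` is a hypothetical helper, not part of OpenTelemetry or OpenInference.

```python
from typing import Any, Dict


def vendor_attributes(vendor: str, attributes: Dict[str, Any]) -> Dict[str, Any]:
    """Prefix every attribute key with `<vendor>.` unless it already has that prefix."""
    prefix = vendor.rstrip(".") + "."
    return {
        (key if key.startswith(prefix) else prefix + key): value
        for key, value in attributes.items()
    }


attrs = vendor_attributes("mycompany", {"user_tier": "pro", "mycompany.region": "eu"})
```

The resulting dictionary has the keys `mycompany.user_tier` and `mycompany.region`, and each pair can then be passed to the span's attribute-setting call.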

To use OpenInference Semantic Attributes in Python, ensure you have the semantic conventions package:

Then you can use it in code:

Events are human-readable messages that represent "something happening" at a particular moment during the lifetime of a span. You can think of it as a primitive log.

The span status allows you to signal the success or failure of the code executed within the span.

It can be a good idea to record exceptions when they happen. It’s recommended to do this in conjunction with setting span status.

As part of the OpenInference library, Phoenix provides helpful abstractions to make manual instrumentation easier.

This documentation provides a guide on using OpenInference OTEL tracing decorators and methods for instrumenting functions, chains, agents, and tools using OpenTelemetry.

These tools can be combined with, or used in place of, OpenTelemetry instrumentation code. They are designed to simplify the instrumentation process.

If you'd prefer to use pure OTEL instead, see Setup using base OTEL.

Ensure you have OpenInference and OpenTelemetry installed:

You can configure the tracer using either TracerProvider from openinference.instrumentation or using phoenix.otel.register.

Your tracer object can now be used in two primary ways:

This entire function will appear as a Span in Phoenix. Input and output attributes in Phoenix will be set automatically based on my_func's parameters and return value. The status attribute will also be set automatically.

The code within this clause will be captured as a Span in Phoenix. Here the input, output, and status must be set manually.

This approach is useful when you need only a portion of a method to be captured as a Span.

OpenInference Span Kinds denote the possible types of spans you might capture, and will be rendered differently in the Phoenix UI.

The possible values are:

CHAIN: General logic operations, functions, or code blocks

LLM: Making LLM calls

TOOL: Completing tool calls

RETRIEVER: Retrieving documents

EMBEDDING: Generating embeddings

AGENT: Agent invocations, typically a top-level or near-top-level span

RERANKER: Reranking retrieved context

UNKNOWN: Unknown

GUARDRAIL: Guardrail checks

EVALUATOR: Evaluators, typically only used by Phoenix when automatically tracing evaluation and experiment calls

Like other span kinds, LLM spans can be instrumented either via a context manager or via a decorator pattern. It's also possible to directly patch client methods.

While this guide uses the OpenAI Python client for illustration, in practice, you should use the OpenInference auto-instrumentors for OpenAI whenever possible and resort to manual instrumentation for LLM spans only as a last resort.

To run the snippets in this section, set your OPENAI_API_KEY environment variable.

The decorator pattern above works for sync functions, async coroutine functions, sync generator functions, and async generator functions. Here's an example with an async generator.

It's also possible to directly patch methods on a client. This is useful if you want to transparently use the client in your application with instrumentation logic localized in one place.

The snippets above produce LLM spans with input and output values, but don't offer rich UI for messages, tools, invocation parameters, etc. In order to manually instrument LLM spans with these features, users can define their own functions to wrangle the input and output of their LLM calls into OpenInference format. The openinference-instrumentation library contains helper functions that produce valid OpenInference attributes for LLM spans:

get_llm_attributes

get_input_attributes

get_output_attributes

For OpenAI, these functions might look like this:

When using a context manager to create LLM spans, these functions can be used to wrangle inputs and outputs.

When using the tracer.llm decorator, these functions are passed via the process_input and process_output parameters and should satisfy the following:

The input signature of process_input should exactly match the input signature of the decorated function.

The input signature of process_output has a single argument, the output of the decorated function. This argument accepts the returned value when the decorated function is a sync or async function, or a list of yielded values when the decorated function is a sync or async generator function.

Both process_input and process_output should output a dictionary mapping attribute names to values.

When decorating a generator function, process_output should accept a single argument, a list of the values yielded by the decorated function.
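The contract above can be made concrete with plain functions. In this sketch, `invoke_llm` is a hypothetical stand-in for the decorated function, `mycompany.temperature` is an invented custom attribute, and `input.value` and `output.value` are used on the assumption that they are the OpenInference attribute names for a span's input and output. No tracer is involved; the point is only the shape of the two callbacks.

```python
import inspect
from typing import Any, Dict, List, Union


def invoke_llm(prompt: str, temperature: float = 0.0) -> str:
    """Stand-in for the decorated function; a real one would call an LLM."""
    return f"echo: {prompt}"


def process_input(prompt: str, temperature: float = 0.0) -> Dict[str, Any]:
    """Same parameters as `invoke_llm`; returns attribute names mapped to values."""
    return {"input.value": prompt, "mycompany.temperature": temperature}


def process_output(output: Union[str, List[str]]) -> Dict[str, Any]:
    """Takes the return value, or the list of yielded values for a generator."""
    if isinstance(output, list):
        output = "".join(output)  # stitch streamed chunks back together
    return {"output.value": output}


# The parameter lists must line up exactly; this check makes that explicit.
same_params = (
    list(inspect.signature(process_input).parameters.values())
    == list(inspect.signature(invoke_llm).parameters.values())
)

attributes = {
    **process_input("hi", temperature=0.2),
    **process_output(invoke_llm("hi", temperature=0.2)),
}
streamed = process_output(["he", "llo"])
```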

Then the decoration is the same as before.

As before, it's possible to directly patch the method on the client. Just ensure that the input signatures of process_input and the patched method match.

OpenInference includes message types that can be useful in composing text and image or other file inputs and outputs:

Instrumentation is the act of adding observability code to an app yourself.

If you’re instrumenting an app, you need to use the OpenTelemetry SDK for your language. You’ll then use the SDK to initialize OpenTelemetry and the API to instrument your code. This will emit telemetry from your app, and any library you installed that also comes with instrumentation.

Now let's walk through instrumenting, and then tracing, a sample Express application.

Install OpenTelemetry API packages:

Install OpenInference instrumentation packages. Below is an example of adding instrumentation for OpenAI as well as the semantic conventions for OpenInference.

If a TracerProvider is not created, the OpenTelemetry APIs for tracing will use a no-op implementation and fail to generate data. As explained next, create an instrumentation.ts (or instrumentation.js) file to include all of the provider initialization code in Node.

Node.js

Create instrumentation.ts (or instrumentation.js) to contain all the provider initialization code:

This basic setup will instrument chat completions via native calls to the OpenAI client.

Picking the right span processor

In our instrumentation.ts file above, we use the BatchSpanProcessor. The BatchSpanProcessor processes spans in batches before they are exported. This is usually the right processor to use for an application.

In contrast, the SimpleSpanProcessor processes spans as they are created. This means that if you create 5 spans, each will be processed and exported before the next span is created in code. This can be helpful in scenarios where you do not want to risk losing a batch, or if you’re experimenting with OpenTelemetry in development. However, it also comes with potentially significant overhead, especially if spans are being exported over a network - each time a call to create a span is made, it would be processed and sent over a network before your app’s execution could continue.

In most cases, stick with BatchSpanProcessor over SimpleSpanProcessor.
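The overhead difference comes down to how many export calls are made. The toy simulation below counts them for five spans. It is written in Python with hypothetical `Toy*` classes rather than the OpenTelemetry processors, and it leaves out the timer-based flushing that a real batch processor also performs.

```python
from typing import List


class CountingExporter:
    """Stand-in for a network exporter; records the size of each export call."""

    def __init__(self) -> None:
        self.calls: List[int] = []

    def export(self, spans: List[str]) -> None:
        self.calls.append(len(spans))


class ToySimpleProcessor:
    """Exports each span as soon as it is handed over: one call per span."""

    def __init__(self, exporter: CountingExporter) -> None:
        self.exporter = exporter

    def on_end(self, span: str) -> None:
        self.exporter.export([span])


class ToyBatchProcessor:
    """Buffers spans and exports them together once the batch is full."""

    def __init__(self, exporter: CountingExporter, max_batch_size: int = 3) -> None:
        self.exporter = exporter
        self.max_batch_size = max_batch_size
        self.buffer: List[str] = []

    def on_end(self, span: str) -> None:
        self.buffer.append(span)
        if len(self.buffer) >= self.max_batch_size:
            self.flush()

    def flush(self) -> None:
        if self.buffer:
            self.exporter.export(self.buffer)
            self.buffer = []


simple_exporter, batch_exporter = CountingExporter(), CountingExporter()
simple = ToySimpleProcessor(simple_exporter)
batch = ToyBatchProcessor(batch_exporter)
for i in range(5):
    simple.on_end(f"span-{i}")
    batch.on_end(f"span-{i}")
batch.flush()  # export whatever is still buffered, e.g. at shutdown
```

The simple processor makes five export calls of one span each, while the batch processor makes two (three spans, then two). The final `flush()` also shows the risk mentioned above: spans still sitting in the buffer are lost if the process dies before they are exported.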

Tracing instrumented libraries

Now that you have configured a tracer provider and instrumented the openai package, let's see how we can generate traces for a sample application.

First, install the dependencies required for our sample app.

Next, create an app.ts (or app.js) file that hosts a simple Express server for executing OpenAI chat completions.

Then, we will start our application, loading the instrumentation.ts file before app.ts so that our instrumentation code can instrument openai.

Finally, we can execute a request against our server.

After a few moments, a new project openai-service will appear in the Phoenix UI, along with the trace generated by our OpenAI chat completion!

Anywhere in your application where you write manual tracing code should call getTracer to acquire a tracer. For example:

It’s generally recommended to call getTracer in your app when you need it rather than exporting the tracer instance to the rest of your app. This helps avoid trickier application load issues when other required dependencies are involved.

Below is an example of acquiring a tracer within application scope.

The API of OpenTelemetry JavaScript exposes two methods that allow you to create spans:

In most cases you want to use the latter (tracer.startActiveSpan), as it takes care of setting the span and its context active.

The code below illustrates how to create an active span.

The above instrumented code can now be pasted in the /chat handler. You should now be able to see spans emitted from your app.

Start your app as follows, and then send it requests by visiting http://localhost:8080/chat?message="how long is a pencil" with your browser or curl.

After a while, you should see the spans printed in the console by the ConsoleSpanExporter, something like this:

You can also add attributes to a span as it’s created:

Semantic Attributes

First add both semantic conventions as a dependency to your application:

Add the following to the top of your application file:

Finally, you can update your file to include semantic attributes:

While Phoenix captures these, they are currently not displayed in the UI. Contact us if you would like support for them!

The status can be set at any time before the span is finished.

sdk-trace-base and manually propagating span context

In some cases, you may not be able to use either the Node.js SDK or the Web SDK. The biggest difference, aside from initialization code, is that you’ll have to manually set spans as active in the current context to be able to create nested spans.

Initializing tracing with sdk-trace-base

Initializing tracing is similar to how you’d do it with Node.js or the Web SDK.

Like the other examples in this document, this exports a tracer you can use throughout the app.

Creating nested spans with sdk-trace-base

To create nested spans, you need to set whatever the currently-created span is as the active span in the current context. Don’t bother using startActiveSpan because it won’t do this for you.

All other APIs behave the same when you use sdk-trace-base compared with the Node.js SDKs.

Phoenix uses projects to group traces. If left unspecified, all traces are sent to a default project.

In the notebook, you can set the PHOENIX_PROJECT_NAME environment variable before adding instrumentation or running any of your code.

In Python this would look like:

Note that setting a project via an environment variable only works in a notebook and must be done BEFORE instrumentation is initialized. If you are using OpenInference Instrumentation, see the Server tab for how to set the project name in the Resource attributes.

Alternatively, you can set the project name in your register function call:

If you are using Phoenix as a collector and running your application separately, you can set the project name in the Resource attributes for the trace provider.

Projects work by setting the Resource attributes (as seen in the OTEL example above). The Phoenix server uses the project name attribute to group traces into the appropriate project.
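A sketch of how a project name might be resolved into Resource attributes is shown below. Several details are assumptions rather than facts from this page: the attribute key `openinference.project.name`, the precedence of an explicit argument over the PHOENIX_PROJECT_NAME variable, and the literal name `default` for the fallback project. `project_resource_attributes` is a hypothetical helper.

```python
import os
from typing import Dict, Optional

# ASSUMPTION: the resource attribute key that carries the project name.
PROJECT_NAME_KEY = "openinference.project.name"


def project_resource_attributes(project_name: Optional[str] = None) -> Dict[str, str]:
    """Build Resource attributes, falling back from argument to env var to default."""
    # ASSUMPTION: explicit argument first, then the env var, then "default".
    name = project_name or os.environ.get("PHOENIX_PROJECT_NAME") or "default"
    return {PROJECT_NAME_KEY: name}


explicit = project_resource_attributes("my-llm-app")
```

The returned dictionary is the kind of mapping you would hand to an OpenTelemetry Resource when building the tracer provider.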

Typically you want traces for an LLM app to all be grouped in one project. However, while working with Phoenix inside a notebook, we provide a utility to temporarily associate spans with different projects. You can use this to trace things like evaluations.

How to track sessions across multiple traces

Sessions UI is available in Phoenix 7.0 and requires a db migration if you're coming from an older version of Phoenix.

A Session is a sequence of traces representing a single conversation (e.g., a chat session or a thread). Each response is represented as its own trace, but these traces are linked together by being part of the same session.

To associate traces together, you need to pass in a special metadata key where the value is the unique identifier for that thread.
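Conceptually, the shared identifier is all that links the traces. The sketch below groups a few hypothetical, flattened trace records by their `session.id` value using only the standard library; the record layout is invented for illustration and is not Phoenix's storage format.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical trace records; each turn of a conversation is its own trace.
traces = [
    {"trace_id": "t1", "session.id": "thread-a", "input": "Hi"},
    {"trace_id": "t2", "session.id": "thread-b", "input": "Hello"},
    {"trace_id": "t3", "session.id": "thread-a", "input": "What's the weather?"},
]


def group_by_session(records: List[Dict[str, str]]) -> Dict[str, List[str]]:
    """Map each session ID to the ordered list of trace IDs that carry it."""
    sessions: Dict[str, List[str]] = defaultdict(list)
    for record in records:
        sessions[record["session.id"]].append(record["trace_id"])
    return dict(sessions)


sessions = group_by_session(traces)
```

Traces `t1` and `t3` end up in the same session because they carry the same identifier, even though they were recorded as separate traces.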

OpenAI tracing with Sessions (Python)

LlamaIndex tracing with Sessions (Python)

OpenAI tracing with Sessions (TS/JS)

Below is an example of logging conversations:

First make sure you have the required dependencies installed.

Below is an example of how to use openinference.instrumentation to add a session to the traces created.

The easiest way to add sessions to your application is to install @arizeai/openinference-core.

You can now use either the session.id semantic attribute or the setSession utility function from openinference-core to associate traces with a particular session:

You can view the sessions for a given project by clicking on the "Sessions" tab in the project. You will see a list of all the recent sessions as well as some analytics. You can search the content of the messages to narrow down the list.

You can then click into a given session. This will open the history of that session. If the session contains input / output, you will see a chatbot-like UI showing the history of inputs and outputs.

For LangChain, in order to log runs as part of the same thread you need to pass a special metadata key to the run. The key value is the unique identifier for that conversation. The key name should be one of:

session_id

thread_id

conversation_id.

Tracing can be augmented and customized by adding Metadata. Metadata includes your own custom attributes, user ids, session ids, prompt templates, and more.

Add Attributes, Metadata, Users

Learn how to add custom metadata and attributes to your traces

Instrument Prompt Templates and Prompt Variables

Learn how to define custom prompt templates and variables in your tracing.

Supported Context Attributes include:

Session ID*: Unique identifier for a session.

User ID*: Unique identifier for a user.

Metadata: Metadata associated with a span.

Tags*: List of tags to give the span a category.

Prompt Template:

Template: Used to generate prompts as Python f-strings.

Version: The version of the prompt template.

Variables: Key-value pairs applied to the prompt template.

Install the core instrumentation package:

It can also be used as a decorator:


The previous example is equivalent to doing the following, making using_attributes a very convenient tool for the more complex settings.

It can also be used as a decorator:

You can also use multiple setters at the same time to propagate multiple attributes to the span below. Since each setter function returns a new context, they can be used together as follows.

The tutorials and code snippets in these docs default to the SimpleSpanProcessor. A SimpleSpanProcessor processes and exports spans as they are created. This means that if you create 5 spans, each will be processed and exported before the next span is created in code. This can be helpful in scenarios where you do not want to risk losing a batch, or if you’re experimenting with OpenTelemetry in development. However, it also comes with potentially significant overhead, especially if spans are being exported over a network - each time a call to create a span is made, it would be processed and sent over a network before your app’s execution could continue.

The BatchSpanProcessor processes spans in batches before they are exported. This is usually the right processor to use for an application in production but it does mean spans may take some time to show up in Phoenix.

We recommend the BatchSpanProcessor for production deployments and the SimpleSpanProcessor during development.
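The difference between the two strategies can be illustrated with a toy model. The classes below are simplified stand-ins written for this sketch, not the OpenTelemetry classes: one exports each span immediately, the other buffers spans and exports them in groups.

```python
class SimpleProcessor:
    """Exports every span as soon as it ends (one export call per span)."""

    def __init__(self, export):
        self.export = export

    def on_end(self, span):
        self.export([span])


class BatchProcessor:
    """Buffers spans and exports them in batches of max_batch_size."""

    def __init__(self, export, max_batch_size=3):
        self.export = export
        self.max_batch_size = max_batch_size
        self.buffer = []

    def on_end(self, span):
        self.buffer.append(span)
        if len(self.buffer) >= self.max_batch_size:
            self.flush()

    def flush(self):
        if self.buffer:
            self.export(list(self.buffer))
            self.buffer.clear()


simple_calls, batch_calls = [], []
simple = SimpleProcessor(simple_calls.append)
batch = BatchProcessor(batch_calls.append, max_batch_size=3)

for i in range(5):
    simple.on_end(f"span-{i}")
    batch.on_end(f"span-{i}")
batch.flush()  # export whatever is still buffered, e.g. at shutdown

print(len(simple_calls), len(batch_calls))
```

With five spans, the simple strategy makes five export calls while the batching strategy makes two. The real BatchSpanProcessor also flushes on a timer, which is why spans can take a moment to appear in Phoenix.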

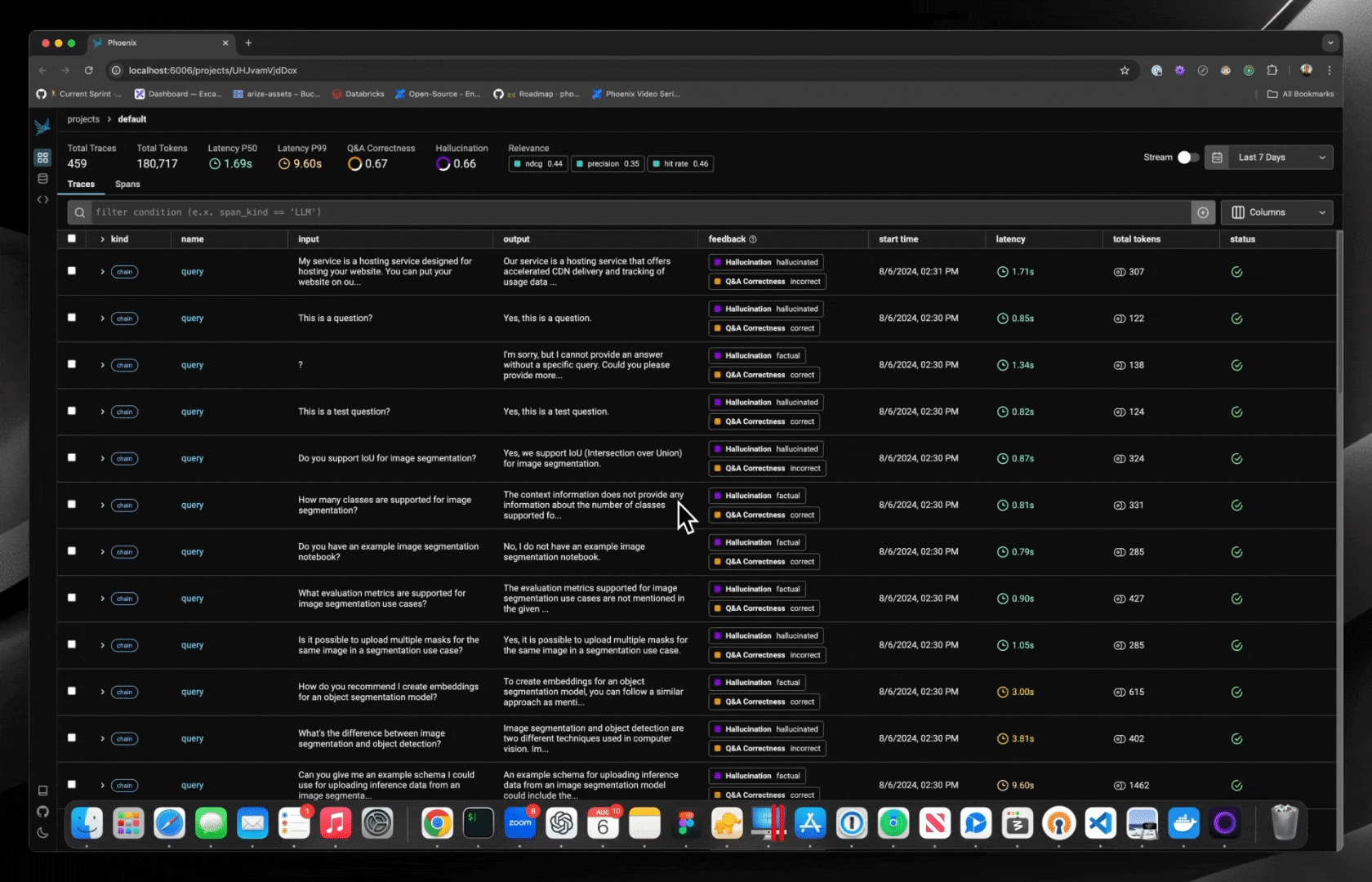

Annotating traces is a crucial aspect of evaluating and improving your LLM-based applications. By systematically recording qualitative or quantitative feedback on specific interactions or entire conversation flows, you can:

Track performance over time

Identify areas for improvement

Compare different model versions or prompts

Gather data for fine-tuning or retraining

Provide stakeholders with concrete metrics on system effectiveness

Phoenix allows you to annotate traces through the Client, the REST API, or the UI.

To learn how to configure annotations and to annotate through the UI, see Annotating in the UI

To learn how to add human labels to your traces, either manually or programmatically, see Annotating via the Client

To learn how to evaluate traces captured in Phoenix, see Running Evals on Traces

To learn how to upload your own evaluation labels into Phoenix, see Log Evaluation Results

For more background on the concept of annotations, see Annotations

How to annotate traces in the UI for analysis and dataset curation

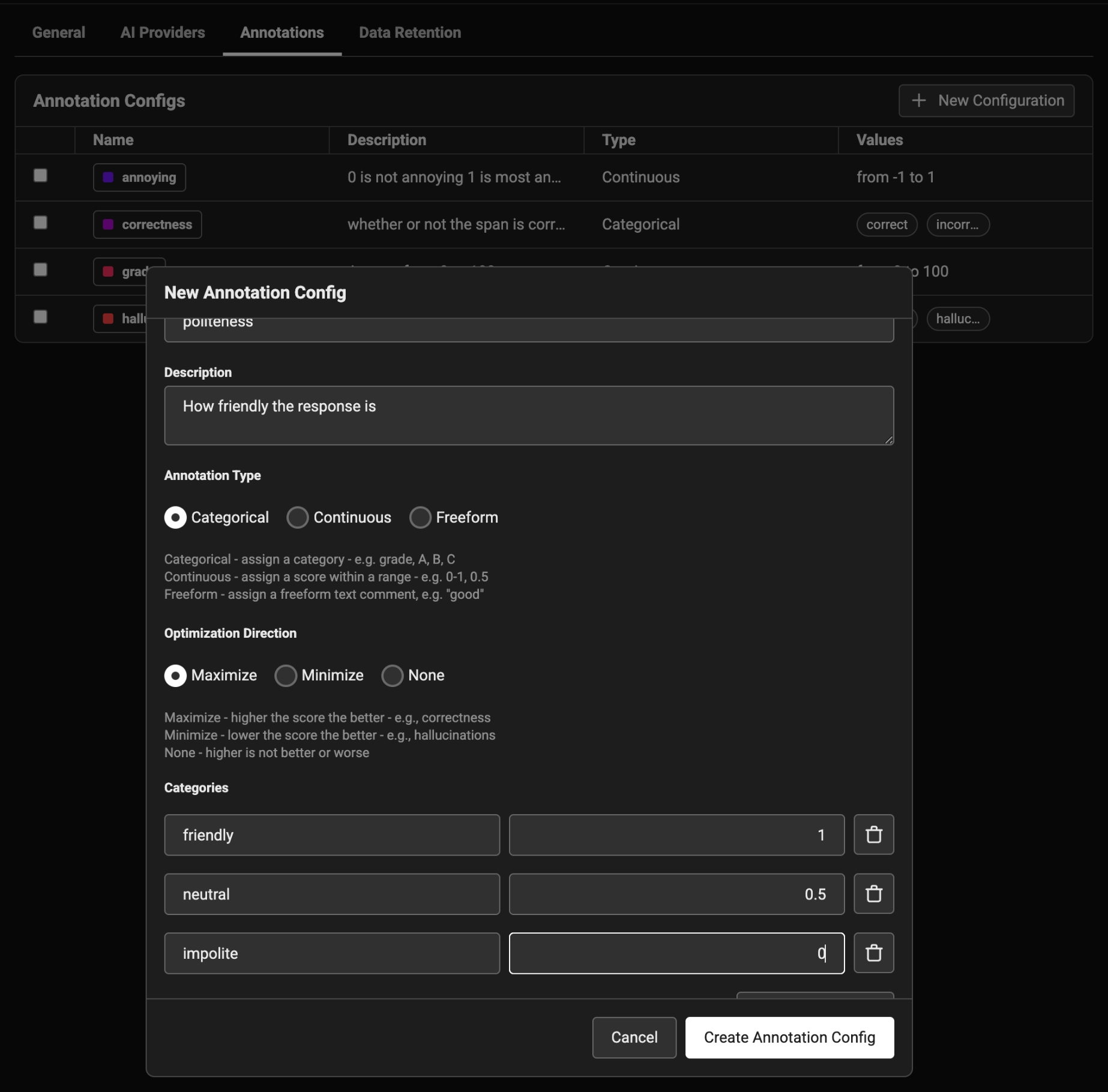

To annotate data in the UI, you will first want to set up a rubric for how to annotate. Navigate to Settings and create annotation configs (e.g. a rubric) for your data. You can create several types of annotations: Categorical, Continuous, and Freeform.

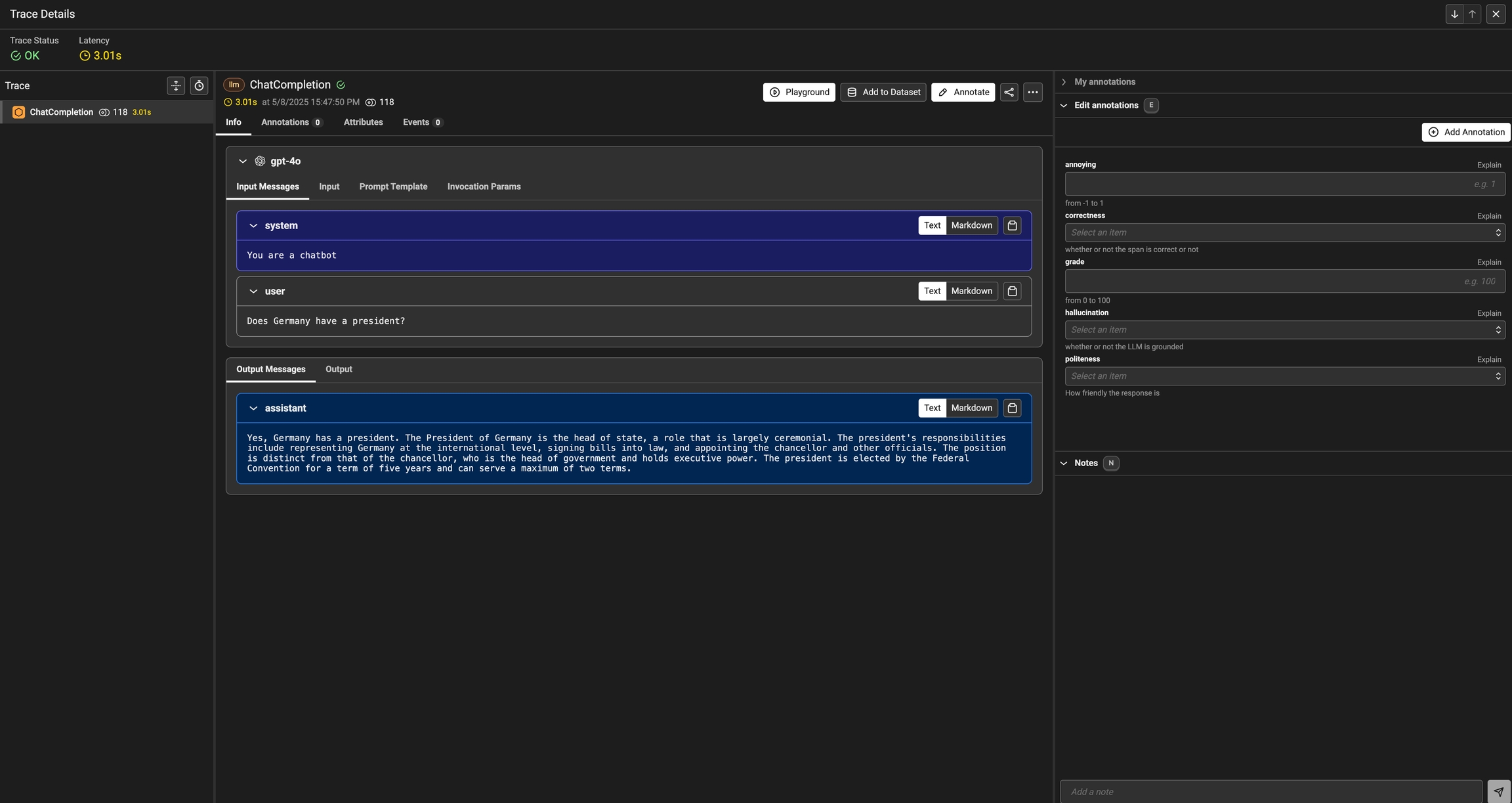

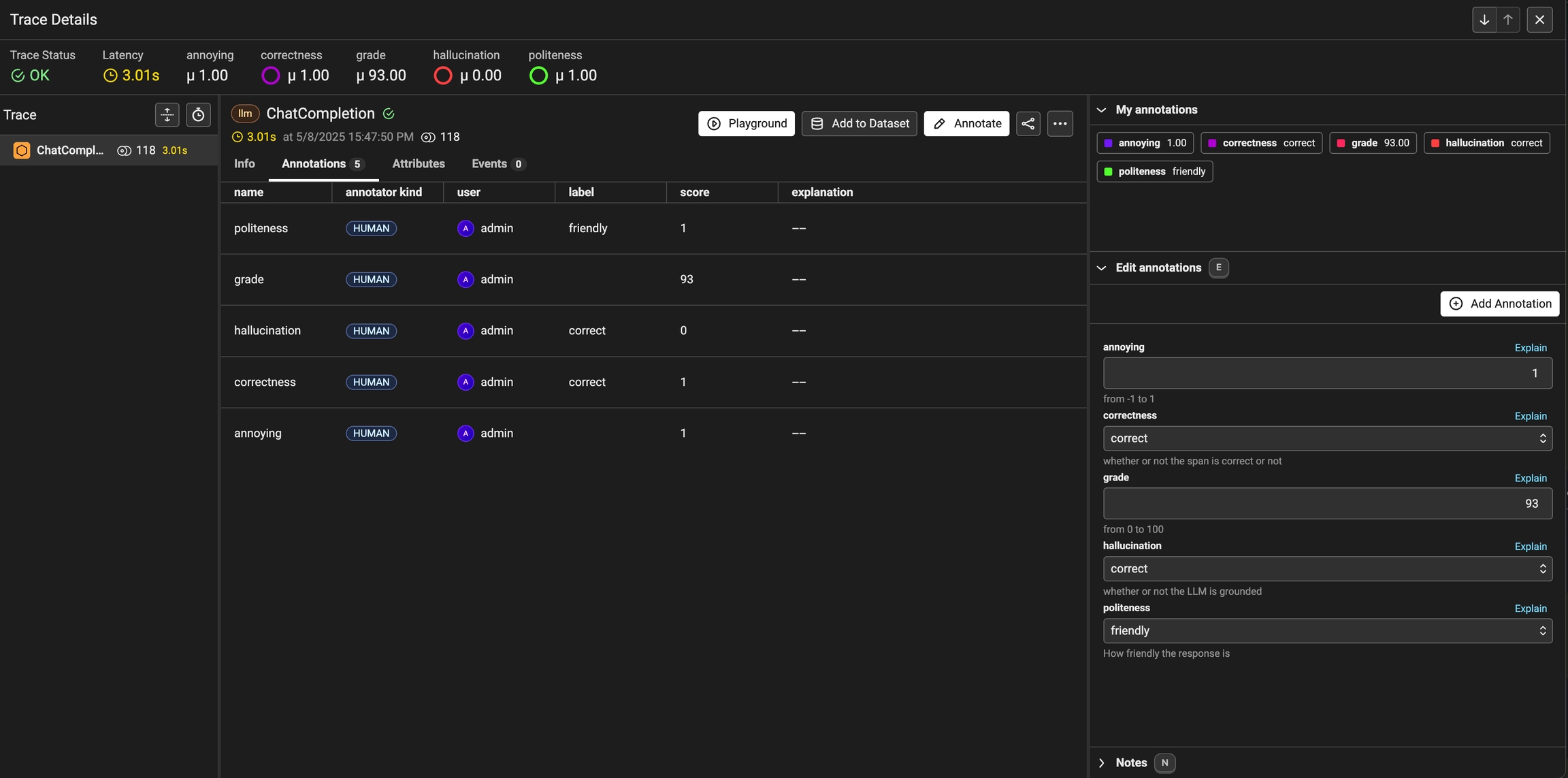

Once you have annotations configured, you can associate annotations to the data that you have traced. Click on the Annotate button and fill out the form to rate different steps in your AI application.

You can also take notes as you go by either clicking on the explain link or by adding your notes to the bottom messages UI.

You can always come back and edit or delete your annotations. Annotations can be deleted from the table view under the Annotations tab.

Once an annotation has been provided, you can also add a reason to explain why this particular label or score was provided. This is useful to add additional context to the annotation.

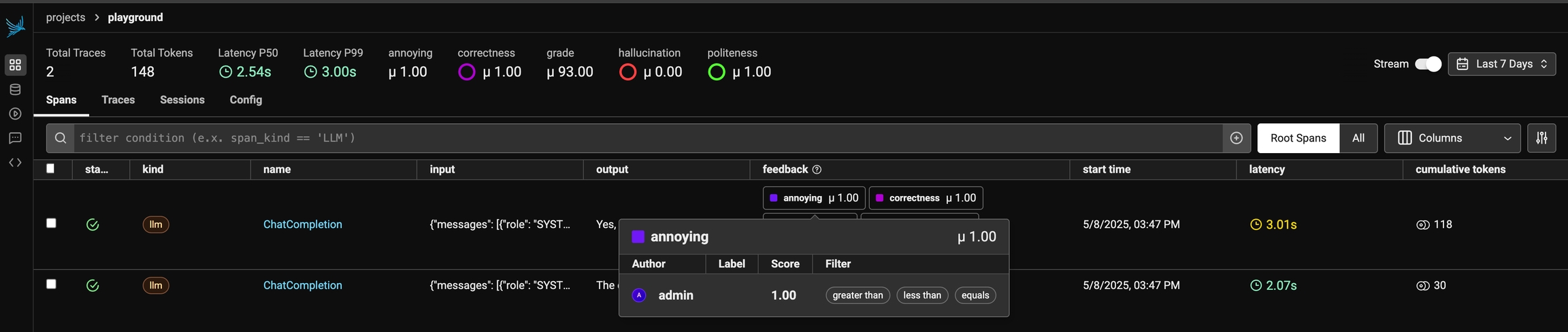

As annotations come in from various sources (annotators, evals), the entire list of annotations can be found under the Annotations tab. Here you can see the author, the annotator kind (e.g. was the annotation performed by a human, llm, or code), and so on. This can be particularly useful if you want to see if different annotators disagree.

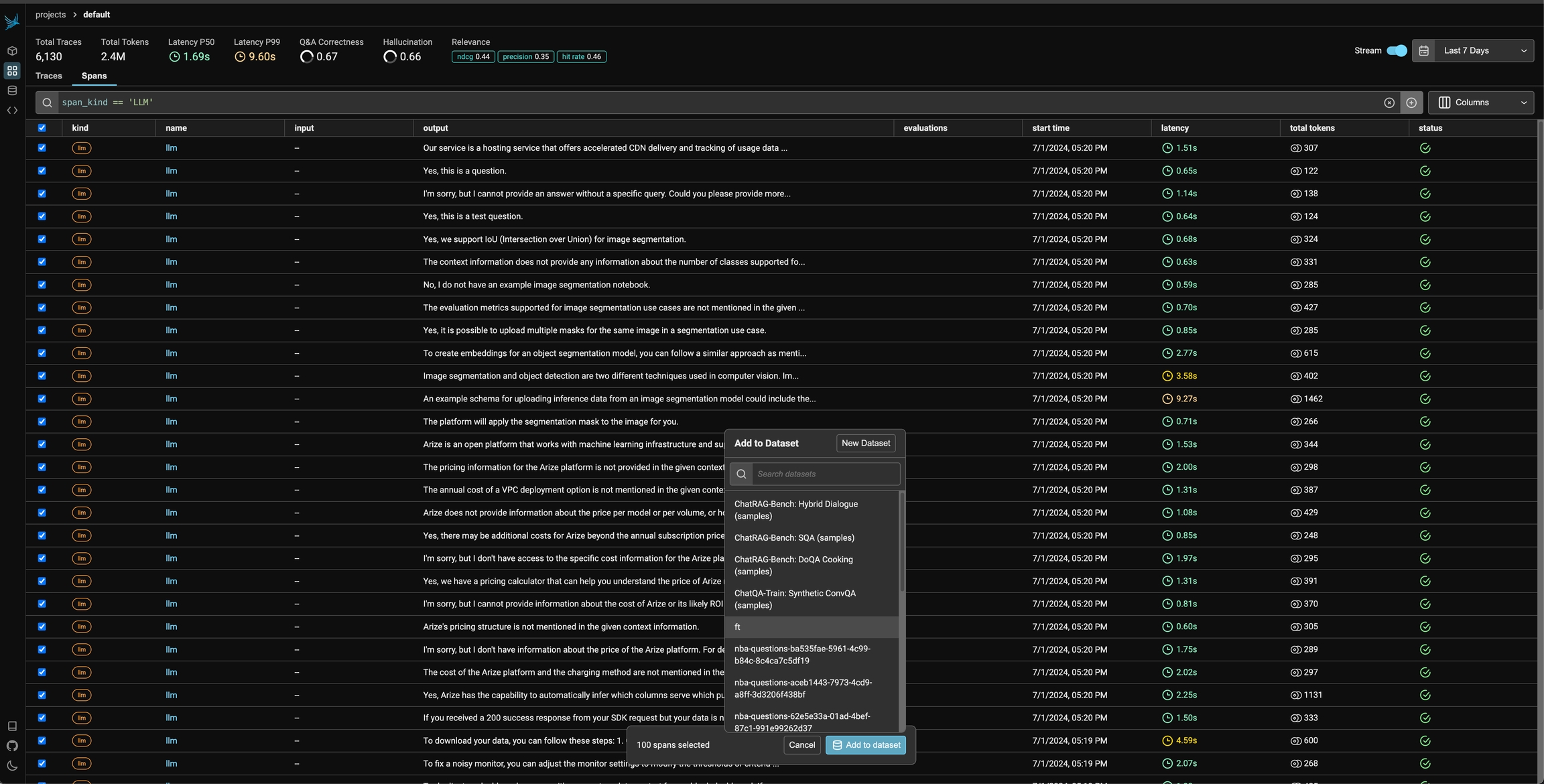

Once you have collected feedback in the form of annotations, you can filter your traces by the annotation values to narrow down to interesting samples (e.g. LLM spans that are incorrect). Once filtered down to a sample of spans, you can export your selection to a dataset, which in turn can be used for things like experimentation, fine-tuning, or building a human-aligned eval.

Use the Phoenix client to capture end-user feedback

When building LLM applications, it is important to collect feedback to understand how your app is performing in production. Phoenix lets you attach feedback to spans and traces in the form of annotations.

Annotations come from a few different sources:

Human Annotators

End users of your application

LLMs-as-Judges

Basic code checks

You can use the Phoenix SDK and API to attach feedback to a span.

Phoenix expects feedback to be in the form of an annotation. Annotations consist of these fields:

Note that you can provide a label, score, or explanation. With Phoenix an annotation has a name (like correctness), is associated with an annotator (LLM, HUMAN, or CODE), and can be attached to the spans you have logged to Phoenix.

Phoenix allows you to log multiple annotations of the same name to the same span. For example, a single span could have 5 different "correctness" annotations. This can be useful when collecting end user feedback.

Note: The API will overwrite span annotations of the same name, unless they have different "identifier" values.

If you want to track multiple annotations of the same name on the same span, make sure to include different "identifier" values on each.

Once you construct the annotation, you can send it to Phoenix via its REST API. You can POST an annotation from your application to /v1/span_annotations like so:

If you're self-hosting Phoenix, be sure to change the endpoint in the code below to <your phoenix endpoint>/v1/span_annotations?sync=false
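As a hedged sketch, the request could be assembled with the standard library as follows. The field layout mirrors the fields described above but should be checked against the REST API reference; the span ID, the `user-123` identifier, and the `localhost:6006` endpoint are placeholder assumptions. The request is only built here, not sent.

```python
import json
import urllib.request

# Hypothetical values: replace with a real span ID and your own endpoint.
span_id = "67f6740bbe1ddc3f"
endpoint = "http://localhost:6006/v1/span_annotations?sync=false"

payload = {
    "data": [
        {
            "span_id": span_id,
            "name": "correctness",
            "annotator_kind": "HUMAN",  # LLM, HUMAN, or CODE
            "result": {
                "label": "correct",
                "score": 1,
                "explanation": "Matches the reference answer.",
            },
            # Distinct identifiers keep same-named annotations on one span
            # from overwriting each other.
            "identifier": "user-123",
        }
    ]
}

request = urllib.request.Request(
    endpoint,
    data=json.dumps(payload).encode("utf-8"),
    headers={"Content-Type": "application/json"},
    method="POST",
)
# urllib.request.urlopen(request)  # uncomment to send to a running server
```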

Retrieve the current span_id

If you'd like to collect feedback on currently instrumented code, you can get the current span using the opentelemetry SDK.

For LangChain, import get_current_span from our instrumentation library instead.

You can use the span_id to send an annotation associated with that span.
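OpenTelemetry's Python API exposes span IDs as 64-bit integers, so they usually need to be rendered as hexadecimal strings before being sent in an annotation. The sketch below shows only that conversion; the `span_id_to_hex` helper and the example value are illustrative, and the commented lines show where the integer would come from when the OpenTelemetry API is installed.

```python
def span_id_to_hex(span_id: int) -> str:
    """Render a 64-bit span ID as 16 lowercase hexadecimal characters."""
    return format(span_id, "016x")


# With the OpenTelemetry API installed, the integer would come from:
#   from opentelemetry import trace
#   span_id = trace.get_current_span().get_span_context().span_id
example_span_id = 0x67F6740BBE1DDC3F  # hypothetical value for illustration

span_id_hex = span_id_to_hex(example_span_id)
print(span_id_hex)  # 67f6740bbe1ddc3f
```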

Retrieve the current spanId

You can use the spanId to send an annotation associated with that span.

How to use an LLM judge to label and score your application

This guide will walk you through the process of evaluating traces captured in Phoenix, and exporting the results to the Phoenix UI.

Note: if you're self-hosting Phoenix, swap your collector endpoint variable in the snippet below, and remove the Phoenix Client Headers variable.

Now that we have Phoenix configured, we can register that instance with OpenTelemetry, which will allow us to collect traces from our application here.

For the sake of making this guide fully runnable, we'll briefly generate some traces and track them in Phoenix. Typically, you would have already captured traces in Phoenix and would skip to "Download trace dataset from Phoenix"

Now that we have our trace dataset, we can generate evaluations for each trace. Evaluations can be generated in many different ways. Ultimately, we want to end up with a set of labels and/or scores for our traces.

You can generate evaluations using:

Plain code

Other evaluation packages

As long as you format your evaluation results properly, you can upload them to Phoenix and visualize them in the UI.

Let's start with a simple example of generating evaluations using plain code. OpenAI has a habit of repeating jokes, so we'll generate evaluations to label whether a joke is a repeat of a previous joke.
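The labeling logic can be sketched in plain Python, without pandas. The rows below are made-up stand-ins for spans pulled from Phoenix, and the label names and the score convention (1 for an original joke, 0 for a repeat) are assumptions made for this example.

```python
# Hypothetical traced outputs: one row per LLM span.
rows = [
    {"span_id": "a1", "output": "Why did the chicken cross the road?"},
    {"span_id": "b2", "output": "I told my computer a joke about UDP."},
    {"span_id": "c3", "output": "Why did the chicken cross the road?"},
]

seen = set()
evals = []
for row in rows:
    # A joke is a repeat if the same text appeared in an earlier span.
    is_repeat = row["output"] in seen
    seen.add(row["output"])
    evals.append(
        {
            "span_id": row["span_id"],
            "label": "repeat" if is_repeat else "original",
            "score": 0 if is_repeat else 1,
        }
    )

print([e["label"] for e in evals])
```

Passing `evals` to `pandas.DataFrame` would turn this list into a DataFrame with `span_id`, `label`, and `score` columns.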

We now have a DataFrame with a column for whether each joke is a repeat of a previous joke. Let's upload this to Phoenix.

Our evals_df has a column for the span_id and a column for the evaluation result. The span_id is what allows us to connect the evaluation to the correct trace in Phoenix. Phoenix will also automatically look for columns named "label" and "score" to display in the UI.

You should now see evaluations in the Phoenix UI!

From here you can continue collecting and evaluating traces, or move on to one of these other guides:

This guide shows how LLM evaluation results in dataframes can be sent to Phoenix.

Before accessing px.Client(), be sure you've set the following environment variables:

A dataframe of span evaluations would look similar to the table below. It must contain span_id as an index or as a column. Once ingested, Phoenix uses the span_id to associate the evaluation with its target span.

| span_id | label | explanation |
| --- | --- | --- |
| 5B8EF798A381 | correct | "this is correct ..." |
| E19B7EC3GG02 | incorrect | "this is incorrect ..." |

The evaluations dataframe can be sent to Phoenix as follows. Note that the name of the evaluation must be supplied through the eval_name= parameter. In this case we name it "Q&A Correctness".

A dataframe of document evaluations would look something like the table below. It must contain span_id and document_position as either indices or columns. document_position is the document's (zero-based) index in the span's list of retrieved documents. Once ingested, Phoenix uses the span_id and document_position to associate the evaluation with its target span and document.

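| span_id | document_position | label | explanation |
| --- | --- | --- | --- |
| 5B8EF798A381 | 0 | relevant | "this is ..." |
| 5B8EF798A381 | 1 | irrelevant | "this is ..." |
| E19B7EC3GG02 | 0 | relevant | "this is ..." |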

The evaluations dataframe can be sent to Phoenix as follows. Note that the name of the evaluation must be supplied through the eval_name= parameter. In this case we name it "Relevance".

Multiple sets of Evaluations can be logged by the same px.Client().log_evaluations() function call.

By default the client will push traces to the project specified in the PHOENIX_PROJECT_NAME environment variable or to the default project. If you want to specify the destination project explicitly, you can pass the project name as a parameter.

Learn how to load a file of traces into Phoenix

Learn how to export trace data from Phoenix

Before accessing px.Client(), be sure you've set the following environment variables:

If you're self-hosting Phoenix, ignore the client headers and change the collector endpoint to your endpoint.

You can also launch a temporary version of Phoenix in your local notebook to quickly view the traces. Be warned, however, that this Phoenix instance will only last as long as your notebook environment is running.

Various options to help you get data out of Phoenix

| What it does | Use case |
| --- | --- |
| Exports all spans in a project as a dataframe | Evaluation - Filtering your spans locally using pandas instead of Phoenix DSL. |
| Exports specific spans or traces based on filters | Evaluation - Querying spans from Phoenix |
| Exports specific groups of spans | Agent Evaluation - Easily export tool calls. RAG Evaluation - Easily exporting retrieved documents or Q&A data from a RAG system. |
| Saves all traces as a local file | Storing Data - Backing up an entire Phoenix instance. |

Before using any of the methods above, make sure you've connected to px.Client(). You'll need to set the following environment variables:

If you're self-hosting Phoenix, ignore the client headers and change the collector endpoint to your endpoint.

If you prefer to handle your filtering locally, you can also download all spans as a dataframe using the get_spans_dataframe() function:

You can query for data using our query DSL (domain specific language).

This Query DSL is the same as what is used by the filter bar in the dashboard. It can be helpful to form your query string in the Phoenix dashboard for more immediate feedback, before moving it to code.

Below is an example of how to pull all retriever spans and select the input value. The output of this query is a DataFrame that contains the input values for all retriever spans.

By default, all queries will collect all spans that are in your Phoenix instance. If you'd like to focus on most recent spans, you can pull spans based on time frames using start_time and end_time.

Let's say we want to extract the retrieved documents into a DataFrame that looks something like the table below, where input denotes the query for the retriever, reference denotes the content of each document, and document_position denotes the (zero-based) index in each span's list of retrieved documents.

| span_id | document_position | input | reference |
| --- | --- | --- | --- |
| 5B8EF798A381 | 0 | What was the author's motivation for writing ... | In fact, I decided to write a book about ... |
| 5B8EF798A381 | 1 | What was the author's motivation for writing ... | I started writing essays again, and wrote a bunch of ... |
| ... | ... | ... | ... |
| E19B7EC3GG02 | 0 | What did the author learn about ... | The good part was that I got paid huge amounts of ... |

The .where() method accepts a string containing a valid Python boolean expression. The expression can be arbitrarily complex, but restrictions apply, e.g. function calls are generally disallowed. Below is a conjunction that also filters on whether the input value contains the string 'programming'.

metadata is an attribute that is a dictionary and it can be filtered like a dictionary.

Note that Python strings do not have a contain method, and substring search is done with the in operator.
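The point about substring search can be checked directly in plain Python. The filter strings at the end are illustrative only: `input.value` and the `topic` metadata key are assumed attribute names, not values taken from a real project.

```python
# Python strings have no `contains` method...
assert not hasattr(str, "contains")

# ...so substring checks use the `in` operator.
input_value = "How do I get started with programming?"  # hypothetical span input
matches = "programming" in input_value
print(matches)

# Illustrative filter strings written in the same style:
substring_filter = "'programming' in input.value"
metadata_filter = "metadata['topic'] == 'programming'"
```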

Get spans that do not have an evaluation attached yet

Span attributes can be selected by simply listing them inside the .select() method.

Keyword-argument style can be used to rename the columns in the dataframe. The example below returns two columns named input and output instead of the original names of the attributes.

If arbitrary output names are desired, e.g. names with spaces and symbols, we can leverage Python's double-asterisk idiom for unpacking a dictionary, as shown below.
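The double-asterisk idiom is plain Python and can be demonstrated on its own. The `select` function below is a stand-in written for this sketch (it simply returns the mapping it receives); the column names are arbitrary examples.

```python
def select(**columns):
    """Stand-in for a select-style method: maps output column name to attribute."""
    return columns


# Plain keyword arguments only allow valid Python identifiers as names.
simple = select(input="input.value", output="output.value")

# Dictionary unpacking allows names with spaces and symbols.
fancy = select(**{"Value (Input)": "input.value", "Value (Output)": "output.value"})

print(list(fancy))
```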

If a different separator is desired, say \n************, it can be specified as follows.

This is useful for joining a span to its parent span. To do that we would first index the child span by selecting its parent ID and renaming it as span_id. This works because span_id is a special column name: whichever column has that name will become the index of the output DataFrame.

Phoenix also provides helper functions that execute predefined queries for the following use cases.

The query below will automatically export any tool calls selected by LLM calls. The output DataFrame can be easily combined with Agent Function Calling Eval.

| span_id | input | output | reference |
| --- | --- | --- | --- |
| CDBC4CE34 | What was the author's trick for ... | The author's trick for ... | Even then it took me several years to understand ... |
| ... | ... | ... | ... |

Sometimes you may want to back up your Phoenix traces to a single file, rather than exporting specific spans to run evaluation.

Use the following command to save all traces from a Phoenix instance to a designated location.

You can specify the directory to save your traces by passing a directory argument to the save method.

This outputs the trace ID and prints the path of the saved file:

💾 Trace dataset saved to under ID: f7733fda-6ad6-4427-a803-55ad2182b662

📂 Trace dataset path: /my_saved_traces/trace_dataset-f7733fda-6ad6-4427-a803-55ad2182b662.parquet

Span annotations can be an extremely valuable basis for improving your application. The Phoenix client provides useful ways to pull down spans and their associated annotations. This information can be used to:

build new LLM judges

form the basis for new datasets

help identify ideas for improving your application

If you only want the spans that contain a specific annotation, you can pass in a query that filters on annotation names, scores, or labels.

The queries can also filter by annotation scores and labels.

This spans dataframe can be used to pull associated annotations.

Instead of an input dataframe, you can also pass in a list of ids:

The annotations and spans dataframes can be easily joined to produce a one-row-per-annotation dataframe that can be used to analyze the annotations!

Learn how to block PII from logging to Phoenix

Learn how to selectively block or turn off tracing

Learn how to send only certain spans to Phoenix

Learn how to trace images

In some situations, you may need to modify the observability level of your tracing. For instance, you may want to keep sensitive information from being logged for security reasons, or you may want to limit the size of the base64 encoded images logged to reduce payload size.

The OpenInference Specification defines a set of environment variables you can configure to suit your observability needs. In addition, the OpenInference auto-instrumentors accept a trace config which allows you to set these values in code without having to set environment variables, if that's what you prefer.

The possible settings are:

| Environment variable | Effect | Type | Default |
| --- | --- | --- | --- |
| OPENINFERENCE_HIDE_INPUTS | Hides input value, all input messages & embedding input text | bool | False |
| OPENINFERENCE_HIDE_OUTPUTS | Hides output value & all output messages | bool | False |
| OPENINFERENCE_HIDE_INPUT_MESSAGES | Hides all input messages & embedding input text | bool | False |
| OPENINFERENCE_HIDE_OUTPUT_MESSAGES | Hides all output messages | bool | False |
| OPENINFERENCE_HIDE_INPUT_IMAGES | Hides images from input messages | bool | False |
| OPENINFERENCE_HIDE_INPUT_TEXT | Hides text from input messages & input embeddings | bool | False |
| OPENINFERENCE_HIDE_OUTPUT_TEXT | Hides text from output messages | bool | False |
| OPENINFERENCE_HIDE_EMBEDDING_VECTORS | Hides returned embedding vectors | bool | False |
| OPENINFERENCE_HIDE_LLM_INVOCATION_PARAMETERS | Hides LLM invocation parameters | bool | False |
| OPENINFERENCE_BASE64_IMAGE_MAX_LENGTH | Limits characters of a base64 encoding of an image | int | 32,000 |

To set up this configuration you can either:

Set environment variables as specified above

Define the configuration in code as shown below

Do nothing and fall back to the default values

Use a combination of the three. The order of precedence is:

Values set in the TraceConfig in code

Environment variables

Default values
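The precedence order above can be sketched as a small resolver. This is an illustration of the rule, not the library's implementation: the `resolve_setting` helper is hypothetical, and treating the string "true" as the enabled value is an assumption about how boolean environment variables are parsed.

```python
import os


def resolve_setting(env_name, code_value=None, default=False):
    """Resolve a boolean setting: code value, then environment, then default."""
    if code_value is not None:
        return code_value
    raw = os.environ.get(env_name)
    if raw is not None:
        return raw.strip().lower() == "true"
    return default


os.environ["OPENINFERENCE_HIDE_INPUTS"] = "true"
os.environ.pop("OPENINFERENCE_HIDE_OUTPUTS", None)

# A value set in code wins over the environment variable.
from_code = resolve_setting("OPENINFERENCE_HIDE_INPUTS", code_value=False)
# With no value set in code, the environment variable is used.
from_env = resolve_setting("OPENINFERENCE_HIDE_INPUTS")
# With neither set, the default applies.
from_default = resolve_setting("OPENINFERENCE_HIDE_OUTPUTS")

print(from_code, from_env, from_default)
```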

Below is an example of how to set these values in code using our OpenAI Python and JavaScript instrumentors. The config is respected by all of our auto-instrumentors.

Tracing can be paused temporarily or disabled permanently.

If there is a section of your code for which tracing is not desired, e.g. the document chunking process, it can be put inside the suppress_tracing context manager as shown below.

Calling .uninstrument() on the auto-instrumentors will remove tracing permanently. Below are examples for LangChain, LlamaIndex and OpenAI, respectively.

Sometimes while instrumenting your application, you may want to filter out or modify certain spans before they are sent to Phoenix. For example, you may want to filter out spans that contain sensitive or redundant information.

To do this, you can use a custom SpanProcessor and attach it to the OpenTelemetry TracerProvider.

In this example, we're filtering out any spans that have the name "secret_span" by bypassing the on_start and on_end hooks of the inherited BatchSpanProcessor.

Notice that this logic can be extended to modify a span and redact sensitive information if preserving the span is preferred.
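The override pattern described above can be sketched without any dependencies. `RecordingProcessor` is a stand-in for BatchSpanProcessor written for this example, and spans are modeled as plain dictionaries; in real code the subclass would inherit from the OpenTelemetry processor and read `span.name`.

```python
class RecordingProcessor:
    """Stand-in for BatchSpanProcessor: records the names of spans it handles."""

    def __init__(self):
        self.started, self.ended = [], []

    def on_start(self, span, parent_context=None):
        self.started.append(span["name"])

    def on_end(self, span):
        self.ended.append(span["name"])


class FilteringProcessor(RecordingProcessor):
    """Skips both hooks for spans that match the filter condition."""

    def _should_drop(self, span):
        return span["name"] == "secret_span"

    def on_start(self, span, parent_context=None):
        if self._should_drop(span):
            return
        super().on_start(span, parent_context)

    def on_end(self, span):
        if self._should_drop(span):
            return
        super().on_end(span)


processor = FilteringProcessor()
for name in ["llm_call", "secret_span", "retriever"]:
    span = {"name": name}  # real spans are objects with a .name attribute
    processor.on_start(span)
    processor.on_end(span)

print(processor.ended)
```

To redact instead of drop, the same hooks could modify the span's contents and then call the parent implementation.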

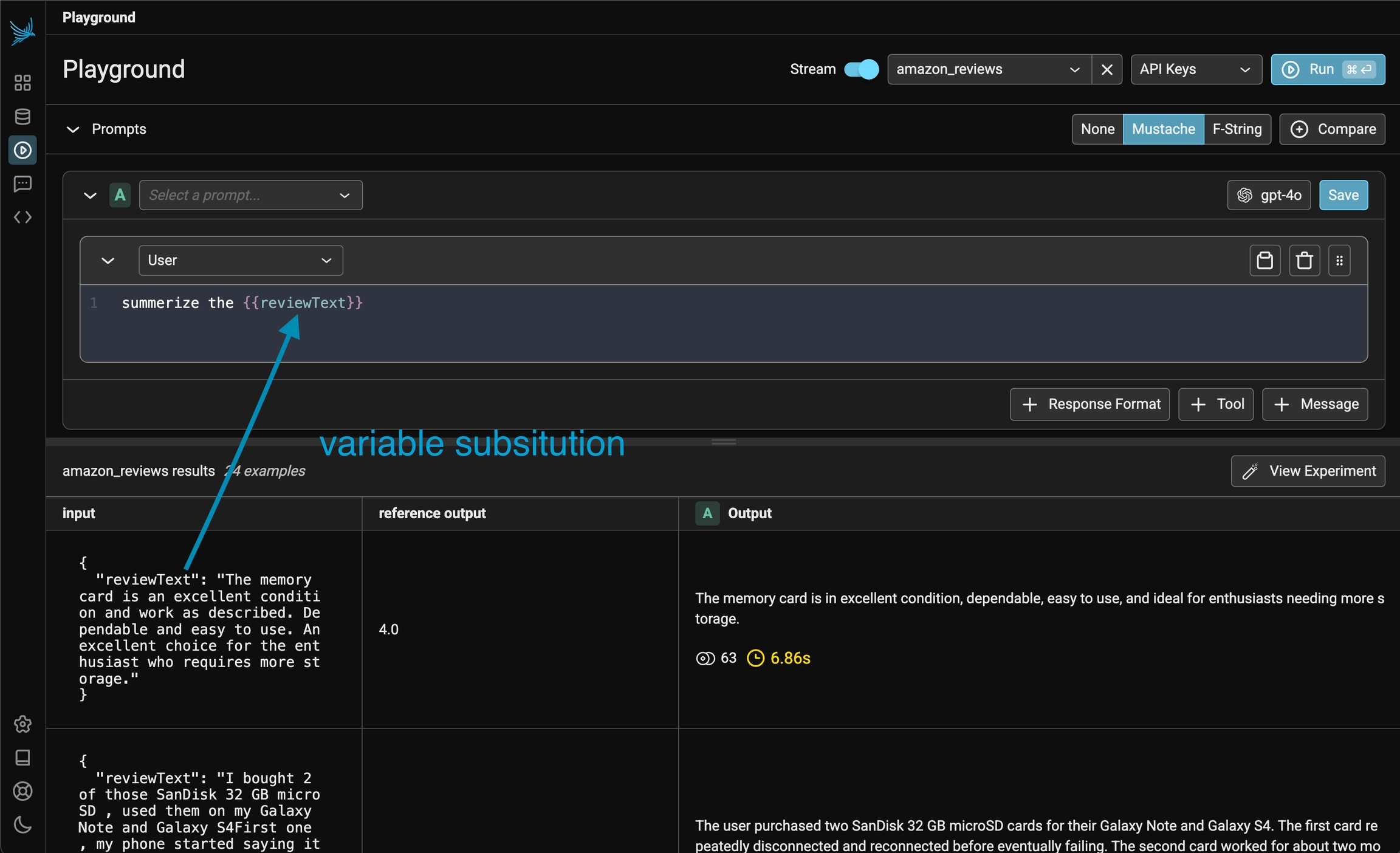

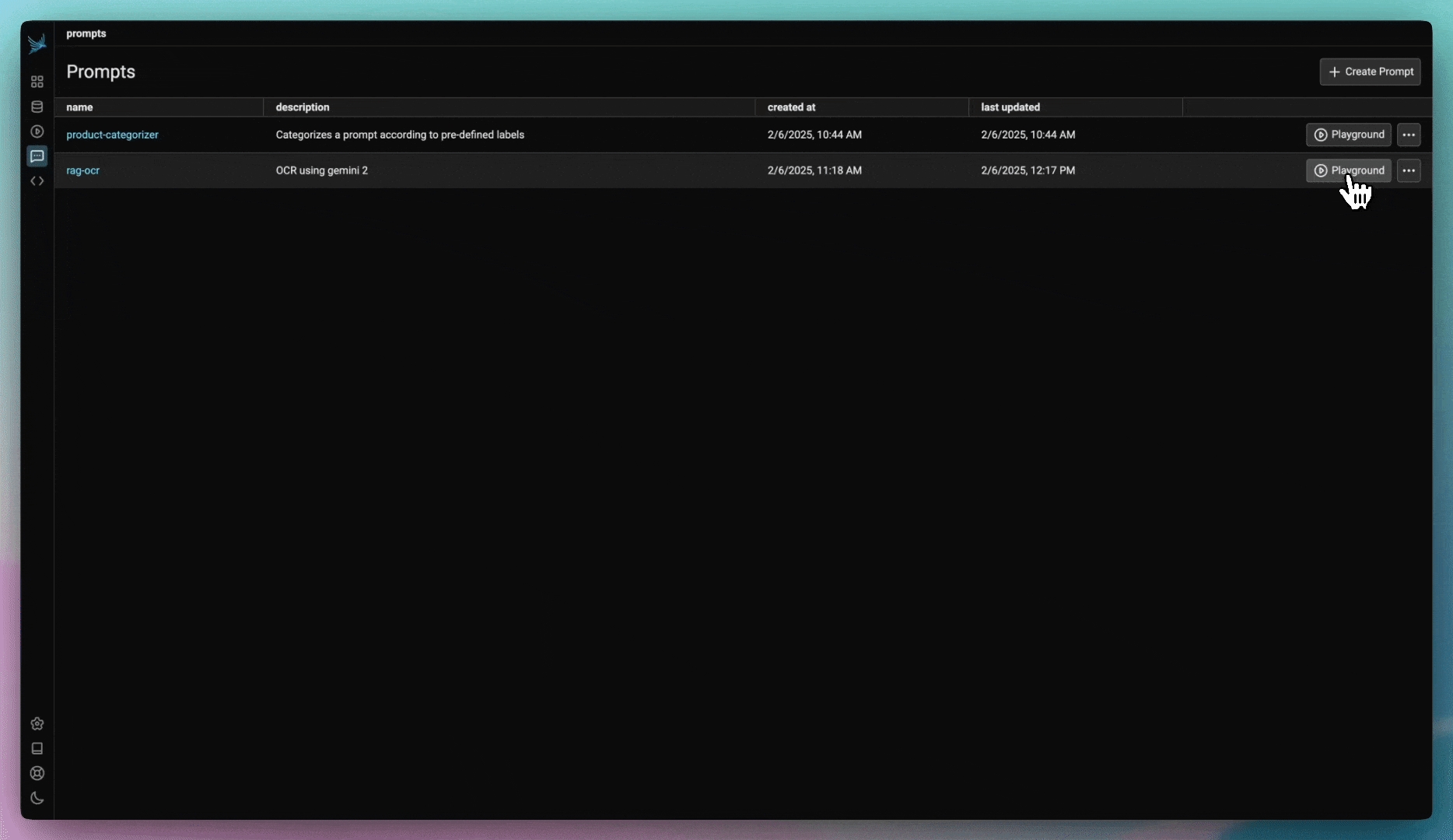

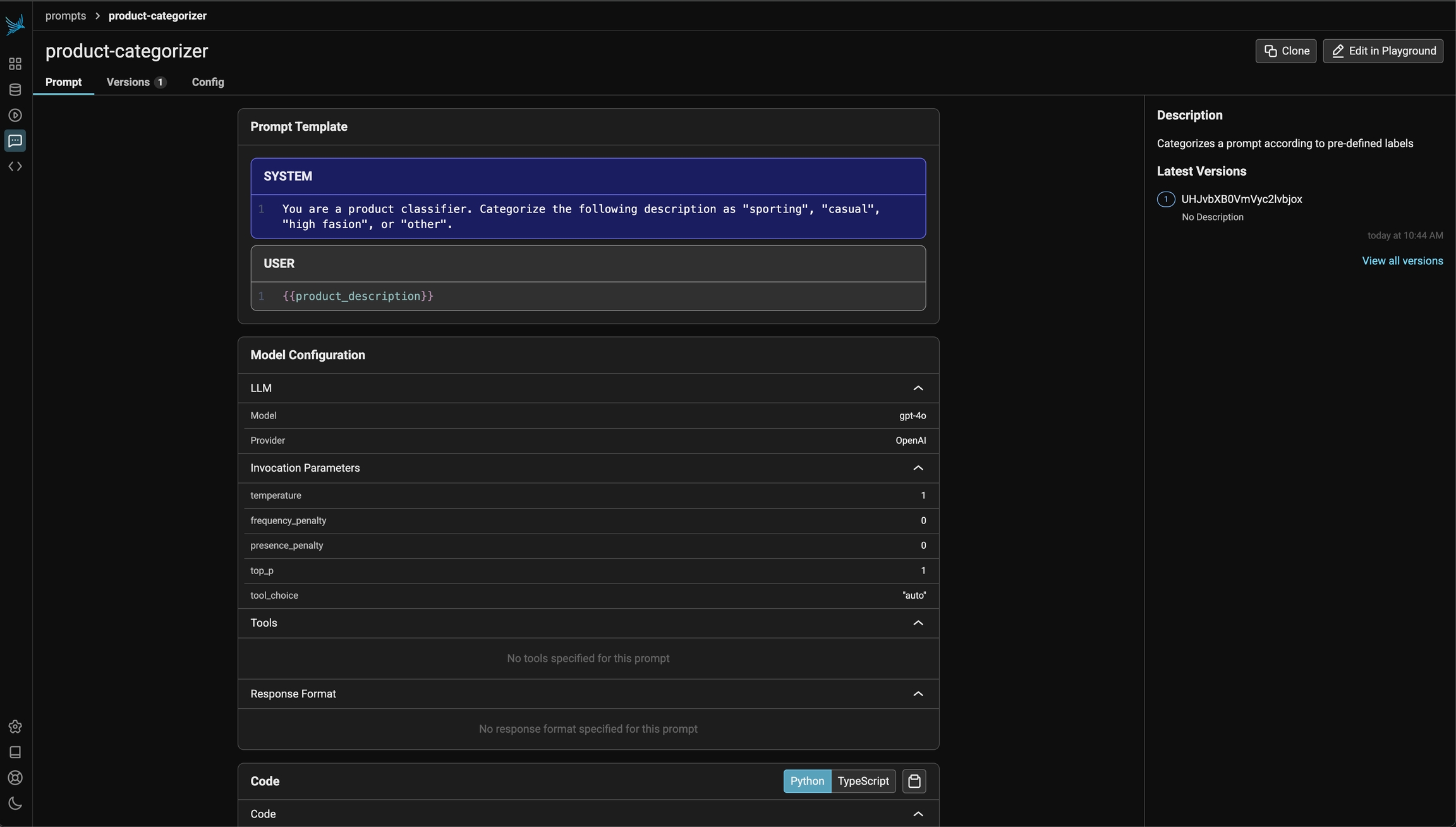

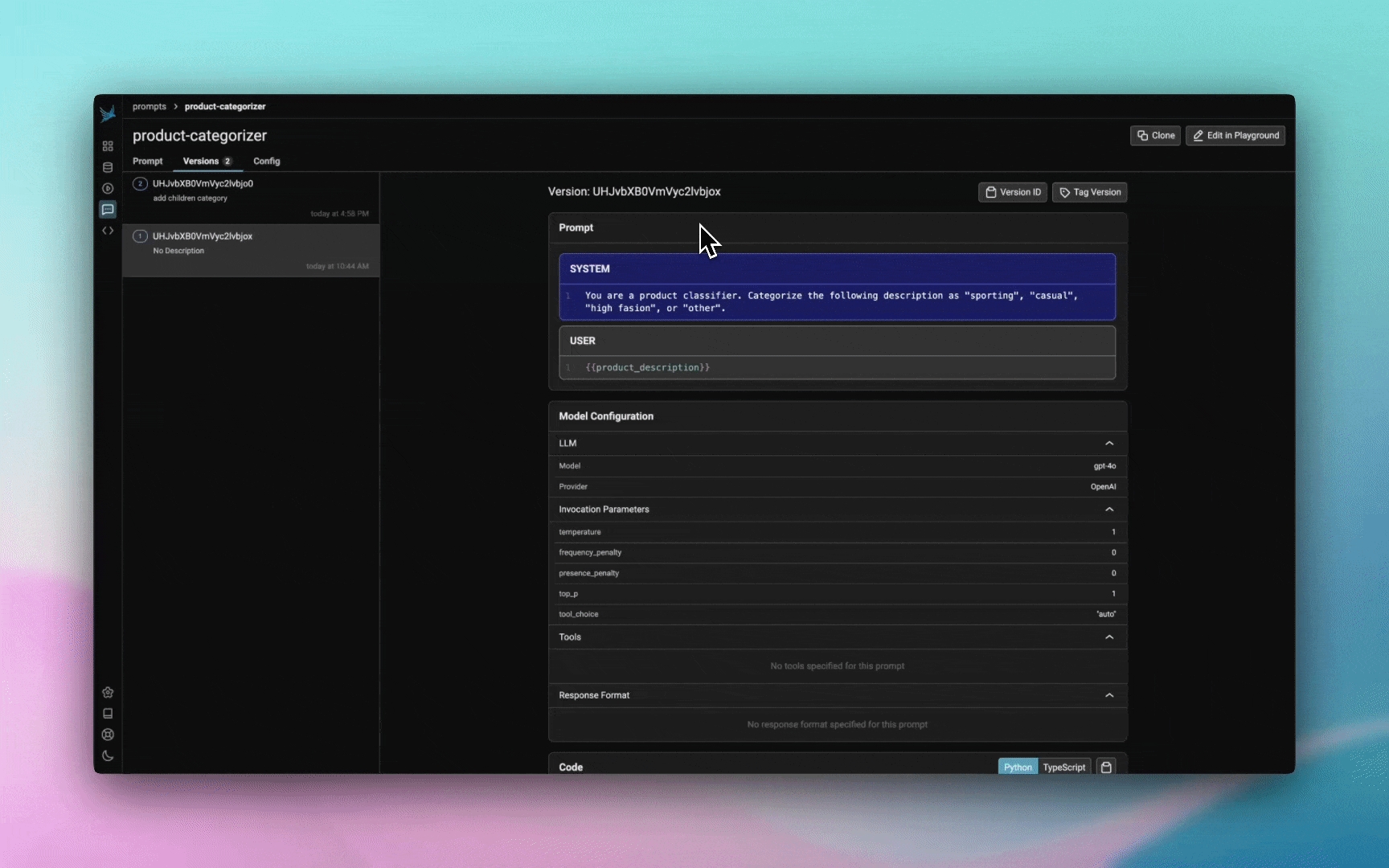

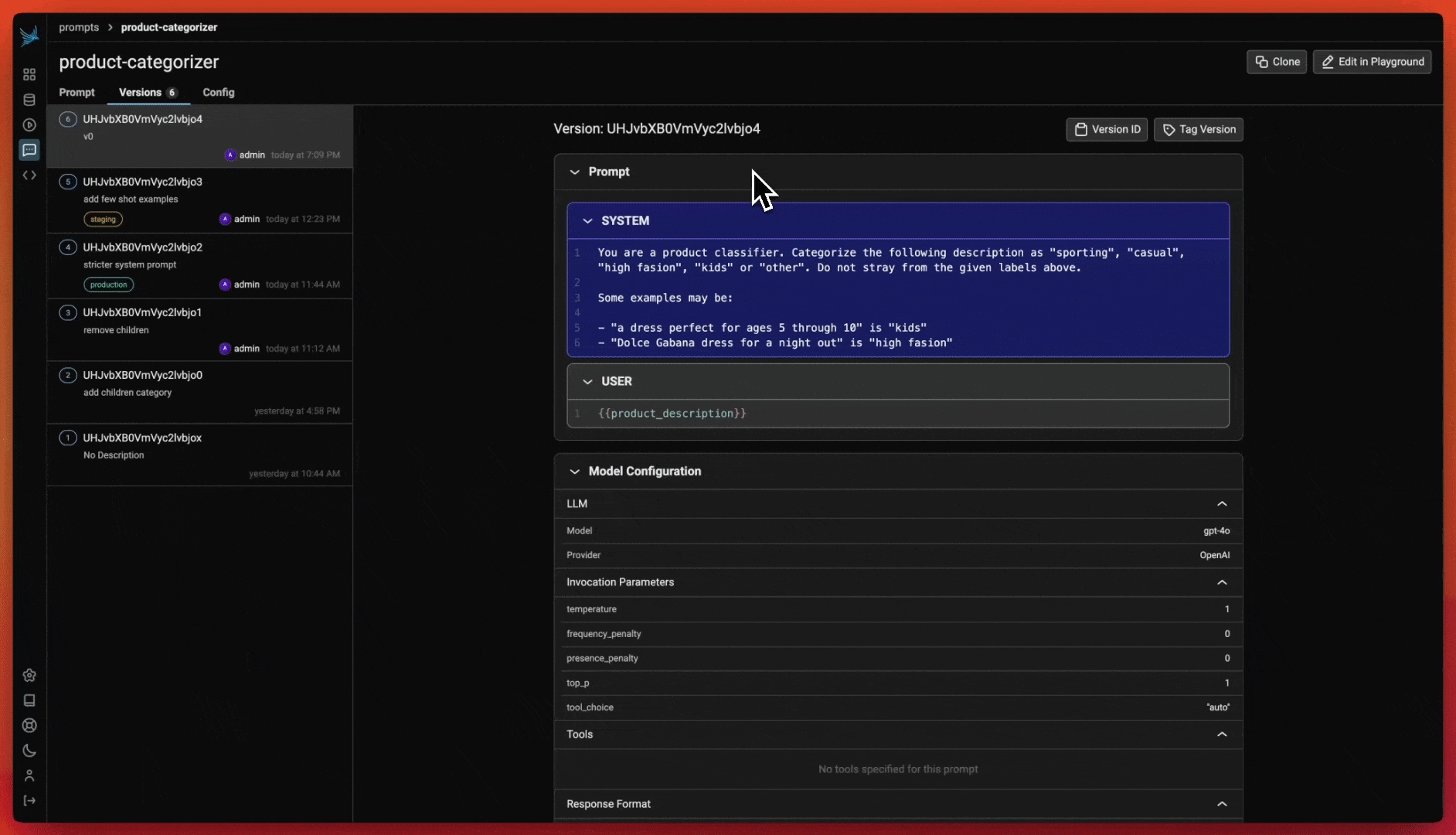

Prompt management allows you to create, store, and modify prompts for interacting with LLMs. By managing prompts systematically, you can improve reuse, consistency, and experiment with variations across different models and inputs.

Unlike traditional software, AI applications are non-deterministic and depend on natural language to provide context and guide model output. The pieces of natural language and associated model parameters embedded in your program are known as “prompts.”

Optimizing your prompts is typically the highest-leverage way to improve the behavior of your application, but “prompt engineering” comes with its own set of challenges. You want to be confident that changes to your prompts have the intended effect and don’t introduce regressions.

To get started, jump to Quickstart: Prompts.

Phoenix offers a comprehensive suite of features to streamline your prompt engineering workflow.

Version and track changes made to prompt templates

Key benefits of prompt management include:

Reusability: Store and load prompts across different use cases.

Versioning: Track changes over time to ensure that the best performing version is deployed for use in your application.

Collaboration: Share prompts with others to maintain consistency and facilitate iteration.

To learn how to get started with prompt management, see Create a prompt

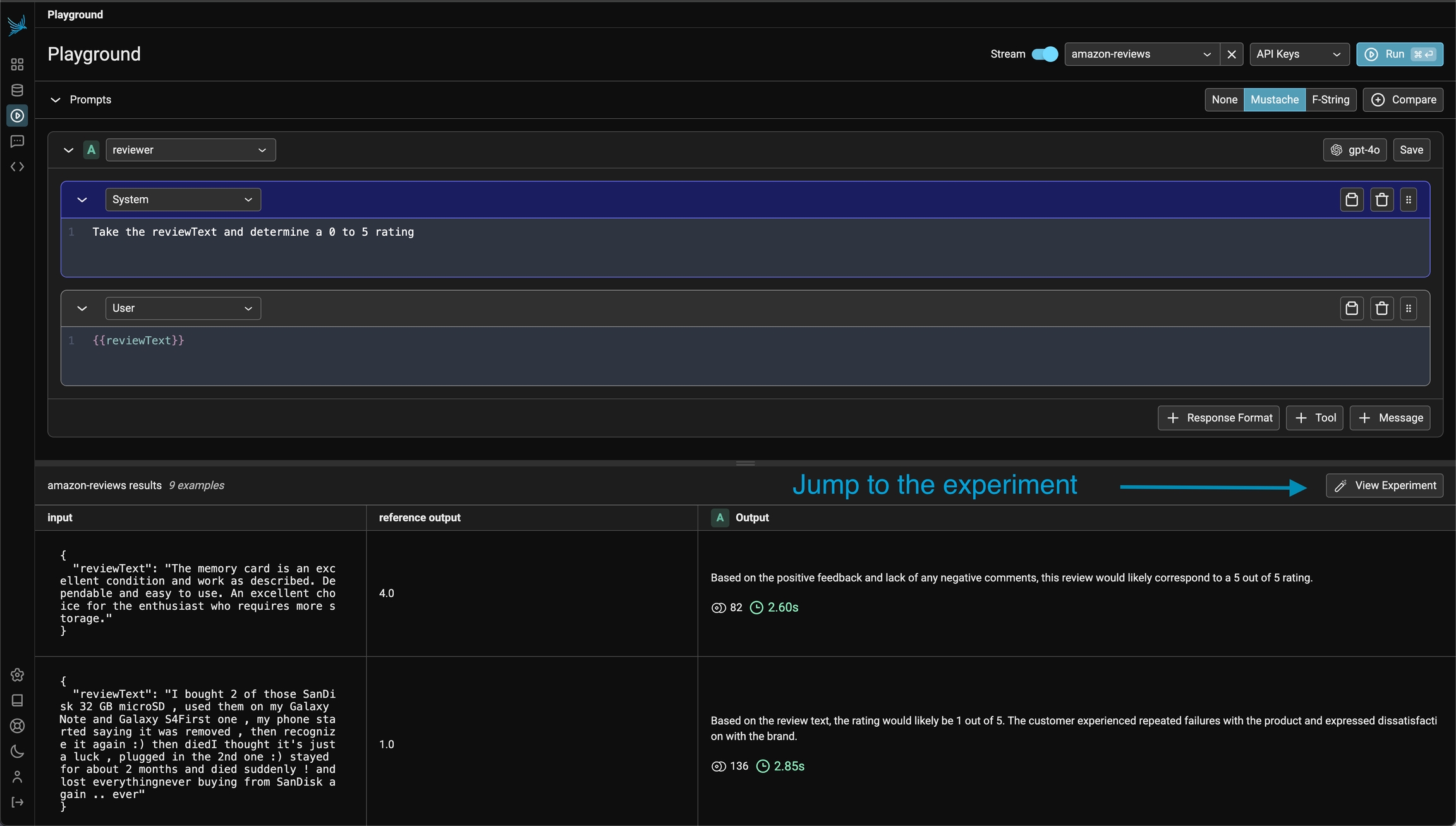

Replay LLM spans traced in your application directly in the playground

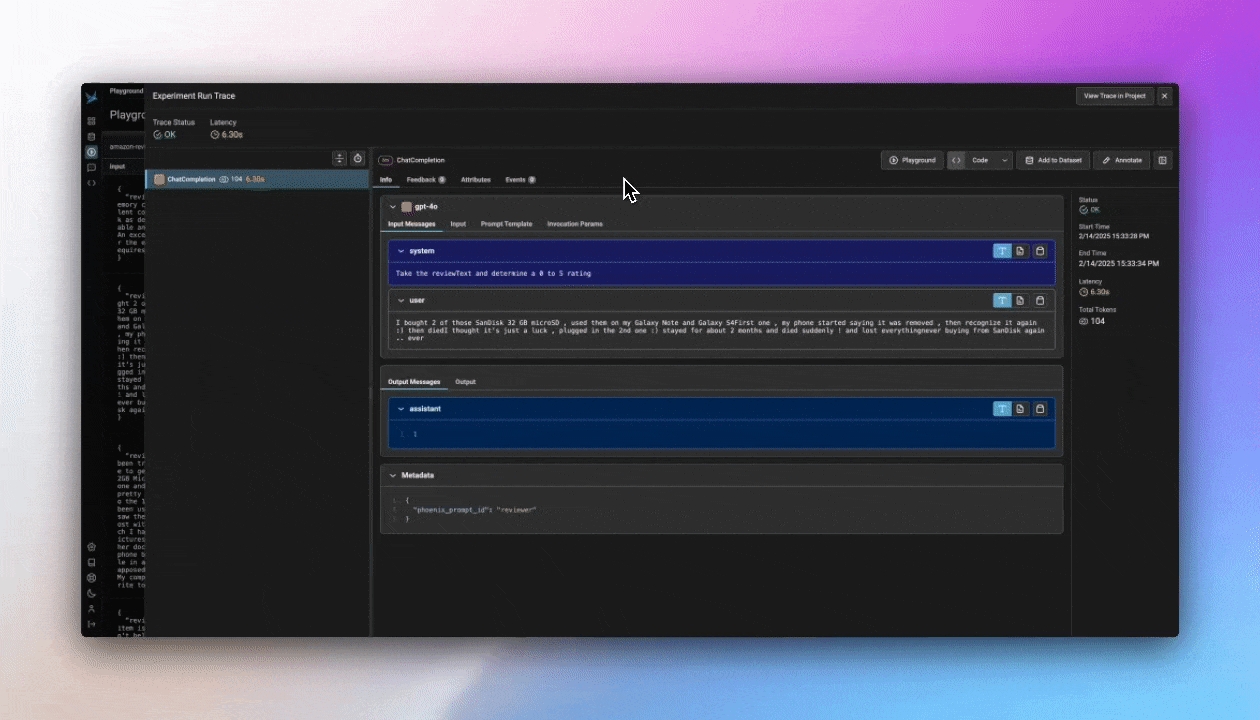

Have you ever wanted to go back into a multi-step LLM chain and just replay one step to see if you could get a better outcome? Well you can with Phoenix's Span Replay. LLM spans that are stored within Phoenix can be loaded into the Prompt Playground and replayed. Replaying spans inside of Playground enables you to debug and improve the performance of your LLM systems by comparing LLM provider outputs, tweaking model parameters, changing prompt text, and more.

Chat completions generated inside of Playground are automatically instrumented, and the recorded spans are immediately available to be replayed inside of Playground.

Pull and push prompt changes via Phoenix's Python and TypeScript Clients

Using Phoenix as a backend, Prompts can be managed and manipulated via code by using our Python or TypeScript SDKs.

With the Phoenix Client SDK you can:

To learn more about managing Prompts in code, see Using a prompt

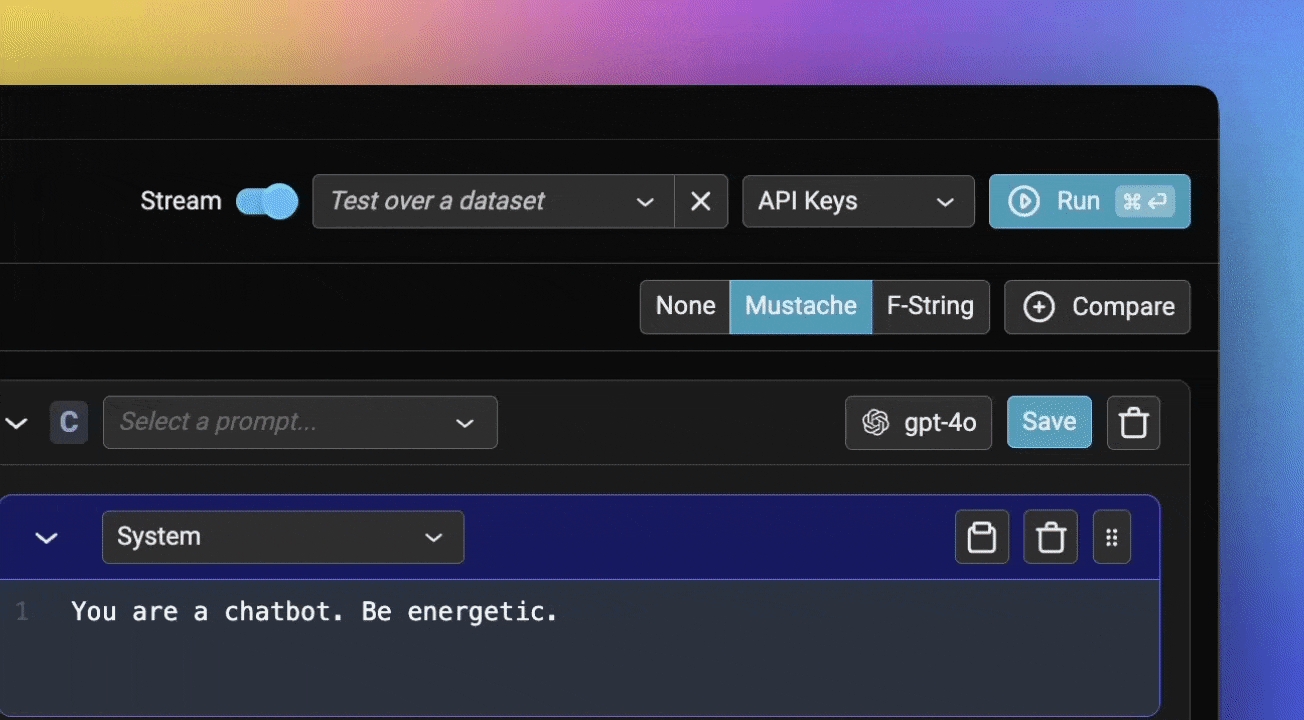

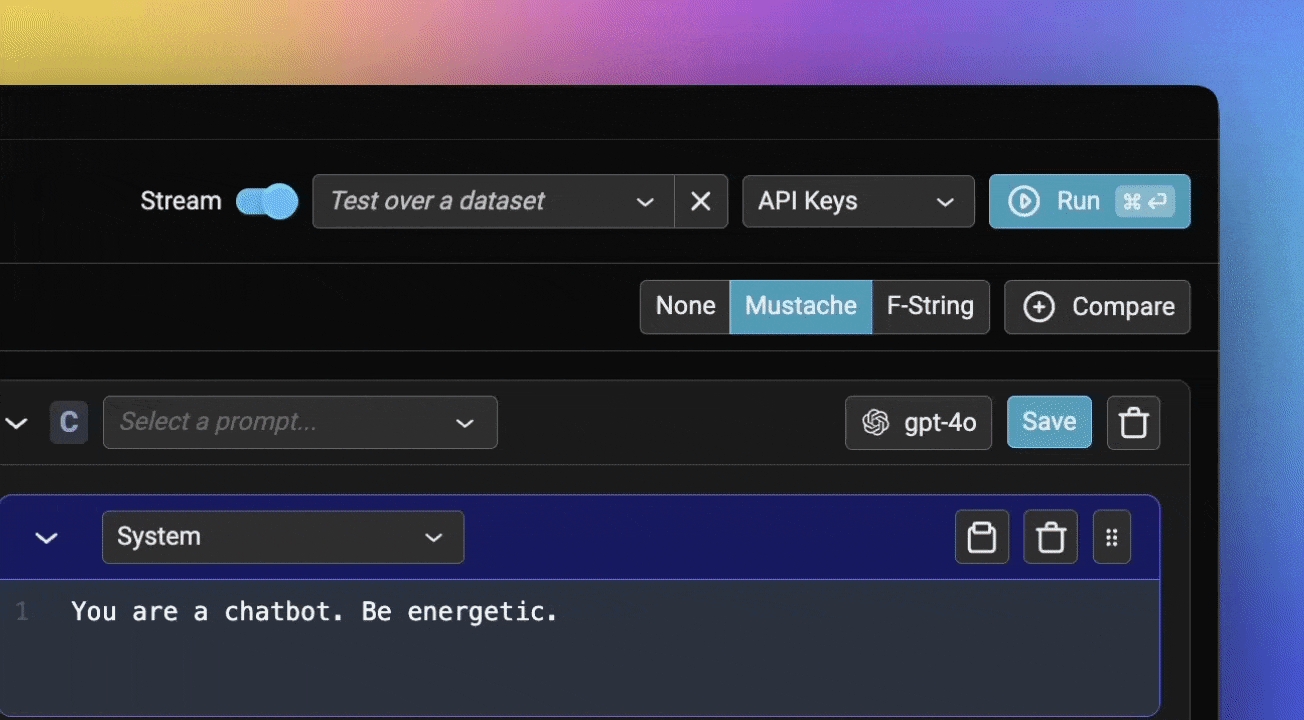

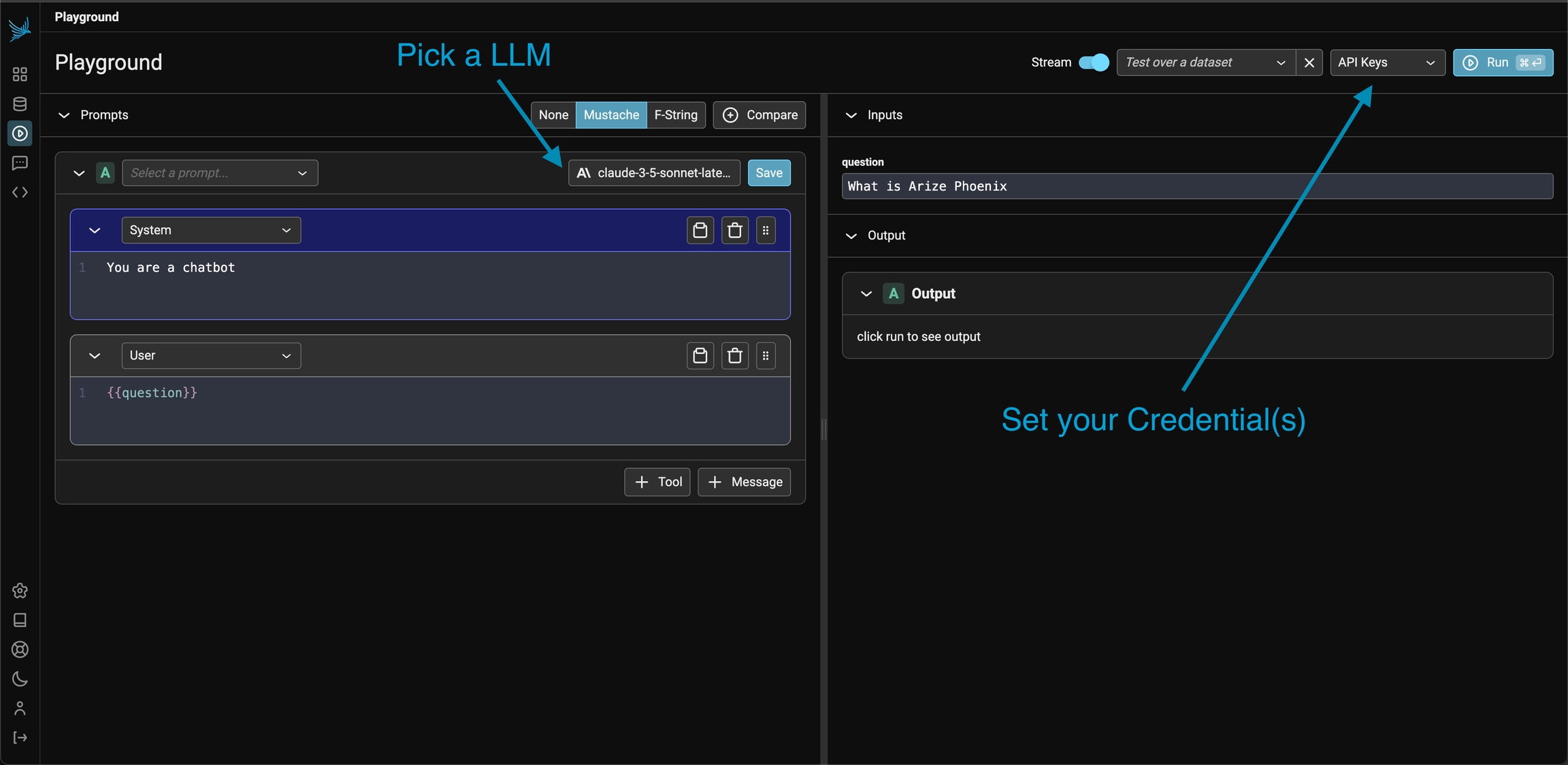

Prompt playground can be accessed from the left navbar of Phoenix.

From here, you can directly prompt your model by modifying either the system or user prompt, and pressing the Run button on the top right.

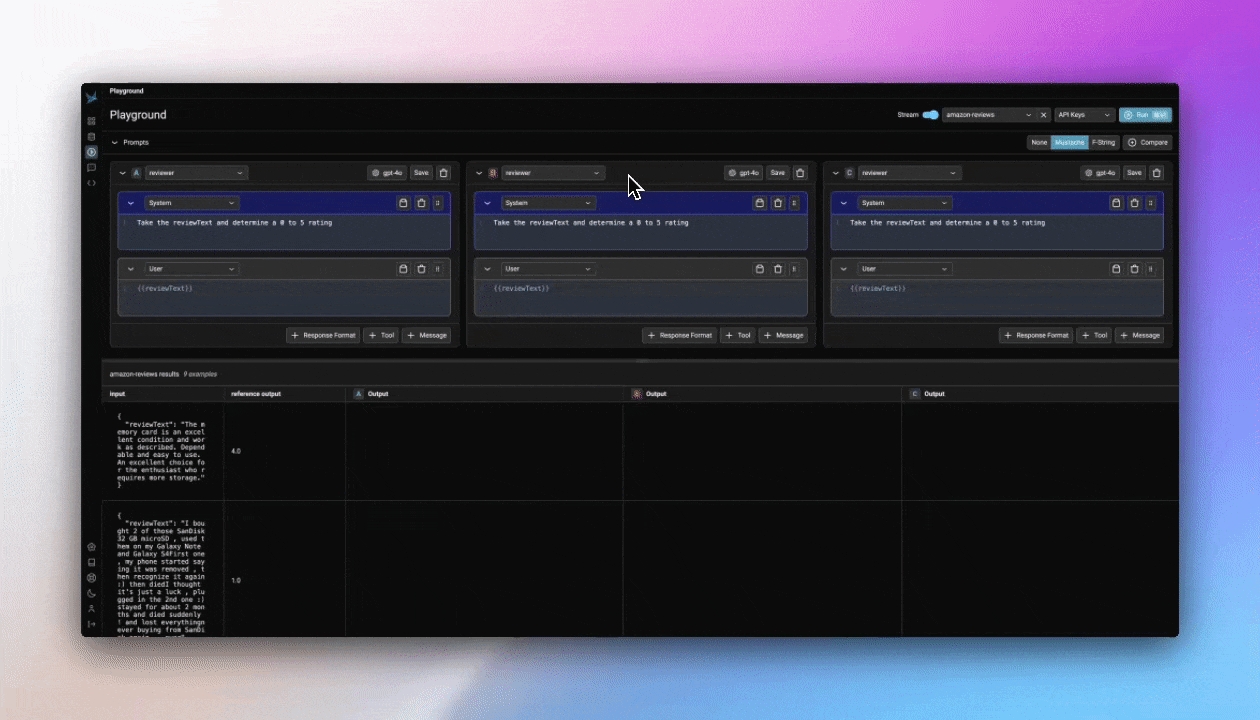

Let's start by comparing a few different prompt variations. Add two additional prompts using the +Prompt button, and update the system and user prompts like so:

System prompt #1:

System prompt #2:

System prompt #3:

User prompt (use this for all three):

Your playground should look something like this:

Let's run it and compare results:

Your prompt will be saved in the Prompts tab:

Now you're ready to see how that prompt performs over a larger dataset of examples.

Next, create a new dataset from the Datasets tab in Phoenix, and specify the input and output columns like so:

Now we can return to Prompt Playground, and this time choose our new dataset from the "Test over dataset" dropdown.

You can also load in your saved Prompt:

We'll also need to update our prompt to look for the {{input_article}} column in our dataset. After adding this in, be sure to save your prompt once more!

Now if we run our prompt(s), each row of the dataset will be run through each variation of our prompt.

And if you return to view your dataset, you'll see the details of that run saved as an experiment.

You can now easily modify your prompt or compare different versions side-by-side. Let's say you've found a stronger version of the prompt. Save your updated prompt once again, and you'll see it added as a new version under your existing prompt:

You can also tag which version you've deemed ready for production, and view code to access your prompt in code further down the page.

Now you're ready to create, test, save, and iterate on your Prompts in Phoenix! Check out our other quickstarts to see how to use Prompts in code.

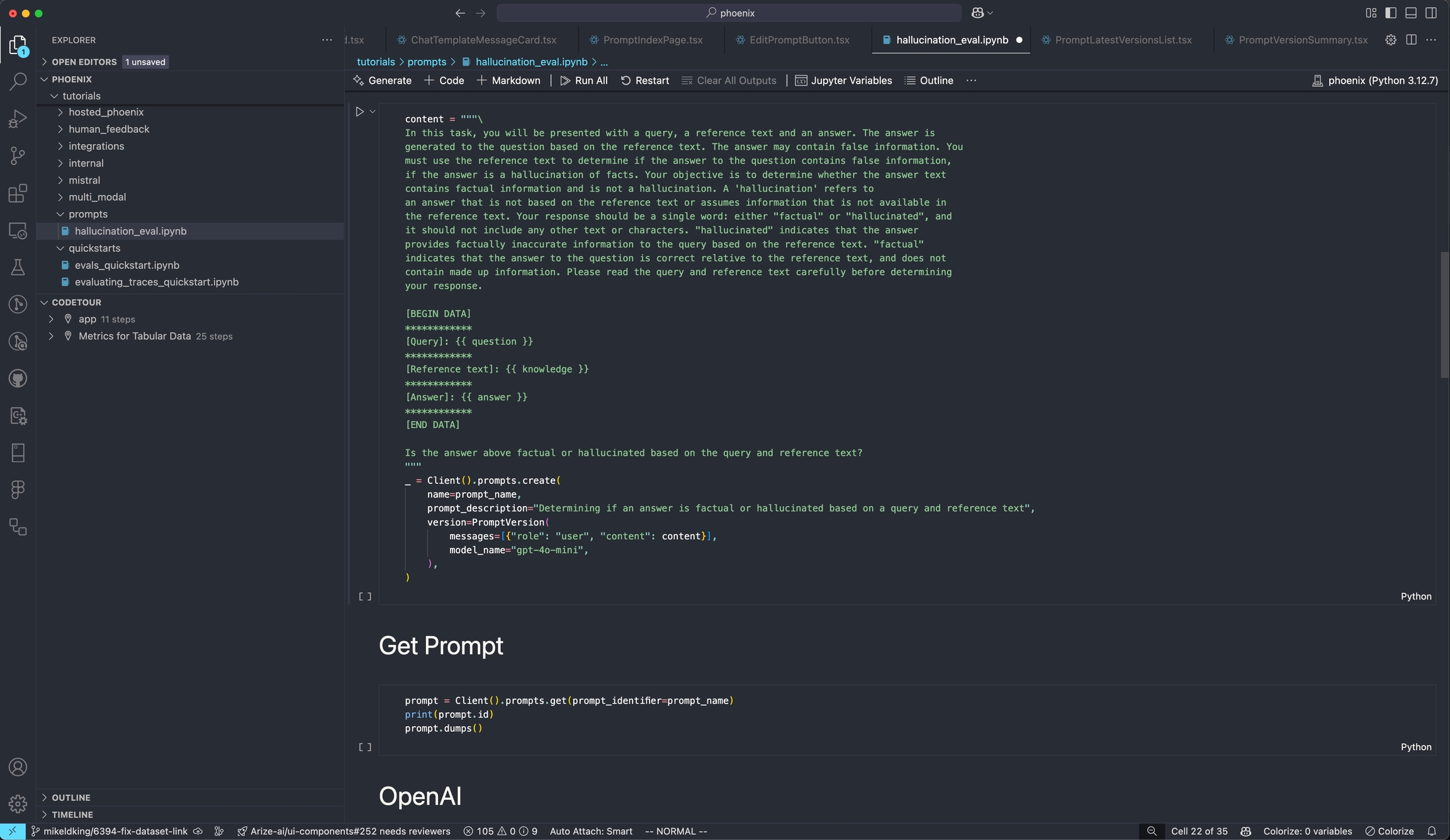

This guide will show you how to set up and use Prompts through Phoenix's Python SDK.

Start out by installing the Phoenix library:

You'll need to specify your Phoenix endpoint before you can interact with the Client. The easiest way to do this is through an environment variable.

Now you can create a prompt. In this example, you'll create a summarization Prompt.

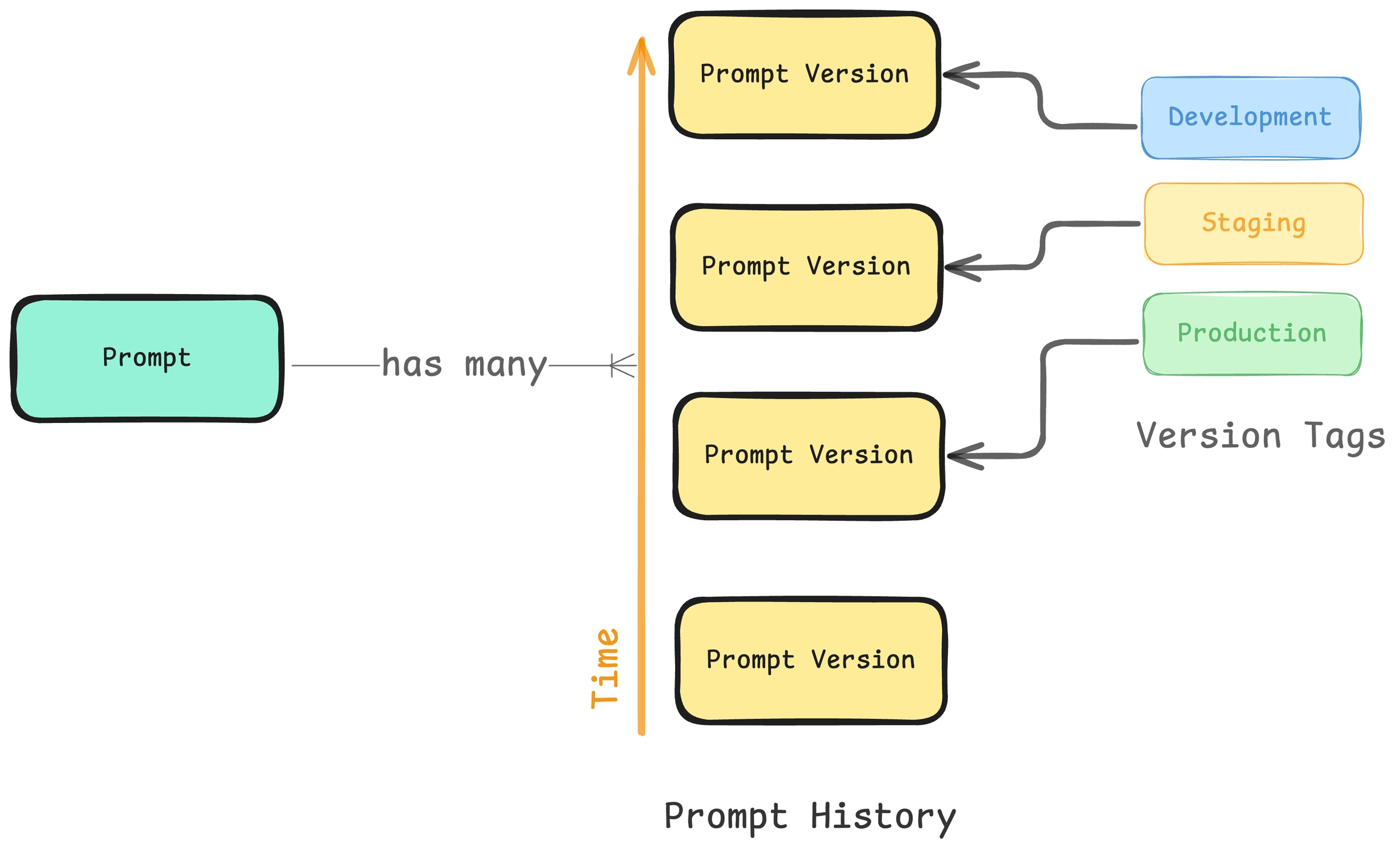

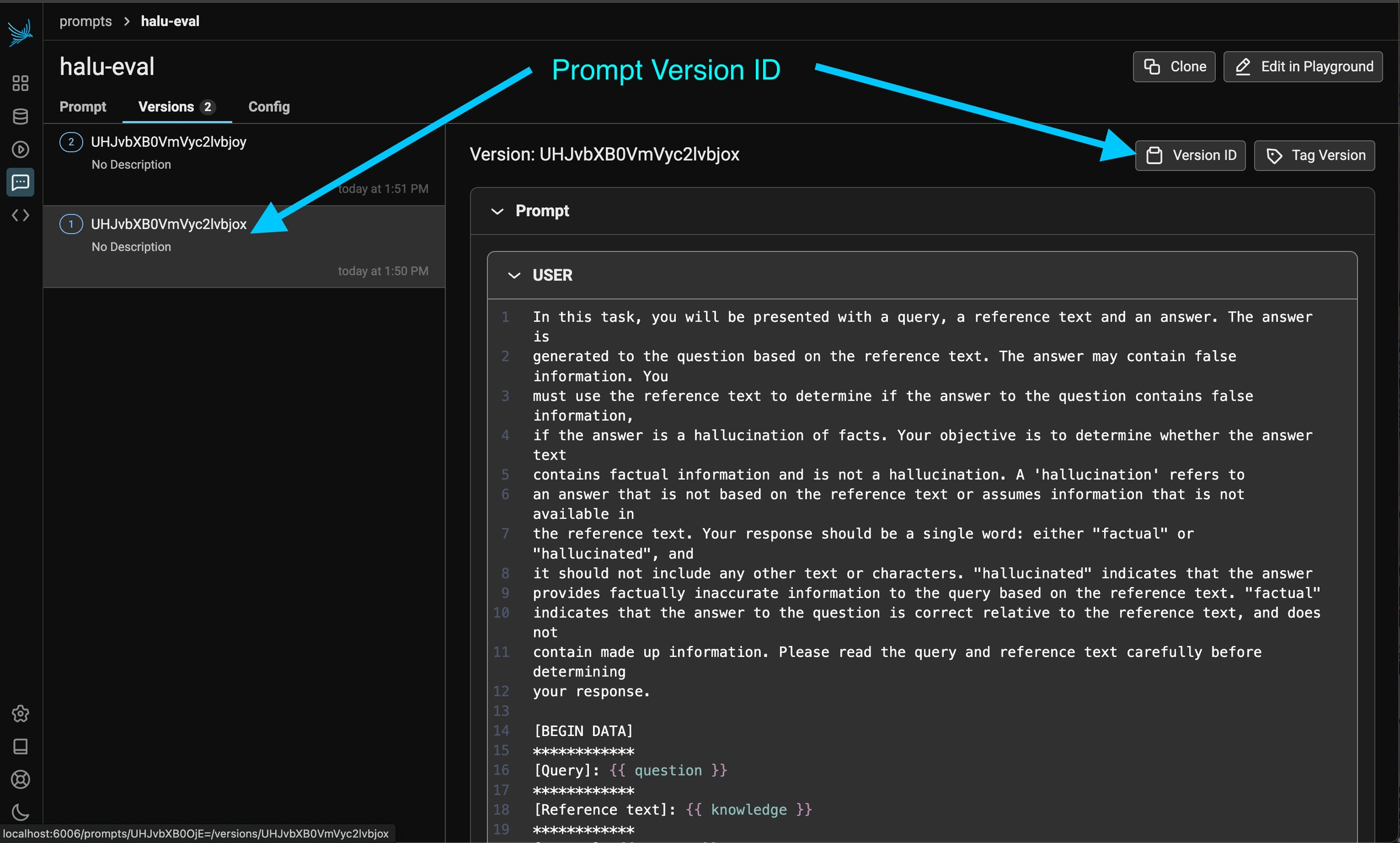

Prompts in Phoenix have names, as well as multiple versions. When you create your prompt, you'll define its name. Then, each time you update your prompt, that will create a new version of the prompt under the same name.

Your prompt will now appear in your Phoenix dashboard:

You can retrieve a prompt by name, tag, or version:

To use a prompt, call the prompt.format() function. Any {{ variables }} in the prompt can be set by passing in a dictionary of values.
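To illustrate what variable substitution does, here is a small standalone sketch. The `render` helper is hypothetical and is not how prompt.format() is implemented; it only shows {{ name }} placeholders being filled from a dictionary. The template text and values are made up.

```python
import re


def render(template: str, variables: dict) -> str:
    """Replace each {{ name }} placeholder with its value from `variables`."""

    def substitute(match):
        # Raises KeyError if a placeholder has no matching variable.
        return str(variables[match.group(1)])

    return re.sub(r"\{\{\s*(\w+)\s*\}\}", substitute, template)


template = "Summarize the following article in {{ style }} form:\n{{ article }}"
rendered = render(
    template,
    {"style": "bullet-point", "article": "Phoenix traces LLM apps."},
)
print(rendered)
```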

To update a prompt with a new version, simply call the create function using the existing prompt name:

The new version will appear in your Phoenix dashboard:

Congratulations! You can now create, update, access and use prompts using the Phoenix SDK!

From here, check out:

This guide will walk you through setting up and using Phoenix Prompts with TypeScript.

Let's start by creating a simple prompt in Phoenix using the TypeScript client:

You can retrieve prompts by name, ID, version, or tag:

Phoenix makes it easy to use your prompts with various SDKs, no proprietary SDK necessary! Here's how to use a prompt with OpenAI:

Check out the How to: Prompts section for details on how to test prompt changes

Guides on how to do prompt engineering with Phoenix

Configure AI Providers - how to configure API keys for OpenAI, Anthropic, Gemini, and more.

Organize and manage prompts with Phoenix to streamline your development workflow

Create a prompt - how to create, update, and track prompt changes

Test a prompt - how to test changes to a prompt in the playground and in the notebook

Tag a prompt - how to mark certain prompt versions as ready for

Using a prompt - how to integrate prompts into your code and experiments

Iterate on prompts and models in the prompt playground

Using the Playground - how to set up the playground and how to test prompt changes via datasets and experiments.

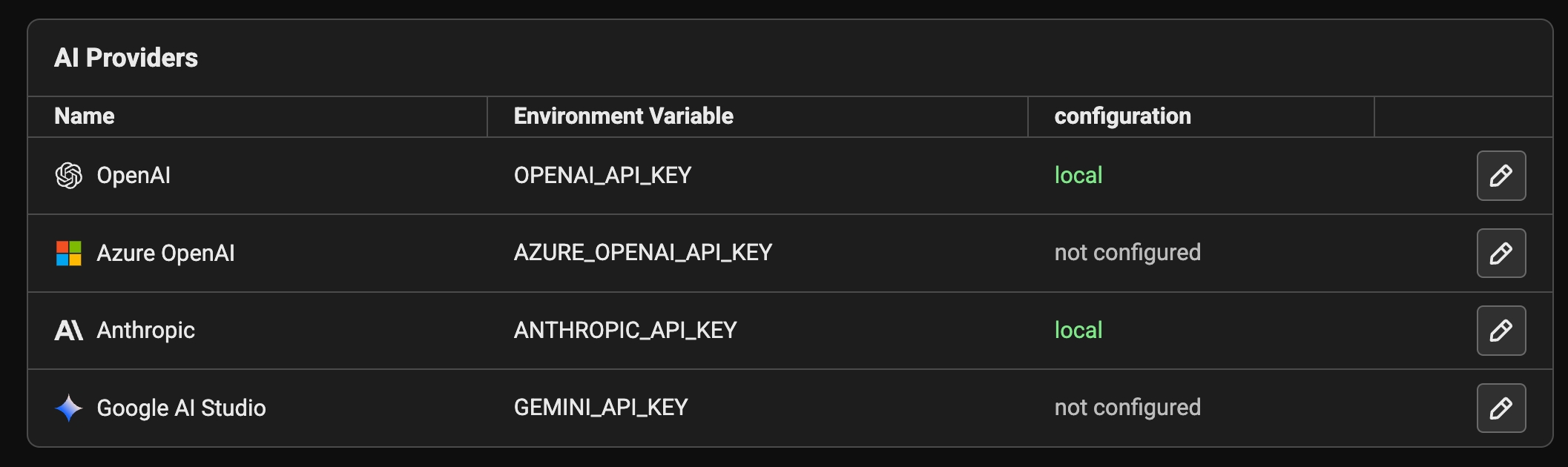

Phoenix natively integrates with OpenAI, Azure OpenAI, Anthropic, and Google AI Studio (Gemini) to make it easy to test changes to your prompts. In addition, since many AI providers (e.g. DeepSeek, Ollama) can be used directly with the OpenAI client, you can talk to any OpenAI-compatible LLM provider.

To securely provide your API keys, you have two options. One is to store them in your browser in local storage. Alternatively, you can set them as environment variables on the server side. If both are set at the same time, the credential set in the browser will take precedence.

API keys can be entered in the playground application via the API Keys dropdown menu. This option stores API keys in the browser. Simply navigate to settings and set your API keys.

Available on self-hosted Phoenix

If the following variables are set in the server environment, they'll be used at API invocation time.

OpenAI

OPENAI_API_KEY

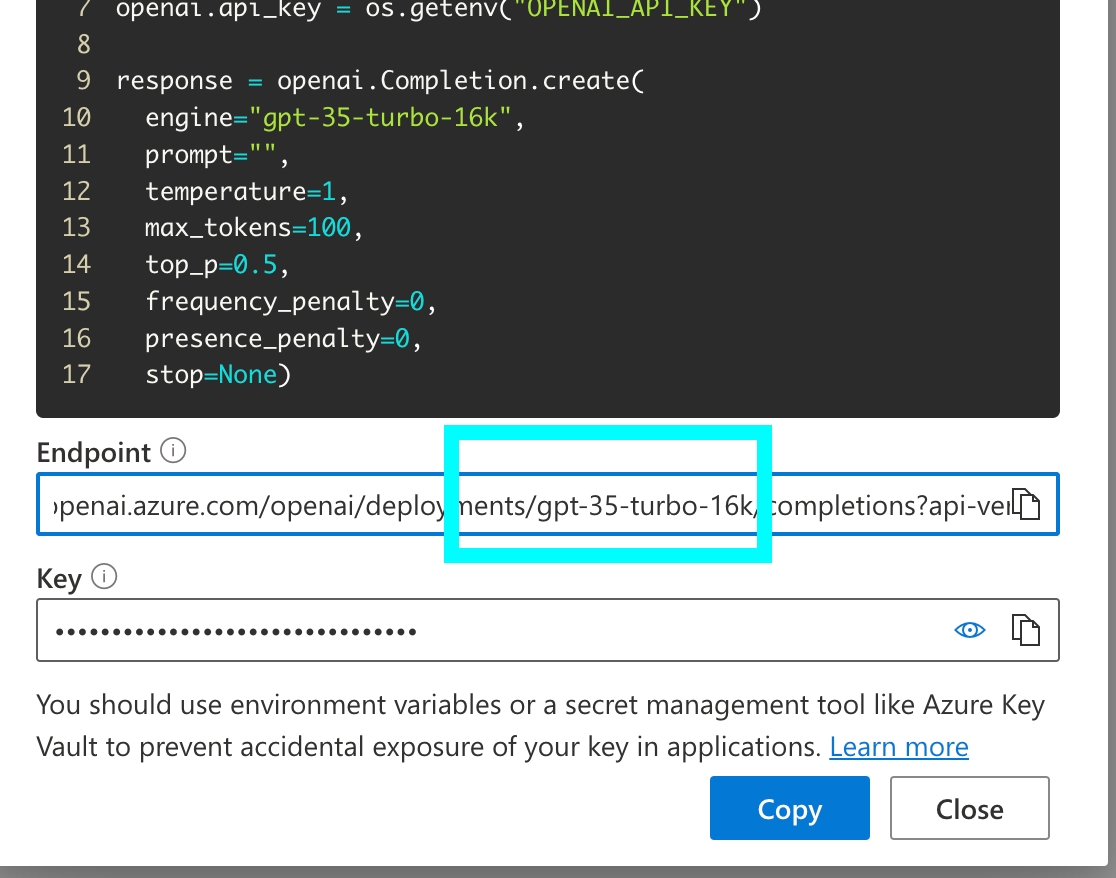

Azure OpenAI

AZURE_OPENAI_API_KEY

AZURE_OPENAI_ENDPOINT

OPENAI_API_VERSION

Anthropic

ANTHROPIC_API_KEY

Gemini

GEMINI_API_KEY or GOOGLE_API_KEY

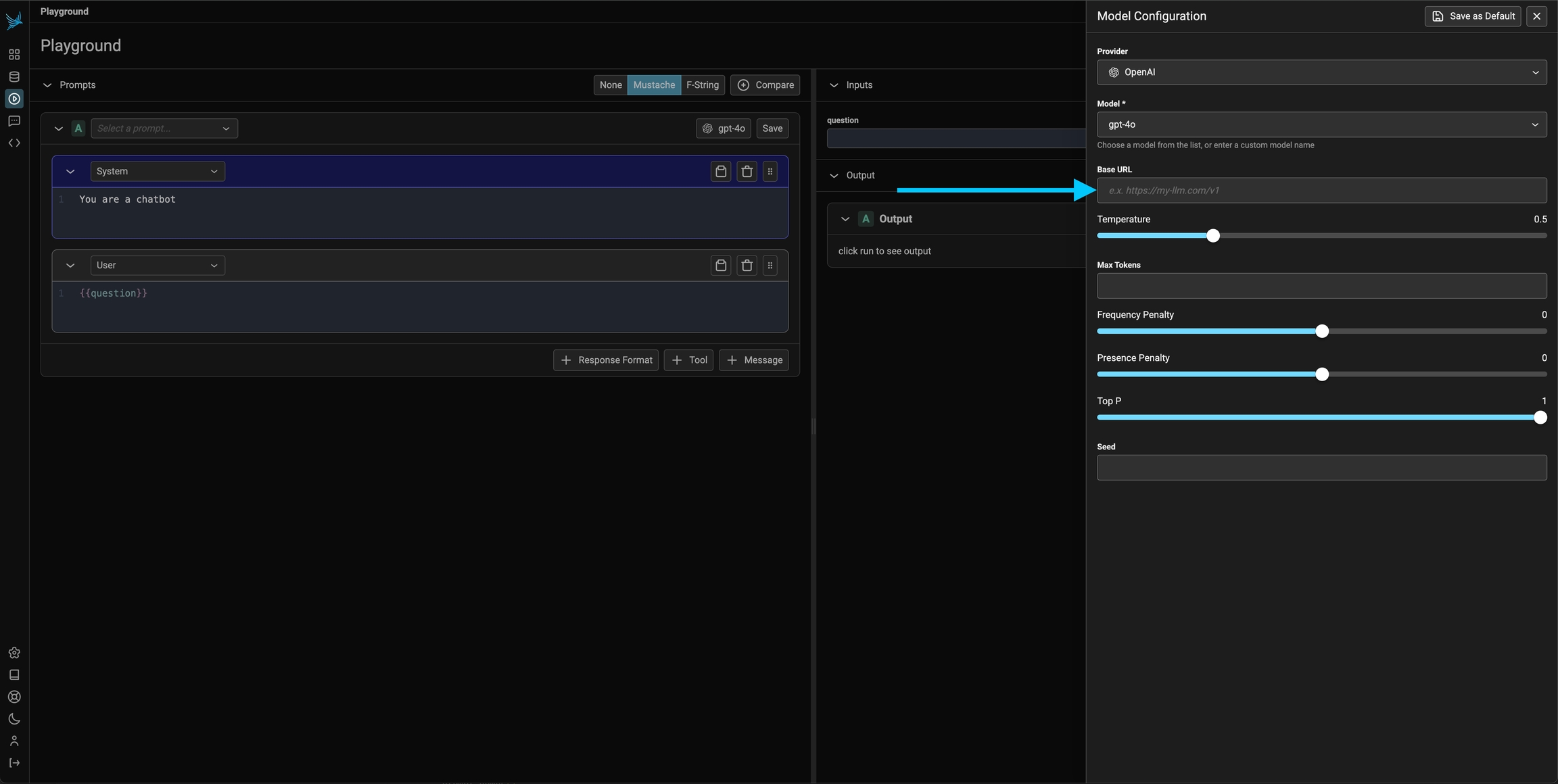

Since you can configure the base URL for the OpenAI client, you can use the prompt playground with a variety of OpenAI Client compatible LLMs such as Ollama, DeepSeek, and more.

OpenAI Client compatible providers include:

DeepSeek

Ollama

Optionally, the server can be configured with the OPENAI_BASE_URL environment variable to target any OpenAI-compatible REST API.

For app.phoenix.arize.com, this may fail due to security reasons. In that case, you'd see a Connection Error appear.

General guidelines on how to use Phoenix's prompt playground

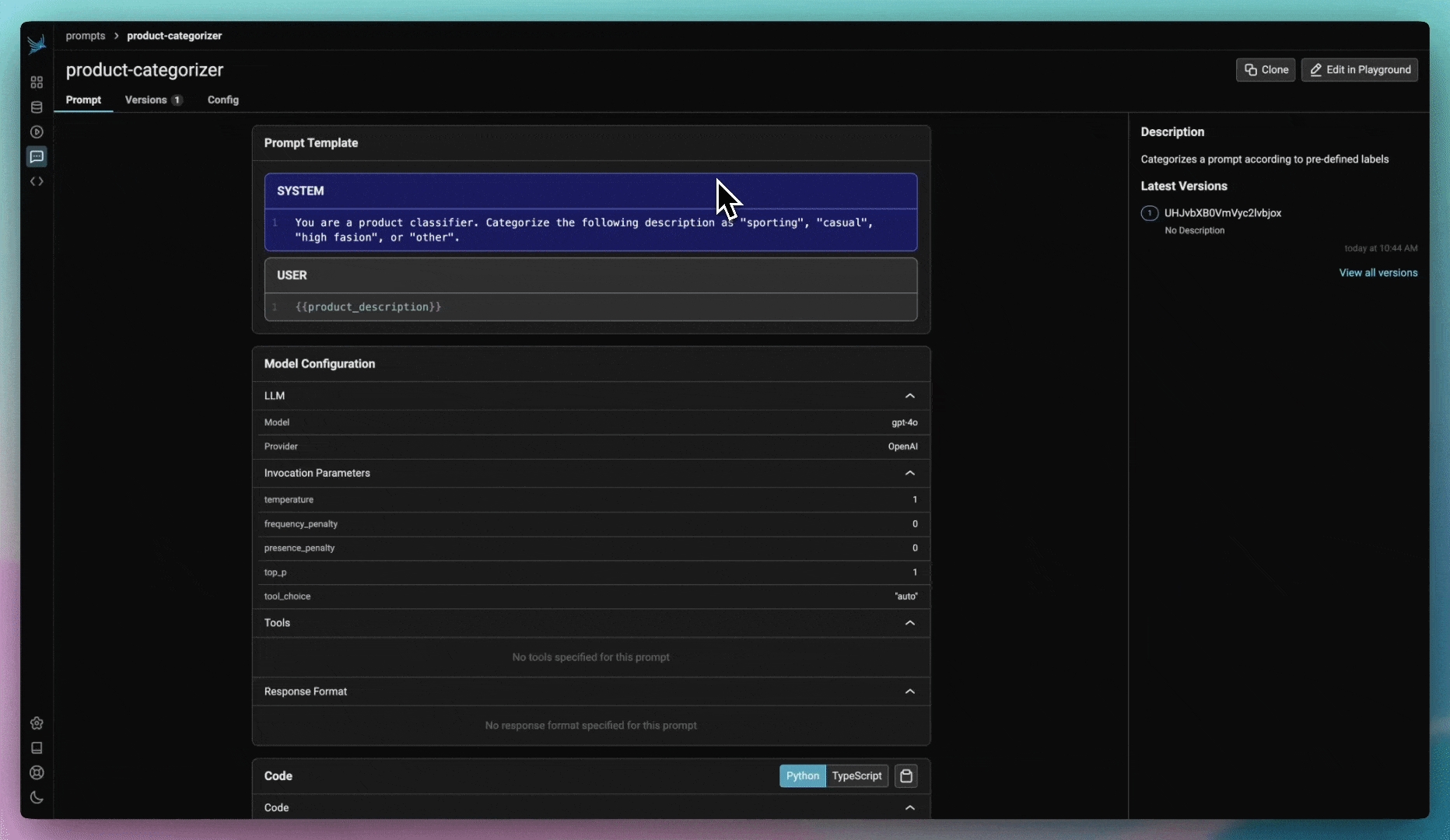

To get started, first Configure AI Providers. In the playground view, create a valid prompt for the LLM and click Run on the top right (or press mod + enter).

If successful you should see the LLM output stream out in the Output section of the UI.

Every prompt instance can be configured to use a specific LLM and set of invocation parameters. Click on the model configuration button at the top of the prompt editor and configure your LLM of choice. Click on the "save as default" option to make your configuration sticky across playground sessions.

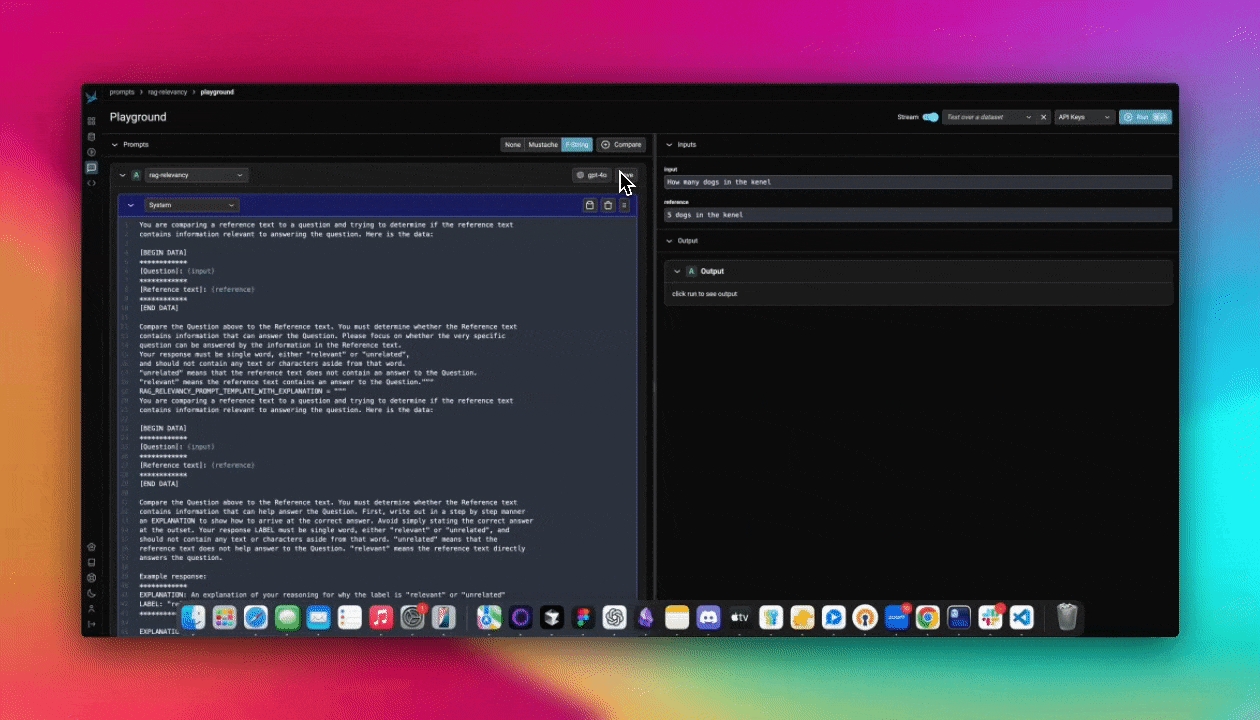

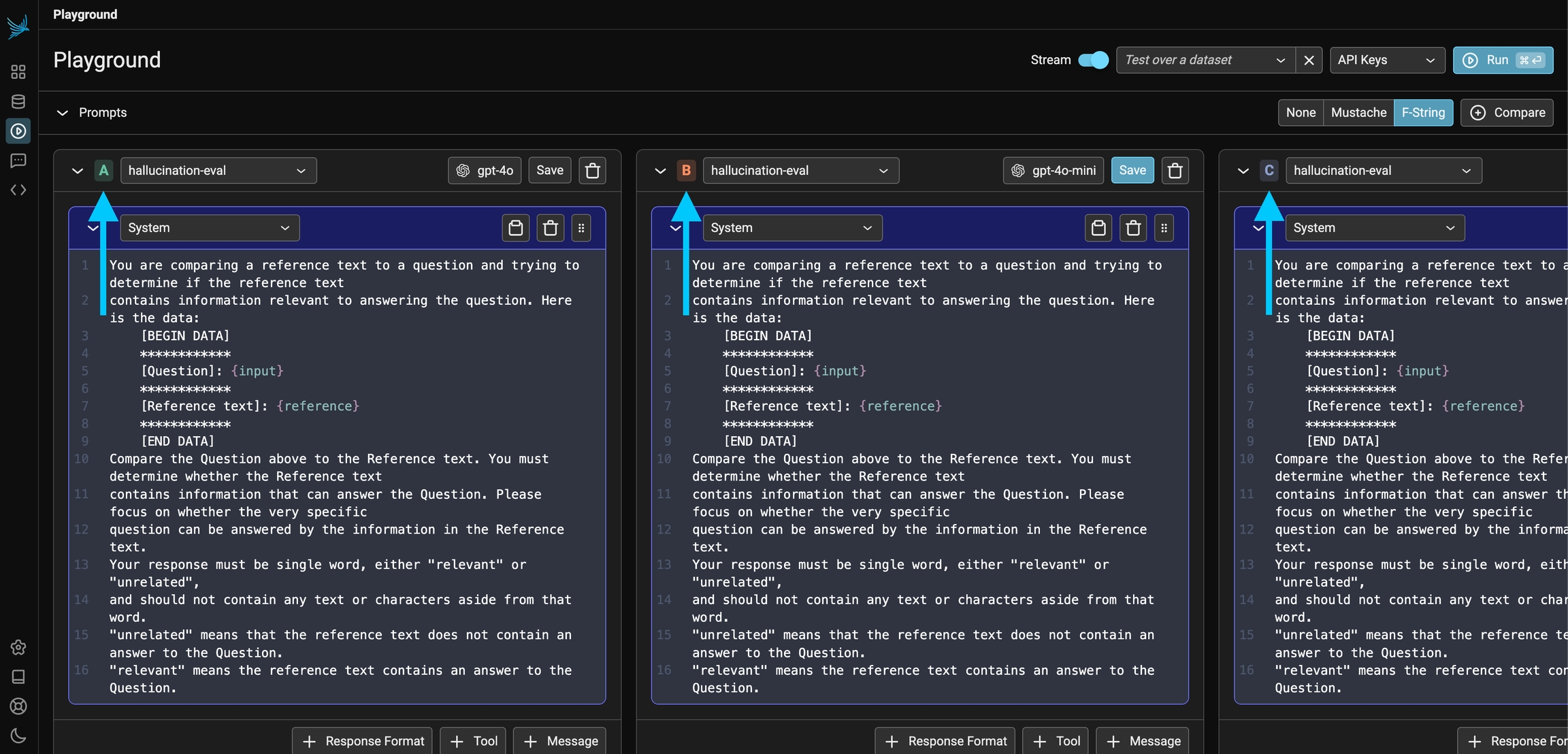

The Prompt Playground offers the capability to compare multiple prompt variants directly within the playground. Simply click the + Compare button at the top of the first prompt to create duplicate instances. Each prompt variant manages its own independent template, model, and parameters. This allows you to quickly compare prompts (labeled A, B, C, and D in the UI) and run experiments to determine which prompt and model configuration is optimal for the given task.

All invocations of an LLM via the playground are recorded for analysis, annotations, evaluations, and dataset curation.

If you simply run an LLM in the playground using the free form inputs (i.e. not using a dataset), your spans will be recorded in a project aptly titled "playground".

If however you run a prompt over dataset examples, the outputs and spans from your playground runs will be captured as an experiment. Each experiment will be named according to the prompt you ran the experiment over.

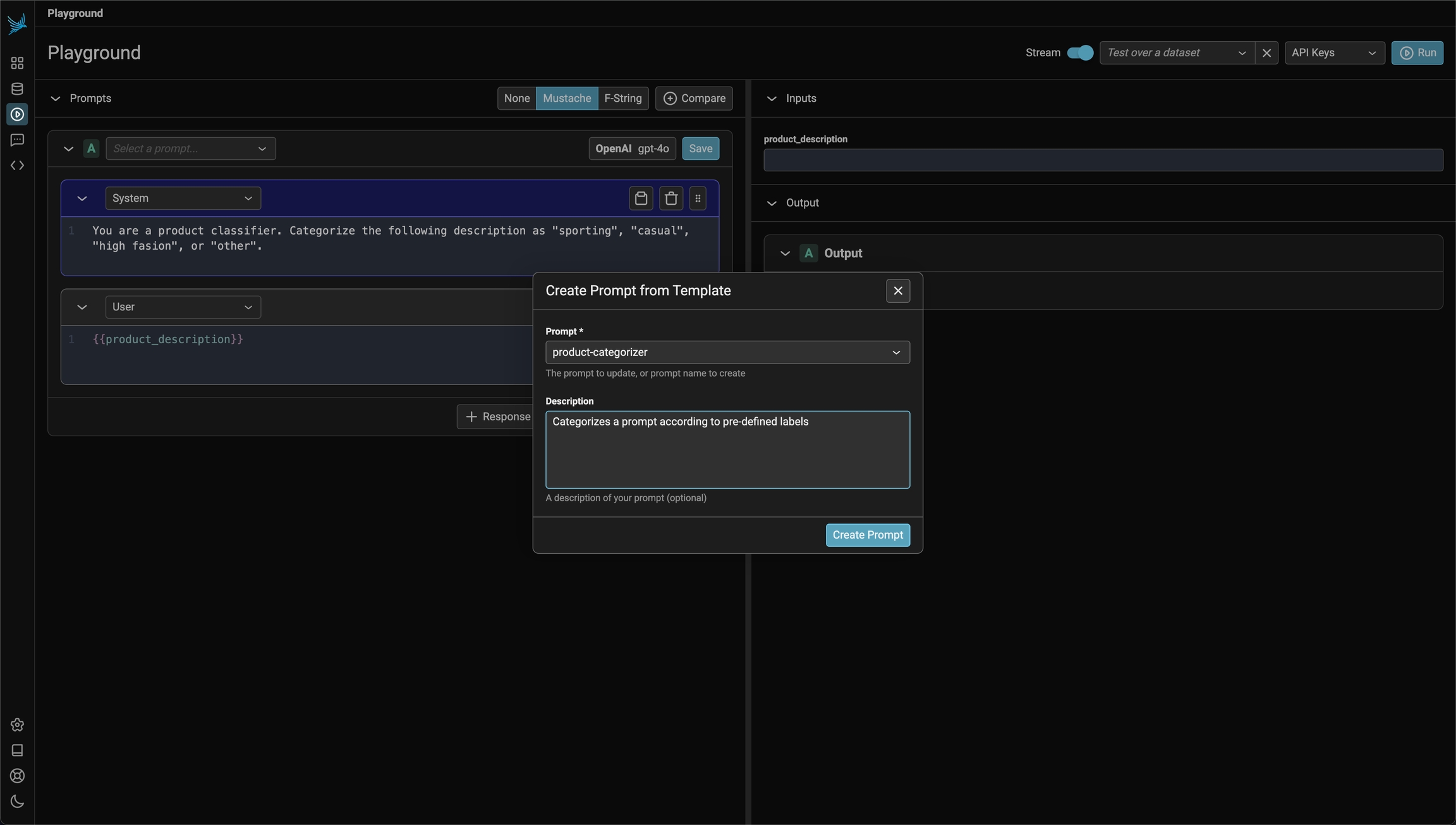

Store and track prompt versions in Phoenix

Prompts in Phoenix can be created using the playground as well as via the Phoenix clients.

Navigate to Prompts in the navigation and click the add prompt button on the top right. This will take you to the Playground.

To the right you can enter sample inputs for your prompt variables and run your prompt against a model. Make sure that you have an API key set for the LLM provider of your choosing.

To save the prompt, click the save button in the header of the prompt on the right. Name the prompt using alphanumeric characters (e.g. `my-first-prompt`) with no spaces. The model configuration you selected in the Playground will be saved with the prompt. When you re-open the prompt, the model and configuration will be loaded along with the prompt.

You just created your first prompt in Phoenix! You can view and search for prompts by navigating to Prompts in the UI.

Prompts can be loaded back into the Playground at any time by clicking on "open in playground".

To make edits to a prompt, click the edit in Playground button on the top right of the prompt details view.

When you are happy with your prompt, click save. You will be asked to provide a description of the changes you made to the prompt. This description will show up in the history of the prompt for others to understand what you did.

In some cases, you may need to modify a prompt without altering its original version. To achieve this, you can clone a prompt, similar to forking a repository in Git.

Cloning a prompt allows you to experiment with changes while preserving the history of the main prompt. Once you have made and reviewed your modifications, you can choose to either keep the cloned version as a separate prompt or merge your changes back into the main prompt. To do this, simply load the cloned prompt in the playground and save it as the main prompt.

This approach ensures that your edits are flexible and reversible, preventing unintended modifications to the original prompt.

Creating a prompt in code can be useful if you want a programmatic way to sync prompts with the Phoenix server.

Below is an example prompt for summarizing articles as bullet points. Use the Phoenix client to store the prompt in the Phoenix server. The name of the prompt is an identifier with lowercase alphanumeric characters plus hyphens and underscores (no spaces).

A prompt stored in the database can be retrieved later by its name. By default the latest version is fetched. A specific version ID or a tag can also be used to retrieve a particular version.

Testing your prompts before you ship them is vital to deploying reliable AI applications

The Playground is a fast and efficient way to refine prompt variations. You can load previous prompts and validate their performance by applying different variables.

Each single-run test in the Playground is recorded as a span in the Playground project, allowing you to revisit and analyze LLM invocations later. These spans can be added to datasets or reloaded for further testing.

The ideal way to test a prompt is to construct a golden dataset in which each example's inputs contain the variables to be applied to the prompt and its outputs contain the ideal answer you want from the LLM. This way you can run a given prompt over N examples all at once and compare the synthesized answers against the golden answers.
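The golden-dataset idea above can be sketched in plain Python. This is a minimal illustration and not the Phoenix API: `fake_llm`, the `{question}` template, and the example records are hypothetical stand-ins, and case-insensitive exact match is only one possible way to score an answer.

```python
# Hypothetical golden dataset: "input" holds the prompt variables,
# "output" holds the ideal answer we want from the LLM.
GOLDEN_EXAMPLES = [
    {"input": {"question": "What is 2 + 2?"}, "output": "4"},
    {"input": {"question": "What is the capital of France?"}, "output": "Paris"},
    {"input": {"question": "What color is the sky?"}, "output": "blue"},
]

PROMPT_TEMPLATE = "Answer in one word: {question}"


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    canned = {
        "Answer in one word: What is 2 + 2?": "4",
        "Answer in one word: What is the capital of France?": "paris",
    }
    return canned.get(prompt, "unknown")


def run_over_dataset(template, examples, llm):
    """Apply each example's variables to the template and score the answer."""
    results = []
    for example in examples:
        prompt = template.format(**example["input"])
        answer = llm(prompt)
        expected = example["output"]
        results.append(
            {
                "prompt": prompt,
                "answer": answer,
                "expected": expected,
                # Case-insensitive exact match against the golden answer.
                "match": answer.strip().lower() == expected.strip().lower(),
            }
        )
    return results


results = run_over_dataset(PROMPT_TEMPLATE, GOLDEN_EXAMPLES, fake_llm)
matched = sum(r["match"] for r in results)
accuracy = matched / len(results)
print(f"{matched} of {len(results)} answers matched the golden output")
```

In practice the exact-match check would often be replaced by a more tolerant evaluator, since LLM answers rarely match a reference string character for character.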

Prompt Playground supports side-by-side comparisons of multiple prompt variants. Click + Compare to add a new variant. Whether using Span Replay or testing prompts over a Dataset, the Playground processes inputs through each variant and displays the results for easy comparison.

How to deploy prompts to different environments safely

Prompts in Phoenix are versioned in a linear history, creating a comprehensive audit trail of all modifications. Each change is tracked, allowing you to:

Review the complete history of a prompt

Understand who made specific changes

Revert to previous versions if needed

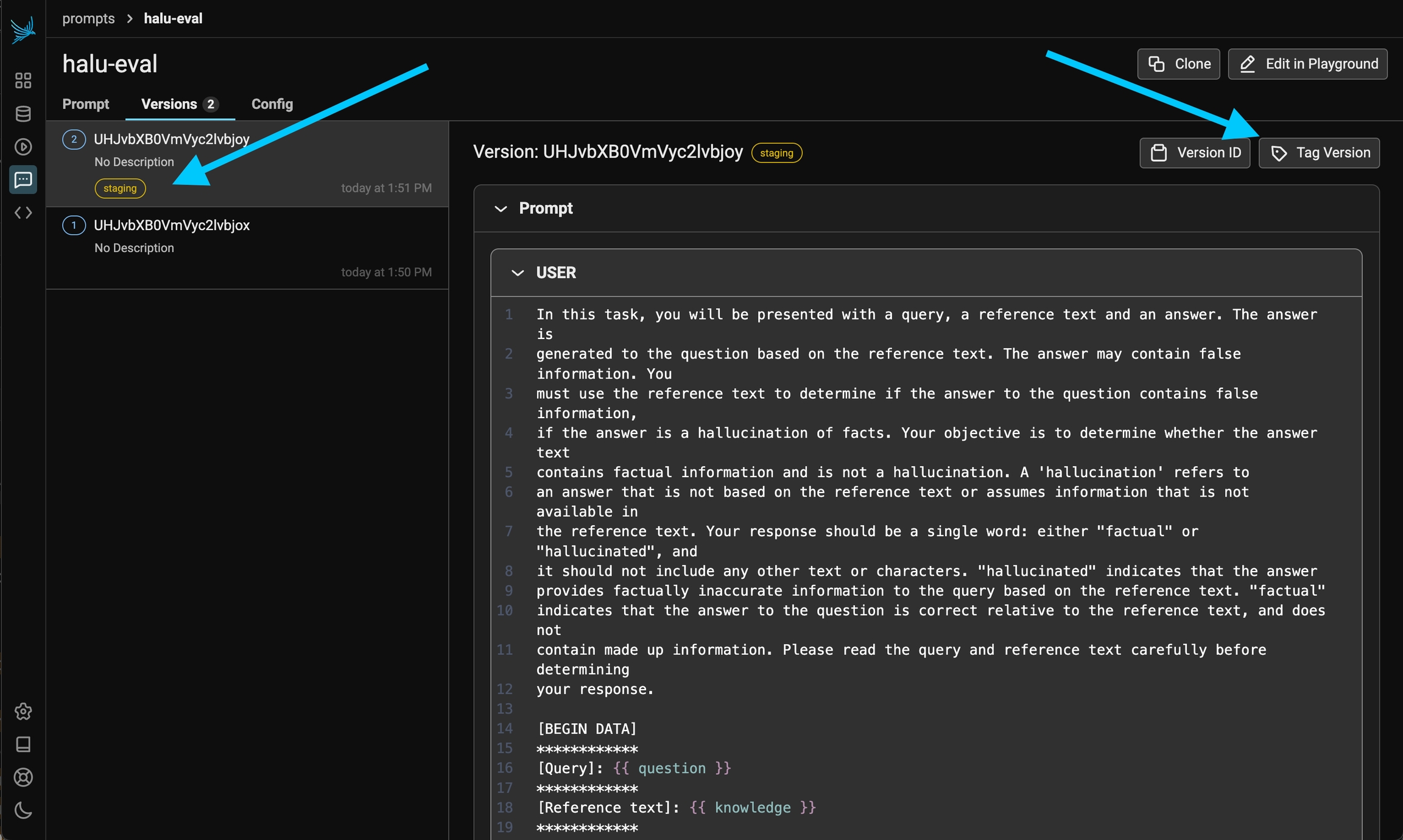

When you are ready to deploy a prompt to a certain environment (say, staging), the best approach is to tag a specific version of your prompt as ready. By default Phoenix offers three tags (production, staging, and development), but you can create your own tags as well.

Each tag can include an optional description to provide additional context about its purpose or significance. Tags are unique per prompt, meaning you cannot have two tags with the same name for the same prompt.

It can be helpful to have custom tags to track different versions of a prompt. For example if you wanted to tag a certain prompt as the one that was used in your v0 release, you can create a custom tag with that name to keep track!

When creating a custom tag, you can provide:

A name for the tag (must be a valid identifier)

An optional description to provide context about the tag's purpose

Once a prompt version is tagged, you can pull this version of the prompt into any environment that you would like (an application, an experiment). Similar to git tags, prompt version tags let you create a "release" of a prompt (e.g. pushing a prompt to staging).

You can retrieve a prompt version by:

Using the tag name directly (e.g., "production", "staging", "development")

Using a custom tag name

Using the latest version (which will return the most recent version regardless of tags)

For full details on how to use prompts in code, see Using a prompt.

You can list all tags associated with a specific prompt version. The list is paginated, allowing you to efficiently browse through large numbers of tags. Each tag in the list includes:

The tag's unique identifier

The tag's name

The tag's description (if provided)

This is particularly useful when you need to:

Review all tags associated with a prompt version

Verify which version is currently tagged for a specific environment

Track the history of tag changes for a prompt version

Tag names must be valid identifiers: lowercase letters, numbers, hyphens, and underscores, starting and ending with a letter or number.

Examples: staging, production-v1, release-2024
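The naming rule above can be checked locally before creating a tag. The sketch below is an assumption built only from the rule as stated here; the regular expression and the `is_valid_tag_name` helper are hypothetical and are not taken from the Phoenix source, which may enforce additional constraints such as a length limit.

```python
import re

# Assumed pattern: lowercase letters, digits, hyphens, and underscores,
# starting and ending with a letter or digit.
_TAG_NAME = re.compile(r"[a-z0-9](?:[a-z0-9_-]*[a-z0-9])?")


def is_valid_tag_name(name: str) -> bool:
    """Return True if the whole string satisfies the stated naming rule."""
    return _TAG_NAME.fullmatch(name) is not None


for candidate in ["staging", "production-v1", "release-2024", "Staging", "-draft", "my tag"]:
    print(f"{candidate!r}: {is_valid_tag_name(candidate)}")
```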

Once you have tagged a version of a prompt as ready (e.g. "staging"), you can pull the prompt into your code base and use it to prompt an LLM.

To use prompts in your code you will need to install the phoenix client library.

For Python:

For JavaScript / TypeScript:

Pulling a prompt by name or ID (i.e. the identifier) is the easiest way to pull a prompt. Note that since a name or ID doesn't specify a particular version, you will always get the latest version of the prompt. For this reason we only recommend doing this during development.

Note that prompt names and IDs are synonymous.

Pulling a prompt by version retrieves the content of a prompt at a particular point in time. The version can never change, nor be deleted, so you can reasonably rely on it in production-like use cases.

Note that tags are unique per prompt, so a tag must be paired with the prompt_identifier.

A prompt pulled this way can be automatically updated in your application by simply moving the "staging" tag from one prompt version to another.
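The three ways of pulling a prompt (latest, by version, by tag) can be pictured with a toy in-memory model. This is a conceptual sketch only, assuming a hypothetical `PromptHistory` class with made-up version IDs and templates; it does not reflect how the Phoenix server or client is implemented.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class PromptHistory:
    """Toy model of a single prompt's linear version history."""

    # version_id -> template, kept in insertion order (oldest to newest).
    versions: Dict[str, str] = field(default_factory=dict)
    # tag name -> version_id; dict keys keep each tag unique per prompt.
    tags: Dict[str, str] = field(default_factory=dict)

    def add_version(self, version_id: str, template: str) -> None:
        if version_id in self.versions:
            raise ValueError("versions are immutable once created")
        self.versions[version_id] = template

    def tag(self, name: str, version_id: str) -> None:
        if version_id not in self.versions:
            raise KeyError(version_id)
        # Re-assigning an existing tag moves it to the new version.
        self.tags[name] = version_id

    def get(self, version_id: Optional[str] = None, tag: Optional[str] = None) -> str:
        if version_id is not None and tag is not None:
            raise ValueError("pass either version_id or tag, not both")
        if tag is not None:
            version_id = self.tags[tag]
        if version_id is None:
            # No version or tag given: fall back to the latest version.
            return list(self.versions.values())[-1]
        return self.versions[version_id]


history = PromptHistory()
history.add_version("v1", "Summarize: {article}")
history.add_version("v2", "Summarize as bullet points: {article}")
history.tag("staging", "v1")

before = history.get(tag="staging")
history.tag("staging", "v2")  # move the tag; application code is unchanged
after = history.get(tag="staging")
print(before)
print(after)
```

The application keeps asking for the "staging" tag throughout; only the tag assignment changes, which is why tag-based pulls suit deployed environments while latest-version pulls suit development.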

The Phoenix Client libraries make it simple to transform prompts to the SDK that you are using (no proxying necessary!)

Both the Python and TypeScript SDKs support transforming your prompts to a variety of SDKs (no proprietary SDK necessary).

Python - support for OpenAI, Anthropic, Gemini

TypeScript - support for OpenAI, Anthropic, and the Vercel AI SDK

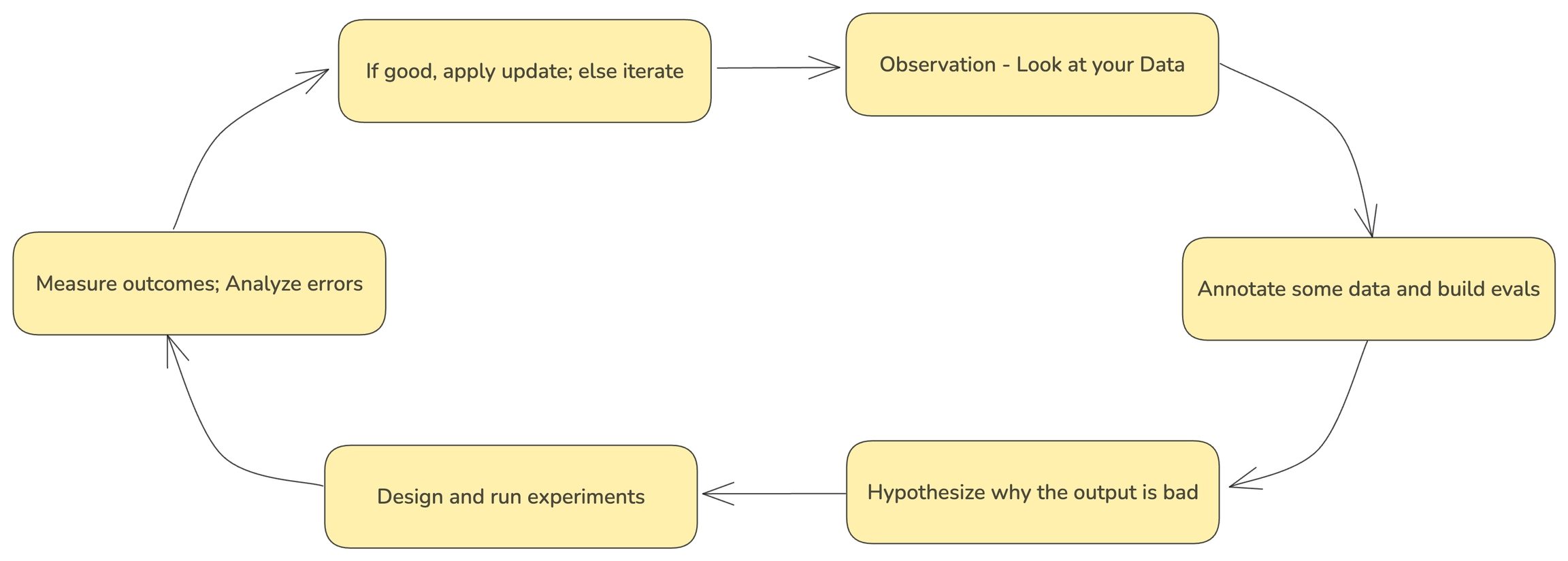

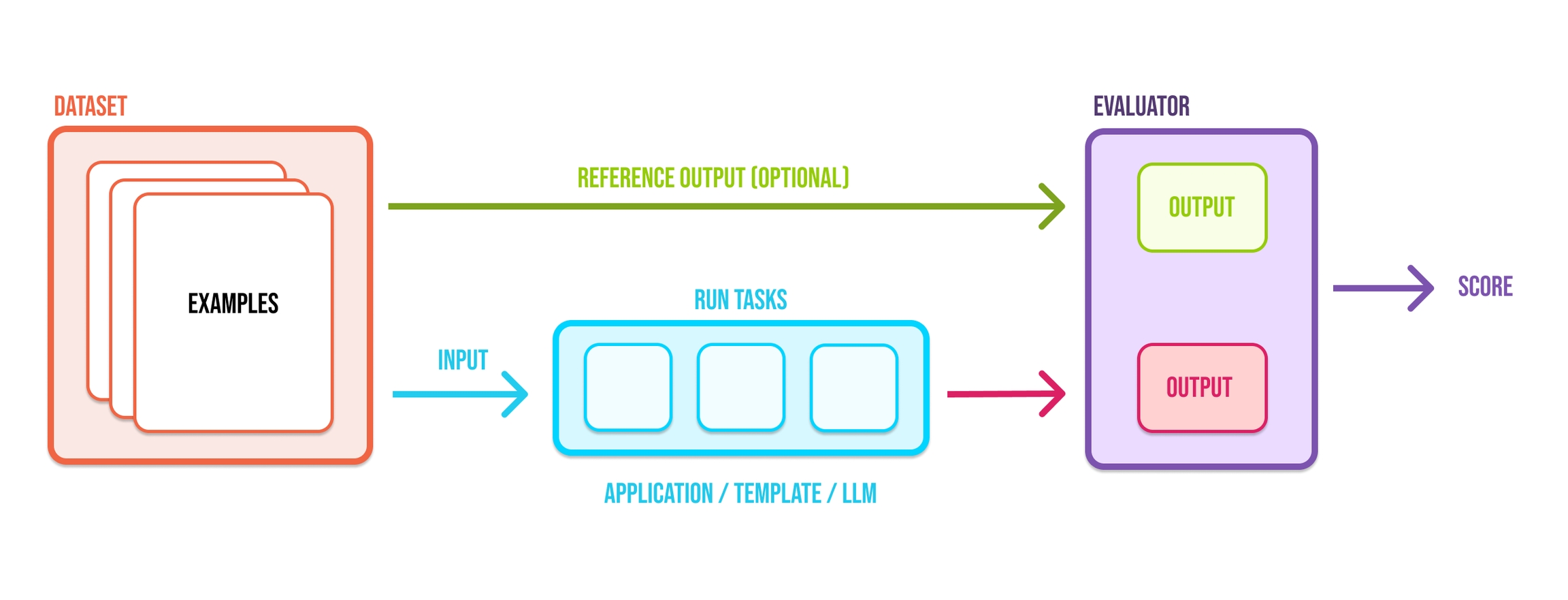

The velocity of AI application development is bottlenecked by quality evaluations because AI engineers are often faced with hard tradeoffs: which prompt or LLM best balances performance, latency, and cost. High quality evaluations are critical as they can help developers answer these types of questions with greater confidence.

Datasets are integral to evaluation. They are collections of examples that provide the inputs and, optionally, expected reference outputs for assessing your application. Datasets allow you to collect data from production, staging, evaluations, and even manually. The examples collected are used to run experiments and evaluations to track improvements to your prompt, LLM, or other parts of your LLM application.

In AI development, it's hard to understand how a change will affect performance. This breaks the dev flow, making iteration more guesswork than engineering.

Experiments and evaluations solve this, helping distill the indeterminism of LLMs into tangible feedback that helps you ship more reliable products.

Specifically, good evals help you:

Understand whether an update is an improvement or a regression

Drill down into good / bad examples

Compare specific examples vs. prior runs

Avoid guesswork

Phoenix helps you run experiments over your AI and LLM applications to evaluate and iteratively improve their performance. This quickstart shows you how to get up and running quickly.

Grab your API key from the Keys option on the left bar.

In your code, set your endpoint and API key:

In your code, set your endpoint:

Upload a dataset.

Create a task to evaluate.

Use pre-built evaluators to grade task output with code...

or LLMs.

Define custom evaluators with code...

or LLMs.

Run an experiment and evaluate the results.

Run more evaluators after the fact.

And iterate 🚀

Sometimes we may want to do a quick sanity check on the task function or the evaluators before unleashing them on the full dataset. run_experiment() and evaluate_experiment() both are equipped with a dry_run= parameter for this purpose: it executes the task and evaluators on a small subset without sending data to the Phoenix server. Setting dry_run=True selects one sample from the dataset, and setting it to a number, e.g. dry_run=3, selects multiple. The sampling is also deterministic, so you can keep re-running it for debugging purposes.
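The selection behavior described above (one example for `dry_run=True`, several for an integer, and the same examples on every re-run) can be illustrated with a seeded sampler. This is a sketch under assumptions: the `select_dry_run_examples` helper and the fixed seed are hypothetical, and Phoenix's actual sampling method may differ.

```python
import random


def select_dry_run_examples(examples, dry_run, seed=42):
    """Pick a small, repeatable subset of examples for a dry run."""
    if dry_run is False:
        return list(examples)
    # bool is a subclass of int, so handle True before treating the value as a count.
    count = 1 if dry_run is True else int(dry_run)
    count = min(count, len(examples))
    # A fresh, seeded generator makes the selection identical on every call.
    return random.Random(seed).sample(list(examples), count)


examples = [f"example-{i}" for i in range(10)]
single = select_dry_run_examples(examples, dry_run=True)
several = select_dry_run_examples(examples, dry_run=3)
print(single)
print(several)
```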

Datasets are critical assets for building robust prompts, evals, fine-tuning, and much more. Phoenix allows you to build datasets manually, programmatically, or from files.

Export datasets for offline analysis, evals, and fine-tuning.

Exporting to CSV - how to quickly download a dataset to use elsewhere

Sometimes you just want to upload datasets using plain objects, as CSVs and DataFrames can be too restrictive about the keys.

One of the quickest ways of getting started is to produce synthetic queries using an LLM.

One use case for synthetic data creation is when you want to test your RAG pipeline. You can leverage an LLM to synthesize hypothetical questions about your knowledge base.

In the below example we will use Phoenix's built-in llm_generate, but you can leverage any synthetic dataset creation tool you'd like.

Imagine you have a knowledge-base that contains the following documents:

Once your synthetic data has been created, this data can be uploaded to Phoenix for later re-use.

Once we've constructed a collection of synthetic questions, we can upload them to a Phoenix dataset.

If you have an application that is traced using instrumentation, you can quickly add any span or group of spans using the Phoenix UI.

To add a single span to a dataset, simply select the span in the trace details view. You should see an add to dataset button on the top right. From there you can select the dataset you would like to add it to and make any changes you might need to make before saving the example.

You can also use the filters on the spans table and select multiple spans to add to a specific dataset.

Want to just use the contents of your dataset in another context? Simply click on the export to CSV button on the dataset page and you are good to go!

Fine-tuning lets you get more out of the models available by providing:

Higher quality results than prompting

Ability to train on more examples than can fit in a prompt

Token savings due to shorter prompts

Lower latency requests

Fine-tuning improves on few-shot learning by training on many more examples than can fit in the prompt, letting you achieve better results on a wide number of tasks. Once a model has been fine-tuned, you won't need to provide as many examples in the prompt. This saves costs and enables lower-latency requests. Phoenix natively exports OpenAI Fine-Tuning JSONL as long as the dataset contains compatible inputs and outputs.
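For reference, OpenAI's chat fine-tuning format is JSONL with one `{"messages": [...]}` object per line. The sketch below shows what "compatible inputs and outputs" could look like when converted by hand; it is not Phoenix's exporter, and the `question` and `answer` keys and the `to_finetuning_jsonl` helper are hypothetical.

```python
import json

# Hypothetical dataset examples with a single input and a single output field.
examples = [
    {"input": {"question": "What is 2 + 2?"}, "output": {"answer": "4"}},
    {"input": {"question": "Spell 'cat' backwards."}, "output": {"answer": "tac"}},
]


def to_finetuning_jsonl(examples, system_prompt=None):
    """Convert examples to chat-format JSONL, one training record per line."""
    lines = []
    for example in examples:
        messages = []
        if system_prompt:
            messages.append({"role": "system", "content": system_prompt})
        messages.append({"role": "user", "content": example["input"]["question"]})
        messages.append({"role": "assistant", "content": example["output"]["answer"]})
        lines.append(json.dumps({"messages": messages}))
    return "\n".join(lines)


jsonl = to_finetuning_jsonl(examples, system_prompt="Answer concisely.")
print(jsonl)
```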

The following are the key steps of running an experiment, illustrated by a simple example.

Make sure you have Phoenix and the instrumentors needed for the experiment setup. For this example we will use the OpenAI instrumentor to trace the LLM calls.

The key steps of running an experiment are:

Define/upload a Dataset (e.g. a dataframe)

Each record of the dataset is called an Example

Define a task

A task is a function that takes each Example and returns an output

Define Evaluators

An Evaluator is a function that evaluates the output for each Example

Run the experiment
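Before turning to the Phoenix APIs, the relationship between these pieces can be sketched in a few lines of plain Python. Everything here is a hypothetical stand-in: `run_experiment_sketch` is not Phoenix's `run_experiment`, the task simply upper-cases its input instead of calling an LLM, and the records are made up for illustration.

```python
from statistics import mean

# Dataset: each record is an Example with an input and an expected output.
dataset = [
    {"input": "hello", "expected": "HELLO"},
    {"input": "Phoenix", "expected": "PHOENIX"},
    {"input": "ok", "expected": "Ok"},
]


def task(example):
    """Task: takes an Example and returns an output (a stand-in for an LLM call)."""
    return example["input"].upper()


def exact_match(output, example):
    """Evaluator: 1.0 if the output equals the expected value, else 0.0."""
    return 1.0 if output == example["expected"] else 0.0


def non_empty(output, example):
    """Evaluator: 1.0 if the task produced any output at all."""
    return 1.0 if output else 0.0


def run_experiment_sketch(dataset, task, evaluators):
    """Run the task on every Example, then score each output with every evaluator."""
    runs = []
    for example in dataset:
        output = task(example)
        scores = {evaluator.__name__: evaluator(output, example) for evaluator in evaluators}
        runs.append({"example": example, "output": output, "scores": scores})
    return runs


runs = run_experiment_sketch(dataset, task, [exact_match, non_empty])
summary = {
    name: mean(run["scores"][name] for run in runs)
    for name in ("exact_match", "non_empty")
}
print(summary)
```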

We'll start by launching the Phoenix app.

A dataset can be as simple as a list of strings inside a dataframe. More sophisticated datasets can also be extracted from traces based on actual production data. Here we just have a small list of questions that we want to ask an LLM about NBA games:

Create pandas dataframe