Available in @arizeai/openinference-tanstack-ai 0.1.0+ Phoenix now ships an OpenInference middleware for TanStack AI. PlugDocumentation Index

Fetch the complete documentation index at: https://arizeai-433a7140.mintlify.app/llms.txt

Use this file to discover all available pages before exploring further.

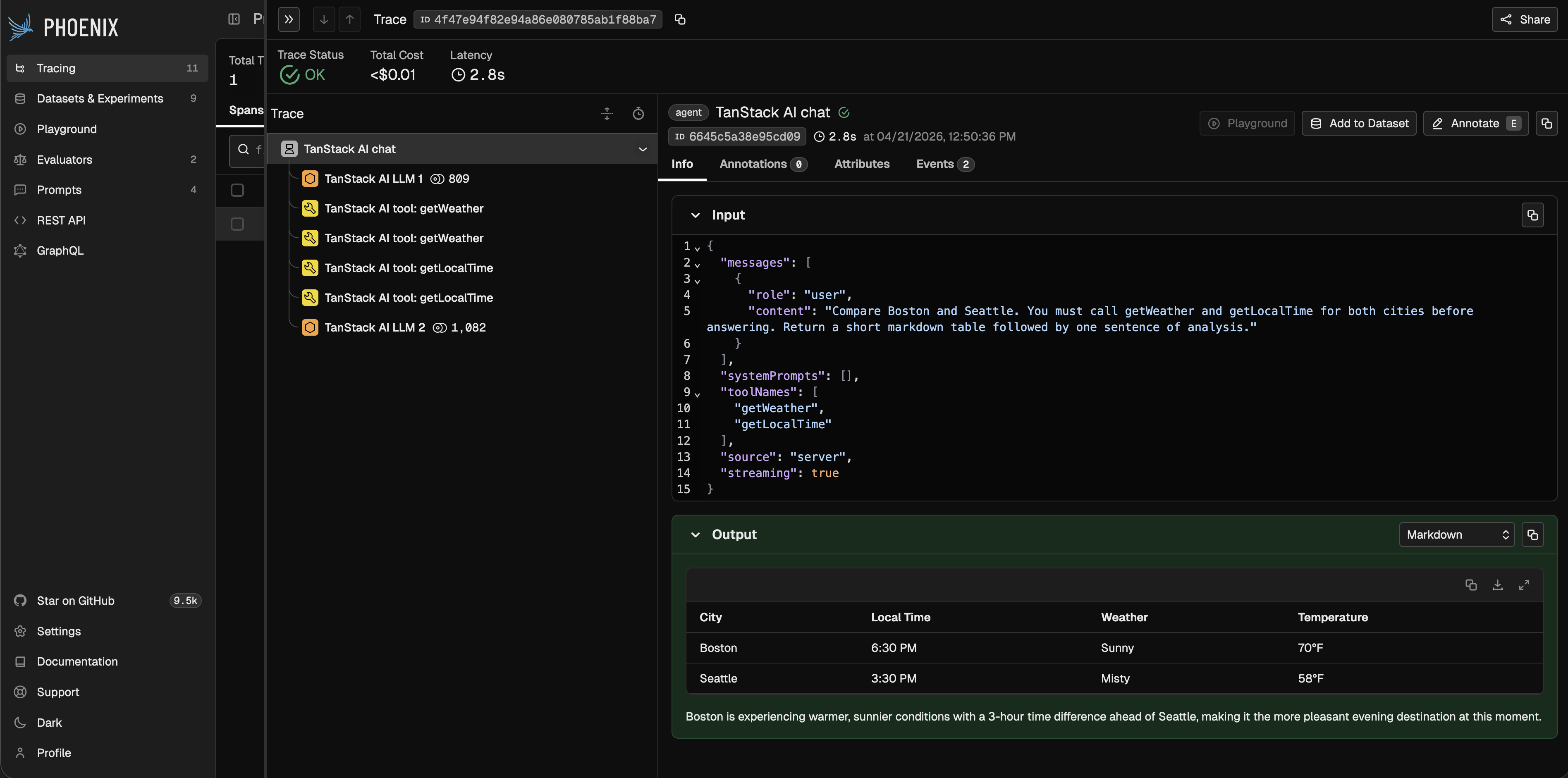

openInferenceMiddleware() into any chat() call to capture an AGENT span for the run, an LLM span for each model turn, and a TOOL span for every executed tool call — across both streaming and non-streaming flows, and across any TanStack AI provider adapter.
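To make the span counts concrete, here is a minimal, self-contained sketch of which spans a single run would be expected to produce. It does not call the real middleware or TanStack AI. The `Turn` and `SpanRecord` types and the `expectedSpans` helper are hypothetical names invented for illustration. Parent/child nesting is deliberately not modelled, since only the span kinds are described here.

```typescript
// Illustrative model only: these types and this helper are assumptions,
// not part of @arizeai/openinference-tanstack-ai or TanStack AI.
type SpanKind = "AGENT" | "LLM" | "TOOL";

interface SpanRecord {
  kind: SpanKind;
  name: string;
}

// One model turn, plus the names of the tools executed as a result of it.
interface Turn {
  model: string;
  toolCalls: string[];
}

// One AGENT span for the run, one LLM span per model turn,
// and one TOOL span per executed tool call.
function expectedSpans(runName: string, turns: Turn[]): SpanRecord[] {
  const spans: SpanRecord[] = [{ kind: "AGENT", name: runName }];
  for (const turn of turns) {
    spans.push({ kind: "LLM", name: turn.model });
    for (const tool of turn.toolCalls) {
      spans.push({ kind: "TOOL", name: tool });
    }
  }
  return spans;
}

// A run where the first turn requests a tool and the second turn answers.
const spans = expectedSpans("weather-chat", [
  { model: "example-model", toolCalls: ["getWeather"] },
  { model: "example-model", toolCalls: [] },
]);

console.log(spans.map((s) => s.kind).join(", "));
// prints "AGENT, LLM, TOOL, LLM"
```

A two-turn run with one tool call therefore yields four spans. The same accounting applies whether the response is streamed or returned in one piece.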

This integration is brand new. If you run into issues or have ideas for improvements, please reach out via the OpenInference repo — we’d love your feedback.

TanStack AI Tracing Docs

Setup, usage, and a tool-calling example.

TanStack AI

Learn more about TanStack AI.