
What’s New

November 2, 2021

Page Titles

Pages now have intuitive titles to help you stay organized while working with multiple tabs!

Enhancements

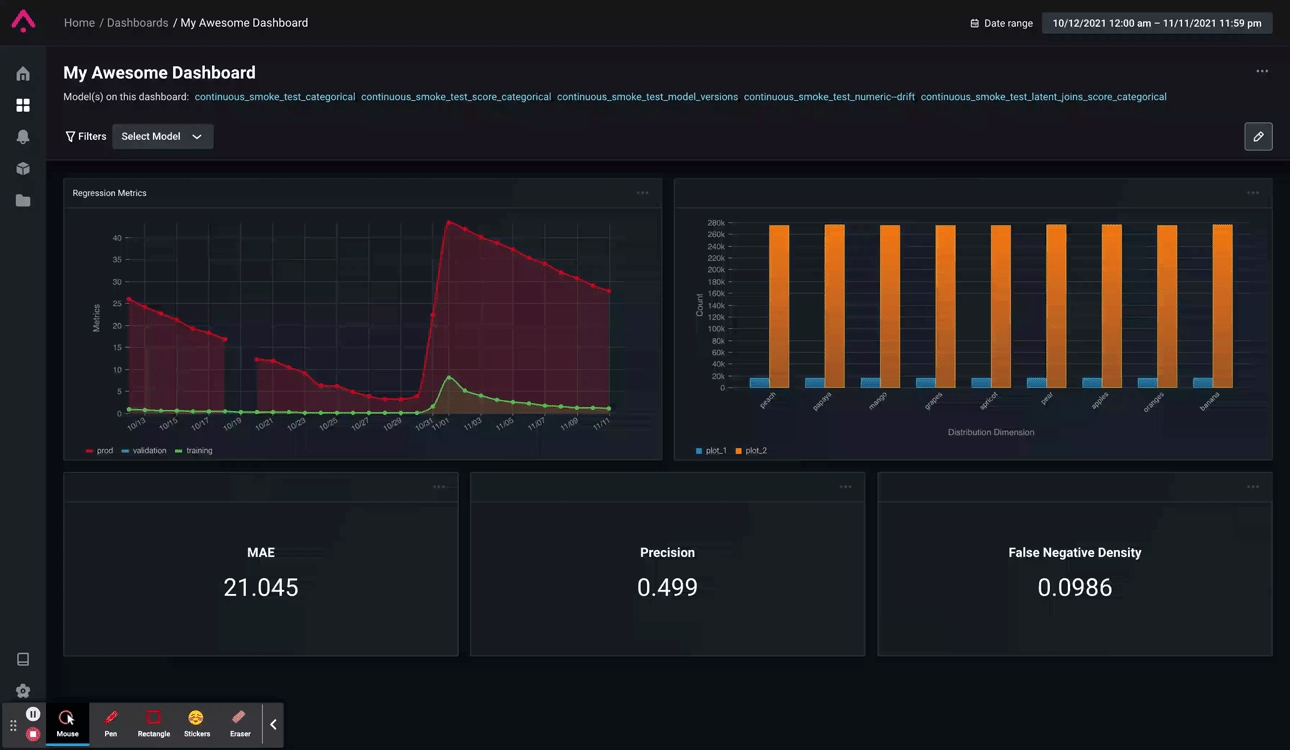

November 18, 2021

Dashboard Copy

Ever had to manually recreate a dashboard with a ton of widgets? Wish there was a way to just clone an existing one? Well, now you can! Every dashboard now has a “Copy dashboard” option in its dropdown menu that clones the dashboard into a new copy.

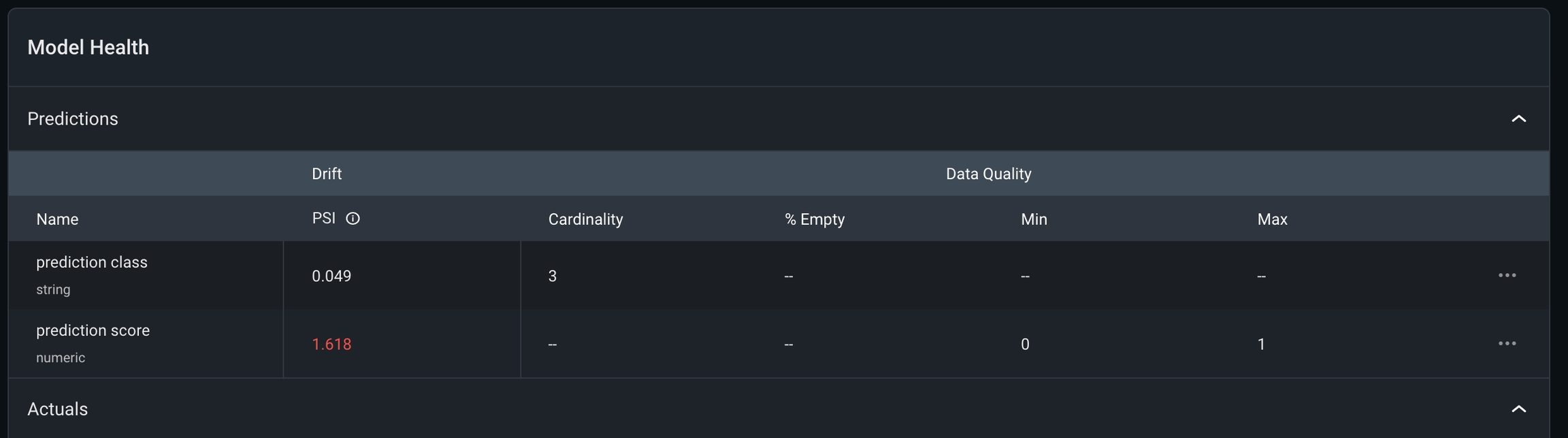

Model Overview Update

We’ve added subheaders to the Model Overview page! With the new **Drift** and **Data Quality** subcategories, users can easily map their monitors to their respective functions for increased clarity.

Python SDK 3.1.3

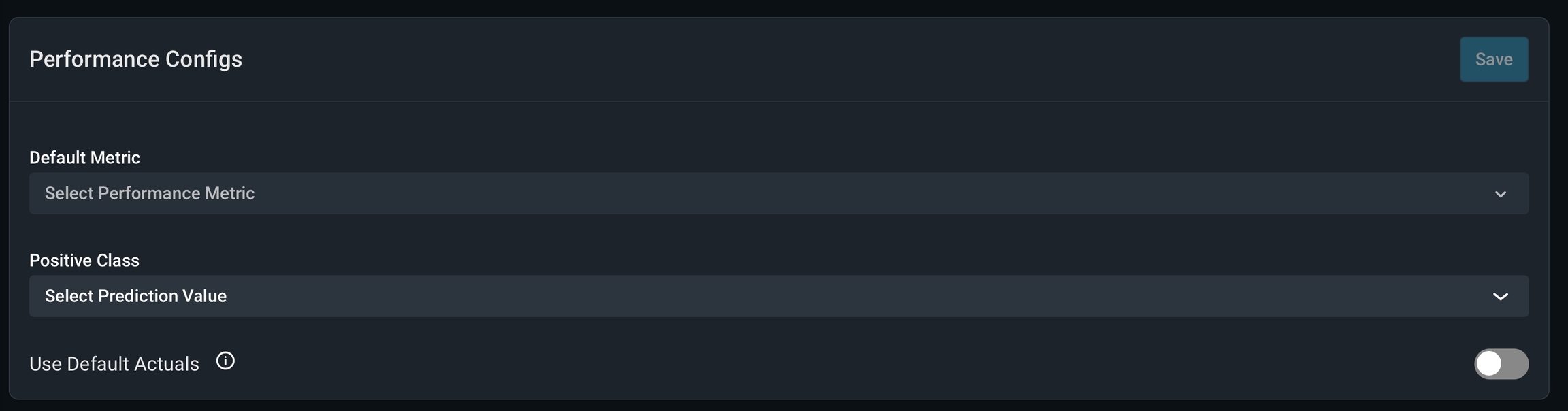

November 2, 2021

Python SDK 3.1.3 adds a new set of client-side validations, options for automatic data cleaning, and an improved troubleshooting workflow for data sent to the Arize platform, making it clearer how to navigate errors in newly ingested data.

Default Actuals

Improved support for models without actuals. With new default actuals, predictions that lack actuals can be treated as having an implicit negative-class actual when calculating certain performance metrics, such as AUC, PR-AUC, and log loss.
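To illustrate what treating missing actuals as an implicit negative class means for a metric, here is a minimal, self-contained sketch. It is not the Arize SDK's API or its internal implementation: the helper names (`apply_default_actuals`, `log_loss`, `roc_auc`) are hypothetical, and only AUC and log loss are shown. Missing actuals are represented as `None` and filled with the negative label `0` before the metrics are computed.

```python
import math
from typing import List, Optional


def apply_default_actuals(actuals: List[Optional[int]], default: int = 0) -> List[int]:
    """Hypothetical helper: replace missing actuals (None) with an implicit negative-class label."""
    return [default if a is None else a for a in actuals]


def log_loss(y_true: List[int], y_prob: List[float], eps: float = 1e-15) -> float:
    """Mean binary cross-entropy; probabilities are clipped to avoid log(0)."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


def roc_auc(y_true: List[int], y_score: List[float]) -> float:
    """AUC as the probability that a random positive outscores a random negative (ties count half)."""
    pos = [s for y, s in zip(y_true, y_score) if y == 1]
    neg = [s for y, s in zip(y_true, y_score) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative actual")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Example: two of the four predictions have no actual yet.
scores = [0.9, 0.8, 0.3, 0.2]
actuals = [1, None, 0, None]
filled = apply_default_actuals(actuals)  # missing actuals become 0 (negative class)
print(filled, roc_auc(filled, scores), log_loss(filled, scores))
```

The design point is that the default is applied before any metric runs, so every prediction contributes to the metric instead of being dropped. A high-scoring prediction without an actual (0.8 above) is counted as a false positive, which is why this option suits models where a missing actual genuinely implies the negative outcome.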

In the News

November 18, 2021

Machine Learning Observability 101 E-Book

Getting a model from research to production is hard. Making sure it works once it’s live is its own challenge. Struggling to resolve complex model issues in a post-COVID environment that often stacks the deck against easy answers? We wrote an e-book on it. **Read more**

Best Practices in ML Observability for Monitoring, Mitigating, and Preventing Fraud - Webinar

Does your organization build and deploy sophisticated ML models to detect fraud, the $5 trillion problem? If so, join Reah Miyara, Arize’s Head of Product and former Google AI lead for algorithms and optimization, in tomorrow’s webinar as he shares best practices in ML observability for fraud models. Watch it.

Feast and Arize Supercharge Feature Management and Model Monitoring for MLOps

Getting machine learning to work in production is not the same as getting it to work in notebooks. Arize AI and Feast have partnered to enhance the ML model lifecycle: Feast’s feature store handles online/offline feature transformation and serving, while Arize’s ML observability platform detects and helps resolve data inconsistencies. Learn more about how you can supercharge feature management and model monitoring for MLOps. Read it.

Continuous Monitoring, Continuous Improvements for ML Models Using Neptune AI and Arize AI

November 2, 2021

We’ve partnered with Neptune AI to help you consistently deploy and maintain higher-quality models in production! Connect Arize’s ML observability platform with Neptune’s metadata store to streamline your MLOps workflow. Learn how to leverage the power of both platforms to enable better MLOps: **Read more**

Best Practices In ML Observability for Monitoring, Mitigating, and Preventing Fraud

Fraud burdens the global economy to the tune of $5 trillion annually, and threats keep evolving and taking new forms. Learn how data science and ML teams can develop an observability strategy to catch, monitor, and troubleshoot problems with their fraud models in production. **Read more**

Arize AI Selected by Gartner as Cool Vendor in Enterprise AI Operationalization and Engineering Report

We’ve been named a Cool Vendor in Enterprise AI Operationalization and Engineering by Gartner, which believes Arize should be on the radar of “enterprises seeking to maximize ROI and have visibility into how the model impacts your business bottom line.” Check out Gartner’s website to get a copy of the full report. Read our release: