What’s New

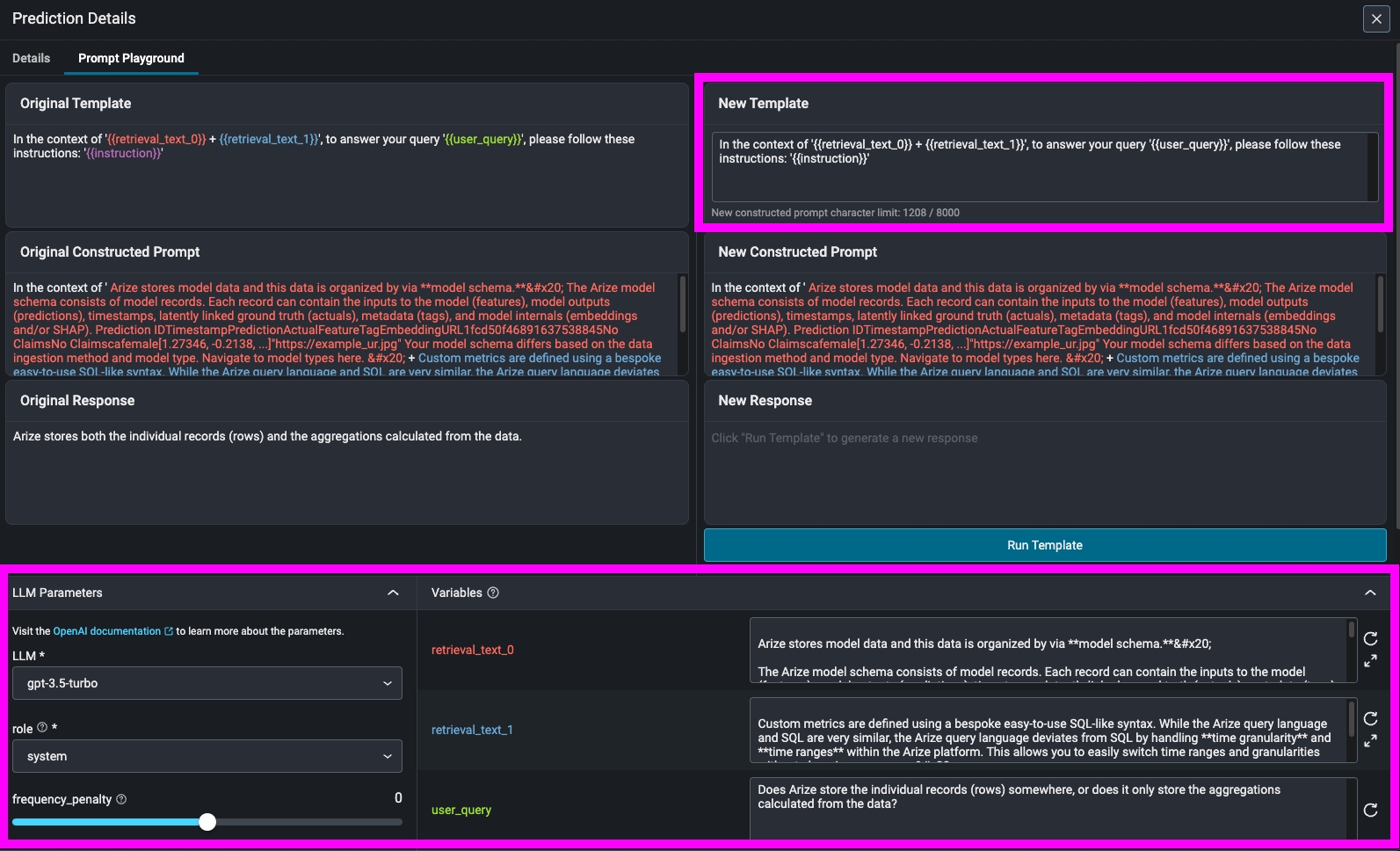

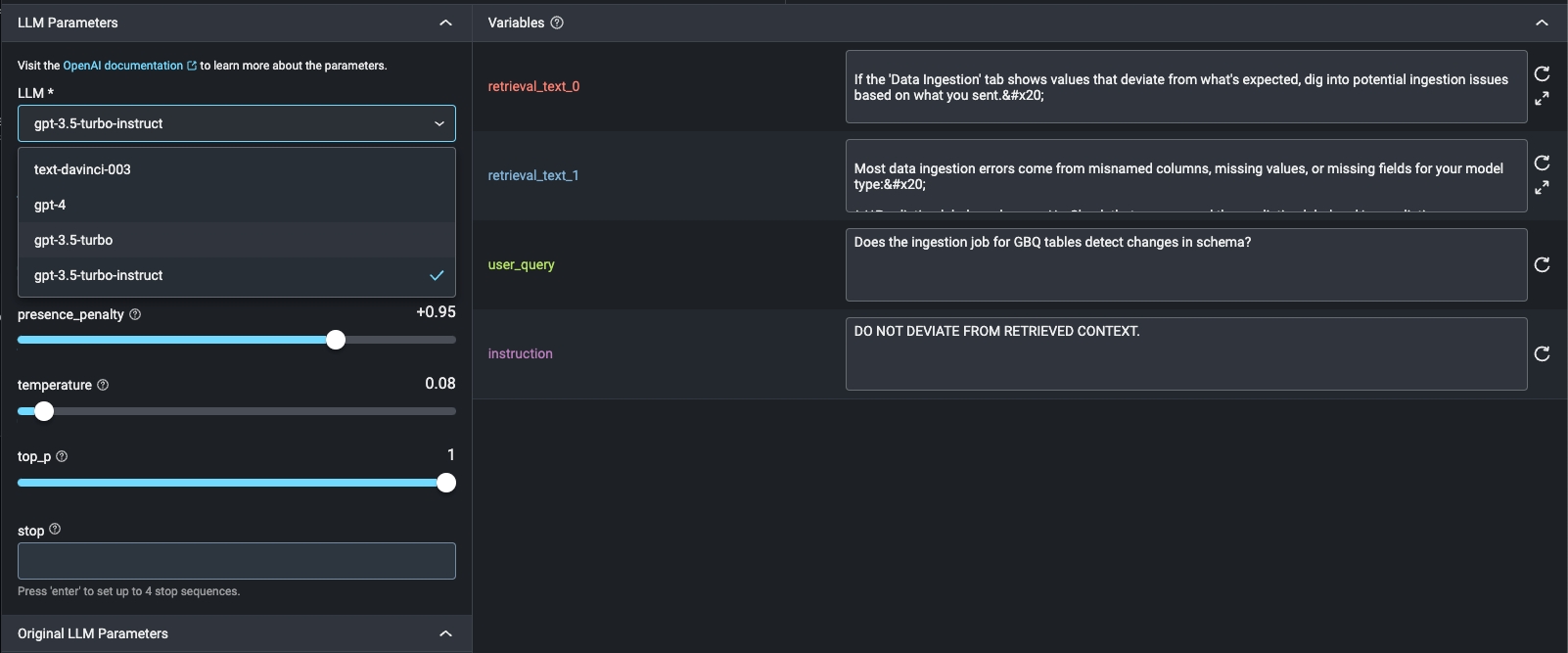

October 11, 2023: Prompt Playground

Uncover poorly performing prompt templates, iterate on them in real time, and verify improved LLM outputs before deployment. Edit the prompt variables, the LLM parameters, or the prompt template itself, then re-run the prompt to compare responses before implementing a new or revised prompt template.

Enhancements

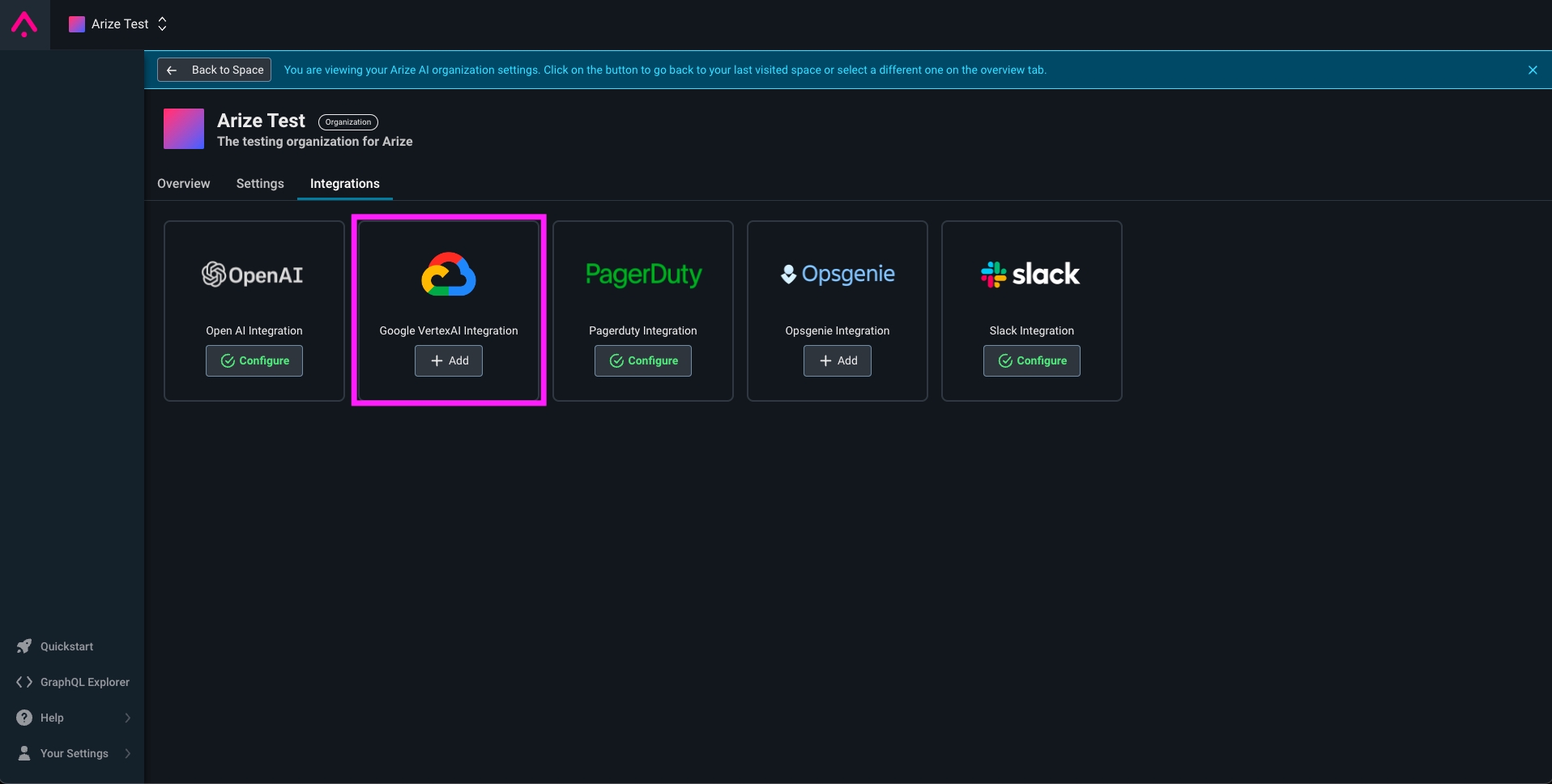

October 26, 2023: VertexAI Integration for Prompt Playground

Users can now iterate on prompts in the Prompt Playground with Google’s models built on PaLM 2. The integration supports editing prompt templates, parameters, and variables in the platform and comparing the resulting responses. Users can also compare LLM providers by comparing prompt runs between OpenAI and VertexAI.

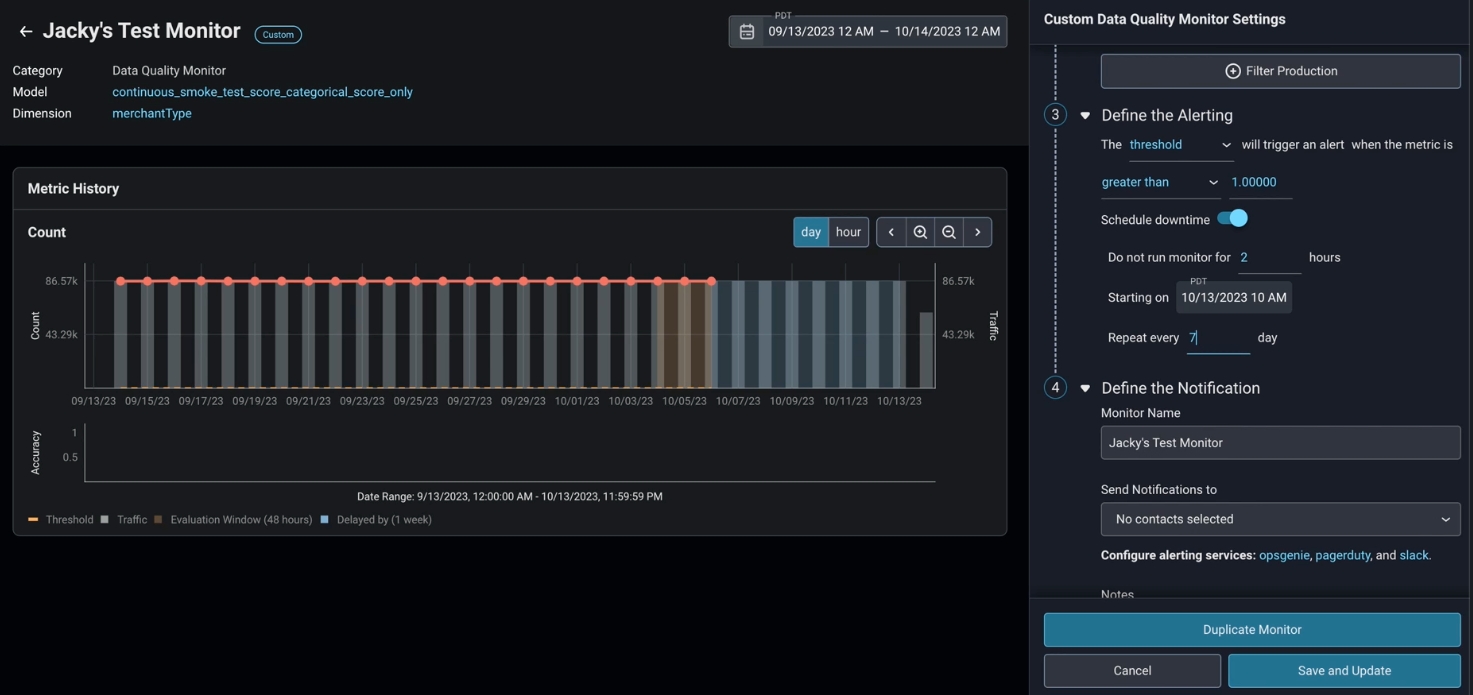

Monitor Downtime

Users can now schedule monitor downtime: a recurring time period during which monitors are not evaluated. Monitor downtime reduces monitor noise caused by periods of unstable or low traffic.
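To make the idea of a recurring downtime period concrete, here is a minimal Python sketch. It is not Arize's implementation or schema: the `DowntimeWindow` class, its fields, and `should_evaluate` are hypothetical names chosen for illustration, and the window is assumed to recur weekly and to start and end on the same day.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class DowntimeWindow:
    """Hypothetical weekly recurring window (illustrative, not Arize's schema)."""
    weekdays: frozenset  # 0 = Monday ... 6 = Sunday
    start: time          # inclusive
    end: time            # exclusive; assumed later than start on the same day

    def covers(self, moment: datetime) -> bool:
        # A moment is in downtime if its weekday and time of day both match.
        return (
            moment.weekday() in self.weekdays
            and self.start <= moment.time() < self.end
        )


def should_evaluate(moment: datetime, windows) -> bool:
    """Return False when the moment falls inside any downtime window."""
    return not any(window.covers(moment) for window in windows)


# Example: skip evaluation on weekend nights, when traffic is assumed to be low.
weekend_nights = DowntimeWindow(frozenset({5, 6}), time(0, 0), time(6, 0))
```

With this window, a Saturday at 03:00 is skipped, while a weekday at the same hour is still evaluated.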

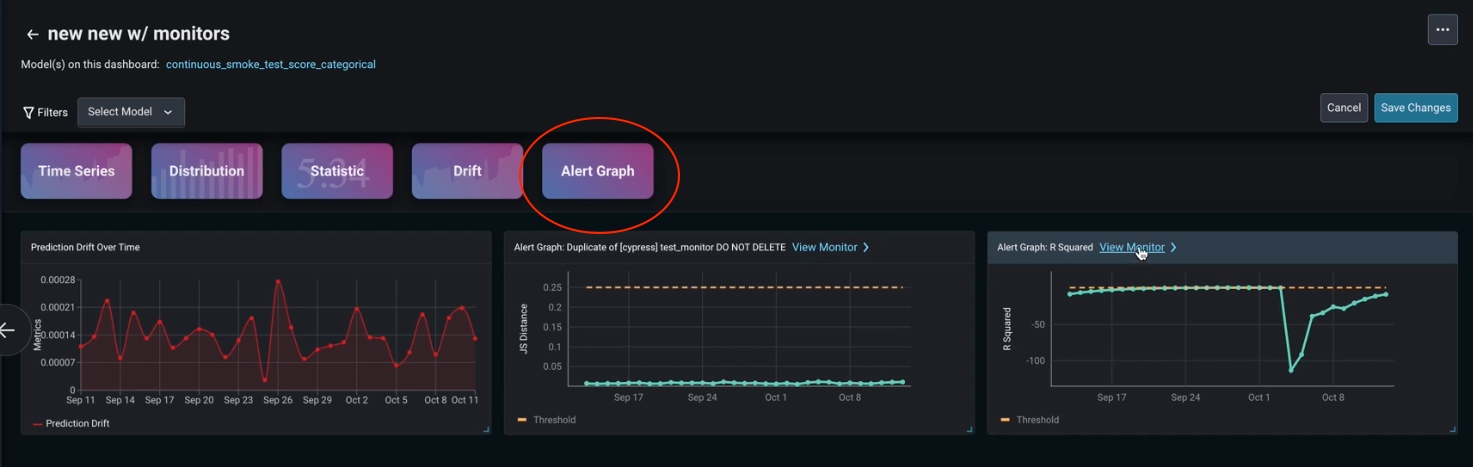

Monitors Widget in Dashboards

“Alert Graph” is a new type of dashboard widget, enabling users to view historical alerting data alongside other key metrics in their dashboard. Simply select “Alert Graph” from the widgets menu.

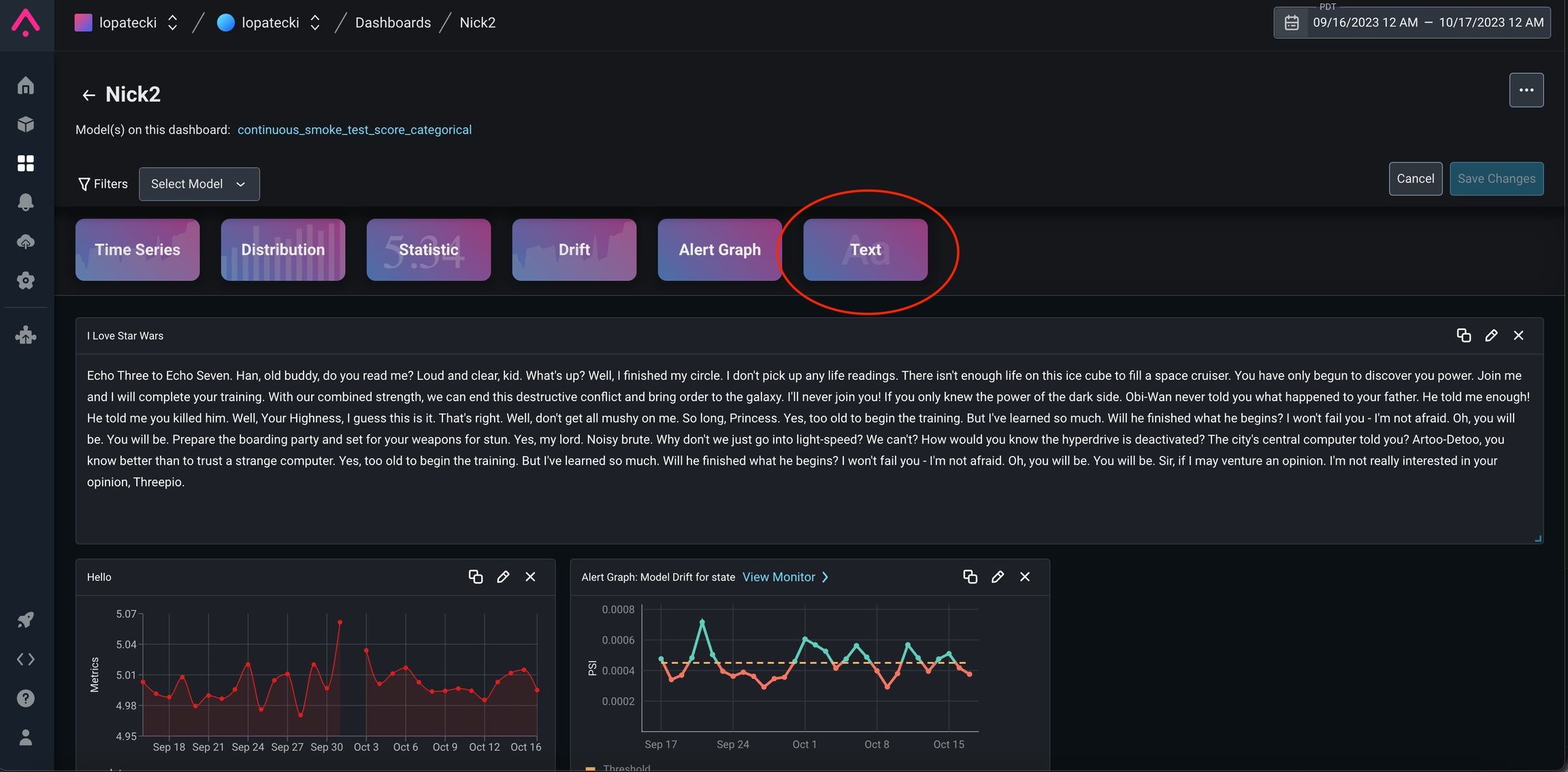

Text Widgets In Dashboards

Text Widgets are now included in dashboards. Users can create text widgets to share notes and observations.

GraphQL Usability Enhancement for Monitors

Users can now use spaceId + modelName to create a monitor in lieu of modelId, which streamlines monitor creation. To use this, replace the modelId field with spaceId (from the URL) and modelName (the same field used to create a file import job).
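The field swap can be sketched in Python as a transformation of the input variables sent with a monitor-creation request. Only the field names `modelId`, `spaceId`, and `modelName` come from the note above; the helper name, the other input fields, and the example values are assumptions for illustration, and the actual mutation is not shown.

```python
def use_space_and_model_name(monitor_input: dict, space_id: str, model_name: str) -> dict:
    """Return a copy of the input with modelId replaced by spaceId + modelName."""
    # Drop modelId and leave every other field untouched.
    updated = {key: value for key, value in monitor_input.items() if key != "modelId"}
    updated["spaceId"] = space_id      # taken from the space URL
    updated["modelName"] = model_name  # same name used for file import jobs
    return updated


# Hypothetical input; "name" and all values are placeholders, not real identifiers.
old_input = {"modelId": "example-model-id", "name": "drift monitor"}
new_input = use_space_and_model_name(old_input, "example-space-id", "fraud-model")
```

The helper returns a new dictionary rather than mutating its argument, so an existing modelId-based input can be kept alongside the new one while migrating.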

Support for gpt-3.5-turbo-instruct

Users can now select gpt-3.5-turbo-instruct as their LLM of choice when using the Prompt Playground or when summarizing embedding clusters.

Interactive Calibration Chart and New Precision Recall (PR) Curve

Users can interact with the calibration chart in the “More Charts” view of Performance Tracing. The chart now includes traffic bars and users have the ability to zoom into certain regions of their data, allowing for more granular insight. In addition, users can now select to view a PR curve for further analysis.
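For readers less familiar with PR curves, the sketch below shows how the points on such a curve are commonly computed: one (precision, recall) pair per decision threshold. It illustrates the general technique in plain Python, not the platform's implementation; the function name and the convention of reporting precision as 1.0 when nothing is predicted positive are assumptions.

```python
def precision_recall_points(labels, scores, thresholds):
    """Return (threshold, precision, recall) for each threshold.

    labels are 0/1 ground truth; scores are predicted probabilities.
    """
    points = []
    for threshold in thresholds:
        predicted = [score >= threshold for score in scores]
        tp = sum(1 for p, y in zip(predicted, labels) if p and y == 1)
        fp = sum(1 for p, y in zip(predicted, labels) if p and y == 0)
        fn = sum(1 for p, y in zip(predicted, labels) if not p and y == 1)
        # Assumed convention: precision is 1.0 when no positives are predicted.
        precision = tp / (tp + fp) if (tp + fp) else 1.0
        recall = tp / (tp + fn) if (tp + fn) else 0.0
        points.append((threshold, precision, recall))
    return points


labels = [1, 0, 1, 1, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.2]
curve = precision_recall_points(labels, scores, [0.3, 0.5, 0.95])
```

Lower thresholds generally trade precision for recall, which is the trade-off the curve makes visible.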

Y-Axis Controls On Dashboards

Users can now customize the Y-axis domain by setting the min and max per widget. Open the edit form of a line or bar chart widget and select the Display tab to set these values. Alternatively, users can choose auto, and the charting library will compute the domain it considers most readable.

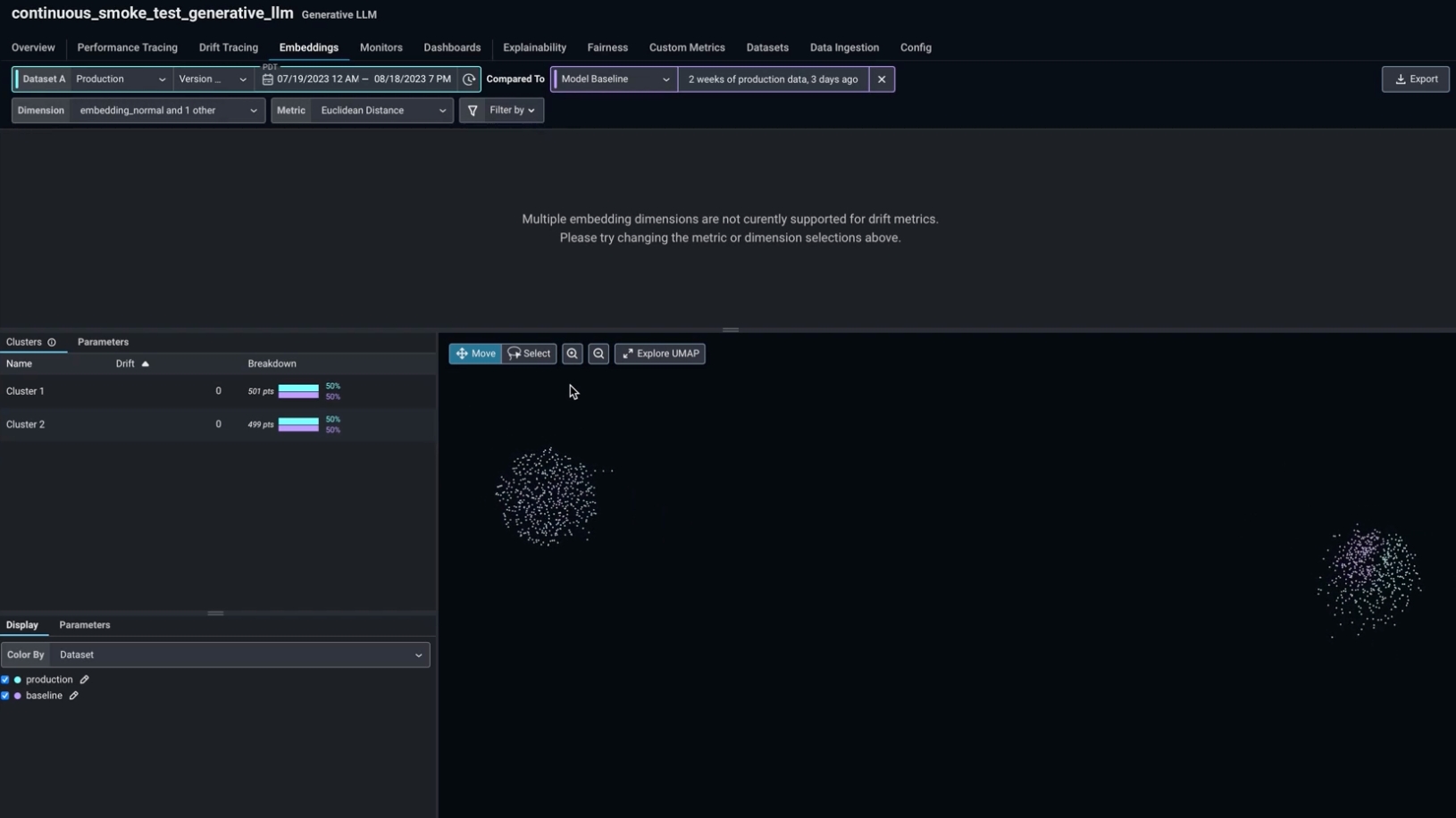

Simultaneous UMAP visualization for multiple embedding features

Visualize your UMAP for multiple embedding features of a multi-input model simultaneously, without needing to increase the number of predictions sent. To view multiple embedding features, select them in the “Dimensions” dropdown.

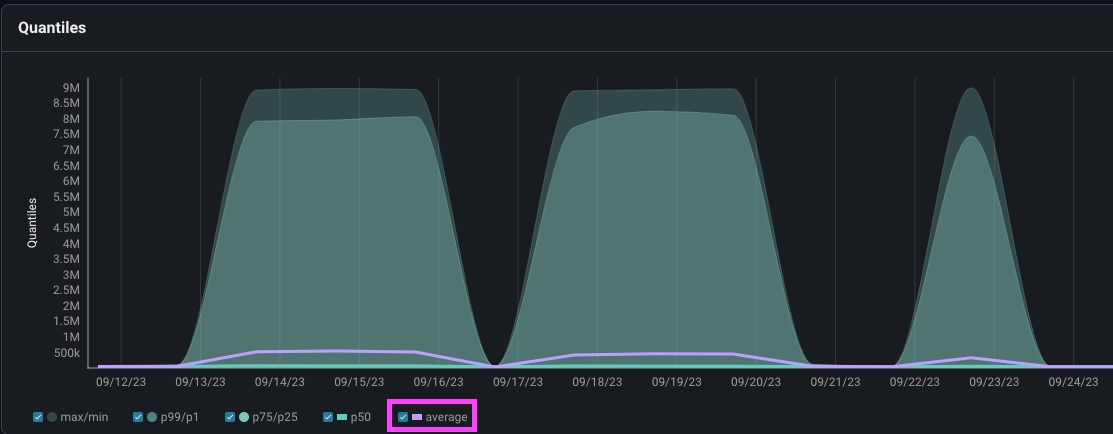

“Average” Option Added to Quantiles Plots

Users can now plot the mean in quantile plots, in addition to the previously offered median.
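A small Python example shows why viewing both statistics can be useful: a single outlier shifts the mean substantially while leaving the median unchanged. The values are made up for illustration.

```python
from statistics import mean, median

# Made-up feature values with one large outlier.
values = [1, 2, 2, 3, 42]

average = mean(values)    # pulled upward by the outlier
middle = median(values)   # unaffected by the outlier's magnitude
```

Here the mean is 10 and the median is 2, so a gap between the two lines on a plot can hint at skew or outliers in the underlying data.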

Python SDK v7.6.1

- Added validation on embedding raw data for batch and record-at-a-time loggers.

- Raised the validation limits for string fields, allowing users to send much larger strings.

- Added truncation warnings when strings reach the size limit.

Python SDK v7.5.1

- Core SDK is compatible with Python 3.6+ (previously 3.8+)

- SDK now works with any version of pyarrow

📚 New Content

- LLM Tracing and Observability with Arize Phoenix

- How Wayfair Achieves Self-Service Onboarding for MLOps Tooling

- PII Anonymization with Microsoft Presidio: How To

- The Definitive Guide to LLM Evaluation

- LLM Leaderboard

- Traces and Spans

- ReAct Architecture: Simple AI Agents

- HDBSCAN

- LLM Monitoring

- Large Content and Behavior Models

- Explaining Grokking Through Circuit Efficiency

- RankVicuna: Zero-Shot Listwise Document Reranking with Open-Source Large Language Models