Using OpenAI with Phoenix Evals

Requires openai>=1.0.0

Create an LLM instance with the OpenAI provider:
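A minimal sketch of that step follows. It assumes the `LLM` wrapper is importable from `phoenix.evals.llm` and accepts `provider` and `model` keyword arguments; the helper name `make_openai_llm` and the `"gpt-4o"` default are illustrative only and do not come from this page.

```python
def make_openai_llm(model: str = "gpt-4o"):
    """Build a Phoenix Evals LLM wrapper that uses the OpenAI provider.

    Assumptions: the import path `phoenix.evals.llm` and the default model
    name are illustrative. Requires arize-phoenix-evals and openai>=1.0.0.
    """
    # Imported lazily so this module loads even when Phoenix is not installed.
    from phoenix.evals.llm import LLM

    return LLM(provider="openai", model=model)
```

Calling `make_openai_llm()` needs an OpenAI API key to be available, for example through the `OPENAI_API_KEY` environment variable.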

The LLM wrapper reads your API key from the OPENAI_API_KEY environment variable, or you can pass it directly:
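The two options can be sketched as below. The keyword name `api_key`, the import path `phoenix.evals.llm`, and the helper names are assumptions made for illustration; check them against your installed version of Phoenix Evals.

```python
import os
from typing import Optional


def resolve_openai_api_key(explicit_key: Optional[str] = None) -> str:
    """Return the explicit key if given, else the OPENAI_API_KEY environment variable."""
    key = explicit_key or os.environ.get("OPENAI_API_KEY")
    if not key:
        raise RuntimeError("Set OPENAI_API_KEY or pass an API key explicitly.")
    return key


def make_openai_llm(model: str = "gpt-4o", api_key: Optional[str] = None):
    """Build the LLM wrapper, passing the resolved key directly (hypothetical helper)."""
    # Lazy import: requires arize-phoenix-evals and openai>=1.0.0 at call time.
    from phoenix.evals.llm import LLM

    # Assumption: the wrapper accepts an `api_key` keyword argument.
    return LLM(provider="openai", model=model, api_key=resolve_openai_api_key(api_key))
```

Reading the key from the environment keeps secrets out of source code; passing it directly is mainly useful when keys come from a secrets manager at runtime.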

Using with evaluators
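As a rough sketch, an OpenAI-backed LLM can be handed to an evaluator as shown below. This assumes `create_classifier` is exported from `phoenix.evals` and takes `name`, `prompt_template`, `llm`, and `choices` arguments; the prompt, labels, and helper name are hypothetical.

```python
# Hypothetical prompt and label-to-score mapping, used only for illustration.
PROMPT_TEMPLATE = (
    "Is the answer relevant to the question?\n"
    "Question: {input}\n"
    "Answer: {output}"
)
CHOICES = {"relevant": 1.0, "irrelevant": 0.0}


def make_relevance_evaluator(model: str = "gpt-4o"):
    """Create a classification evaluator that uses an OpenAI-backed LLM.

    Assumption: `create_classifier` and its keyword arguments match the
    installed version of arize-phoenix-evals.
    """
    # Lazy imports: require arize-phoenix-evals and openai>=1.0.0 at call time.
    from phoenix.evals import create_classifier
    from phoenix.evals.llm import LLM

    llm = LLM(provider="openai", model=model)
    return create_classifier(
        name="relevance",
        prompt_template=PROMPT_TEMPLATE,
        llm=llm,
        choices=CHOICES,
    )
```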

Custom parameters

Pass additional parameters to the OpenAI client:

Azure OpenAI

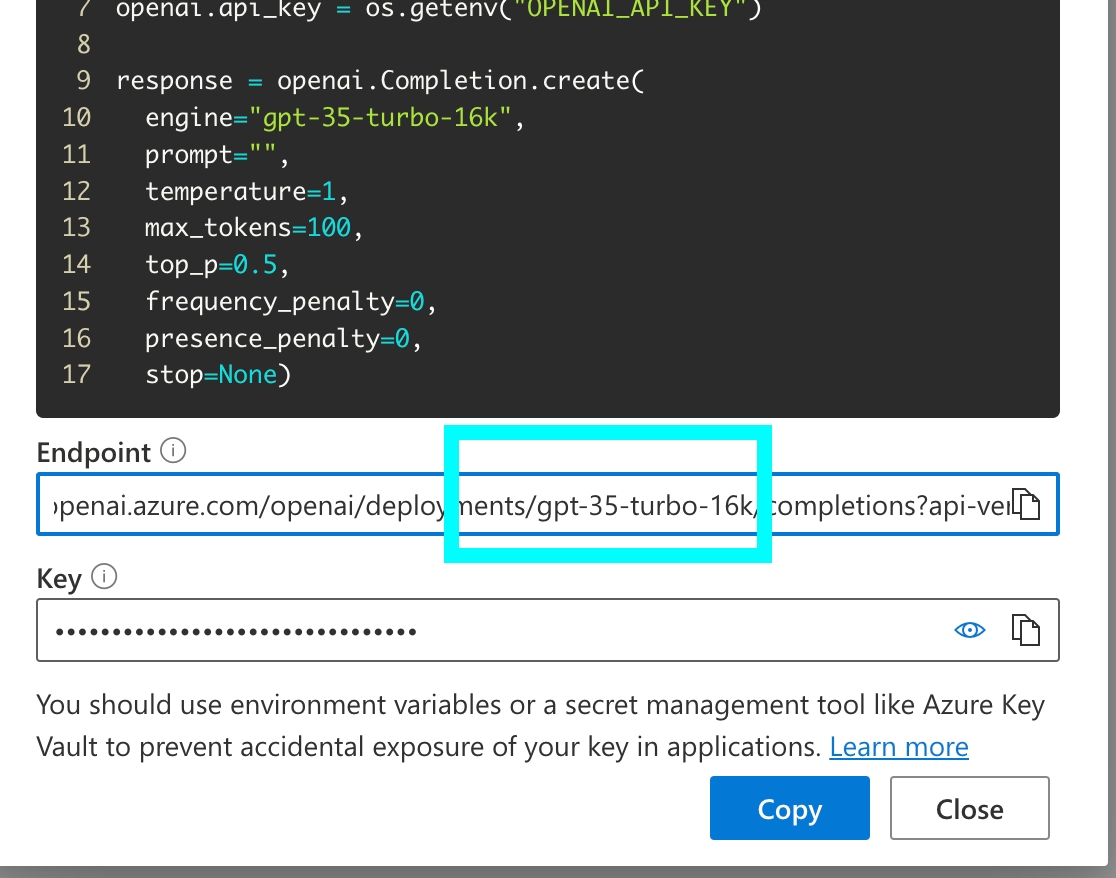

Use the "azure" provider for Azure OpenAI deployments:

The model parameter is the deployment name in Azure. You can find it in the Azure OpenAI playground.
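A minimal sketch of the Azure case, assuming the same `LLM` wrapper from `phoenix.evals.llm`. The helper name `make_azure_llm` is hypothetical, and endpoint, API version, and credential settings are deliberately left out because this page does not specify how they are passed.

```python
def make_azure_llm(deployment_name: str):
    """Build an LLM wrapper for an Azure OpenAI deployment (hypothetical helper)."""
    if not deployment_name:
        raise ValueError("deployment_name must be the Azure deployment name")

    # Lazy import: requires arize-phoenix-evals and openai>=1.0.0 at call time.
    from phoenix.evals.llm import LLM

    # `model` carries the Azure deployment name, not the underlying model ID.
    # Endpoint and credential configuration is omitted here (not covered by this page).
    return LLM(provider="azure", model=deployment_name)
```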