Credentials

To securely provide your API keys, you have four options:

- Browser: Store them in your browser’s local storage.

- Database (Secrets): Save them encrypted in the Phoenix database.

- Database (Custom Providers): Configure named providers with specific credentials.

- Environment Variables: Set them as environment variables on the server.

Option 1: Store API Keys in the Browser

API keys can be entered in the playground application via the API Keys dropdown menu. This option stores API keys in your browser’s local storage.

Option 2: Save Secrets (Encrypted) in the Database

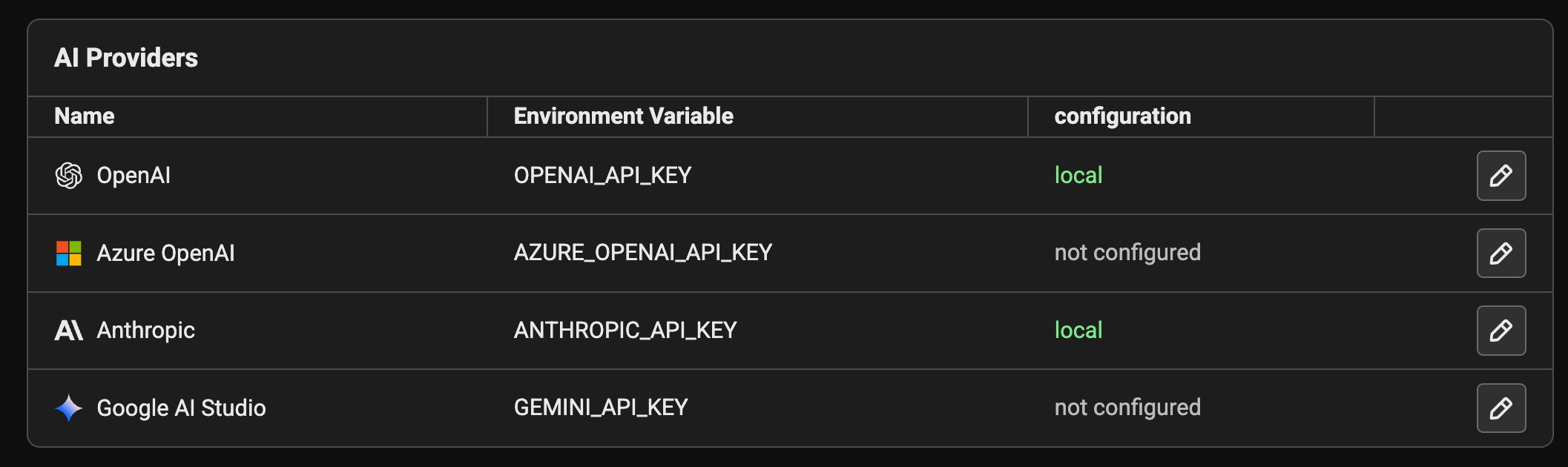

You can save your API keys persistently in the Phoenix database. Navigate to the Settings page, select the AI Providers tab, and click the edit icon for a provider. In the dialog, toggle the view to Secrets and enter your keys. Keys are stored encrypted at rest in the secrets table. If you change the server’s PHOENIX_SECRET environment variable, these entries will become unreadable and must be updated.

Option 3: Custom Providers

Custom providers let you store provider credentials and routing settings on the server and reuse them across the playground and prompt versions. For detailed instructions on configuring custom providers, see the Custom AI Providers settings page.

Using Custom Providers in the Playground and Prompts

Custom providers appear in model selection menus as their own provider group. When you select one:

- The model list mirrors the built-in model list for that SDK.

- Routing fields (base URL, Azure endpoint, AWS region) are pulled from the custom provider config.

- You can still add request-level custom headers from the model configuration panel.

Option 4: Set Environment Variables on Server Side

If the following variables are set in the server environment, they’ll be used at API invocation time.

| Provider | Environment Variable | Platform Link |
|---|---|---|
| OpenAI | OPENAI_API_KEY | https://platform.openai.com/ |
| Azure OpenAI | AZURE_OPENAI_API_KEY, AZURE_OPENAI_ENDPOINT, OPENAI_API_VERSION | https://azure.microsoft.com/en-us/products/ai-services/openai-service/ |
| Anthropic | ANTHROPIC_API_KEY | https://console.anthropic.com/ |
| Gemini | GEMINI_API_KEY or GOOGLE_API_KEY | https://aistudio.google.com/ |
| AWS Bedrock | AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY, AWS_SESSION_TOKEN, AWS_BEARER_TOKEN_BEDROCK | https://aws.amazon.com/bedrock/ |
| Cerebras | CEREBRAS_API_KEY | https://cloud.cerebras.ai/ |
| Fireworks | FIREWORKS_API_KEY | https://fireworks.ai/ |
| Groq | GROQ_API_KEY | https://console.groq.com/ |
| Moonshot | MOONSHOT_API_KEY | https://platform.moonshot.ai/ |
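Before starting the server, it can help to confirm which of these variables are actually visible to the process. The following is a minimal sketch that only reports presence. The variable names are copied from the table above, while `PROVIDER_ENV_VARS` and `report_provider_env` are hypothetical helper names and not part of Phoenix.

```python
import os

# Variable names copied from the table above. This mapping is an
# illustrative helper, not something Phoenix ships.
PROVIDER_ENV_VARS = {
    "OpenAI": ["OPENAI_API_KEY"],
    "Azure OpenAI": [
        "AZURE_OPENAI_API_KEY",
        "AZURE_OPENAI_ENDPOINT",
        "OPENAI_API_VERSION",
    ],
    "Anthropic": ["ANTHROPIC_API_KEY"],
    "Gemini": ["GEMINI_API_KEY", "GOOGLE_API_KEY"],
    "AWS Bedrock": [
        "AWS_ACCESS_KEY_ID",
        "AWS_SECRET_ACCESS_KEY",
        "AWS_SESSION_TOKEN",
        "AWS_BEARER_TOKEN_BEDROCK",
    ],
    "Cerebras": ["CEREBRAS_API_KEY"],
    "Fireworks": ["FIREWORKS_API_KEY"],
    "Groq": ["GROQ_API_KEY"],
    "Moonshot": ["MOONSHOT_API_KEY"],
}


def report_provider_env(environ=None):
    """Return {provider: {"set": [...], "missing": [...]}}.

    Only presence is checked; values are never returned or printed.
    """
    env = os.environ if environ is None else environ
    report = {}
    for provider, names in PROVIDER_ENV_VARS.items():
        report[provider] = {
            "set": [name for name in names if env.get(name)],
            "missing": [name for name in names if not env.get(name)],
        }
    return report
```

Calling `report_provider_env()` with no arguments inspects the current process environment. The sketch does not judge whether a provider is fully configured, since not every listed variable is necessarily required (Gemini, for example, accepts either of its two).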

For Azure, you can also set the following server-side environment variables: AZURE_TENANT_ID, AZURE_CLIENT_ID, and AZURE_FEDERATED_TOKEN_FILE to use WorkloadIdentityCredential.

AWS Bedrock Authentication

When using AWS Bedrock, Phoenix leverages the standard boto3 credential chain or the AWS_BEARER_TOKEN_BEDROCK environment variable. This means if you are running Phoenix on an EC2 instance with an assigned IAM role, have ~/.aws/credentials configured, or have exported AWS_BEARER_TOKEN_BEDROCK, you do not need to explicitly provide credentials.

To use this fallback behavior, do not fill out the AWS credentials in the Playground settings. The client will automatically discover and use the available credentials from the environment.

Using OpenAI Compatible LLMs

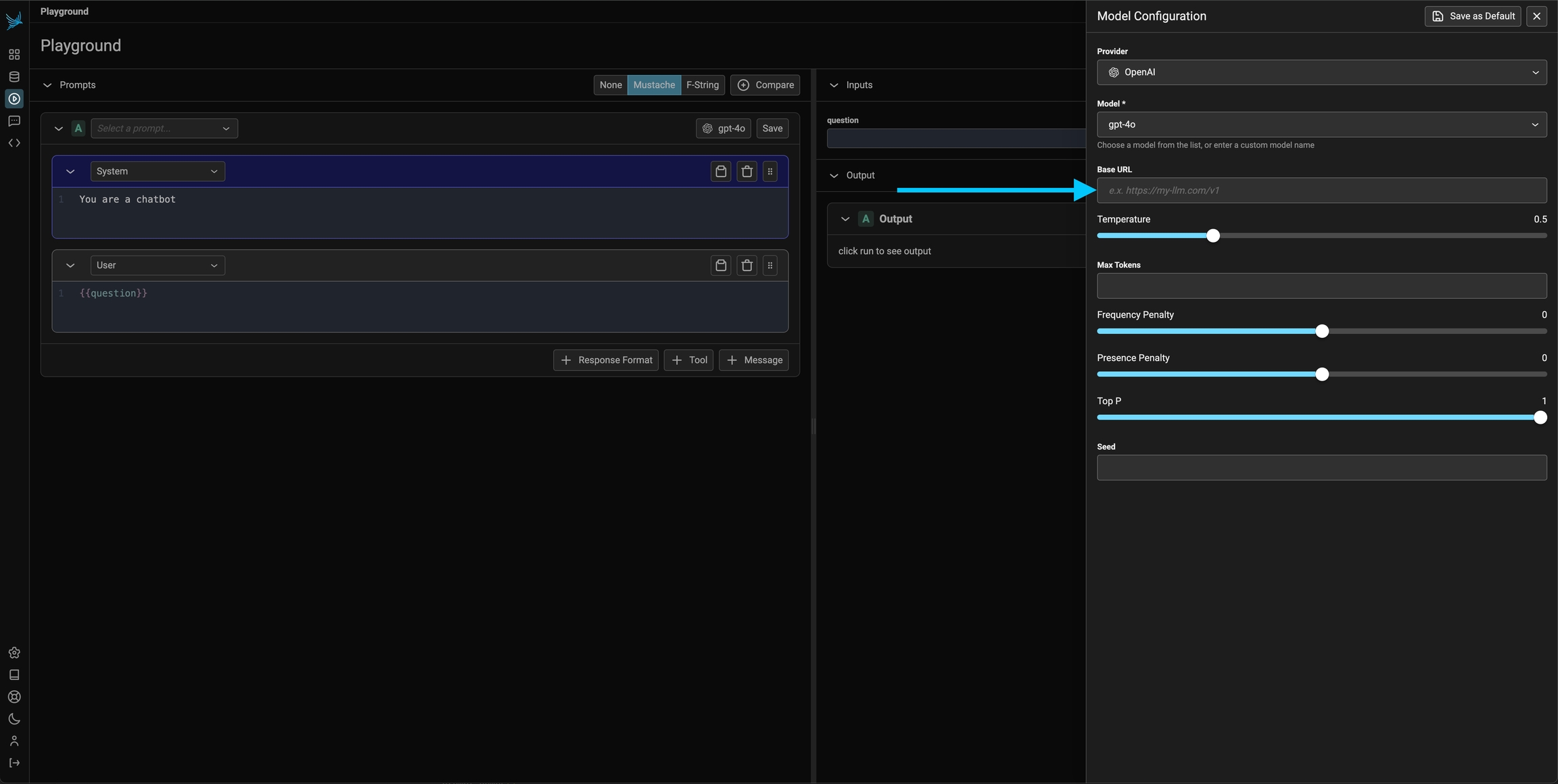

Option 1: Configure the base URL in the prompt playground

Since you can configure the base URL for the OpenAI client, you can use the prompt playground with a variety of OpenAI-compatible LLMs such as Ollama, DeepSeek, and more.

If you are using a third-party LLM provider, you must set the OpenAI API key to that provider’s API key for it to work.
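To see why the key has to match the provider, note that OpenAI-compatible clients send the configured key as a bearer token to whatever base URL they are given. The sketch below builds, but does not send, such a request using only the standard library. The local Ollama URL, the placeholder key, and the model name are assumptions chosen for illustration, and `build_chat_request` is a hypothetical helper.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, prompt):
    """Build (without sending) a Chat Completions request for an
    OpenAI-compatible server rooted at base_url."""
    url = base_url.rstrip("/") + "/chat/completions"
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    headers = {
        # The key travels as a bearer token to the configured base URL.
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


# Assumed values: a local Ollama server, a placeholder key, and a model name.
request = build_chat_request("http://localhost:11434/v1", "ollama", "llama3", "Hello")
```

Only the base URL and the key change between providers; the request shape stays the same, which is what makes the playground usable with them.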

Option 2: Server side configuration of the OpenAI base URL

Optionally, the server can be configured with the OPENAI_BASE_URL environment variable to target any OpenAI-compatible REST API.
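As a rough illustration of the fallback this implies, the sketch below resolves the base URL from the environment and otherwise uses the public OpenAI endpoint, which is how the OpenAI Python SDK treats OPENAI_BASE_URL. Whether Phoenix resolves it in exactly this way is an assumption, and `resolve_openai_base_url` is a hypothetical helper name.

```python
import os

# Default used by the OpenAI Python SDK when no override is configured.
DEFAULT_OPENAI_BASE_URL = "https://api.openai.com/v1"


def resolve_openai_base_url(environ=None):
    """Return OPENAI_BASE_URL if it is set and non-empty, else the default."""
    env = os.environ if environ is None else environ
    return env.get("OPENAI_BASE_URL") or DEFAULT_OPENAI_BASE_URL
```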

OpenAI and Azure OpenAI support two API types: Chat Completions (chat.completions.create) and Responses (responses.create). For built-in providers, choose the API type from the model configuration panel in the Playground. For custom providers, set the API type in the provider configuration. Built-in providers default to Chat Completions, while custom providers default to Responses.

Custom Headers

Phoenix supports adding custom HTTP headers to requests sent to AI providers. This is useful for additional credentials, routing needs, or cost tracking when using custom LLM proxies.

Configuring Custom Headers

- Click on the model configuration button in the playground

- Scroll down to the “Custom Headers” section

- Add your headers in JSON format:
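As an illustration of the expected shape, the sketch below checks that a headers value is a flat JSON object whose values are strings, since HTTP headers are name/value string pairs. The header names `X-Team` and `X-Cost-Center` are hypothetical, and `parse_custom_headers` is an illustrative helper, not a Phoenix function.

```python
import json


def parse_custom_headers(text):
    """Parse a JSON object of HTTP headers, rejecting non-string values."""
    headers = json.loads(text)
    if not isinstance(headers, dict):
        raise ValueError("custom headers must be a JSON object")
    for name, value in headers.items():
        if not isinstance(value, str):
            raise ValueError(f"header {name!r} must have a string value")
    return headers


# Hypothetical header names, chosen only for illustration.
example = '{"X-Team": "search", "X-Cost-Center": "1234"}'
headers = parse_custom_headers(example)
```

Quoting numeric values such as `"1234"` keeps every entry a string, which avoids ambiguity about how a number would be serialized into a header.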