The Evaluator

Your go-to blog for insights on AI observability and evaluation.

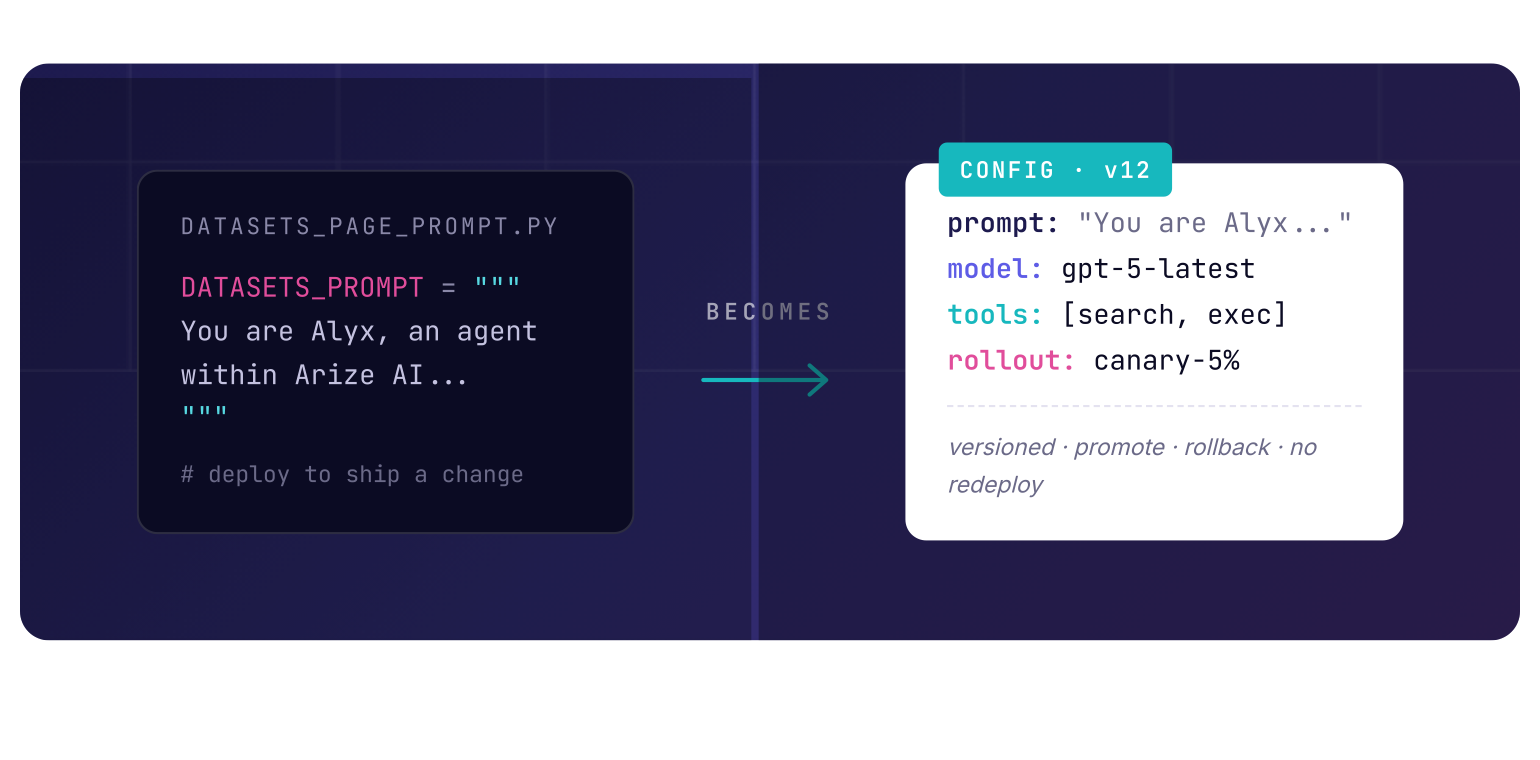

Prompt templates as configs, not code

This post was written in April 2026. Cloud products, feature maturity, and recommended patterns change over time, so readers should treat these examples as directional guidance. For teams already using Arize, there is a natural extension of that pattern. Prompt Playground can sit upstream of the config layer as the place where prompts are edited, compared, and versioned before they are promoted into whatever config system the company already trusts in production.

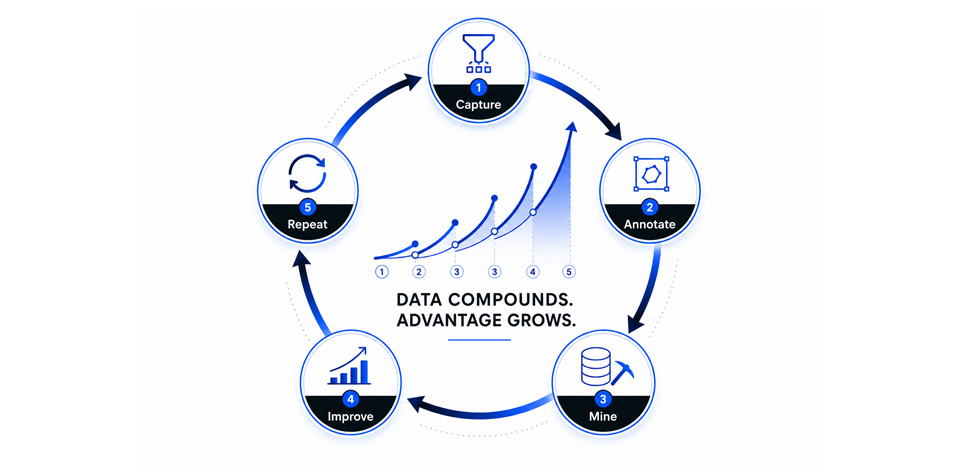

Using context graphs: build a data moat like Google’s using your enterprise data

Enterprise software is on the verge of its first compounding data loop, the same kind of self-reinforcing mechanism that built the most valuable consumer businesses of the last twenty years….

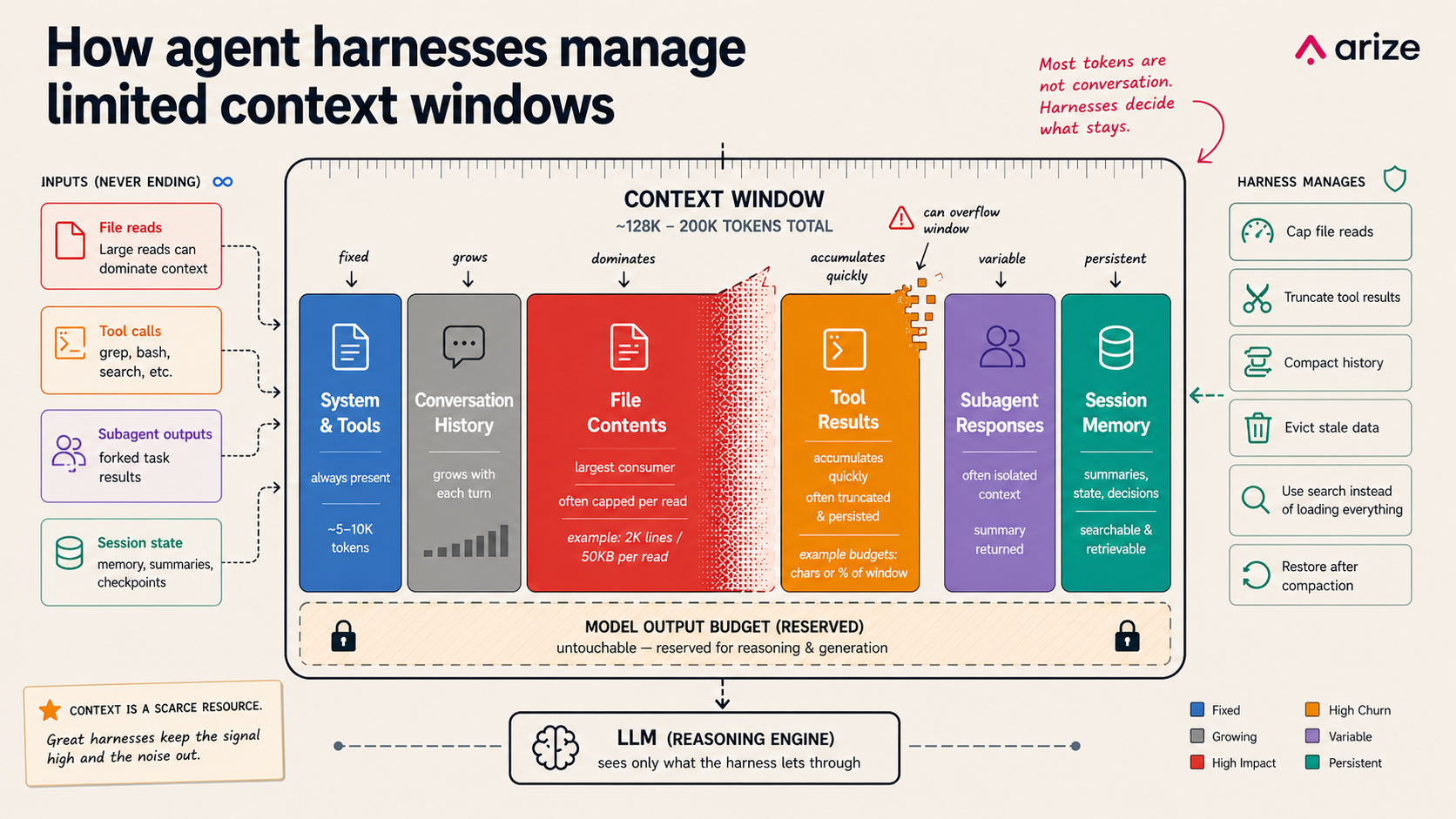

Context management in agent harnesses: memory, files, and subagents

A version of this article originally appeared on X. Every agent harness runs into the same limit: the context window is too small for everything the model might want to remember….

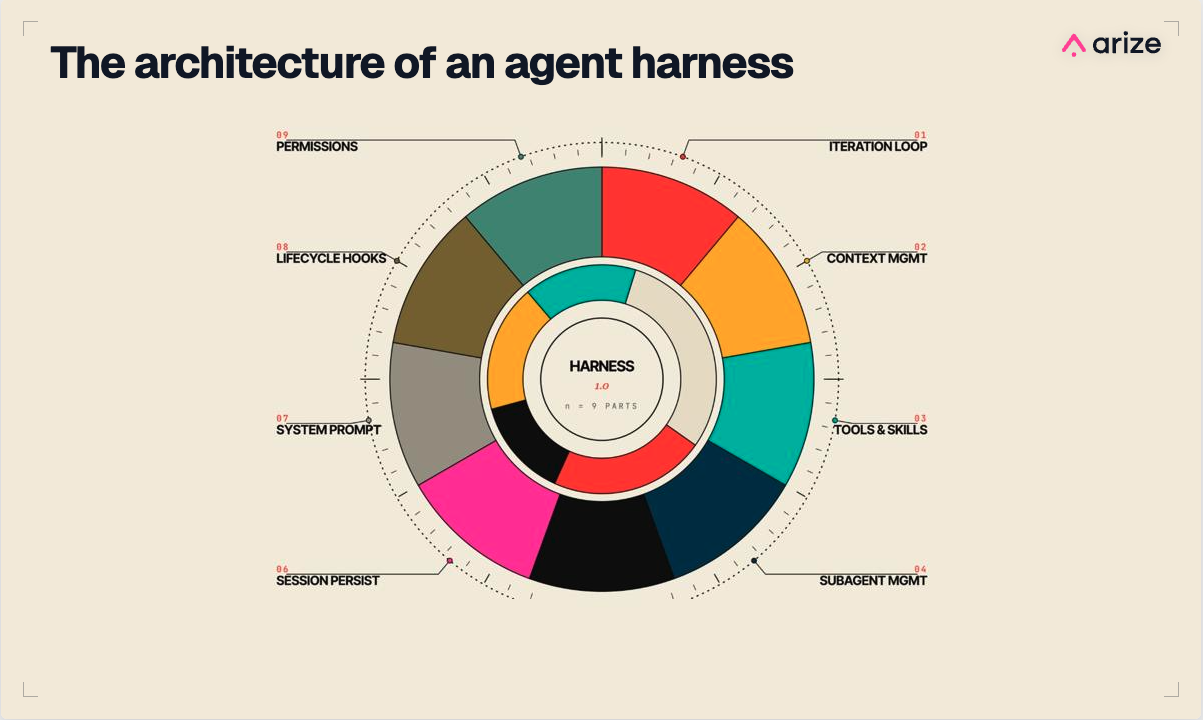

What is an agent harness?

A version of this article originally appeared on X. Someone asked me at a hacker event last week: “Can anyone actually tell me what a harness really is?” It was…

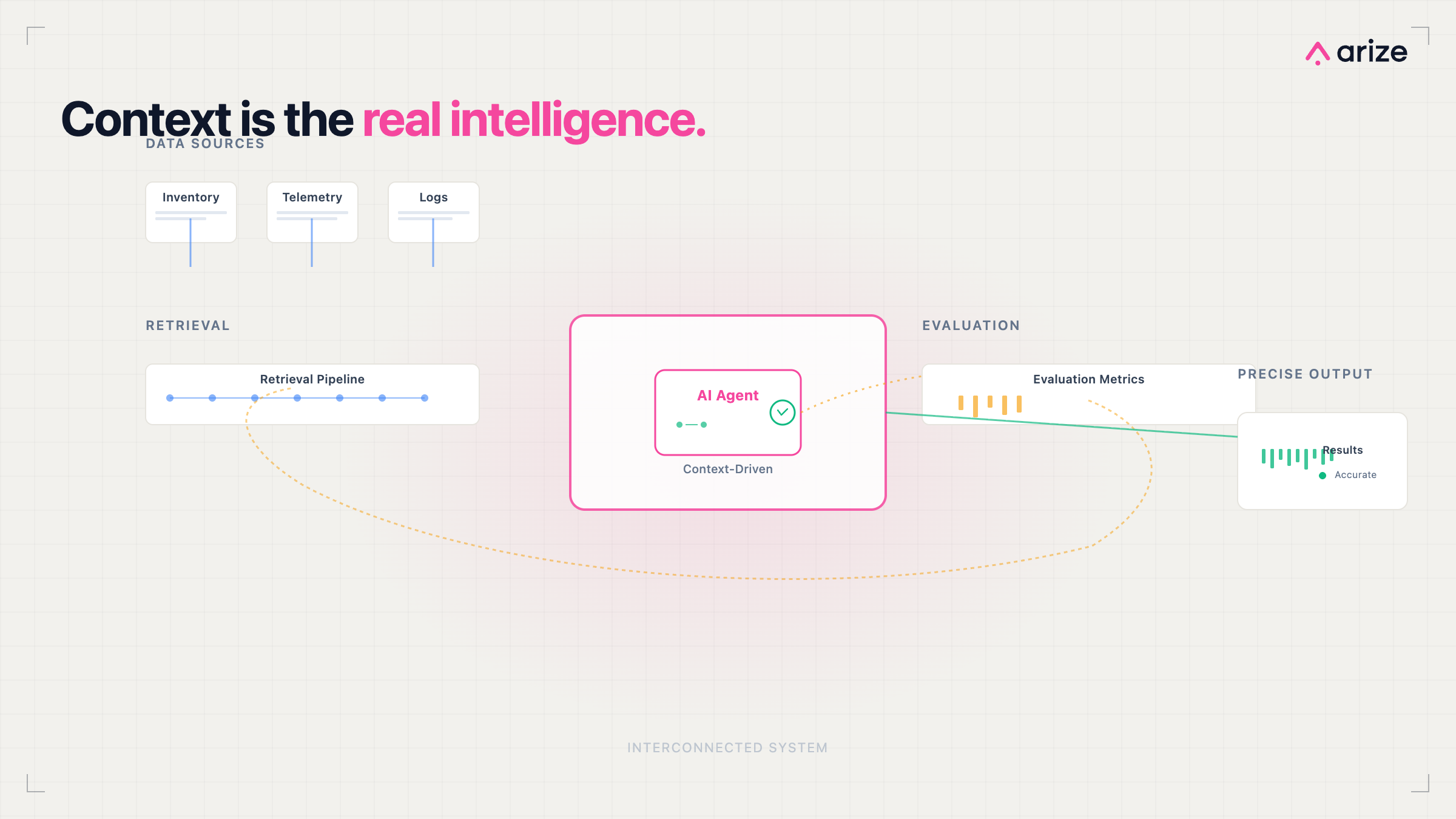

Beyond models: How context and evals make agents work in production

Building an AI agent has never been easier. But getting one into production that’s reliable is still hard. Most teams can ship a working demo in a day. The agent…

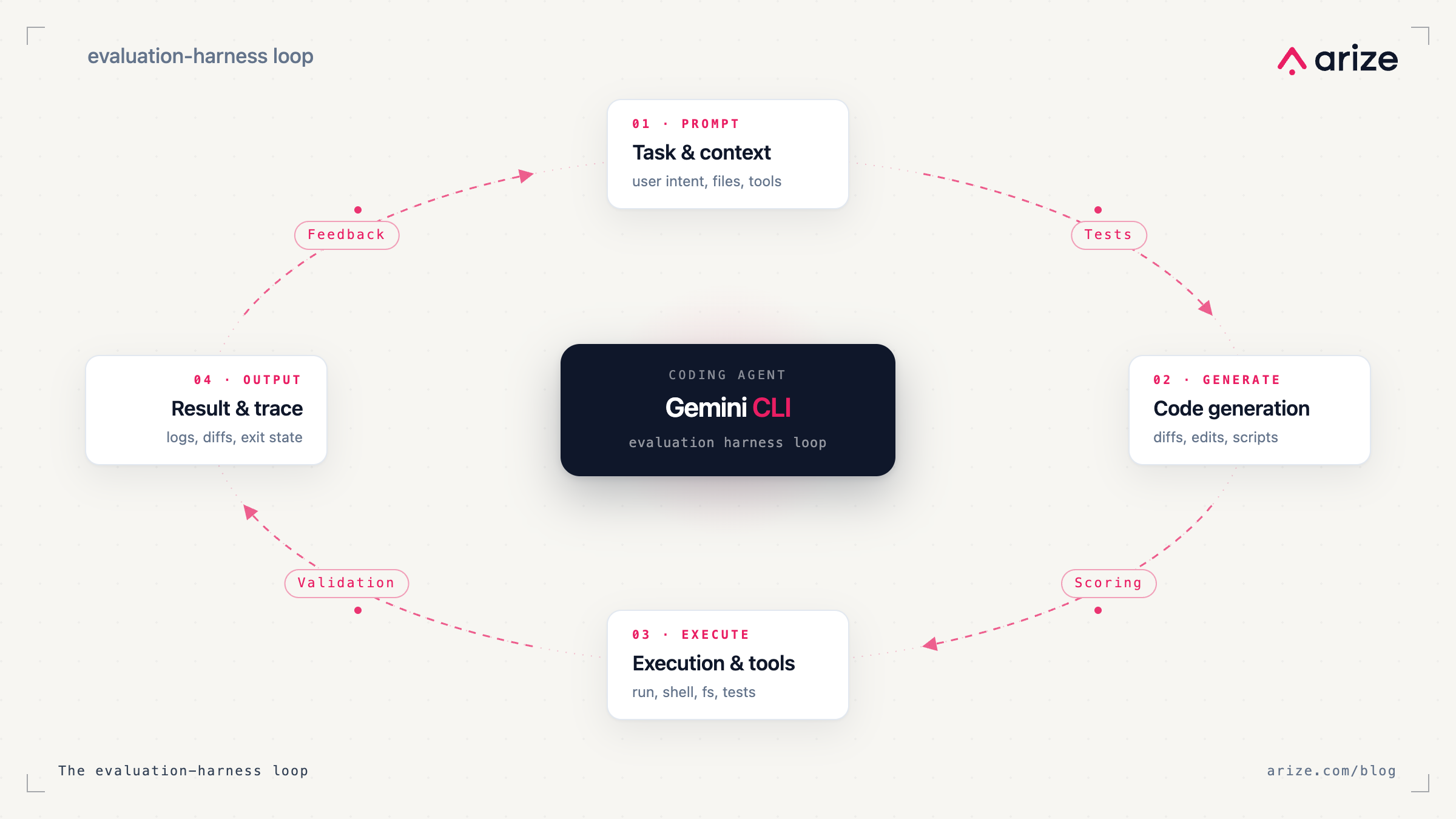

How to add an evaluation harness to your Gemini CLI coding agent

Coding agents can update prompts, wire in tools, and change application logic across your codebase in a single run. The hard part isn’t getting the agent to make changes, but…

Code is free, technical debt isn’t: Notes from AI Engineer Europe

Keynotes at Europe’s first flagship AI Engineer Conference shared one theme: code generation has accelerated past our ability to verify it, and the industry is quietly reorganizing around that fact….

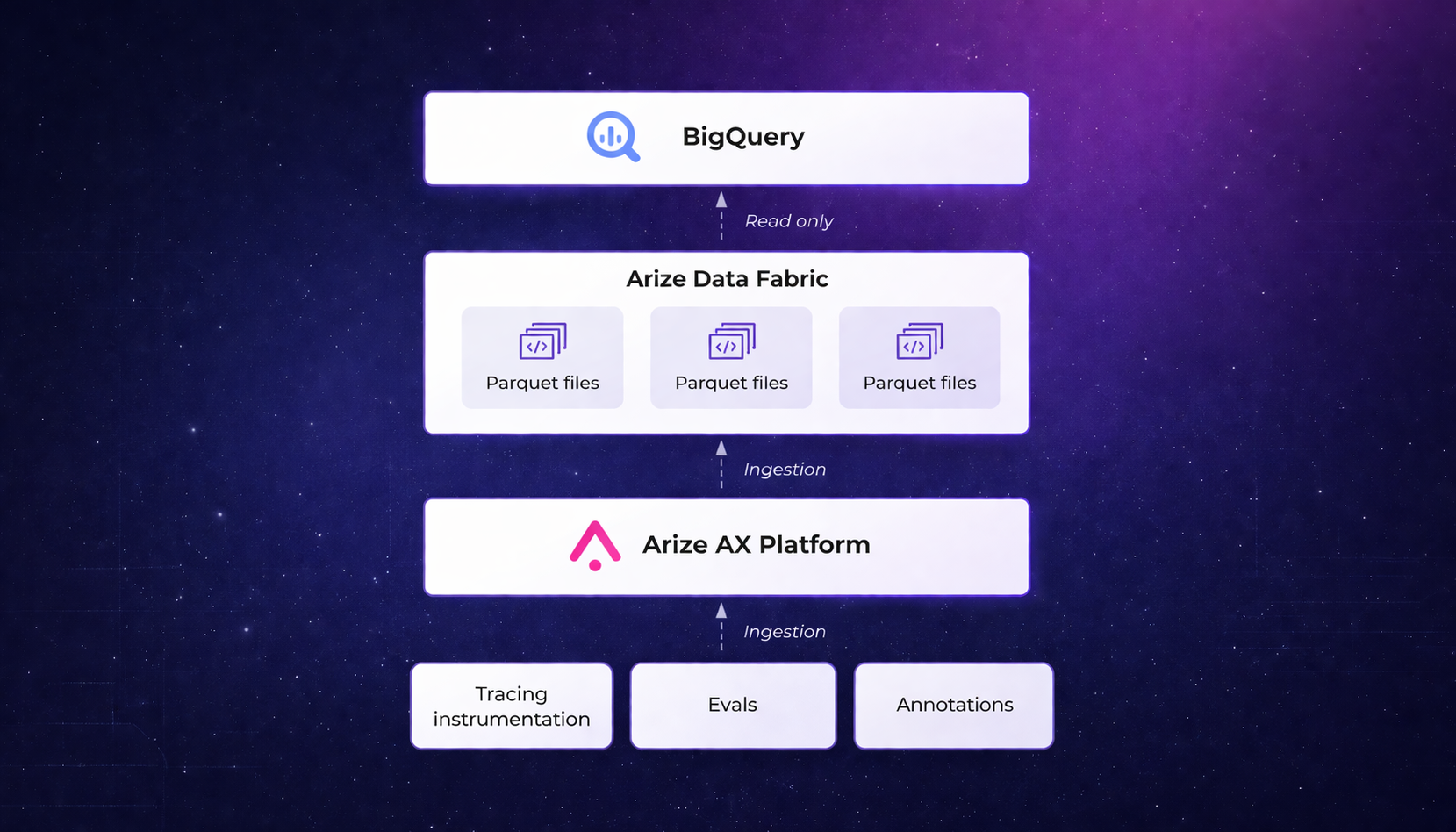

Data Fabric: Querying agent traces in BigQuery

How to join LLM traces with billing, infrastructure, and customer data using Iceberg and BigQuery. If you run AI agents in production, you’ve probably run into a simple problem: you…

Building smarter AI agents: architecture, evals, and lessons from the field

Shipping an AI agent is easy. Understanding whether it actually works in production is not. That was the common thread across two AI Builders events in San Francisco at GitHub…

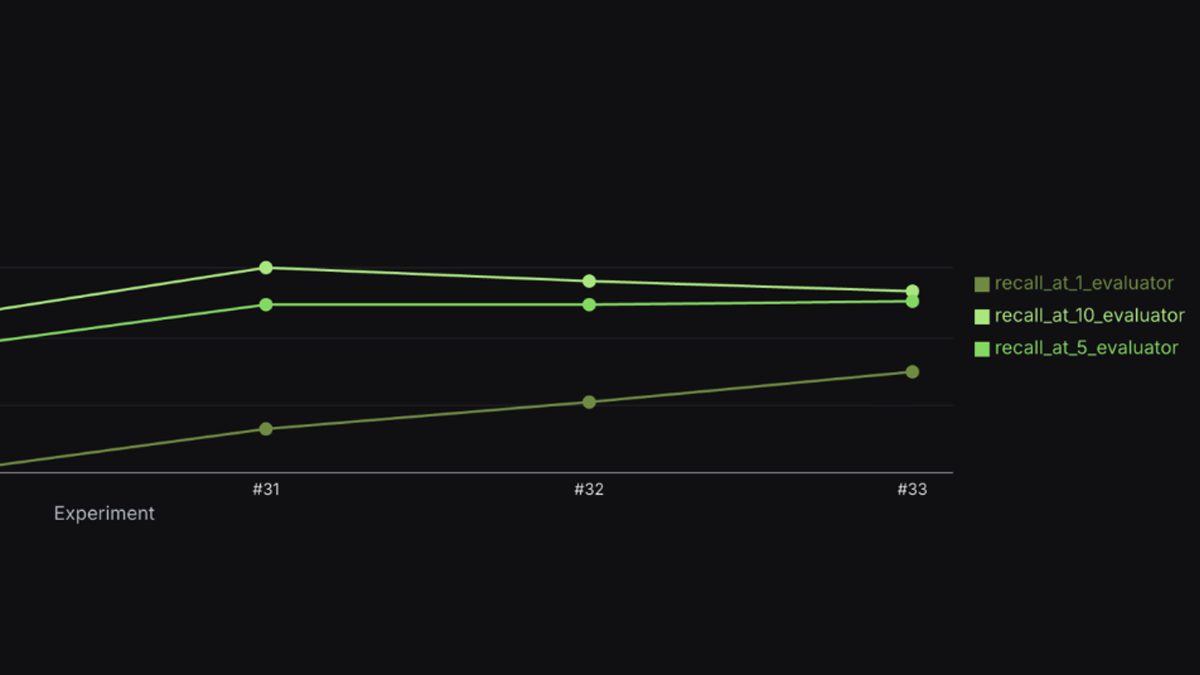

How Arize Skills Improved RAG Recall from 39% to 75% in 8 Hours

The Pain of Iterative RAG Development. If you’ve built a production RAG system, you know this cycle. Tweak parameters, re-index, re-evaluate, repeat. It’s slow. It’s manual. The feedback loop between…