OTel-native observability that works with any framework, any model provider, and any agent architecture. Purpose-built for production AI from day one, with the open-source roots to prove it.

Your agents aren’t single-framework. Observability shouldn’t be either.

Arize: Framework-agnostic. Production-native.

LangSmith: Dependent on the LangChain ecosystem.

If you’re all-in on LangChain and LangGraph, LangSmith is the path of least resistance. One environment variable and tracing works. But most production teams run multiple frameworks, custom stacks, and direct provider calls.

What AI builders are saying

from field interviews

We evaluated both, and people were split. LangSmith made sense if you’re all-in on LangChain, but we run multiple frameworks and direct provider calls. The OpenTelemetry-first approach won us over.

LangSmith crashed on us during a critical workflow. Arize wins the award for simplicity getting up and running, and it hasn’t gone down since we switched.

The other tools don’t come anywhere close on evaluating prompts and LLM processing in production. We needed custom metrics, monitors, and dashboards, not just dev tooling.

Where the architecture diverges

One framework shouldn't own your observability stack

Open by default vs. open by marketing

LangSmith is closed source. Self-hosting is gated behind Enterprise contracts. Per-trace pricing climbs fast at scale.

Arize Phoenix is fully open source – run it locally via CLI or Docker, free and without usage limits. Try it out with your coding agent to get running in minutes and see what your agent is really doing.

Arize AX adds adb, the industry-leading AI datastore for processing agent telemetry, with enterprise-grade monitoring, alerting, and access controls on top. Your choice of deployment. Data stays yours.

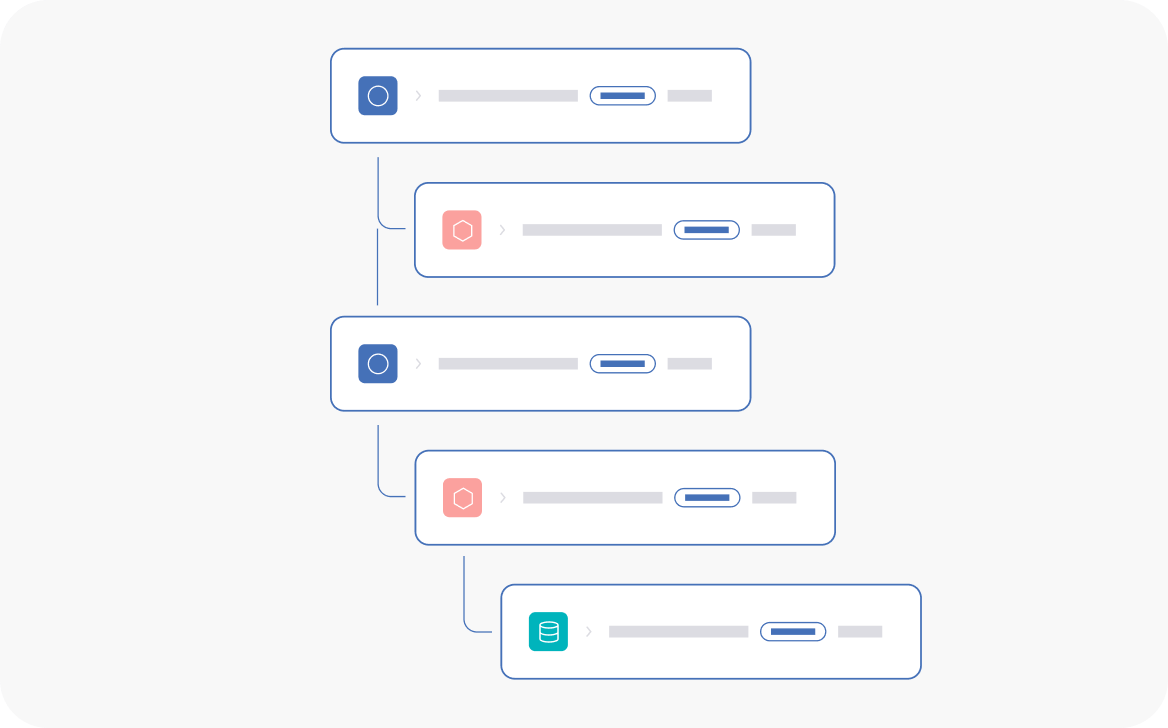

Deeper than traces

LangSmith is optimized for tracing and debugging LLM workflows (especially in LangChain ecosystems), while Arize focuses on end-to-end agent observability and production behavior across full agent trajectories.

Path evals measure whether your agent took the optimal route. Convergence evals catch loops and unnecessary backtracking. Session evals track coherence across multi-turn interactions.

When you need to debug why an agent made a specific decision – not just what it did – eval depth matters.
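To make the idea of path and convergence evals concrete, here is a minimal sketch in plain Python. It is an illustration only, not Arize's implementation: it assumes each agent run is recorded as a list of step names, scores a run by comparing its length to the shortest run observed for the same task, and flags repeated steps as a rough signal of looping. The names `convergence_score` and `repeated_steps` are hypothetical.

```python
from collections import Counter
from typing import Dict, Sequence


def convergence_score(run: Sequence[str], optimal_length: int) -> float:
    """Ratio of the reference path length to this run's length (1.0 = optimal)."""
    if not run:
        return 0.0
    return min(1.0, optimal_length / len(run))


def repeated_steps(run: Sequence[str]) -> Dict[str, int]:
    """Steps that occur more than once: a simple hint of loops or backtracking."""
    counts = Counter(run)
    return {step: n for step, n in counts.items() if n > 1}


# Hypothetical runs of the same task, each recorded as an ordered list of steps.
runs = [
    ["search", "fetch", "answer"],
    ["search", "fetch", "search", "fetch", "answer"],
    ["search", "search", "search", "fetch", "answer", "answer"],
]

# Assumption: the shortest observed run stands in for the optimal path.
optimal = min(len(r) for r in runs)
scores = [convergence_score(r, optimal) for r in runs]
loops = [repeated_steps(r) for r in runs]
```

In this sketch the first run scores 1.0 and the longer runs score lower, while `loops` shows which steps were repeated. A production eval would typically compare richer state (tool arguments, intermediate outputs) rather than step names alone.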

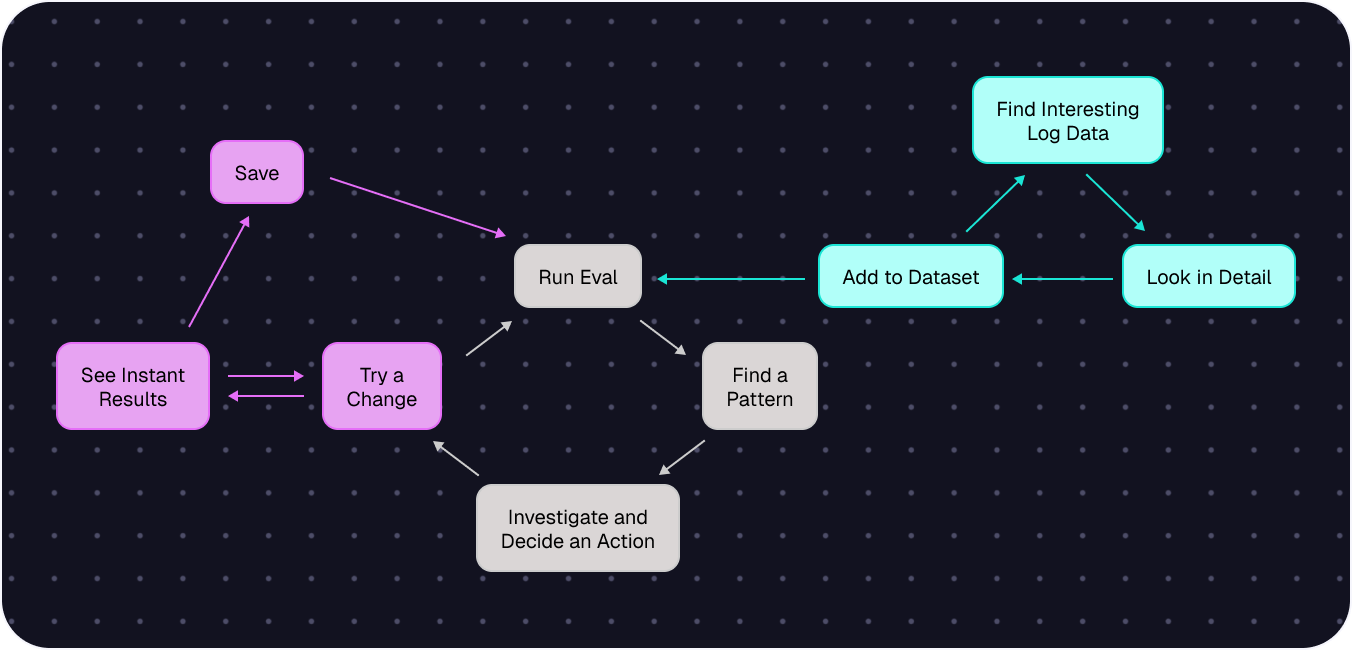

Monitoring that closes the loop

LangSmith surfaces evaluation results in dashboards. But those results don’t automatically influence the deploy pipeline – quality drops can reach production before someone manually intervenes.

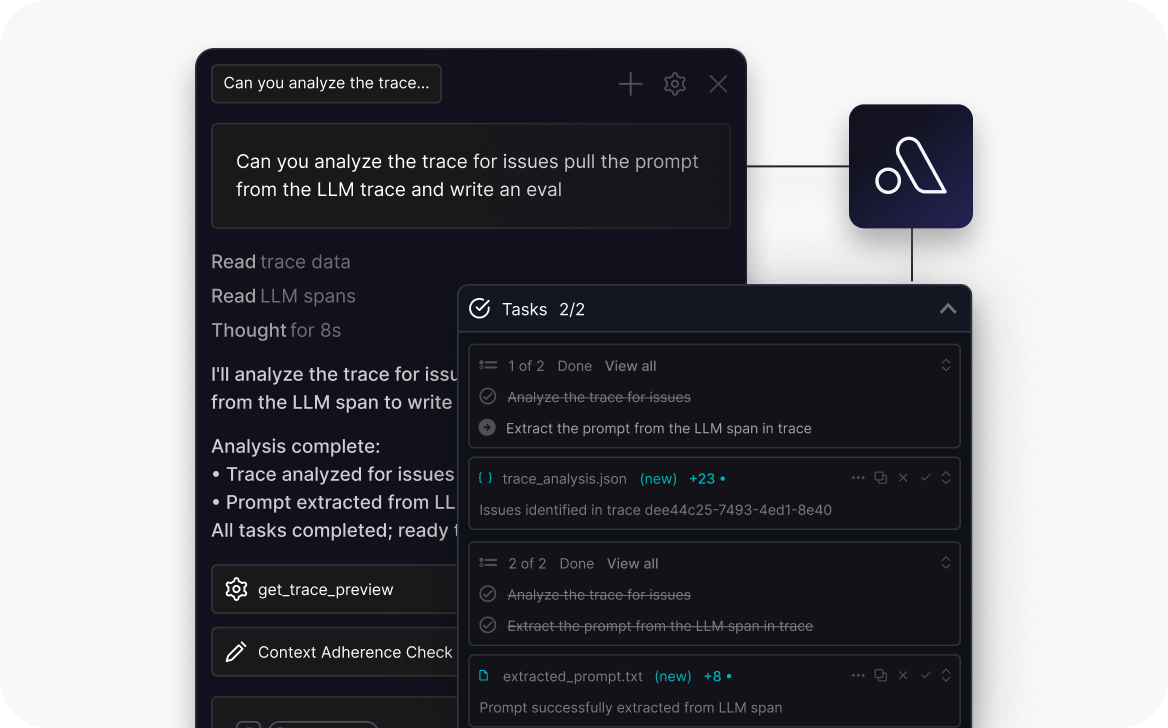

Arize provides continuous real-time monitoring with automated alerting, quality gates, and Alyx – an AI engineering agent that surfaces issues before users do.
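As a rough illustration of what a quality gate means in practice, the sketch below checks mean eval scores against per-metric thresholds and reports which metrics fail. It is a generic example under stated assumptions, not the Arize API: the metric names, thresholds, and the `quality_gate` function are all hypothetical.

```python
from statistics import mean
from typing import List, Mapping, Sequence


def quality_gate(
    scores: Mapping[str, Sequence[float]],
    thresholds: Mapping[str, float],
) -> List[str]:
    """Return the metrics whose mean score is below its threshold.

    A metric with no recorded scores is treated as failing, so missing
    evaluation data cannot silently pass the gate.
    """
    failures = []
    for metric, minimum in thresholds.items():
        values = scores.get(metric, [])
        if not values or mean(values) < minimum:
            failures.append(metric)
    return failures


# Hypothetical eval results for a release candidate.
eval_scores = {
    "correctness": [0.9, 0.8, 1.0],
    "groundedness": [0.6, 0.7, 0.5],
}
thresholds = {"correctness": 0.85, "groundedness": 0.75}

failed = quality_gate(eval_scores, thresholds)
```

A deploy pipeline step could exit with a non-zero status whenever `failed` is non-empty, so a quality drop blocks the release instead of waiting for someone to notice it on a dashboard.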

No framework tax on deployment

LangSmith’s enterprise deployment requires LangChain’s infrastructure. Teams with strict data residency requirements and smaller budgets hit a wall – self-hosting is Enterprise-only.

Arize AX deploys to one Kubernetes cluster in your VPC. No outbound calls. No framework dependency in your production infrastructure.

When LangSmith is the right call

If your team is fully committed to LangChain and LangGraph, and you’re building within that ecosystem end-to-end, LangSmith’s native integration is genuinely seamless.

When your stack evolves beyond one framework, we’ll be here.

Where the difference is most felt

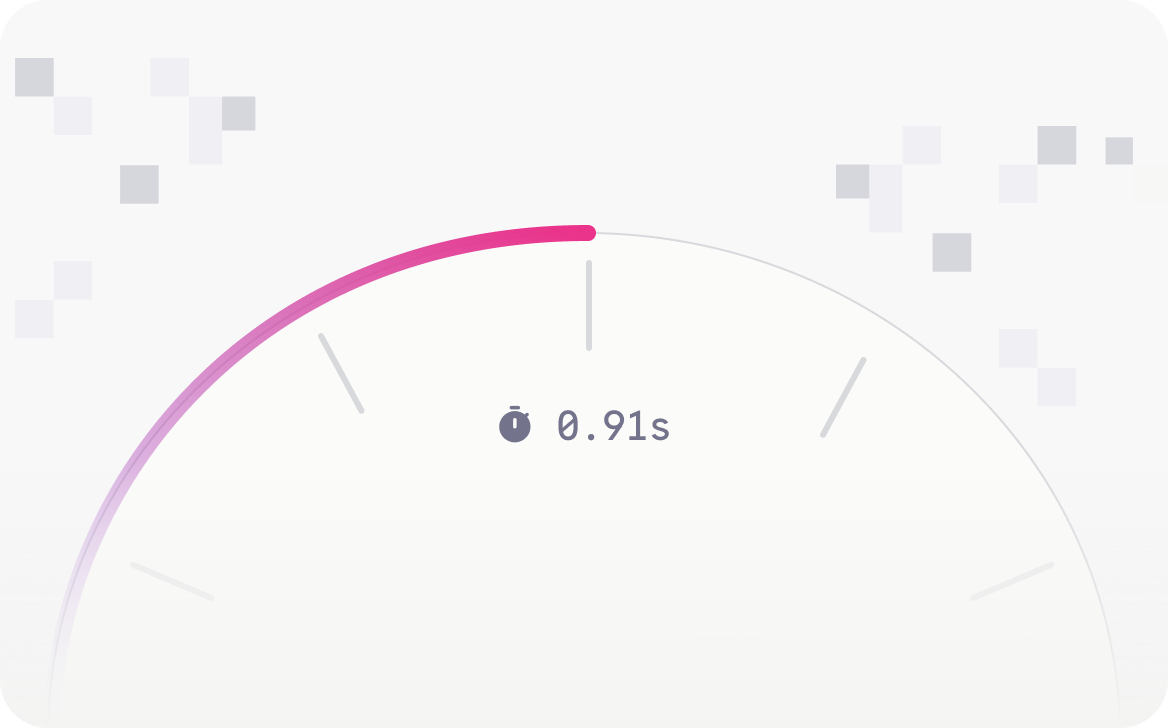

Trillions of data points. No tradeoffs.

First trace to full production visibility

Find what you didn't know to look for

One deployment. Total control.

No framework dependency

Predictable costs

Simpler operations

AI evolved from ML. So did we.

We’re Jason and Aparna.

We built the foundational ML infrastructure at Uber, Apple, and TubeMogul.

Before LLMs existed, we watched models break in production with no tools to diagnose them. So we started Arize to fix that.

Our mission since 2020: make AI work.

ML first. Then LLMs. We shipped the first open-source library for LLM evaluation: Phoenix.

Now agents.

That’s Arize AX – the Agent Experience.