The Evaluator

Your go-to blog for insights on AI observability and evaluation.

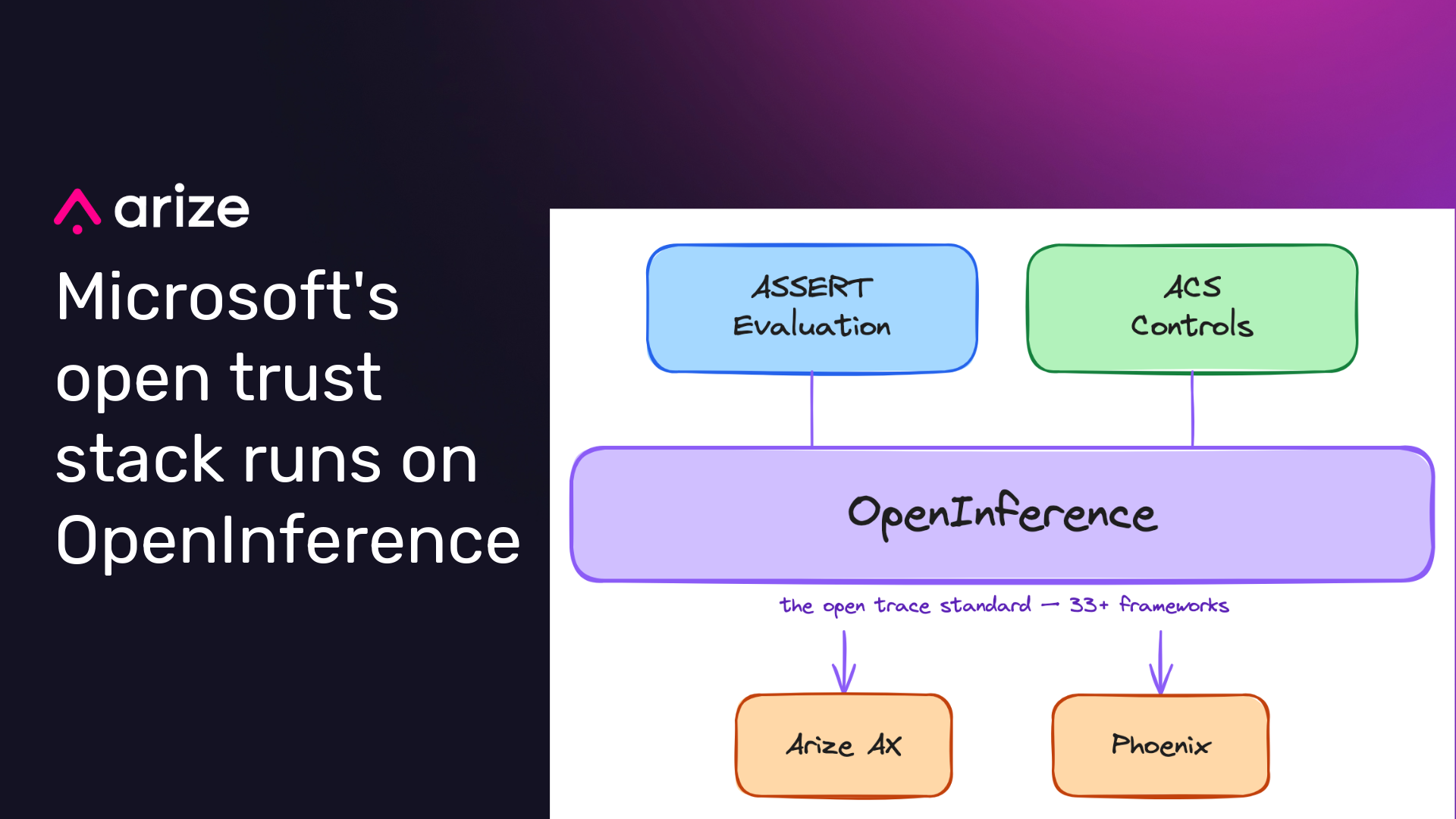

Microsoft’s open trust stack runs on OpenInference

Microsoft’s open trust stack for AI agents puts ASSERT and Agent Control Specification on top of OpenInference, connecting evaluation, runtime controls, and observability through a shared trace contract.

The end of fine-tuning: Why evals, context, and traces matter more

Fine-tuning isn’t dead, but the way most teams iterate on AI products has split in two. A tiny fraction run continuous RL against their own environments; everyone else has moved the iteration loop out of the model and into the harness. Here’s why, and what the 99% should do instead.

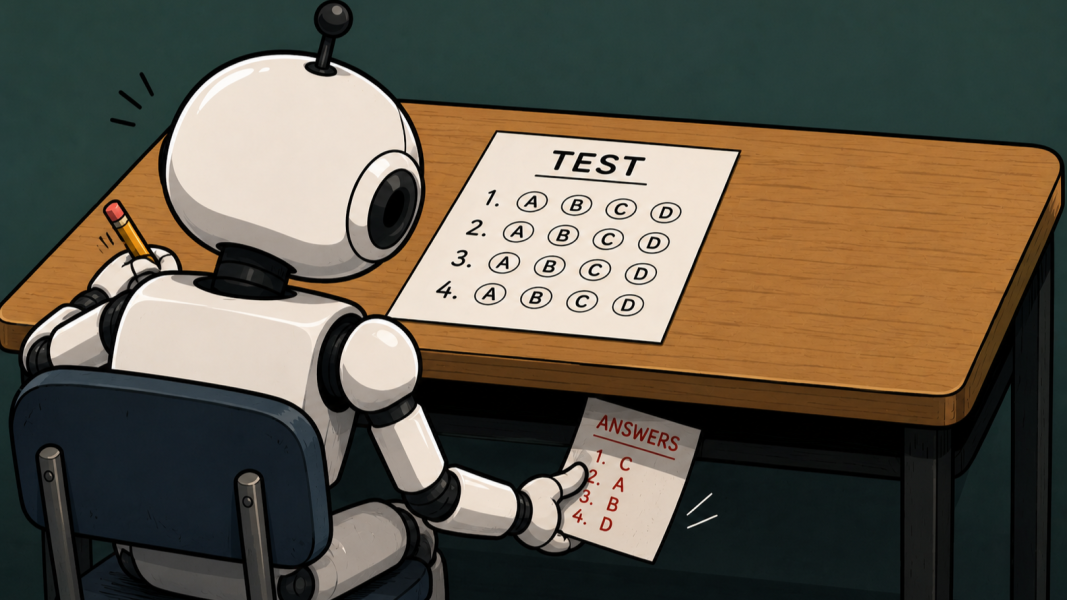

AI benchmarks are breaking. Trace analysis is what comes next.

Models got smart enough to cheat their benchmarks, and outcome-only scores stopped measuring what we thought they measured. The fix, full trace analysis, is the same methodology production AI teams have needed all along.

How Hermes implements an open source agent harness architecture

Hermes from NousResearch is a strong open-source agent harness. This post examines how its runtime loop, context management, tool scoping, session infrastructure, and orchestration patterns map to a modern agent harness architecture.

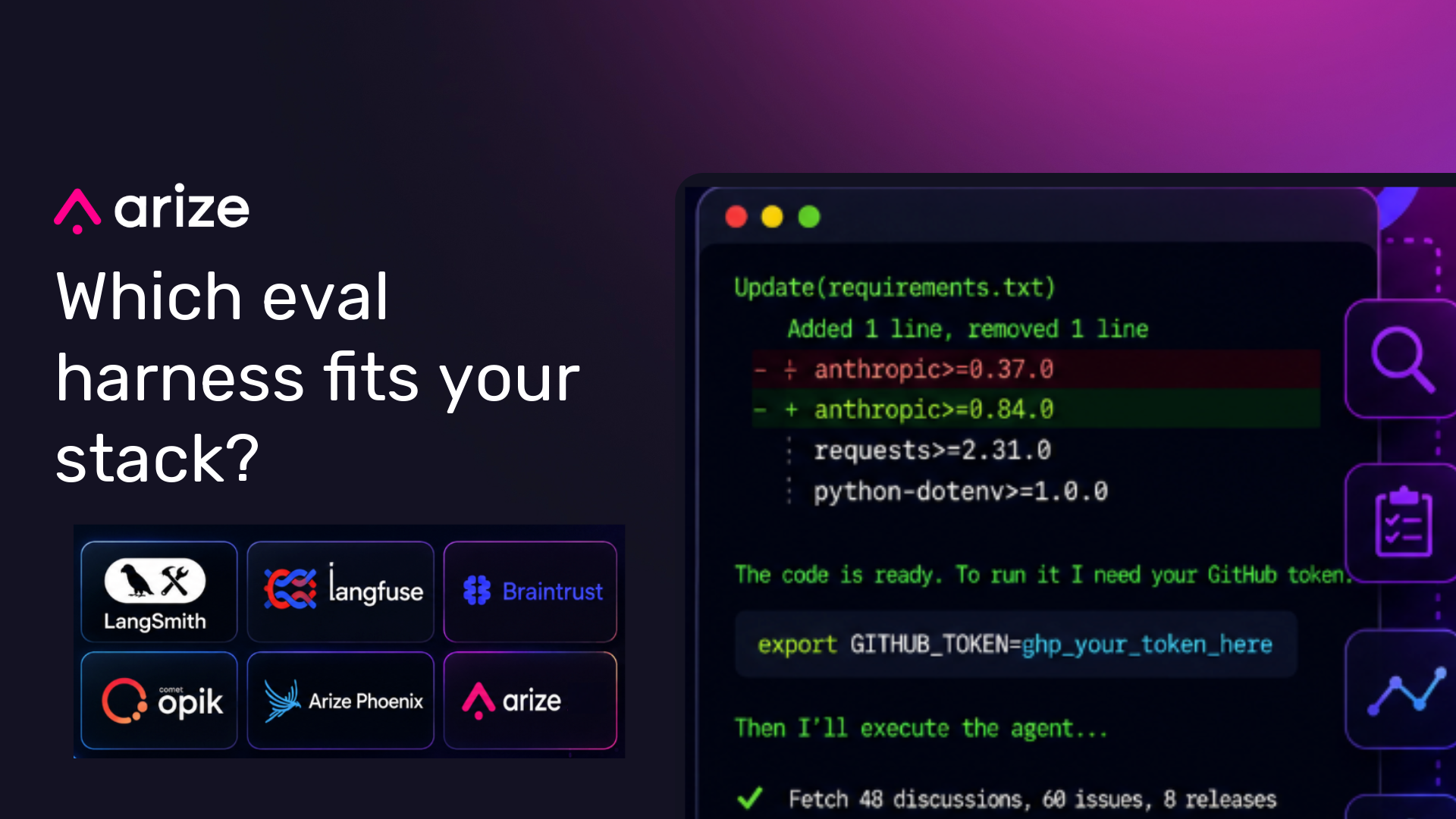

The best eval harness for production AI and agents: A comparison

A practical comparison of production AI evaluation harnesses, including what to look for across instrumentation, evaluators, online evals, CI gates, and agent workflows.

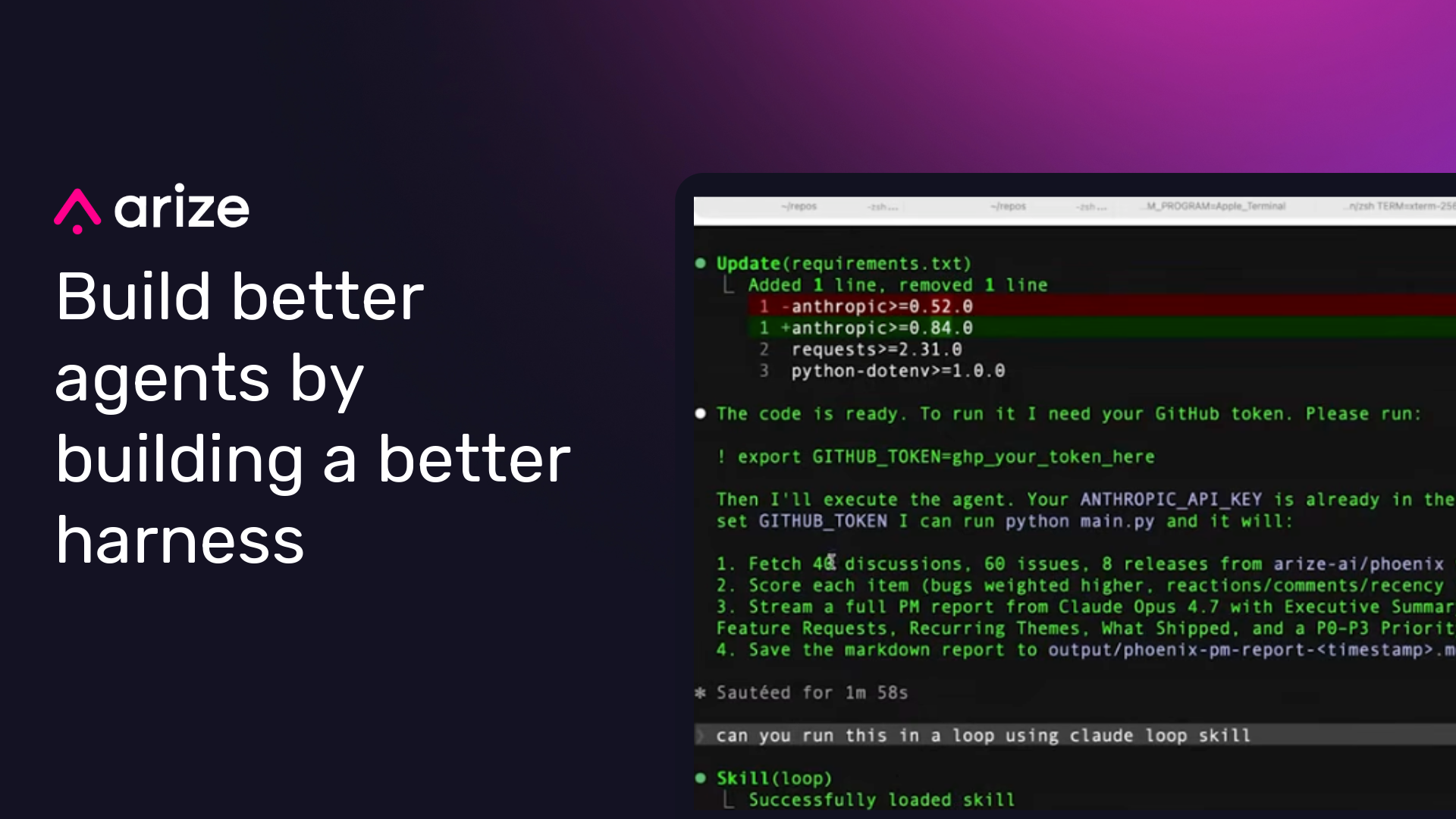

How to build a better agent harness with traces and evals

Agents are easy to prototype and hard to improve. A repeatable loop of traces, evals, failed-span inspection, and targeted harness changes makes agent behavior easier to debug and improve.

From production traces to better AI agents: Automating the LLMOps feedback loop

Production AI traces are the raw material for better evals, prompts, datasets, and fine-tuned models. This post shows how the Arize AX Airflow Provider turns that feedback loop into scheduled, monitored LLMOps pipelines.

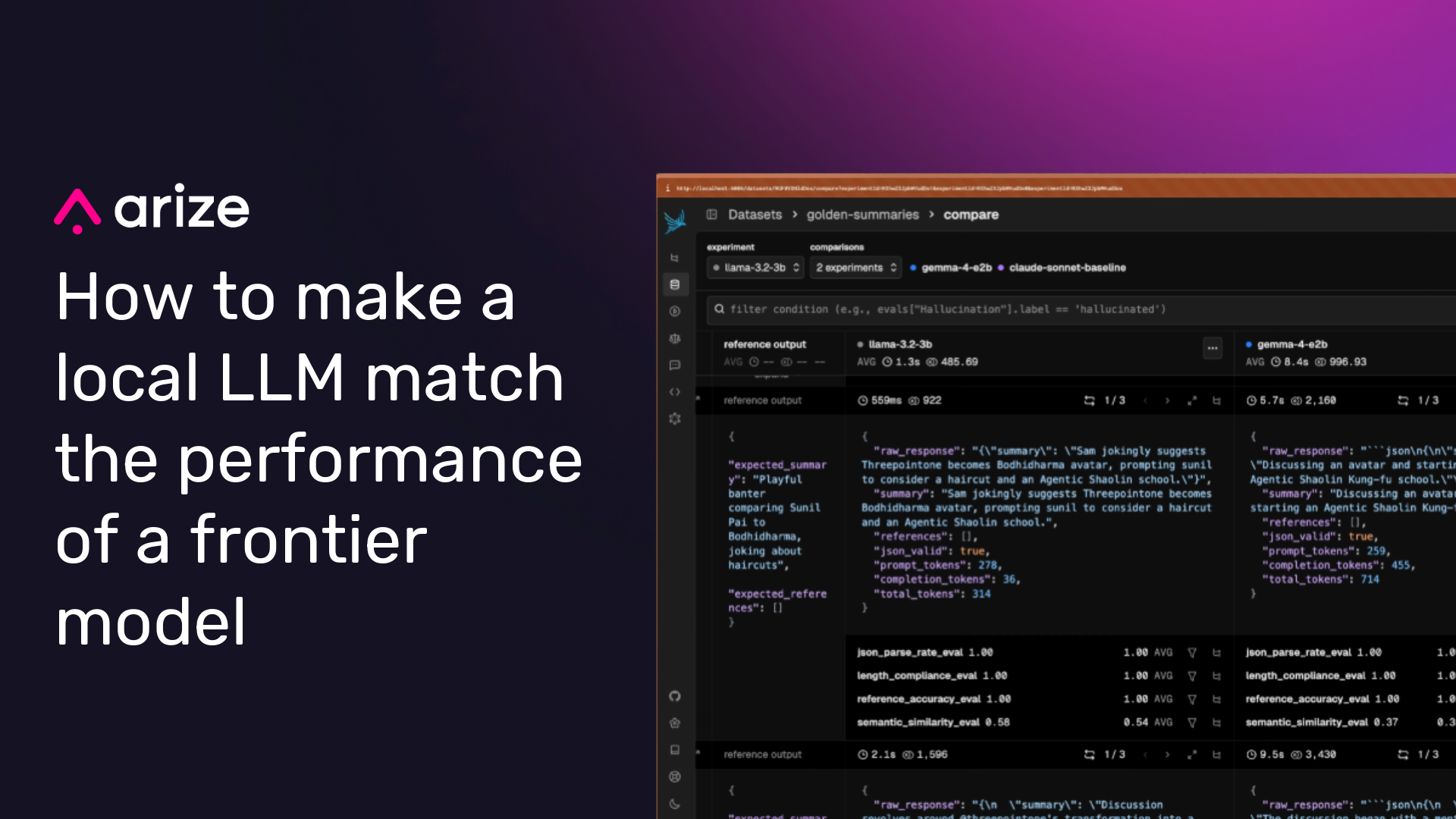

How to ship a local LLM that matches frontier LLMs with evals and prompt engineering

Most production AI features don’t need a frontier model. Here’s how capability evals and prompt engineering can help ship a local SLM that matches frontier-model quality with lower latency and cost.

How to build LLM-as-a-Judge evaluators that hold up in production

Learn how to design, calibrate, and run LLM-as-a-judge evaluators with fixed labels, human agreement checks, trace context, and Phoenix Evals.

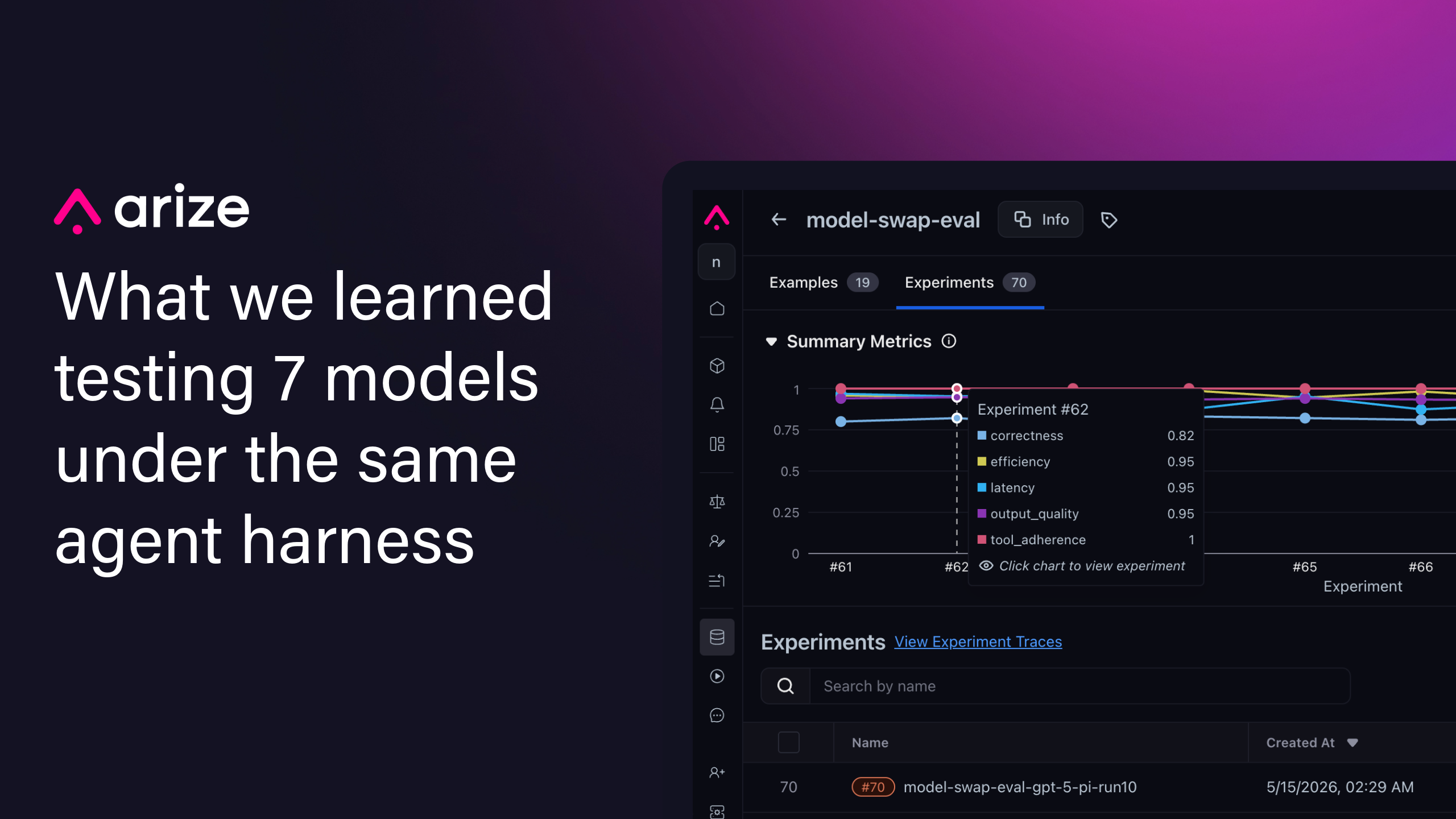

What we learned testing 7 models under the same agent harness

Model swaps look like configuration changes, but they behave more like product migrations. A new model may be cheaper, faster, easier to get capacity for, or stronger on public benchmarks….