Four Crisis-Tested Lessons For Leading Effective ML Teams

Arize AI and Vectice, an AI asset management and documentation platform, recently co-hosted an event featuring the “Voices of ML Leaders.” At the meetup in San Francisco, speakers from Kohl’s, Microsoft, and Vectice discussed best practices for talent acquisition, talent retention, aligning AI initiatives across business units, and building trust between teams.

Miss the action? Here are four top takeaways.

Tying Model Metrics To Business KPIs Upfront Is Paramount

Before joining SymphonyAI earlier this month, Pushpraj Shukla was Partner Director, AI and Machine Learning, Business Apps at Microsoft. There, he led a central machine learning (ML) team of more than 50 data scientists and ML engineers focused on Microsoft Dynamics 365 and Microsoft Power Platform.

Asked how to ensure alignment between ML and product teams, Shukla stressed the importance of doing “a lot of extra work initially in terms of metrics and OKRs. Every individual should know what they are doing and should be moving a metric, and they should also know what kind of business success that metric leads to for their eventual customer. Ideally, those two things should be aligned. For example, if I’m working on a natural language processing model that will lead to better summarization of meetings, then I’m working on a metric that should track what better summarization looks like, and that metric should track how better summarization leads to a reduction in time for the person who has to send the meeting notes after the meeting. If these things are in place, then you always know what success your model leads to and what that success looks like for the eventual customer. You’d better have good processes to set these metrics upfront, and that’s where a lot of effort at the time of planning and setup can help.”

Investing All The Way Through The ML Lifecycle Is Critical To Ensuring AI ROI

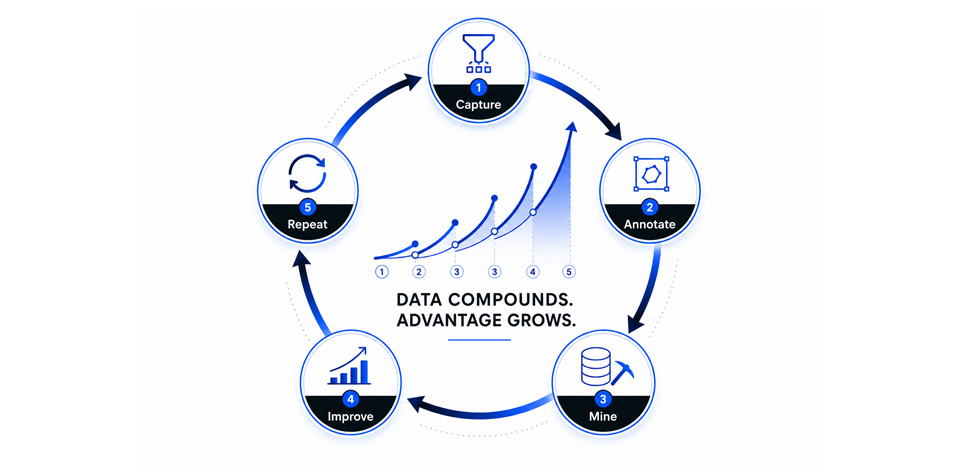

Before becoming Data Science Leader at Vectice, Justin Norman was Vice President of Data Science at Yelp and led machine learning teams at Cloudera and Fitbit. Drawing on that experience, Norman emphasizes the importance of planning for the entire ML lifecycle. “Every ML or data product has a product lifecycle, which means that you need to invest in its design aspects, human-computer interaction (HCI), hypothesis development, and all the things you would do if you were building a software product, and take it all the way through the lifecycle. At some point it’s going to be out there and it will drift; at some point it will fail; and at some point it will need to be retired. All of that requires different resources that are typically not planned for at the beginning of an AI lifecycle, and this is a real problem, because when you’re looking at a machine learning outcome you’ll typically think, ‘I’ll just put two MLEs or two data scientists on it, and we’ll move on.’ This is relevant to the ROI conversation because you’ll never get to ROI if you don’t support it through the lifecycle.”

Threading The Needle With Central ML Can Be Worth It

In an era when many are questioning whether to have a centralized machine learning team or to embed ML teams within product organizations, Microsoft’s Business Apps team is seeing success with a centralized approach that blends product focus with broader technical breakthroughs. The team is organized by “different areas of ML: natural language, conversational intelligence, supply chain machine learning, or data prep and anomaly detection. We are internally divided in a way that helps us make progress technically in these big theme areas, but then we are also aligned with the product groups” through dedicated product managers and processes that ensure business needs are clearly defined, notes Shukla, describing his time at Microsoft. “But we can evolve. The conversational intelligence team that works for customer service leads to models that can be used in sales conversations as well,” he adds.

Assessing Talent Is All About Simulating Real-World Problems

Dr. Ragnar Lesch, Director of Data Science at Kohl’s Department Stores, leads a team that deploys ML models across several use cases at the company. To assess new talent, Lesch’s team recently introduced a take-home assignment for candidates. “We give them a modeling task that we have actually tackled, so it’s a real Kohl’s problem. We create a smaller dataset from it and simply ask: if we need to forecast something about it, for example, what would you do? They get two days, and then we have a conversation. They’re free to show their Jupyter notebook or any code they used, or to present a slide deck, and then a two-person panel simply discusses their way of thinking with them. That’s what we really want to learn: how they think. Not just what kind of models they have built before, but what they do when confronted with a new task. It’s really useful for us to see how much time they spend on the various stages of modeling, whether it be data cleaning, finding the right model form, or evaluating the model. We’ve been doing this for 18 months now and haven’t had a bad hire.”

Conclusion

AI is now being deployed in nearly every industry, informing everything from customer service to cancer care. With enterprise investments in AI forecast to eclipse $200 billion by 2025, the stakes for getting it right are higher than ever, especially in a challenging economic environment. By tying model metrics to business KPIs, funding models through their full lifecycle, structuring teams to balance technical depth with product alignment, and testing candidates on real problems, ML leaders can build a solid foundation for future success.