LIMA: Less Is More for Alignment – Paper Reading and Discussion

Introduction

In this paper reading, we discuss “LIMA: Less Is More for Alignment.” This research delves into the efficiency and effectiveness of large language models, demonstrating the power of pre-training and how minimal fine-tuning can enable high-quality output. We will do a deep-dive into how LIMA outperforms its contemporaries, redefining the existing knowledge paradigms in the field of AI language models.

Join us every Wednesday as we discuss the latest technical papers, covering a range of topics including large language models (LLM), generative models, ChatGPT, and more. This recurring event offers an opportunity to collectively analyze and exchange insights on cutting-edge research in these areas and their broader implications.

Watch

Dive in:

Transcript

Aparna Dhinakaran, Co-Founder and Chief Product Officer of Arize AI: Let’s jump in. We’re going to go through LIMA. LIMA stands for “less is more for alignment.” It’s a paper out of Meta, CMU, USC, Tel Aviv. I think there’s Q&A in the Zoom, but then there’s also Q & A in the Arize community. So drop questions, let’s make this conversational and dive into it.

Jason, maybe we could start off with your big takeaway from the paper?

Jason Lopatecki, Co-Founder and CEO of Arize AI: I think the biggest takeaway is how much you can get with how little fine tuning. There’s a feeling in the community of those who are actually fine tuning these models that you get the base model like a Llama, and then you fine tune to say an Alpaca. All the knowledge is kind of built into these base models, all the ideas of the structures of the world and structures of language, and all the ideas contained in it that make them work are kind of containing these big training runs, which are a little bit of like large training runs plus, you know, putting the data together.

And then these final fine tunes kind of let you express the model, and that knowledge it has in a way that maybe works for people or works well. It says it in a way, or explains it in a way that humans like to interact with. And I think what’s surprising about this is how little you need of that last stage. I think the point here they’re trying to make is that very so like selectively fine-tuning in the end is quite powerful. That was the amazing takeaway I had.

Aparna: There’s one line actually in the paper that I thought was like a great summary. It’s exactly what you’re saying, which is: “We define the superficial alignment hypothesis, the models, knowledge, and capabilities are learned almost entirely during pre training.” So this is kind of the base model, what’s actually there during pre training. This is really where the majority of its capabilities are cemented: “While alignment teaches it, which sub distribution of formats should be used when interacting with users.”

What format should the response look like for what scenarios? When should you respond with what is a little bit more casual? I think there’s a lot of depth into what is sub distribution of formats. But it really did feel like this is kind of the layer of user interaction that that’s really what alignment is focused on. To me this actually got me thinking, you know, I’d love to see alignment versus prompt engineering down the road of you know. How far can you get with prompt engineering versus, how far does alignment? You know? How far can alignment take you? And maybe the gaps are really big today, but that was like a really critical thesis of the entire paper.

Jason: Yeah, I agree, and I think there’s also a subtle point here where you have the right set of fine tuning at the end of alignment, and you probably need to prompt engineer last because there’s a very natural: I ask this, I get this format, I ask this, I get this format. And you’re kind of fine tuning at the end, so I think that’s one of the things with the difference between maybe ChatGPT and the original GPT-3, which is like you had to work a lot to get the right response out. And then it just started responding, much better.

So I think you get the fine tuning right, and you probably need slightly less prompt engineering. But it’s probably very dependent on what you’re trying to get out of it too. So assuming the things in your response and response formats are in the fine tuning set, you’re probably good. If they’re way out of totally different, like I want this crazy JSON format or something, there are probably things that aren’t in there that you’re probably gonna have to work quite a bit for.

Aparna: So I was actually surprised. Really small subset, only a thousand prompted responses in total that they experimented with for this alignment. But they collected this data from different community Q & A. websites: stack exchange, WikiHow, Reddit data set. Maybe try to see if you can pull them up, Jason, that’d be interesting.

And in case you’ve never heard of them, they give you a good little description of what each of them was. My favorite was the Reddit one, which is, it’s a little bit more witty and sarcastic versus informative but basically they collected data from these data sets. And then what was interesting to me was that they really put a lot of effort into making these responses as high quality as possible. So even though there’s a really small subset, you know, only a thousand examples, you really focus on the quality of that thousand examples more than quantity here. Which is maybe a good note to people on the call or thinking about. Well, how do I improve the outputs of LLMs? It’s really about the quality at the end versus quantity.

So what do they do? They spent some time actually diversifying the data beyond just questions and online community sets. They also had you know, a group of generate some prompt response pairs as well, and this kind of just gave them. I think they hinted at here, which is just. This is really laborious, creating a really high quality data that was really tough. This kind of made it feel like labeling might not go away anytime soon by labeling. Still, there’s still some use cases for labeling, even in the LLM world, because just a good takeaway of this.

Anything else you wanted to add there, Jason, on the data set side?

Jason: No, I think I think that’s it. I mean, the other takeaway is–and I’m hearing this a lot from the people building the models and then and the people fine-tuning–is that data quality is starting to matter a lot like thinking about both the data quality which you build your model from in the open source community. You know, internet-scraped data set that is designed for the base model builds. So those would be, you think of those as like the Luther folks use those to build those GPT-Js. But the curation of your fine-tuned data set actually matters a lot. It’s funny, like there’s a dark art of search where you wait up the index based upon this type of action. It’s you know, you build this knowledge of the years. It certainly feels like the base model building. There’s a lot of that dark magic like people say you wait up Wikipedia, for example, versus sources that you know or have really good knowledge. So a little bit of data quality stuff, also matters on the fine tune side, like when you go to fine tune, probably getting that right data set in place makes a lot of sense.

Aparna: I think that’s interesting. So they actually trained LIMA, and started from, in this case, Llama. I think there’s a little bit of a note of just some data quality stuff here, too. So, using an end of turn token. This came up a lot, and some of the paper explained. So that was interesting. So once they actually trained it, they went through a couple of different evaluations of Lima against several other state of the art language models. The results are actually amazing so they compared Lima against baseline Alpaca, DaVinci, Claude, GPT.

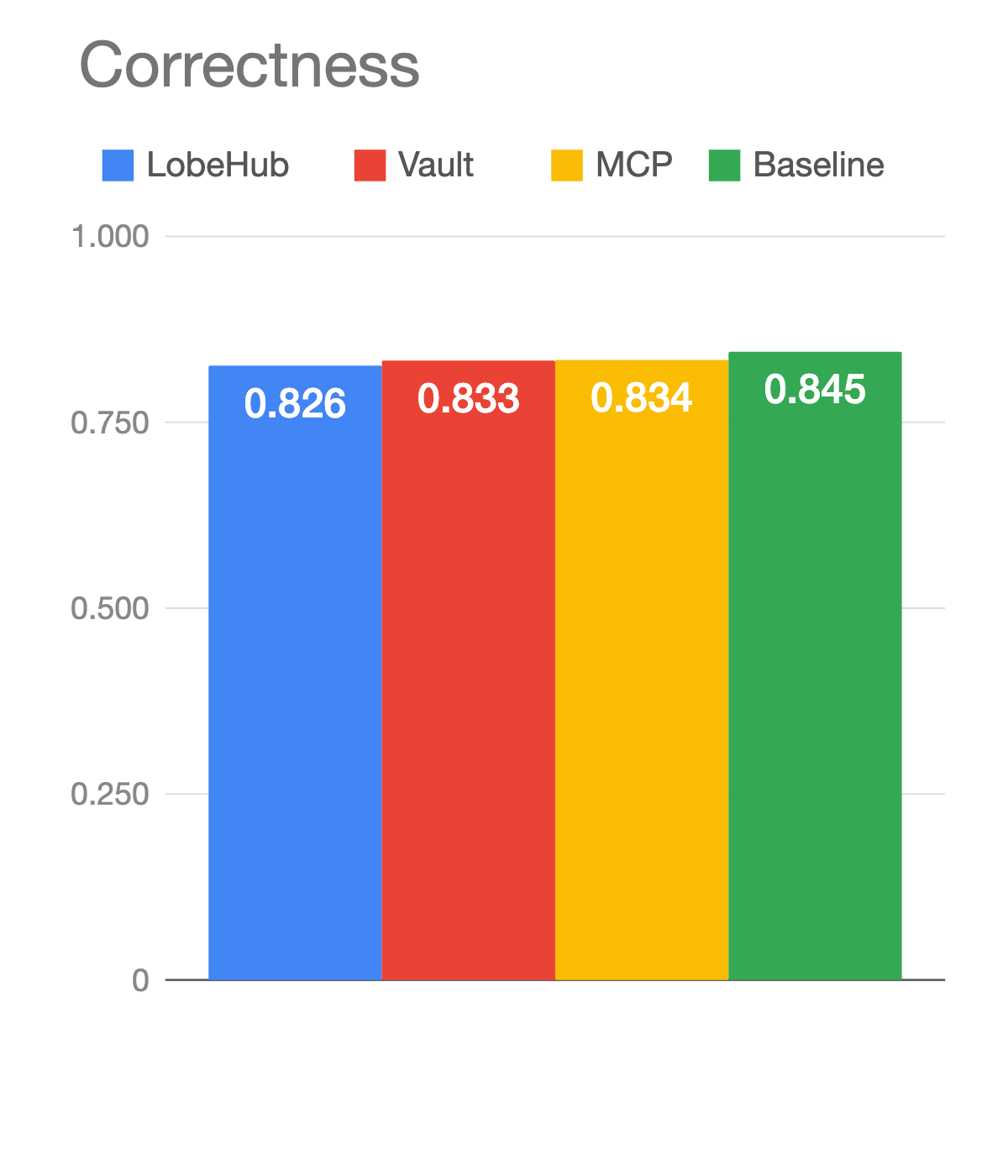

This is one where they basically tested well against what is a human’s preference of a response. We’re on LIMA compared to these other state of the state of their language models. And then they also ran it against GPT-4. And then they kind of compared it and said, okay, well, the results are actually pretty similar. So that was really interesting. They had a human evaluation, and then they also had an LLM evaluation, and in both cases, I think LIMA was most times definitely better than Alpaca, but it still looks like GPT-4 is better than LIMA. So it’s really interesting that in both of these evaluations that was kind of the same ordering of preference.

Jason: Yeah. And I think the Alpaca one is one of their main points too, the Alpaca is like the llama trained on the 50K data set. That was actually, I think generated using GPT or Text da Vinci. And so they generate question and answer examples and different types of prompt response stuff but the fact that just a thousand fine team samples beats the Alpaca is kind of one of the points they’re trying to make, too. ‘

Aparna: It’s a good point. So there’s Alpaca, which is fine tuned on the samples. There’s a daVinci which was tuned with reinforcement learning from human feedback. So it’s kind of a comparison against RLHF. Then they have Bard, Claude which is trained with reinforcement learning from AI feedback and then GPT-4 which is also trained with RLHF. So you’re right, like the Alpaca and the DaVinci are trying to make a point with that. It was better than sample points.

This might be an interesting thing to go through. So it kind of talks about how did they do on out of district, out of distribution samples. So when you ask questions that we’re outside of the training distribution, how did they do? Looks like in general, it had similar absolute performance statistics outside of the training distribution it’s able to generalize well. The one note that I thought was interesting was it’s more likely to produce unsafe responses. So that was one where I think it, you know, small, small little note of safety was maybe one area where it was slightly less than.

And then I think the rest is really just like a discussion on why is less more so. They had all these results okay? Well, here’s kind of how it compares human and GPT for evaluations. And then there’s kind of a conversation of why like? Why only with the samples. Is this doing as good as it’s actually doing. And this was interesting, I think that the the points that they seem to like. I feel like the paper is really highlighting. As input diversity and output quality have the biggest kind of positive effects. So even though it was only a thousand samples. The diversity of the , samples mattered a lot, and then the quality of the responses in those samples really had the the biggest impact compared to Alpaca. And maybe there’s a nudge of like the ones that were generated from GPT. weren’t that high quality versus the output quality of the ones fed here? We’re kind of. They’re a human generated, but seem significantly kind of better.

Jason: I agree. And I think going forward the question is going to be like: How do you create these data sets, and how do you refine them? And how much is enough? I mean, there’s just so many things that I looked at, and it was hard to know. I think, as we get through this at the end I wanted to talk a little bit about how there’s an explosion of this fine tune going on, and there’s whole leaderboards on Hugging Face right now with, like, you know, everyone fine tuning, something they try to get above someone else. So this is kind of like, you know, trying to get hints at like, what? What’s the magic? But I also think, like everyone’s throwing everything at it right now and trying different approaches.

Aparna: One of the things I felt like after reading was is there a score to be able to measure something like an input diversity or an output quality. They kind of had. Okay. Well, in the data set to test the effects of prompt diversity. They had a couple of different data sets, stack exchange data which has heterogeneous prompts with excellent responses.I was actually really curious about that, because I thought a lot of stack exchange is just about, you know, pack encoding. So I didn’t know how heterogenous it was going to get. And then it said WikiHow data which is homogeneous, prompt, with excellent responses. That one kind of threw me.

But it was interesting how they defined it, like what are heterogeneous prompts here? How are they measuring that? Are they measuring like you could kind of read as a human, you could say, Okay, yeah, these are great responses to any way of knowing that. So that when you look at your data set, you have a way of scoring. Is this really good enough data? And maybe that would help with being able to know well, how much data do I really need? Do you need some threshold or some cross where you really start to notice a difference? This is one where I’d love to see a way to measure this.

Jason: Yeah, we’ll show you the leaderboard at the end.

Aparna: This is maybe one of the best parts of the paper, the actual examples. So they tested this on we’ll go through it. So they test this on a single conversation or single step conversation where they asked a question, and then they got an answer. And then they also ran this on multi turn dialogue where they asked a question. And then they did multiple. They asked a question. Then they asked the follow up question. They asked the follow up question. And so let’s maybe go through each one of those.

So in the single turn dialogue. I guess this is some examples of in distribution. You know: My daughter’s super smart finds the kids in her school boring. How can I help her make friends? Pretty great response to this.

And this is one where it’s kind of out of distribution. So: Write a stand-up skit in the style of George Carlin that ridicules PG&E. And so this is one where there’s probably not a lot of training examples on something like this. Write a script that ridicules some electric gas company. And so just an example of two different samples that these were trained on.

Jason: Two things of note is they did do human preference as a like one question is like, Do you look at these? And you’re like, how in the world. Are you going to get to judge, ground truth on this or understand performance? And they did do human preference. But they also use GPT-3 to do evals. So you’re seeing that a lot which is: let’s use an LLM to do at scale evaluation. So I think the major results were human preference. But also there’s a note of GPT-3 evals.

Aparna: The interesting thing here, this is kind of the visual here of comparing the human versus the GPT-4. And the interesting note of this is like: they actually match pretty closely, which is a great note for GPT-4 evals, right? Still the same of what GPT-4 got versus what the human preference one got which is, which is really awesome. And I think in both of them–of course, GPT -4 is still better, but that I mean, it’s close. How good these are like, this is one where I left thinking, wow! Something like annotation labeling. I mean, this is a good data point of where GPT-4 could really be doing those annotations or scoring.

Jason: Agreed.

Aparna: I have a great question here: I’m interested in what factors you think influences input diversity (concepts, language, sentiment, etc.)?

Great question. I had this question to be honest with you, too. This is kind of what they hinted at in the paper. So they have call it the three different data sources, which was WikiHow, Reddit, and then Stack Exchange and then what they called filtered Stack Exchange which is diverse, but does not have quality filters. WikiHow has high quality responses, but all of its prompts are how to questions. Sothat’s kind of what they were getting at earlier when I saying they said stack exchange was heterogeneous. But, Wiki How is homogeneous. I don’t know if it’s like formatting of the data is like maybe one of their biggest things of input diversity. That was kind of what I thought, too when I was reading. I was like Stack Exchange must have a lot about technical subjects. But I didn’t see that in the paper itself. It really seemed more like formatting.

Jason: Yeah, kind of the big note. I think there’s an interesting point here, too, which is like if you’re thinking about the bias angle of the model, like the raw big thing you built on probably contains all the ideas and concepts related to every you know, negative subject you can think of. And then this, this you know, these final stages where they find it. Maybe they do downweight like we don’t know how Meta doesn’t tell us how they built the data for Llama. They give hints at it in papers, but it’s not like you know, exactly. So you don’t know. Did they downweight certain things that were negative?

I just think there’s this final stage here which maybe it doesn’t express the things that it knows and the way you don’t want. But it still might. They still might be in, you know, it’s concepts around those negative subjects still might be in there. so I think there’s a you know, and that’s why they called alignment in the end. You’re trying to make sure the way it’s expressing these things aligns with what you want it to do, even though those subjects probably do exist internally in some way.

Aparna: And there, if you’re curious, this is kind of the set of examples or , examples. Sorry. So this had the set of stack exchange stem, and then they had stack exchange. Other. So I guess they did have a decent half was done. Half was, you’re right. They did say that somewhere above stem exchanges, and then others. This is like English cooking travel and then we sample the questions and answers from each set using a temperature we take the questions at the highest score, we filter answers that are too short. So less than a , characters. If anyone’s ever writing answers on stack exchange and you want it to be used for people’s training data, this is, we need to fall under so answers that are too short. It was less than , characters too long was more than , characters written in the first person. So I, my, were all filtered out, or referenced other answers, and then they removed all these other links, images, etc.

So this is kind of where they ultimately landed. It was like some from stack exchange, some from wiki how And then they did a test on also, they kind of had a group B of people just ask questions as you would ask your friends. And then what would be the response? What would a high quality response have been like? So things like advice? They normally probably wouldn’t put on stack, exchange, or WikiHow So my guess is questions like this one: My daughter is really smart, and she’s finding friends in school. This is probably one that was maybe from more of the friend’s advice questions.

Jason: I thought I’d show some of the leaderboard stuff going on. I don’t know if everyone’s caught this, but there’s this leaderboard now on Hugging Face, so you have Llama, you have Alpaca, and you have a bunch of different models and tons of different fine tune versions of it. So vicuna, there’s a website out there that captures chatGPT statements and you know, everyone was trying to save their chatGPT conversations. Well, why not train Meta’s Llama on ChatGPT prompt and responses? Fine tune it. So there’s like fine tune versions on ChatGPT. Most of these are Llama type fine tunes and what’s meant by that quickly is there’s kind of this base model from Meta Llama and there’s this instruction fine-tune set. And then basically, you create Alpaca by running by fine-tuning this big model. So there’s all these variations. You’ll hear all these names, Alpaca. At the Hugging Face event, there is a llama outside, you know. It’s like, attack of the llamas. And so how you create this, your training so that you know the one we just looked at an email was only a thousand samples. Collecting and creating this 52K is hard for a group on a limited budget. So they actually use, you know, text daVinci and others. to actually create the examples that they use to create Alpacas. So LIMA, which we just went through is a thousand sample version training Llama. Meta actually was the person behind that. But you can see a million versions of different models, and in fine tune versions. And almost all these, I would say, move the vast majority of these are llama-based. It’s worth noting that there’s this leaderboard, that’s out there. So they’re like the SATs for LLMs. There’s a lot of graduate students trying to get the next spot on the leaderboard.

They’re quite diverse in the different types of things, they’ve added. And I think it’s awesome hugging face with this up. It’s like it was kind of a mess before. so it’s kind of interesting to see who pops up, and the fact that fucking hopped up in the last couple of weeks, you know. Okay, it’s worth a paper read, but a lot going on here. It’s also worth noting, I still haven’t seen like GPT is doing something magical on that interface, or like that, there could be like a million black arts going on behind the scenes to make the whole thing work really well that a lot of this kind of self built stuff don’t quite perform up to what we experience in those interfaces. So if you’re just a company trying to run on your own data, these work well.

Aparna: Any questions, comments, any notes you want to share? If not, drop some suggestions for next week’s paper. I was thinking of doing the spring paper or GPT-4 outperforms RL algorithms, that one’s a good one. There’s also the one I think you had sent me, Jason, about agents in GPT-4

Jason: Or Minecraft. That’s a pretty amazing one, that would be a fun one next week, so we’ll figure it out. Put suggestions in and we’ll look through.

Aparna: Looks like we have one last question in the chat. Ethan asked: Do we think the future of RLHF is just 100 data points as opposed to building robust, large, RLHFdata sets for specific cases?

Jason: That’s a great outstanding question. I guess it’s a bigger debate we’re we’re having internally here and across our customer base, which is like it’s the fine tune versus in content, like context, prompt information. So what do you decide to fine tune in? And RLHF is a method of that or what do you decide to? Just how, what, what should you just be putting in the prompt so that the model is responding in the way you want? And I think that’s a debate a lot of companies are having to like. Should I go in fine tune, or can I just get what I want out of like prompt engineering the context. So I think what we’re seeing is, it makes sense to fine tune if all of your customers, if the attribute or thing you want to change across everyone interfacing with the model, you, you know, should you? You want to change it for everyone, so they’ll maybe RLHF, for fine tuning one thing. So maybe it’s slightly better in some area. But then the context is what you use for a lot of like a lot of, you know. a lot of the variability you might get on a daily basis. So I think I think I think there’s a big question, how much do you fine-tune versus how much do you prompt engineer? So that’s like the biggest one. And then I think, if you’re assuming there’s going to be a lot of fine tunes, I could imagine doing them for certain environments or certain responses which I think was the point there. Like if you’re in a certain environment and you want the model to act and respond in a certain way like you can imagine the way mathematicians talk are like different than regular people. And maybe you could find to you in a way that it’s always responding in that way. But the question is, can you get that through the contacts? Could you just prompt engineer your way to that same experience. so I think that’s the biggest debate we’re having internally. Anything else you’d add?

Aparna: Yeah, I mean, I think there is also a lot of conversation going on right now about, especially with Meta’s claim that pre-training is more important than, and fine-tuning. I think that it may not hold true for all tasks, all domains, but something like a language model for specifically like medical diagnosis might benefit more from fine-tuning than something like this example here, where it was just conversations. So I think there’s still a lot to be experimented with. What is the boundaries of fine tuning versus where it’s the boundaries of alignment with the boundaries of really just a whole new language model A. And then, of course, with all this prompt engineering, and in context funding. And I think those lines are very, very blurry right now. There’s kind of a general arc of where you stop seeing marginal returns on each one of these. So you first try the easy approach in context prompting, and then you can go to the harder and harder approaches.

But I’d love to see some of these LLMs being deployed in those use cases like hospitals, for us to see where they landed on it themselves.

Jason: Yeah. So there’s a question on fine tuning here in RLHF, and do we help with that?

So, we’re an observability platform, and we’re typically looking at analyzing results. So if you’re thinking of actually training a model there’s a couple of places I probably would point to you for fine tuning. We actually have a session we’re doing with Anyscale, on Thursday, where we’re doing fine tuning. So, if you’re in San Francisco, that’s a Thursday event.

But Mosaic or Anyscale could be places to go . If you’re a corporation or or company trying to do something somewhat complex, Anyscale is slightly more open source. You know, Mosaic is probably larger but has bigger foundational models. And then if you just want to do something smaller privately, I’ll drop a note. Hugging Face has some good examples–they put out some open source libraries for fine-tuning, and I feel like there’s a lot in that and the write up, and different ways you can do it. But tons of examples out there for fine-tuning, if it’s a small model. And in a notebook that’s kind of where it maybe suggests. First, you know, get it working on a model something you can run in a co-lab and then extend out to something larger size, like Anyscale which will allow you to scale that out or Mosaic.

So, that would be my recommendation. And then, once you’re done, we have tools to help you analyze results.

Aparna: Cool awesome. Well, thanks everyone for being part, see you at the next one.