Lost in the Middle: How Language Models Use Long Contexts Paper Reading

Introduction

This paper examines how well language models utilize longer input contexts. The study focuses on multi-document question answering and key-value retrieval tasks. The researchers find that performance is highest when relevant information is at the beginning or end of the context. Accessing information in the middle of long contexts leads to significant performance degradation. Even explicitly long-context models experience decreased performance as the context length increases. The analysis enhances our understanding and offers new evaluation protocols for future long-context models.

Join us every Wednesday as we discuss the latest technical papers, covering a range of topics including large language models (LLMs), generative models, ChatGPT, and more. This recurring event offers an opportunity to collectively analyze and exchange insights on cutting-edge research in these areas and their broader implications.

Transcript

Sally-Ann DeLucia, ML Solutions Engineer, Arize AI: We’ll give folks a few minutes to join and do some quick introductions, and then we’ll dive into today’s paper, Lost in the Middle. Super excited about this one. I think it’s really groundbreaking, and it’s gonna change the way that we think about large language models and how we actually use context. So I’m super excited to dive into this with you, Amber.

Amber Roberts, ML Growth Lead, Arize AI: Yes, yeah, this is a good one. So we have a good amount of folks. As folks join, there will be a recap. We’re going to go through the paper and then recap and start a lot of discussions with some slides.

Sally-Ann DeLucia: Awesome. Yeah, let’s get into the paper. So this is Lost in the Middle: How Language Models Use Long Contexts. Really just diving into the abstract, I think it gives you a really good idea of what this paper is about. The very first sentence is the key reason why they’re doing this research, and it’s something that machine learning engineers and data scientists really need to pay attention to as they implement these models.

And that’s that, while we’ve had improvements in tech that allow these large language models to take in longer context, there’s really not that much known about how well these models actually use this context, or even how they use it in general. And so this paper really aims to address that. And they found some really key limitations, if you will.

So the first one is that models perform better when the relevant context is at the beginning or the end of the input context. The second is that performance degrades when the relevant context is in the middle, hence the name Lost in the Middle. And the last is that as the input context length increases, the performance of the model decreases. We’ll dive into each of these a little bit. But I think if you’re just asking what this paper is about, those are really the key findings.

Diving in a little bit more, we know that language models perform downstream tasks through prompting. This is important because it means all of the relevant information is being put into the input context, and this is how the model is then going to return a generated text completion.

So there are a few different things that can drive this input context length up. The first is when you’re just dealing with lengthy input domains, something like the legal use case, where having to put in all of these legal or scientific documents adds to the token length. But also, if you’re just doing a QA bot and you’re serving the conversational history to the model every time, that’s going to increase your context length. Search and retrieval is another way that can happen. So as we have more and more of these use cases that increase the input context, it’s important for us to understand exactly how well the models are going to use it.

And so, skipping over here, we already know that transformers scale poorly to long sequences. That’s something to keep in mind as well, because a lot of these language models are built on transformers. If you’re not familiar with those, transformers use what’s called a self-attention mechanism, which basically creates a matrix of relationships between all of your words, or all of your tokens. And so, as the input length increases, the memory and time requirements increase quadratically. So that’s another important complexity to understand with these models.
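To make the quadratic growth concrete, here is a rough sketch (not from the paper) that counts the entries of a single self-attention score matrix. The function name, the 2-byte score size, and the assumption that the full matrix is materialized for one head in one layer are all illustrative choices.

```python
def attention_matrix_cost(num_tokens: int, bytes_per_score: int = 2) -> tuple[int, int]:
    """Return (number of pairwise scores, bytes needed) for ONE head in ONE layer.

    Assumes a naive implementation that stores the full
    num_tokens x num_tokens score matrix (an assumption for illustration).
    """
    num_scores = num_tokens * num_tokens
    return num_scores, num_scores * bytes_per_score


for n in (2_000, 4_000, 16_000):
    scores, size = attention_matrix_cost(n)
    print(f"{n:>6} tokens -> {scores:>12,} scores, {size / 1e6:,.0f} MB")
```

Doubling the number of tokens quadruples the number of scores, which is the scaling behavior described above.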

What does the paper do to actually figure out how well the models are using their context, and where it sometimes fails? Well, they’re testing four models. Two are open source: MPT-30B-Instruct and LongChat-13B. And two are closed source: GPT-3.5 Turbo and Claude. I’m sure everyone here has heard of GPT. But Claude from Anthropic is also rising in popularity, and there are actually some really interesting findings about that model in this paper.

And the two tasks that were done start with multi-doc QA. That’s something that maybe people are familiar with. That’s any time you’re doing something like search and retrieval: you’re gonna have multiple documents that are retrieved, and then the task of the model is to answer a question. The second task is understanding how well the models retrieve from their context. There’s no quick, easy name for it, but that was the goal of that second task.

And so for the multi-doc QA, there were some changes that they made so that they could do this kind of controlled experimentation. The first thing they tested was modifying the position of the relevant information within the context, so changing the order of the documents. The second was adding more documents to the context. Basically, there are k documents that are retrieved, and k minus one of those are distractors. That means there’s only one document that actually has the answer in it. So they’re changing the position of that one, as well as adding in more and more distractors to kind of throw off the model, and seeing how performance changes with that length.
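The two controls described here (where the answer-bearing document sits, and how many distractors surround it) can be sketched as follows. This is a minimal illustration, not the authors’ code: the function names, the `Document [i]` numbering, and the prompt wording are assumptions.

```python
def build_context(gold_doc: str, distractors: list[str], gold_position: int) -> list[str]:
    """Place the one answer-bearing document at gold_position (0-based) among the distractors."""
    if not 0 <= gold_position <= len(distractors):
        raise ValueError("gold_position must be between 0 and len(distractors)")
    docs = list(distractors)  # copy so the caller's list is not modified
    docs.insert(gold_position, gold_doc)
    return docs


def format_prompt(docs: list[str], question: str) -> str:
    """Number the documents and append the question (hypothetical prompt template)."""
    numbered = "\n".join(f"Document [{i + 1}] {doc}" for i, doc in enumerate(docs))
    return f"{numbered}\n\nQuestion: {question}\nAnswer:"


distractors = [f"distractor text {i}" for i in range(9)]  # k - 1 = 9 distractors
for position in (0, 4, 9):  # start, middle, and end of a k = 10 context
    docs = build_context("gold text with the answer", distractors, position)
    prompt = format_prompt(docs, "What is the answer?")
    # An evaluation loop would send `prompt` to the model and score the reply here.
```

Sweeping `position` for a fixed number of documents, and then repeating with more distractors, gives the two axes of the experiment.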

And so what they found was that language model performance is highest when the relevant information is at the very beginning or at the end. And whenever it’s in the middle, we’re seeing that performance degrade. We’re going to get into the visualizations and this will be really clear, but at a high level, that is what they saw when they ran this experiment. And here’s something I thought was really crazy. These are my live annotations you’re seeing on the page here. I read that GPT-3.5’s performance on multi-document question answering can actually be lower than its performance when predicting without any documents, and I thought that was absolutely wild. So I definitely think that’s something to pay attention to. I’ve had the thought that the more context you give these models, the better they’re going to perform, and this is showing me right here that that’s not the case. I’ve hit issues with apps that I’ve been building where I just keep thinking it’s not getting enough context. Well, maybe the problem was actually that I was giving it too much context. So that’s something really interesting to think about.

And then moving on to that second task that they did. That’s really getting at the question of how well the LLMs can retrieve from their input context. They basically set up this experiment where, given a set of JSON-formatted key-value pairs, the model has to return the value associated with a specific key. And similarly to what they did with the multi-document QA, they’re again changing the position as well as adding more key-value pairs. And they saw again that models struggle to retrieve matching content from the middle of the context. So they’ve established with the multi-document QA that this is happening, and now they can see it with this basic retrieval task as well, so they’re really starting to establish a pattern here.
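A synthetic key-value retrieval example along these lines might be built as in the sketch below. Random UUIDs stand in for keys and values so that no natural-language semantics are involved; the function names, the prompt wording, and the substring-match scoring are assumptions for illustration rather than the paper’s exact setup.

```python
import json
import uuid


def make_kv_example(num_pairs: int, target_index: int) -> tuple[str, str, str]:
    """Return (json_text, target_key, target_value) with the target pair at target_index."""
    pairs = [(str(uuid.uuid4()), str(uuid.uuid4())) for _ in range(num_pairs)]
    target_key, target_value = pairs[target_index]
    # dicts keep insertion order, so the serialized JSON preserves each pair's position
    json_text = json.dumps(dict(pairs), indent=1)
    return json_text, target_key, target_value


def is_correct(model_output: str, target_value: str) -> bool:
    """Count a reply as correct if it contains the expected value (assumed scoring rule)."""
    return target_value in model_output


json_text, key, value = make_kv_example(num_pairs=75, target_index=37)
prompt = f'JSON data:\n{json_text}\n\nKey: "{key}"\nCorresponding value:'
```

Varying `target_index` moves the queried pair through the context, and varying `num_pairs` changes the context length.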

And they did some additional tests that I think are just worth mentioning. I don’t think they’re the main point of this. So they investigated the architecture, which I do think is important: how does the underlying architecture of these models really play into this? And they found that encoder-decoder models are relatively robust, but when you go above their trained token maximum, they start to struggle, and we see that U-shaped performance, which we’ll see in a little while.

They also tried what’s called query-aware contextualization, which means they place the query both before and after the documents or key-value pairs, and that did drastically improve performance on the key-value task. But when they tried that same method with the multi-document QA, they didn’t find much of an improvement. So it doesn’t seem too promising to me as a solution there.
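Query-aware contextualization only changes the prompt layout, which a short sketch can show. The function names and the `Question:` wording are hypothetical; only the idea of repeating the query before and after the context comes from the discussion above.

```python
def plain_prompt(documents: list[str], query: str) -> str:
    """Standard layout: documents first, query only at the end."""
    body = "\n".join(documents)
    return f"{body}\n\nQuestion: {query}"


def query_aware_prompt(documents: list[str], query: str) -> str:
    """Query-aware layout: the same query appears before and after the documents."""
    body = "\n".join(documents)
    return f"Question: {query}\n\n{body}\n\nQuestion: {query}"
```

With a decoder-only model, the leading copy of the query means every document token is processed with the question already in view.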

And then they were even able to look at base language models, meaning models without instruction fine-tuning. For all of the models so far, instruction fine-tuning was done, and that’s what created these instruct models that you’ll see all over the paper. But look: comparing those to the base models, we’re still seeing the U-shaped performance curve. So that’s something interesting to note. And I think the last thing I want to cover on this page is this right here, which I think is super important for anybody who is considering building with LLMs.

With these retriever-reader models on open-domain question answering, there’s gonna be this trade-off: adding more information can help, but it also increases how much content the model has to reason over. So this trade-off, I almost want to say, is like the new complexity-bias trade-off that exists in machine learning. It’s like the new one. It’s like, okay, is it too much information or not enough, and where do you land in the middle?

So that’s kind of an overview of the experiments that they did. A lot of the information that’s in the introduction is repeated in more detail in the experimental setup, but I think we’ve hit all the high points. What I really want to get to now is the results. So I have the models and token lengths here for you all to see, just in case you’re curious what the token lengths for each of these are. You’ll see that there are different variations of Claude and GPT-3.5. The additional ones are the extended versions, meaning they took the advances in tech and extended the context window. As we’ll see here, that’s not actually that helpful.

So, looking at this first chart here, what this shows is that they retrieve a total of 10 documents, and these points are where the document that had the answer was placed. And you can see this performance degrading and kind of creating this U shape. It’s more clear with 20 and 30 documents, because we can really see the U happen, but it’s a really interesting pattern that they’re seeing here. And so again, we’re seeing that when the total number of documents that we’re retrieving increases, our accuracy goes down, and we’re really just seeing this U. That means that when the answer is in the middle of all this context, it’s not really helping the LLM. It’s not performing well, and it’s not able to see the answer that’s in that document.

And this is the pattern that we’ll see again and again. So that was for the multi-document accuracy. And now, coming down here to another chart, this is just looking at the average across positions, so just averaging that out and looking only at the number of documents, and we can really see that decline quite clearly here. It’s a little bit tricky to see in the three graphs, but when we average it out, it’s really, really clear.

And moving on to the JSON task. Oh, here’s something I really want to mention. When they did the JSON task, they noticed that Claude performed nearly perfectly on all the input lengths, while the other models struggled. So to me, that’s maybe an indicator that Claude’s gonna be a potential front-runner. We’ve heard a lot about OpenAI, and maybe Anthropic is going to come in here and sweep us off with Claude. So that’s something interesting that I saw as well in this paper.

But let’s get to it. Here it is. These are similar graphs to what we were seeing before for the multi-doc QA, but now we’re doing the JSON pairs. Similar setup, as you can see: 75, 140, and 300 key-value pairs. The color choices are a little bit tricky, but right across the top, that straight line is going to be your Claude models, and you can see that they’re performing nearly perfectly, while the other models are struggling a little bit. So we have LongChat as well as MPT-30B, and you can see again that the performance is struggling. It’s a little less of that U shape that we saw, but it’s still interesting to see that in the middle area they’re still having some struggles with retrieval.

I think we can cover this one, and then maybe we’ll go over to the slides, Amber. The one thing here that was interesting to me is that we’re looking at the two different types of model architecture. Because the open source models they chose were both decoder-only models, they decided to bring in these encoder-decoder models, the Flan variations. And you can see that in the first graph, they’re quite stable when testing the retrieval. And it’s interesting, because once we move on to 20 and 30 documents, which is beyond their context length maximum, you can see that they too go to this U-shaped thing. So they’re speculating that, because of the way the encoder-decoder models work, the fact that they have bidirectional encoding, so they’re getting the context both forward and backward, might be helping them.

But it’s interesting how it doesn’t really hold up when we expand that context window. So I’m interested to see more work that’s gonna come out of this. I think we’ll see a lot of work looking into the architecture now that we have this insight into how these perform, and I think scientists are going to dive into the why behind all of this, so really exciting stuff. But again, we’re gonna cover all this. So if you missed something, don’t worry, we’re gonna dive into it a little deeper right now.

Amber Roberts: Awesome. Thanks, Sally-Ann. And yeah, so we wanted to start with an overview showing the physical paper, and we’re all very grateful to Sally-Ann for her handwriting, because no one would be able to read mine. That was an excellent overview. And so we’re just going to briefly summarize the main points, because we really want the rest of the time to be focused on the discussion and these outcomes.

So just summarizing the experimental controls, like what they were able to control: first, the number of documents retrieved in the input, so the total number of documents. And then the actual position of the document with the answer, so where that document sits relative to the other retrieved documents.

And the way that this looks in terms of control: when we say the relative position, if we look at three documents, and the desired answer is in the second document, this is where we would say the total number of retrieved documents is three, with the important information at two, or the second position here.

And what Sally-Ann and I were mentioning is that it’s such a straightforward thing. I think everyone reading this paper feels that it’s kind of obvious: why weren’t we doing this? You can just play around with the positions and actually see how things are doing. As Sally-Ann mentioned, we want to get as much information in as possible, so we’re maxing out the number of tokens we can put in. And so it’s about just knowing: is it worth it? And we’re really glad this paper came out, because it’s making us reevaluate where we’re spending our time and priorities. So we’re going to get into some of the key findings here, including the main key finding that a lot of people are focusing on. But you’ll see Sally-Ann and I are going to be focusing on a few other, less talked about concepts that this paper really showed.

But obviously model performance is highest when the relevant information occurs at the beginning or, you know, AI is a little strange, at the end of the input context.

And then model performance keeps decreasing as that input context grows longer, and obviously the middle is where we’re seeing the most underperformance.

But a focus for us is that sometimes these extended models are not necessarily better. So thanks, Sally-Ann, for putting up the number of tokens that each one of these models uses. Because, you know, in AI, bigger is better: more information is better, more data, more training data. Obviously, things are going to be better with more training data. Sometimes we talk about that not being the case with LLMs. But Sally-Ann, would you say this is the first thing that kind of proved that maybe more data and more training isn’t always going to give you the better solution?

Sally-Ann DeLucia: Absolutely. I think the conversation around these LLMs, and training them over your docs and things like that, was that you want to get the most relevant context that you can. You know, alter that K, get more documents, things like that. And now we’re seeing otherwise, even going back to that key finding where the GPT model performed better closed-book than it did open-book when they were adding more context to it. So that’s really loud to me, and something that I’m definitely taking in. After reading this paper, it changed the way I thought about building these systems completely. There were even a few projects I went back to because it was like: finally, I have the key on how to fix this and improve my performance. So it’s really cool to see. And it’s a new secret for all of us MLEs and data scientists working with these models.

Amber Roberts: Absolutely. The majority of the time we have left is going to be focused on the impacts. You know, Sally-Ann, as someone who’s diving in and helping out a lot of customers, it’s obviously important for you to see how these impacts can affect customers, and even your day-to-day in the experiments you’re working on. And even though this table is at the very back of the paper and barely mentioned, I thought this was just absolutely crazy. The increase in performance, I mean, a 50% increase in accuracy going from closed-book to oracle. And just so folks are aware: in the closed-book setting, you are not given any input context, and you rely on your parametric memory to generate the correct answer. In the oracle setting, you are given a single document that contains the answer, and you need to use it to answer the question. So by giving it this answer, that is an enormous amount of improvement, and it’s an enormous amount of improvement for every single one of the models. Sally-Ann, what were your thoughts on this? What were your thoughts on what this could be applied to?

Sally-Ann DeLucia: Yeah, I think this is like the base or the foundation for a lot of these context retrieval use cases, because we saw that with the base models, you can ask it your question for your specific use case, and it’s probably not going to give a great answer. It’s going to hallucinate, something weird like that. And we’ve seen that where we can pinpoint the piece of context that’s needed and feed that to the LLM along with the question, we get much better performance. So I think that this is really important. But it’s also, again, okay, it’s just one document, but we have to figure out which one document, which is a little bit tricky to do in a production setting. So it’s definitely something to think about. But my highlight here was that we definitely want to give it some context, and what’s really key is giving it the right context.
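The closed-book and oracle settings discussed here differ only in whether the single answer-bearing document is included in the prompt. A minimal sketch, with a hypothetical function name and prompt wording:

```python
from typing import Optional


def qa_prompt(question: str, document: Optional[str] = None) -> str:
    """Closed-book when document is None; oracle when the one answer-bearing document is given."""
    if document is None:
        # Closed-book: the model must rely on what it learned during training.
        return f"Question: {question}\nAnswer:"
    # Oracle: exactly one document, and it contains the answer.
    return f"Document [1] {document}\n\nQuestion: {question}\nAnswer:"
```

Comparing accuracy between the two prompt variants on the same questions gives the closed-book versus oracle gap the speakers refer to.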

Amber Roberts: Would you say that LLM observability or LLMOps can be used to leverage this information? Like, maybe there’s a data point, maybe there’s a cluster that we’re seeing in production where we don’t have enough information in training. Or, you know, we’re looking through our own documentation, we want a chatbot around that, and people are asking questions. Where would LLM observability kind of fit in here?

Sally-Ann DeLucia: Yeah, I think it fits perfectly in here. If you have an observability tool, or if you’re playing around with our open source Phoenix, this is a really great place for it, because you can see the context that’s being retrieved to answer the question. You can get a better, fuller picture of what’s happening, because you’re able to see the context when you’re using tools like that. And you can see: is it the right context? Again, we are trying to get probably one document, or the least amount of information with the right answer in it. So you can see how well your actual retrieval is going, because that’s going to be key, along with making sure you’re not giving it too much context. Then you can always overlay that with the questions. You can maybe isolate one area that the context is not being retrieved properly for, and then fine-tune just for that specific area.

So I think observability is going to be key to this, because otherwise you’re just going to be guessing, honestly, right? If you don’t have any way to see how these issues are happening, well, knowing that an issue is an issue is one thing, but being able to root-cause it is really where observability comes in. And that’s what’s going to be key for improving these systems and making sure you’re not saturating the LLM and creating a whole bunch of performance issues that you thought you were going to avoid by adding the context.
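One simple check in that spirit is to record, per query, where the relevant document landed among the retrieved ones. The sketch below is generic Python and does not use any particular observability tool’s API; the function names and the idea of flagging mid-context hits are illustrative assumptions.

```python
from typing import Optional, Sequence


def first_relevant_rank(retrieved_ids: Sequence[str], relevant_ids: set[str]) -> Optional[int]:
    """Return the 1-based rank of the first relevant document, or None if none was retrieved."""
    for rank, doc_id in enumerate(retrieved_ids, start=1):
        if doc_id in relevant_ids:
            return rank
    return None


def is_mid_context(rank: int, total: int) -> bool:
    """Flag hits that fall strictly between the first and last retrieved slots."""
    return 1 < rank < total
```

Queries where the rank is `None` point to a retrieval miss, while queries flagged as mid-context are candidates for reordering or for retrieving fewer documents.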

Amber Roberts: And it’s probably also good news for machine learning engineers and data scientists who now realize, oh, all I need is one thing that has relevant information, and then I’ll get a huge jump in performance. Rather than, oh, I have to create all these materials and cover every possible edge case. And I think it is nice for machine learning engineers to be like, okay, so I need relevant information, but I don’t need a ton of it.

Sally-Ann DeLucia: And I think that’s gonna be something. I’d be surprised if the light bulb didn’t go off for every ML engineer who has read this paper, like, oh, this is a little bit of a secret to getting these models to perform. This is one of the first papers I’ve seen come out and say, and we’ll get to it a little bit, this is how you improve and optimize. This is the secret, and we haven’t really seen that before. So I think a lot of MLEs are going to be excited about this.

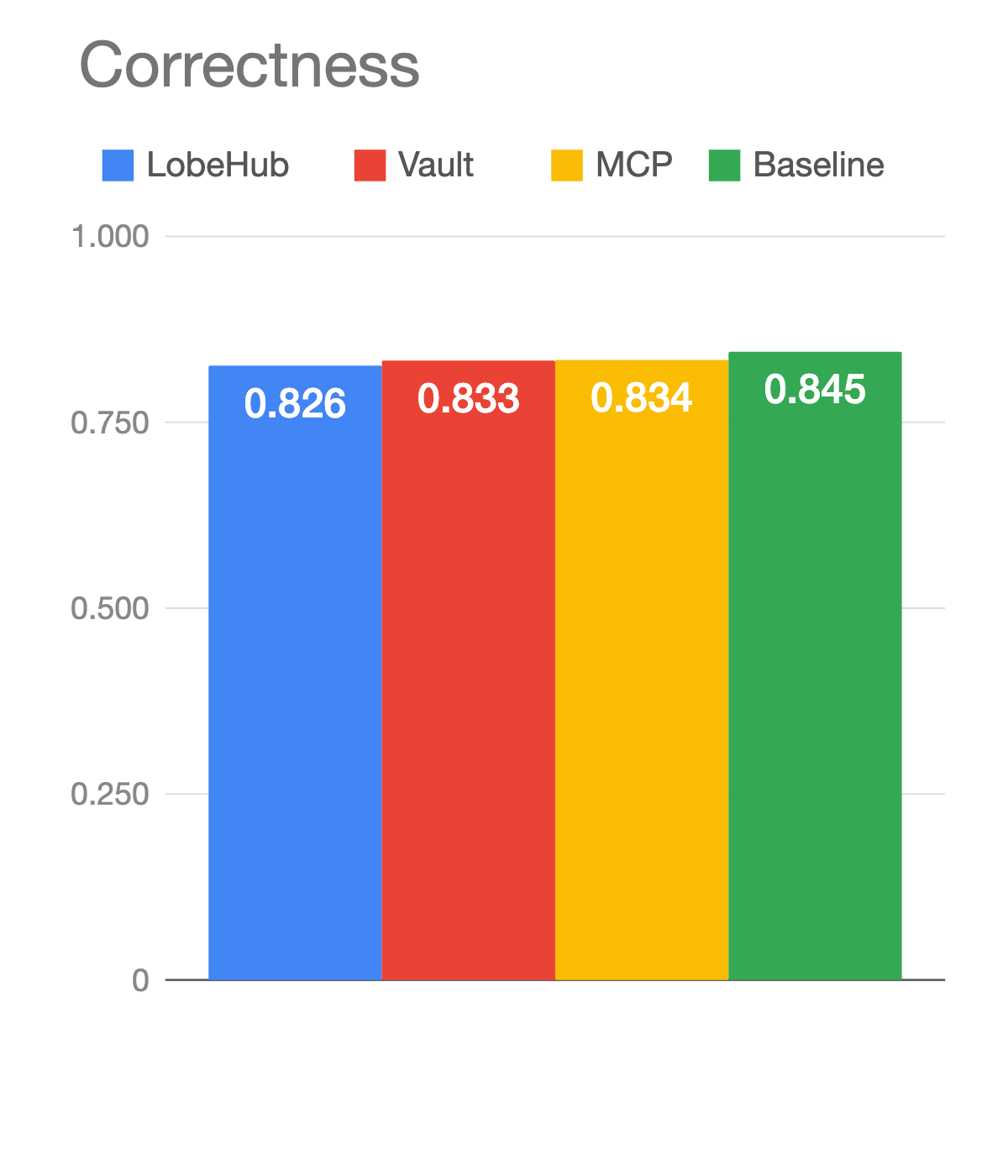

Amber Roberts: Yes, yeah. A lot of the results in this paper actually make things easier to understand. They abstract away a lot of the “what about this?” and just really show you what was right in front of us that we didn’t even realize. And I’m sure folks in the chat are like, well, Sally-Ann’s been saying ChatGPT and GPT-4, so where is it? What’s going on? What can we see from it? And this is also kind of at the back of the paper, but it’s quite an increase in performance. I’ll tell everyone to just be aware that this is a 500-question sample. But some people will see this graph and be like, oh, I should just use GPT-4, it’s 10% better than the next one, which is GPT-3.5, so GPT-4 is the best model to use. What are your thoughts on this?

Sally-Ann DeLucia: Yeah, I myself have looked at similar visualizations, maybe not in this exact context, and been like, oh yeah, GPT-4 is the best one, and I’ve used it and then been a little bit disappointed. So I’m careful about taking this visualization at face value, because I don’t know what’s in that 500-question sample. I think we know that the performance is better, specifically compared to 3.5. There’s a lot more that I’d like to see done with GPT-4, right? They used 3.5 in this paper, and I would love to see how GPT-4 holds up across all those experiments, both by itself and compared to the others. It’s kind of hard to get a lot of information, but we can start to hypothesize. Maybe it has to do with the architecture, the way that this model is trained, the parameters, all those things that make it different from 3.5, but it’s hard to say. I wouldn’t just at face value choose GPT-4 based only on this visualization, but it is impressive at the same time that it has such a higher performance than the other ones.

Amber Roberts: I was gonna say, it is a little suspicious because, you know, there are certain models that we get a bit more insight into. There’s not too much open source for these LLMs, and that’s why we have so many workarounds for how we can use them. And if anyone in the audience has ideas, too, about why it’s such a jump here, like what could be factoring into that, we’d love to hear them. But what’s a little suspicious to you?

Sally-Ann DeLucia: The thing is, and I’m sure everybody in the community probably feels this way, ever since it came out that, you know, it’s not a monolithic model, that it’s actually a series of smaller models working together, I’ve been a little suspicious. And after reading this paper and seeing how the architecture plays in, it’s kind of making me want to probe more, like, what is the underlying mechanism of this model? What is the secret to it performing this well? And I really think that this has to do with the way that the architecture is set up. I think those smaller models matter, especially looking at how different the architecture is from 3.5, and we can see it here. And the other models here have similar architectures to each other, too. I’m wondering if that’s the secret. We were all blown away when that was leaked, and now I’m starting to be more and more suspicious of what else is going on there. Could that be the cause of this result? I’m really, really hoping we see more people experiment with this model.

Amber Roberts: Yes, I’d love to see experiments aimed at this. Maybe that could be our next paper reading. And so you mentioned when going through the paper, does this mean Claude is victorious? It was pretty stable. It was also the king of key-value pairs. Even when it went head to head over here and the authors were trying to break it, it still maintained its performance. Do you think it’s due to the constitutional AI approach?

But what are your thoughts as Claude is coming out as the leader? I mean, we did just go from one graph saying, oh, GPT-4 is the clear leader, to another saying, oh, Claude is the clear leader! So what do you think?

Sally-Ann DeLucia: I gotta say, when I saw this I was a lot more convinced that Claude might be the front-runner than I was for GPT-4 when I saw the other visualization. I think this is super interesting. It’s hard to say what this really means for Claude. I know for me personally it means I’m gonna start playing around with Claude a little bit more than I have been. But it’s interesting to see that it’s able to grab the key-value pairs in this task, and we’re not seeing the same thing when they experiment with the multi-document QA. So it’s interesting. For a little more context, the purpose of doing the key-value pairs was really to strip the natural language, the semantics and everything, out of the task to make it a very simple retrieval experiment. So it seems like when you really strip everything down, Claude is clearly the best at retrieving things. But it seems like maybe Claude gets a little bit more confused when you actually start passing it real natural language. So I think it’s interesting. I think, again, there should be more done, and I’d like to see more variations of this, because there are a lot more variables that we could tweak here to understand how this works a little bit more. But I think the takeaway for me is the retrieval.

Amber Roberts: I guess it also depends: are you using language, or are you using numbers? Because it seems like Claude just makes a decision and stays with it, where other models can be a little bit more capricious and kind of change their mind when they’re getting more and more information, which to me shows, in terms of bias, that Claude is less biased here.

Sally-Ann DeLucia: Yeah. And it’s really interesting. I was actually a little surprised when I was first reading this section of the paper. When it introduces this task, I thought every model was going to be able to do well, because they stripped it of all the semantics and the natural language. I thought, okay, that’s what a machine learning model should be able to do, right? It should easily be able to identify keys and give you the value. And so I was almost as surprised that Claude performed perfectly as I was that the other models didn’t. I would have expected some of the other models to be the front-runner there. But it’s interesting. I would probably not want to choose these other models for retrieval just based off of this chart here. I’d be thinking more like, okay, I have a retrieval task, I’m probably going to reach for Claude before I reach for something else.

Amber Roberts: Yeah. And what’s interesting here, too, is that, I mean, 16,000 tokens is a lot. But if you’re dealing with a database, you’re not clustering like terms, and there’s not that same context. It’s not like reading all these documents. This might not even be that much memory, or that much space, for just key-value pairs, and that’s kind of what concerned me about it.

Sally-Ann DeLucia: It’s really not that many tokens. I’ve been there, where you think, oh, this is fine, and then you get the error that says you’re exceeding the token limit. So the tokens do run up on you fast. It’s really not that many, or before I read this paper I would have considered it not too many. Now I know this is way too many, but it’s really not that much. I mean, if you look at the extended version of Claude, it has a max of a hundred thousand tokens. In this graph here I know that Claude’s performing perfectly, but for these models that have the capacity for higher context lengths, it’s not really doing them any favors, and I think this experiment really highlights that. We’re seeing here that LongChat has over 16,000. Claude is the highest one with 100,000. But with these extended versions that have the tech and say, hey, you can shove more in there, well, obviously, it’s not doing you any favors to do that. I think that’s another key thing that’s highlighted here.

Amber Roberts: Yeah, that’s a good point. I’d like to see. Just Claude versus Claude and go to a hundred thousand tokens because… I actually have no idea. This paper has taken away a lot of what I think. It would be very interesting to see. And I would like to see this one with Claude, too. I can’t even make assumptions like, oh, it should be here. It should be there. Oh, yeah, we’re dealing with key value pairs, machines should be able to handle that because we’re seeing. No, they can’t. And when people ask me like: Oh, you work in AI, is AI going to take over the world? I’ll show them a graph like this, and just say: I don’t think we’re there yet.

Sally-Ann DeLucia: That is the same thing that I do. It’s funny, because as practitioners in the space, while everybody else is so worried about them, we’re just trying to get the models to retrieve the context we want.

Amber Roberts: We’re trying to get them to retrieve the context that we already gave them. You have this, right? That’s funny. Yeah. And so, just getting into some of the other parts with the large language models: you know the saying, it’s better to beg forgiveness than ask permission? I think with these models it’s better to ask permission.

I just found this very interesting, because of the way you’re doing the prompt engineering: you set up so many things first, before you try to get it to give you something back. And just adding this query-aware contextualization, so placing the question, essentially the thing you’re after, before and after the documents, really helps with performance. I mean, the real value is putting it before, but it does help having it after. We can get into that, maybe it goes up a little bit, probably with one of the next slides. But essentially that’s the second bullet point. And I think you also commented on that: perfect performance when evaluated on 300 key-value pairs with query-aware contextualization. So, additional thoughts on this? Does this change the way we create prompts and prompt templates? What are your thoughts here?

Sally-Ann DeLucia: Yeah, I think the first thing about this test is that it’s important to be aware that the reason they decided to go through this task was the findings they had from the architecture experimentation. Their hypothesis is essentially that encoder-decoder models have this bidirectional encoder, which means they can understand context based on both the preceding and the future tokens. So the hypothesis really is that this allows them to better estimate the relative importance between documents. They’re building on that intuition to try to give models that aren’t encoder-decoder models this same awareness by putting the query at the front and at the end. And I think it does show, with the perfect performance across the models. But as soon as we change this from, you know, key-value retrieval to multi-doc, we see that it doesn’t work. So I think there’s still more that needs to be done in terms of investigating the architecture and how that plays a role. I think the more we understand how these models are really using the context, the better these strategies are going to become. I don’t personally think putting it at the beginning and the end is the answer (the paper hints at what the answer is), but I think it’s interesting in some ways. It does kind of support their hypothesis that that back and forth, the bidirectional understanding, could be really key to making these models have better awareness.
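Editor’s note: the query-aware contextualization described above, placing the question both before and after the documents, can be sketched as a small prompt template. The function names, the instruction text, and the `Document [i]` formatting below are assumptions for illustration and are not taken from the paper’s code.

```python
from typing import List


def format_documents(documents: List[str]) -> str:
    """Number each document so the model can tell them apart."""
    return "\n\n".join(
        f"Document [{i}] {doc}" for i, doc in enumerate(documents, start=1)
    )


def build_prompt(question: str, documents: List[str], query_aware: bool = True) -> str:
    """Build a QA prompt; with query_aware=True the question appears twice,
    once before the documents and once after them."""
    parts = ["Answer the question using only the provided documents."]
    if query_aware:
        # Stating the question up front lets a left-to-right (decoder-only)
        # model read the documents already knowing what to look for.
        parts.append(f"Question: {question}")
    parts.append(format_documents(documents))
    parts.append(f"Question: {question}\nAnswer:")
    return "\n\n".join(parts)
```

Comparing model answers with `query_aware=True` and `query_aware=False` on the same documents is one simple way to check whether this helps for a given use case.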

Amber Roberts: And since you touched upon what might be the solution, what might be the answer, because they’ve also tested encoder-decoder models, and they’re testing this idea of putting the query right at the beginning: what could be a potential outcome or a solution to the problems we’re seeing here?

Sally-Ann DeLucia: So the paper talks about their promising directions for improving models. That’s essentially pushing the relevant context to the start, or returning fewer docs. Those are the two takeaways. If you’re listening to this and you want to know how to fix your context retrieval use case, this paper says: push the relevant info to the top and/or return fewer documents. That’s the key. But in terms of what we’re really going to see build off of this, I’m concerned about the transformer architecture. I think we might see the transformer go away, which is so wild to me, because just a few years ago, transformers unlocked all this capability, right? And now we’re seeing more and more research into changing architectures and using different types of attention mechanisms. So I think we’ll see architecture shifts. I also think we’ll see more and more research, as in the related work section of this paper; there’s a ton of research going on into how we understand context. And the more we understand, the more we’ll know how to inject the right context in the right location. So it’s interesting to see. I think putting the information at the top seems like the most promising piece of information. Getting fewer docs is going to be tricky, because again, we don’t know. I think that’s where these observability tools are going to be really helpful: as we experiment and see how our retrieval is doing, we might be able to return fewer. But I think the little golden nugget here is, put your relevant info at the top. I think that’s going to be what this architecture responds to.
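Editor’s note: the two takeaways above, push the most relevant documents to the top and return fewer documents, can be sketched as a post-retrieval step. This assumes your retriever already returns `(document, relevance_score)` pairs with higher scores meaning more relevant; the function name `select_context` and the default `top_k` are hypothetical.

```python
from typing import List, Tuple


def select_context(scored_docs: List[Tuple[str, float]], top_k: int = 5) -> List[str]:
    """Keep only the top_k highest-scoring documents, most relevant first."""
    # Sort by relevance score, highest first, so the best document
    # ends up at the start of the context window.
    ranked = sorted(scored_docs, key=lambda pair: pair[1], reverse=True)
    # Truncate to top_k to keep the context short.
    return [doc for doc, _ in ranked[:top_k]]
```

The right `top_k` depends on the use case, so it is worth treating it as something to experiment with rather than a fixed setting.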

Amber Roberts: Yeah, I think it’s definitely going to be a comparison of architectures. I don’t know if anyone remembers 2017, but I was in Chile in 2017, because I did my master’s in South America, and I was using Google Translate. That was before transformers. You know, they had the eight Google researchers that created transformers, and they pretty much had the transformers on ice because Google is so big. But it was terrible. I think they used RNNs for it, and it couldn’t even handle non-Latin languages. So I think there will be a lot of tests of different architectures, and also per use case. We’re talking about putting your documents at the top and limiting your number of documents. How do you think this factors into search and ranking? Because I know, if we’re talking to Claire, or talking to you, ranking and search are very important to your customers.

Sally-Ann DeLucia: It will be interesting, you know. I think with search and retrieval, like when we’re talking about a recommender system, that’s really where these LLMs do kind of shine. I’ve seen the success of embedding your user data or your product data and then doing search and retrieval to retrieve the relevant product information. I think those use cases are doing really well. It’s the generating-text ones, the generative AI ones.

But I think that’s where a lot of this work is going to matter. I think those use cases are just getting better and better. We’ve seen that these large language models are able to do this retrieval really well just by looking at the embeddings, because that’s where you’re really going to use them: using these models for embeddings. That’s how you’re going to do your retrieval. I think they’re going to keep getting better. I don’t know how the change in architecture will really change those use cases. I think that change in architecture is really going to be for the generative ones. But I have a suspicion that as we improve these models, those use cases are only going to benefit from it as well. But, did I answer your question?

Amber Roberts: Oh, yeah. And as always, it depends on the use case. People are like, oh, can I implement this? Well, what does the rest of your stack look like? How are things set up? And then especially when people are like, oh, can I implement this and then have an agent involved? So yeah, this is definitely the hot topic. And it’s just fun to think about how we are kind of the same. We, you know, perform the same as these transformer architectures, retrieving documents in a similar way. I mean, I was thinking about it once I realized that the more information you give and give and give, if it’s not relevant, it’s useless. And you think about that as a person: if I’m reading a whole textbook, I walk away with nothing. But if someone gives me the three big facts and the areas that would be on a test, you remember those more. And we’ve known this since the sixties. This is the serial position effect, which pretty much deals with long-term and short-term memory. So if I gave you a list, and I told you there are 10 numbers, when reading back the numbers you’re most likely to remember the first and the last in that list. So it’s interesting to think about.

Sally-Ann DeLucia: Absolutely. And I always love looking at these crossovers from machine learning. I think this is almost promising in some ways, like, okay, we’re not too far off base. It’s a little bit expected that these models are mimicking the way that our brains work and have the same behavior that our brains do when it comes to being able to retrieve context, or in our case, memory. So I feel like it’s encouraging, like we are on the right track. But we have to figure out, you know, better ways to work within these systems. Maybe we won’t see a perfect world where the model will be able to grab context from every bit of its input, but I think there’s going to be a way that we can adapt by understanding, okay, we need to put it at the beginning or the end. What does that mean? What does that mean for shortening context? I think this makes me want to ask a lot of questions and dive a lot deeper into it. But it’s super cool to see the parallels between the two systems.

Amber Roberts: Yes, I completely agree. And then I’m just like, okay, so what architecture is most like our brains? Because we always said “neural network,” like it’s exactly the same idea, where our brains are just a little bit more complex. And just having these insights is really cool to see. Are there any questions or comments from the audience?

Thanks, Chris, for shouting us out. Chris said: “Ladies, crushing the game.” Yeah, Sally-Ann and I were very excited. And then we realized, oh, this might be the first time, you know, two ladies are running the paper readings, so it’s very fun, very exciting. I made the back of these slides pretty for the sake of that.

Any comments from folks? Sally-Ann and I are also available in the Arize community chat, so if you have any questions, ask there, since we are almost out of time. So, you know, you’re a practitioner, and probably a lot of the customers that you’re getting on calls with have questions around these systems. Is there a piece of advice you’d give them that comes from a takeaway from this paper, for when they’re experimenting and building these architectures?

Sally-Ann DeLucia: Absolutely. So I think the one thing that we’ve said a million times is that every use case is a little bit different. Keep that in mind. There’s no one-size-fits-all solution to this, but do experiment. If you’re building an LLM system and you’re struggling to get the right context, or you’re feeling like you’re getting a lot of wrong answers, try changing the way that you’re sending in what is relevant, and push it to the top. Or maybe you change up that K value to retrieve fewer documents. I also definitely recommend using a tool like Phoenix to help you experiment a little bit. It makes such a difference. I went through this myself. So, to be able to visualize your methods rather than just going through the evals or something like that, get yourself a tool that will make this really visible to you, so you understand where you should change up your retrieval, maybe where you need to add more or, probably, add less. So those are the key things there. And keep your eyes out. I think there are going to be a lot more papers coming out around this. We noted that in this paper itself there’s mention of work going on into attention mechanism variants, different low-rank and computationally less expensive approximations, removing attention altogether, and just really an overall investment in understanding how these LLMs use context. So I definitely think you should experiment with the principles in today’s paper. But keep your eyes out, because I’m sure within no time this is all going to change once again.

Amber Roberts: And especially with just how much traction this paper got: constantly be experimenting. The experiments don’t even have to be incredibly complicated. These are all new, so everything we learn is going to be new for us. And with that, we are out of time. Thank you so much, Sally-Ann, and thanks to everyone who joined us. We’ll be back. Well, we won’t be back, but Arize will be back next week with the paper discussion. Have a great day, everyone.