Supercharge Production ML With BentoML and Arize AI

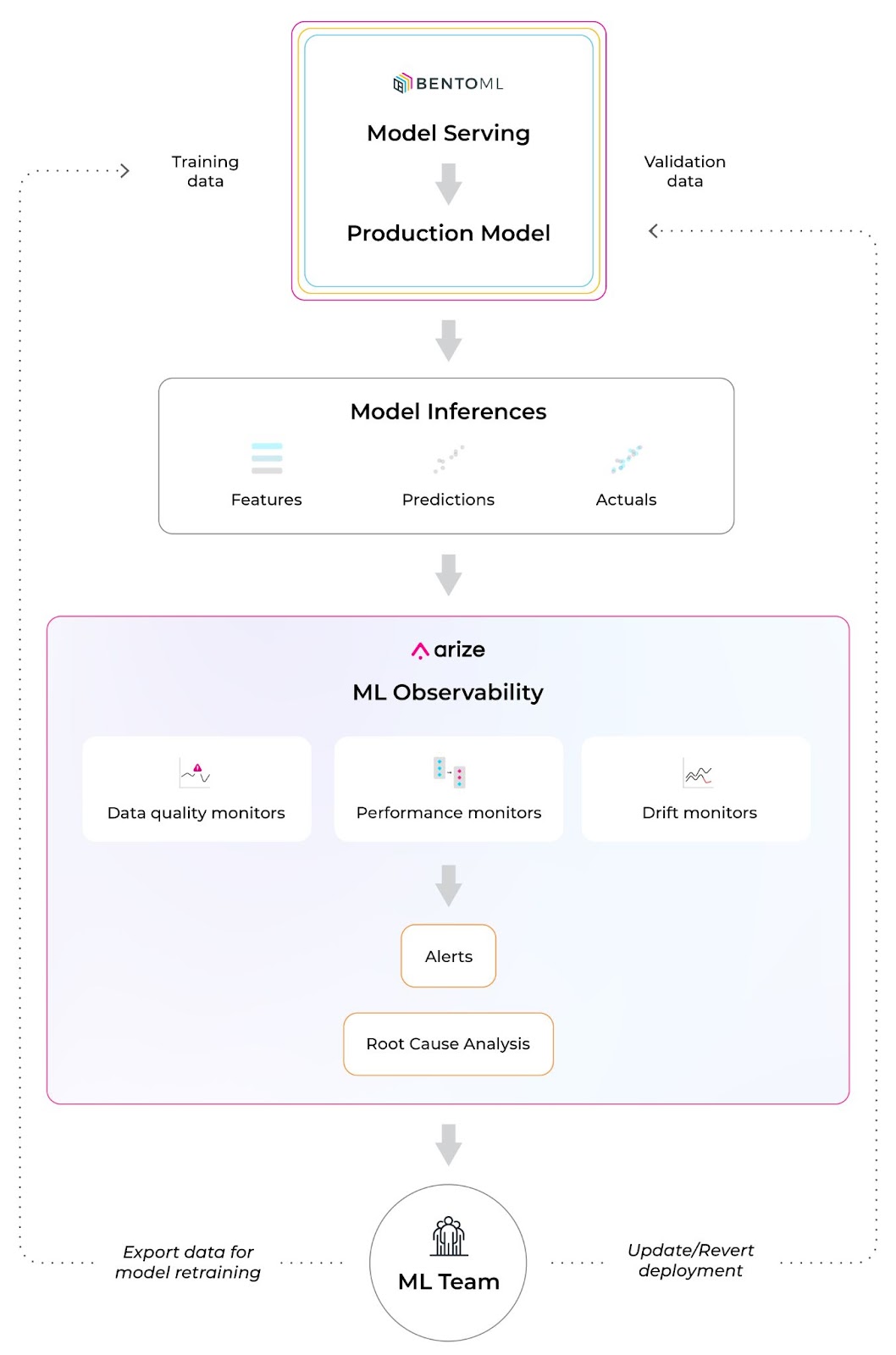

BentoML and Arize AI have partnered to streamline the MLOps toolchain and help teams build, ship, and maintain business-critical models. Use BentoML’s ML service platform to turn ML models into production-ready prediction services. Once your model is in production, use Arize’s ML observability platform to gain the visibility you need to keep it performing well.

Learn how to use BentoML and Arize AI to:

- Accelerate training-to-deployment cycles

- Build reliable, scalable, and high-performing models

- Scale ML infrastructure as you grow with a purpose-built integration

Training/Serving Skew

Due to the nature of real-world data, production environments are highly dynamic and change over time, especially compared to training environments. This causes performance degradation that can wreak havoc on your model outcomes.

For example, during the height of the pandemic, U.S. home price prediction models drifted dramatically because typical real estate buying patterns and available inventory changed rapidly. Due to an increased demand for larger homes to accommodate urban sprawl and a decreased supply of homeowners willing to sell, home price predictions were affected by changes in both the distribution of the ‘size’ feature (feature drift) and the per-square-foot price of large homes (concept drift).

Most changes in user behavior are hard to pinpoint and happen when you least expect them. Failing to detect decaying models can hurt your customers’ experiences, reduce revenue, perpetuate systemic bias, and much more. In other words, even if you were confident in your model when it was first deployed, its performance can change at any time because the production environment keeps changing. Thus, it’s imperative to monitor for model, feature, and performance degradation before it’s too late.
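To make the feature-drift half of the home price example concrete, here is a minimal Python sketch of one common drift statistic, the population stability index (PSI), which compares the binned distribution of a feature in training against production. The home sizes, bin edges, and function names are hypothetical illustrations, and PSI is only one of several possible drift measures; nothing here describes how Arize computes drift internally.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Return the fraction of values in each bin defined by sorted edges.

    With k edges there are k + 1 bins: below the first edge, between
    consecutive edges, and at or above the last edge.
    """
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population stability index between two binned distributions."""
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # clamp empty bins to avoid log(0)
        total += (a - e) * math.log(a / e)
    return total


# Hypothetical home sizes in square feet (illustrative only).
training_sizes = [900, 1200, 1500, 1800, 2100, 1300, 1600, 1100]
production_sizes = [1800, 2400, 2600, 3000, 2200, 1900, 2800, 3200]
edges = [1000, 1500, 2000, 2500]

train_dist = bin_fractions(training_sizes, edges)
prod_dist = bin_fractions(production_sizes, edges)
print(f"PSI for 'size': {psi(train_dist, prod_dist):.3f}")
```

A PSI of 0 means the two binned distributions are identical, and larger values indicate a bigger shift. Concept drift, the other half of the example, cannot be detected from feature distributions alone because it concerns the relationship between the features and the target.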

Arize AI Co-Founder and CPO, Aparna Dhinakaran, recently spoke about ML observability and drift detection in depth during an AMA session in the BentoML community Slack.

How To Ship a Model With Bento and Enable ML Observability

The 2022 BentoML community survey revealed that more than 50% of respondents are interested in monitoring their model performance after they have at least one model running in production. Once you ship your model with BentoML, implement ML observability with Arize AI to monitor and troubleshoot performance degradation in real time. Get alerted when your model deviates from expected ranges, and automatically surface the problematic areas of your model so you can catch and resolve issues before they impact your customers.

Integrate BentoML and Arize AI in a few easy steps:

- Step 1: Build an ML application with BentoML

- Step 2: Serve ML Apps & Collect Monitoring Data

- Step 3: Export and Analyze Monitoring Data

Step 1: Build An ML Application With BentoML

We’ll use a simple iris classifier service as the example. Once the iris classifier model is trained, save it with BentoML’s API.

If you’re new to BentoML, get started here.
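A minimal sketch of the save step, assuming the classifier is a scikit-learn SVM trained on the standard iris dataset. The article does not name the framework, so scikit-learn and the function name train_and_save_iris are assumptions; the model name iris_clf matches the CLI commands shown in this step, and bentoml.sklearn.save_model is the BentoML 1.x call for storing scikit-learn models.

```python
def train_and_save_iris(model_name: str = "iris_clf"):
    """Train an SVM on the iris dataset and store it in the local BentoML model store.

    Returns the tag (name plus generated version) of the saved model.
    """
    # Local imports: only this function needs the training dependencies.
    import bentoml
    from sklearn import datasets, svm

    iris = datasets.load_iris()
    clf = svm.SVC(gamma="scale")
    clf.fit(iris.data, iris.target)

    # save_model returns a bentoml.Model; its tag includes the new version.
    saved_model = bentoml.sklearn.save_model(model_name, clf)
    return saved_model.tag
```

Calling train_and_save_iris() once, with bentoml and scikit-learn installed, should be enough to populate the model store for the rest of this walkthrough.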

This will save a new model in the BentoML local model store and automatically generate a new version tag. View all model revisions from the CLI with the bentoml models commands.

Commands:

$ bentoml models get iris_clf:latest

$ bentoml models list

We recommend running model inference at serving time through a Runner. Draft a service definition that uses the saved model as a runner.

Use a bentofile.yaml to declare the dependencies.

Once the BentoML service is ready, we can start the development server and test the API with this command.

$ bentoml serve .

Before moving the service to production, we’ll add monitoring and logging to it. The bentoml.monitor API logs the request features and predictions.
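A minimal sketch of a service with monitoring, assuming BentoML 1.x with the bentoml.monitor API available, a scikit-learn model saved as iris_clf, and a flat array of four numeric features as input. The feature names, class names, the helper feature_log_entries, and the build_service factory are illustrative assumptions; the service and monitor names are chosen to match the model name that appears in Arize later in this walkthrough.

```python
from typing import List, Sequence, Tuple

# Assumed names for the four iris features and the three classes.
FEATURE_NAMES = ("sepal length", "sepal width", "petal length", "petal width")
CLASS_NAMES = ("setosa", "versicolor", "virginica")


def feature_log_entries(features: Sequence[float]) -> List[Tuple[str, float]]:
    """Pair each feature value with the name it is logged under."""
    if len(features) != len(FEATURE_NAMES):
        raise ValueError(f"expected {len(FEATURE_NAMES)} features, got {len(features)}")
    return [(name, float(value)) for name, value in zip(FEATURE_NAMES, features)]


def build_service():
    """Create the iris service with request and prediction logging."""
    import bentoml
    import numpy as np
    from bentoml.io import NumpyNdarray, Text

    # Run inference through a runner backed by the saved model.
    runner = bentoml.sklearn.get("iris_clf:latest").to_runner()
    svc = bentoml.Service("iris_classifier", runners=[runner])

    @svc.api(input=NumpyNdarray(), output=Text())
    async def classify(features: np.ndarray) -> str:
        with bentoml.monitor("iris_classifier_prediction") as mon:
            # Log every request feature before predicting.
            for name, value in feature_log_entries(features):
                mon.log(value, name=name, role="feature", data_type="numerical")

            results = await runner.predict.async_run([features])
            category = CLASS_NAMES[int(results[0])]

            # Log the prediction alongside the features.
            mon.log(category, name="pred", role="prediction", data_type="categorical")
        return category

    return svc
```

In service.py, assign svc = build_service() at module level so that bentoml serve can locate the service object.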

To conclude this step, we’ll build a bento (BentoML Application) for the service above.

Step 2: Serve ML Apps & Collect Monitoring Data

BentoML provides a set of APIs and CLI commands for automating the cloud deployment workflow, which get your service’s API server up and running on any platform.

Navigate to the full deployment guide here for various deployment destinations.

Use this command to start a standalone server.

$ bentoml serve iris_classifier --production

By default, BentoML exports the monitoring data to the monitoring/ directory.

For example, you can view the log file like this.
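As a rough illustration of working with such a log, the Python sketch below parses records and tallies the logged predictions. It assumes the exported file contains one JSON object per line; the sample records, the field names (including pred), and the helper names are hypothetical, so adjust them to what your monitoring/ directory actually contains.

```python
import json
from collections import Counter
from typing import Dict, List

# Hypothetical sample of exported records, one JSON object per line.
SAMPLE_LOG = """\
{"sepal length": 6.3, "sepal width": 3.2, "petal length": 5.2, "petal width": 2.3, "pred": "virginica"}
{"sepal length": 4.9, "sepal width": 3.0, "petal length": 1.4, "petal width": 0.2, "pred": "setosa"}
{"sepal length": 6.5, "sepal width": 3.0, "petal length": 5.5, "petal width": 1.8, "pred": "virginica"}
"""


def parse_records(text: str) -> List[Dict[str, object]]:
    """Parse JSON-lines text into a list of records, skipping blank lines."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def prediction_counts(records: List[Dict[str, object]], field: str = "pred") -> Counter:
    """Count how often each predicted value appears in the records."""
    return Counter(r[field] for r in records if field in r)


records = parse_records(SAMPLE_LOG)
for label, count in prediction_counts(records).most_common():
    print(f"{label}: {count}")
```

To run it against a real export, read the file's text (for example with pathlib.Path.read_text) and pass it to parse_records in place of SAMPLE_LOG.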

Step 3: Export And Analyze Monitoring Data

Leveraging BentoML’s monitoring logging API, you can set up the inference logging pipeline to Arize in a few lines of code.

1. Add bentoml-plugins-arize to the bento’s dependencies

First, add bentoml-plugins-arize to the Python packages declared in bentofile.yaml.

2. Apply bentoml-plugins-arize in the deployment configuration

Update the deployment configuration to connect to your Arize account.

Obtain the space_key and the api_key with the instructions here.
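The deployment configuration can be sketched as a small Python helper that renders the YAML text. The key layout (a monitoring section whose type points at bentoml_plugins.arize.ArizeMonitor, with space_key and api_key under options) is an assumption based on the plugin's documented usage and should be checked against the version you install; the environment variable names and the function name are hypothetical.

```python
import os

# Assumed layout of the monitoring section; verify against the plugin docs.
CONFIG_TEMPLATE = """\
monitoring:
  enabled: true
  type: bentoml_plugins.arize.ArizeMonitor
  options:
    space_key: {space_key}
    api_key: {api_key}
"""


def render_deployment_config(space_key: str, api_key: str) -> str:
    """Fill the Arize credentials into the deployment configuration text."""
    if not space_key or not api_key:
        raise ValueError("both space_key and api_key are required")
    return CONFIG_TEMPLATE.format(space_key=space_key, api_key=api_key)


# ARIZE_SPACE_KEY and ARIZE_API_KEY are hypothetical variable names;
# placeholders are printed when they are not set.
config_text = render_deployment_config(
    os.environ.get("ARIZE_SPACE_KEY") or "<your_space_key>",
    os.environ.get("ARIZE_API_KEY") or "<your_api_key>",
)
print(config_text)
```

Saving the printed text as bentoml_deployment.yaml gives the file that is passed through BENTOML_CONFIG in the serve command.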

3. Rebuild the bento and make a new deployment

Run this command to serve the rebuilt bento as a standalone server.

$ BENTOML_CONFIG=bentoml_deployment.yaml bentoml serve iris_classifier --production

After completing the setup, your online inference logs will flow to the Arize platform!

4. Monitor, troubleshoot, and resolve production issues with Arize AI

Step 1: Set Up Monitors

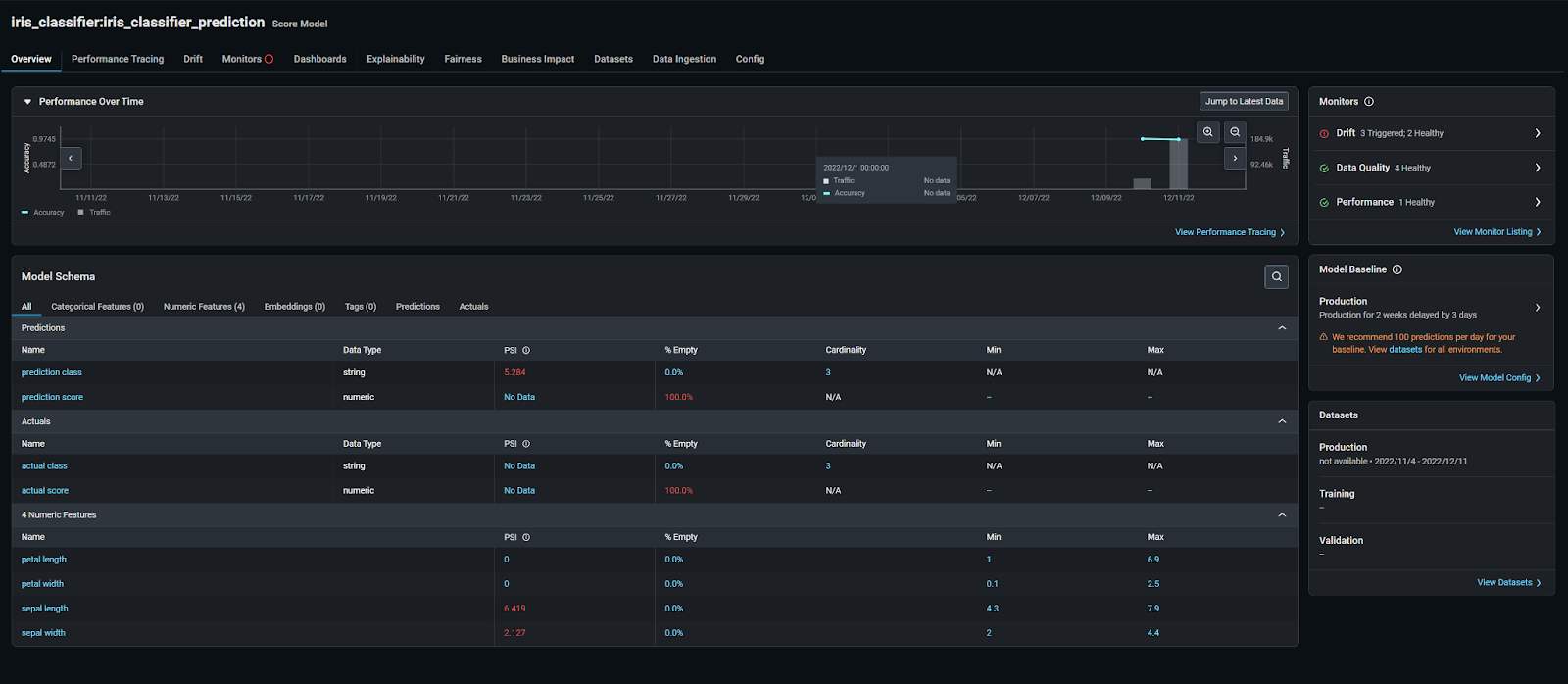

If you haven’t yet, get started using Arize for free here! Once we log in to the platform, we can see our iris_classifier:iris_classifier_prediction model on the “Models” page.

This page contains an overview of all your models for an at-a-glance health check across the board.

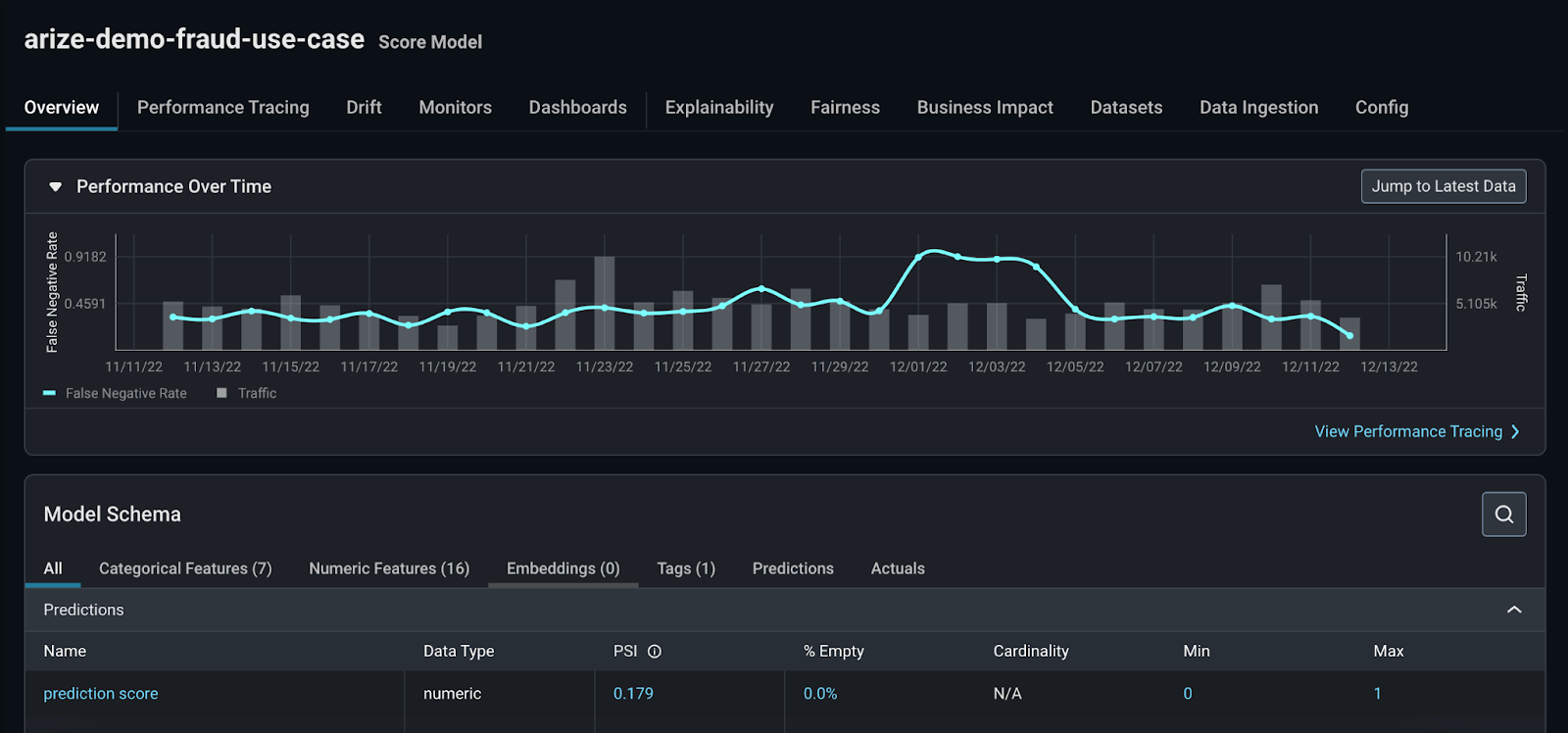

All Arize accounts come with demo models to showcase different use cases and workflows. For a common troubleshooting workflow, let’s look at setting up the arize-demo-fraud-use-case model. Our main objective is to learn how to improve our model and export those findings back to BentoML for retraining.

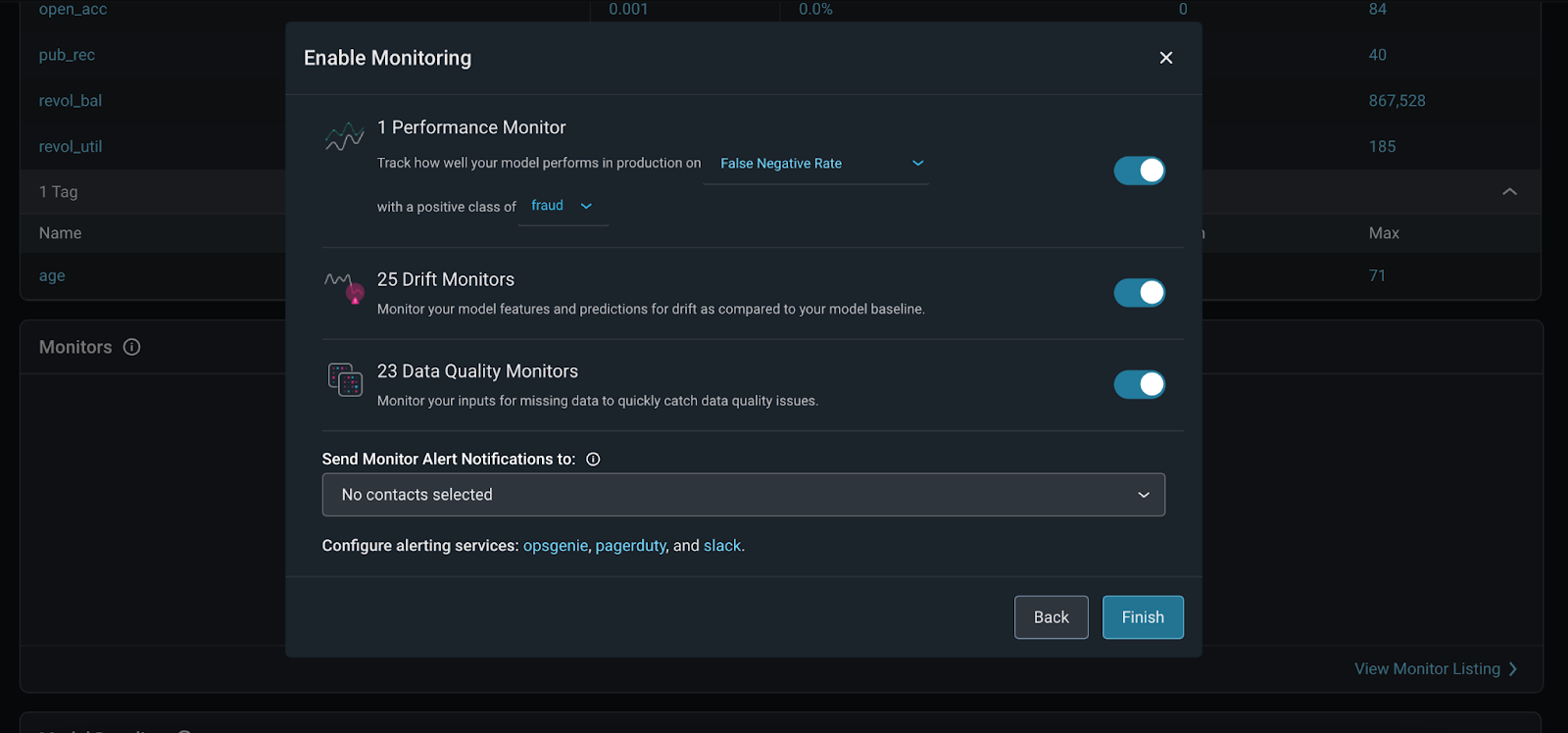

Once your model data is in the Arize platform, enable monitors in Arize to catch drift, data quality, and performance issues. Arize supports three main types of monitors:

- Performance Monitors:

Model performance monitors indicate how your model performs in production. Measure model performance with an evaluation metric via daily or hourly checks on metrics such as Accuracy, Recall, Precision, F1 score, MAE, MAPE, and more.

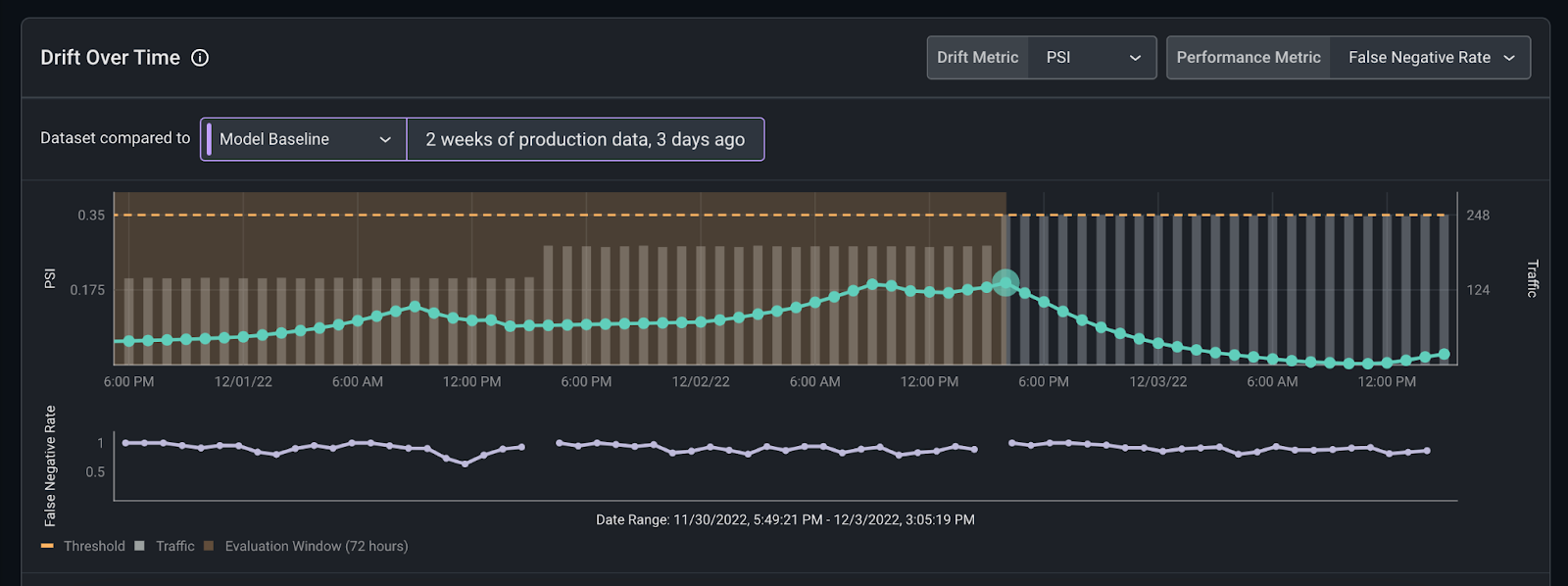

- Drift Monitors:

Drift monitors measure distribution drift, which is the difference between two statistical distributions. Since it’s common for real-world production data to deviate from training parameters over time, drift can be a great leading indicator of model performance.

- Data Quality Monitors:

Model health depends on high-quality data that powers model features, so it’s important to ensure your data is always in tip-top shape. Data quality monitors help identify key data quality issues such as cardinality shifts, data type mismatch, missing data, and more.
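To make the evaluation metrics behind performance monitors concrete, here is a minimal Python sketch that computes accuracy, precision, recall, and F1 for one positive class from paired actuals and predictions. The labels and the function name are hypothetical, and this is the textbook calculation rather than a description of how Arize computes its metrics.

```python
from typing import Dict, Sequence


def classification_metrics(
    actuals: Sequence[str], predictions: Sequence[str], positive: str
) -> Dict[str, float]:
    """Compute accuracy, precision, recall, and F1 for one positive class."""
    if len(actuals) != len(predictions) or not actuals:
        raise ValueError("actuals and predictions must be non-empty and equal in length")

    tp = fp = fn = tn = 0
    for actual, predicted in zip(actuals, predictions):
        if predicted == positive and actual == positive:
            tp += 1
        elif predicted == positive:
            fp += 1
        elif actual == positive:
            fn += 1
        else:
            tn += 1

    # Guard against empty denominators by reporting 0.0.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(actuals),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical labels for a fraud model (illustrative only).
actuals = ["fraud", "fraud", "ok", "ok", "ok", "fraud"]
predictions = ["fraud", "ok", "ok", "fraud", "ok", "fraud"]
metrics = classification_metrics(actuals, predictions, positive="fraud")
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

A performance monitor effectively recomputes a metric like these on each hourly or daily window of production data and compares it against a threshold.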

To easily set up all three monitors, we’ll follow the automatic bulk creation workflow by clicking the “Set Up Monitors” button on the “Model Overview” page.

Here are further instructions on how to set up model monitoring in the Arize platform.

Step 2: Monitoring Alerts

Once the monitors are set up, Arize triggers an alert whenever a monitor crosses its defined threshold, notifying you that your model has deviated from expected ranges. You can configure your own threshold or set an automatic threshold. Learn how to configure a threshold.
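As an illustration of the threshold idea, the Python sketch below derives bounds from a baseline window as the mean plus or minus a number of standard deviations and flags values outside them. This is one simple, assumed approach chosen for illustration; it is not a description of how Arize's automatic thresholds are calculated, and the baseline values and function names are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence, Tuple


def auto_threshold(history: Sequence[float], num_std: float = 2.0) -> Tuple[float, float]:
    """Return (lower, upper) bounds as mean +/- num_std sample standard deviations."""
    if len(history) < 2:
        raise ValueError("need at least two baseline values")
    center = mean(history)
    spread = stdev(history)
    return center - num_std * spread, center + num_std * spread


def is_alert(value: float, bounds: Tuple[float, float]) -> bool:
    """True when the metric value falls outside the bounds."""
    lower, upper = bounds
    return value < lower or value > upper


# Hypothetical daily accuracy values from a healthy baseline period.
baseline_accuracy = [0.90, 0.92, 0.91, 0.89, 0.93]
bounds = auto_threshold(baseline_accuracy)

for todays_accuracy in (0.91, 0.80):
    status = "ALERT" if is_alert(todays_accuracy, bounds) else "ok"
    print(f"accuracy={todays_accuracy:.2f} bounds=({bounds[0]:.3f}, {bounds[1]:.3f}) -> {status}")
```

A manually configured threshold corresponds to supplying the bounds directly instead of deriving them from history.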

Arize integrates with common alerting tools and methods. Send an alert via email, Slack, OpsGenie, or PagerDuty. Within these tools, you can configure your alerting cadence, severity, and alert grouping.

Step 3: Troubleshoot Your Model

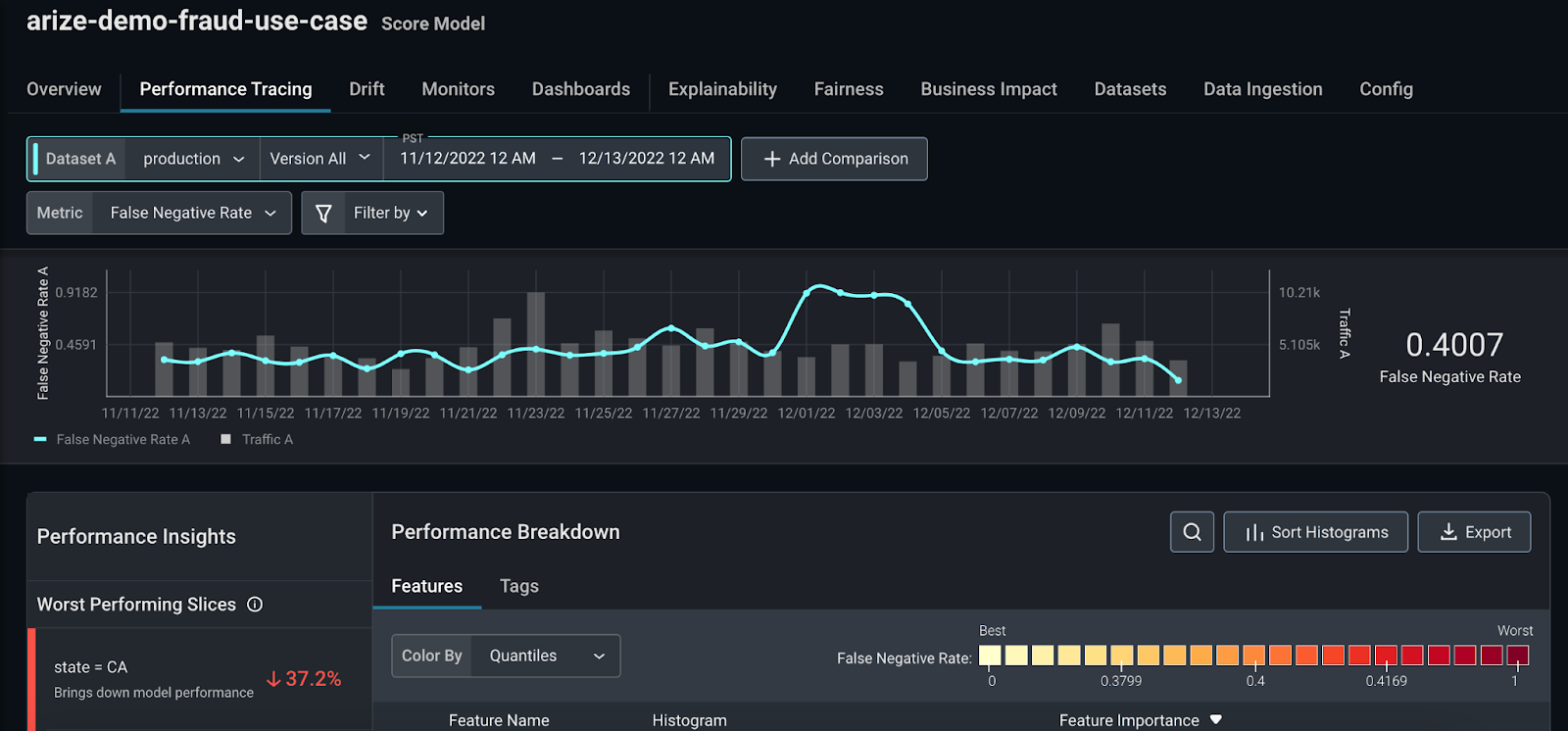

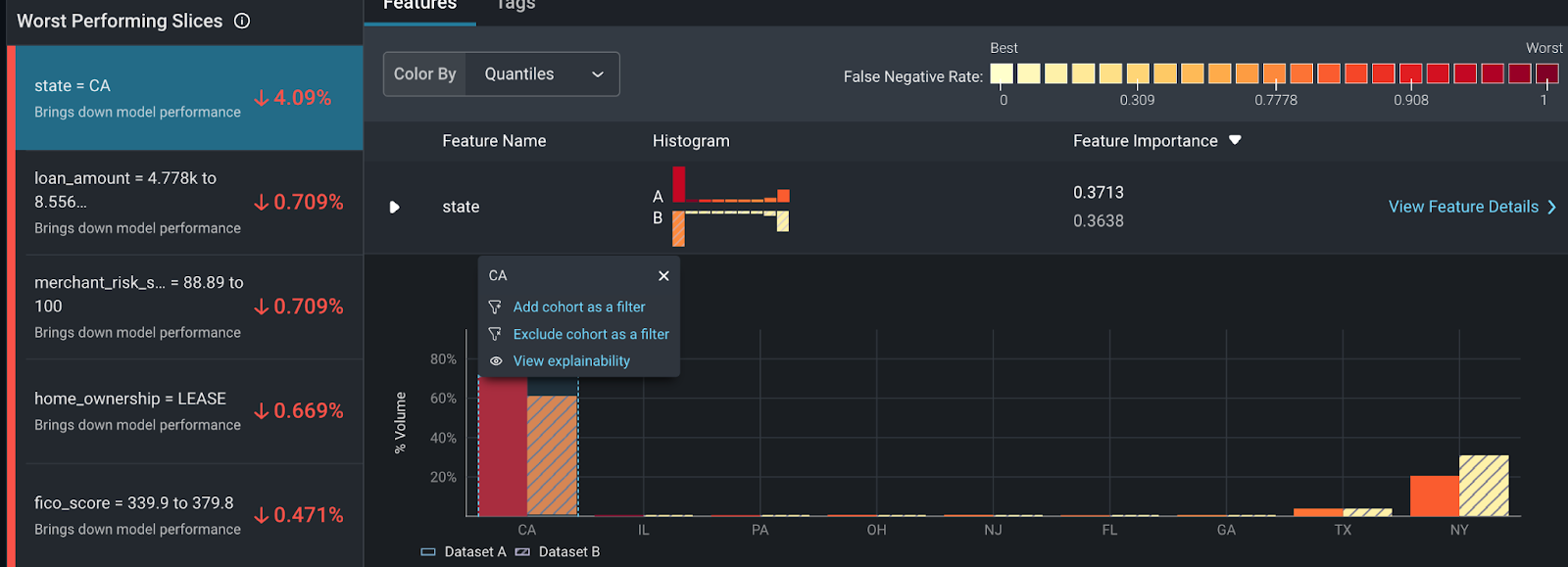

When your model deviates from the threshold, use the “Performance Tracing” tab to troubleshoot the features and slices that impact your model the most.

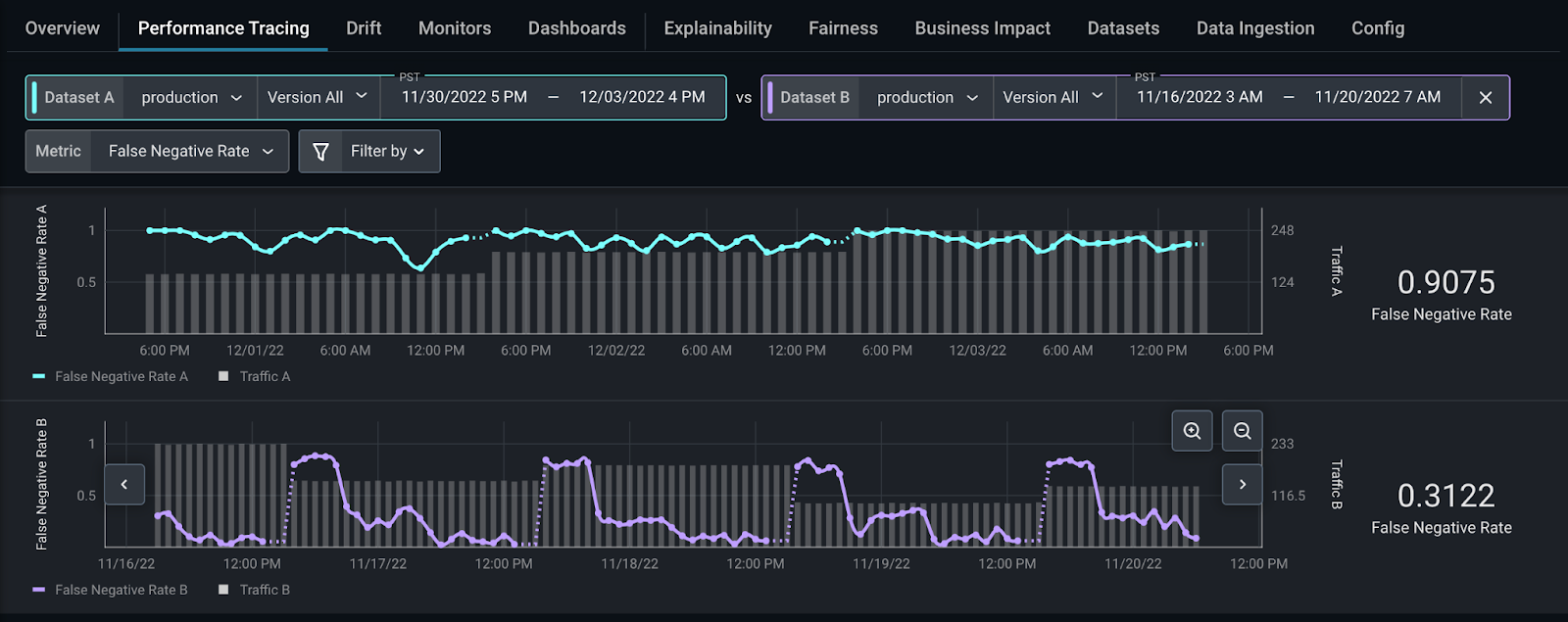

Add a high-performing comparison dataset from any model version or environment to easily identify the root cause of the performance issue. In our case, zooming in on a high-performing section of our production dataset and comparing that with a low-performing section of our production dataset is instructive.

From here, Arize automatically surfaces the slices that affect your model performance the most on the “Performance Insights” card. Click on state = CA to compare how this slice behaves differently between the two datasets.

Here, you can see some discrepancies between training and production. Within the “Feature Details” page, you can view additional details on how the distribution comparison changes over time. This granular view of each data slice shows whether your features have drifted significantly, how your overall data quality is holding up, and how the slice distributions compare.
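The idea of surfacing the slices that hurt performance the most can be sketched in a few lines of Python: group predictions by a feature value, compute accuracy per group, and rank the groups from worst to best. The rows, labels, and function names are hypothetical, and accuracy is used only as an example metric; this is not a description of Arize's ranking logic.

```python
from collections import defaultdict
from typing import Dict, List, Mapping, Sequence, Tuple


def accuracy_by_slice(rows: Sequence[Mapping[str, str]], feature: str) -> Dict[str, float]:
    """Compute accuracy separately for each value of the given feature."""
    tallies = defaultdict(lambda: [0, 0])  # feature value -> [correct, total]
    for row in rows:
        tally = tallies[row[feature]]
        tally[0] += int(row["prediction"] == row["actual"])
        tally[1] += 1
    return {value: correct / total for value, (correct, total) in tallies.items()}


def worst_slices(
    rows: Sequence[Mapping[str, str]], feature: str, top_n: int = 3
) -> List[Tuple[str, float]]:
    """Return up to top_n feature values, ordered from lowest accuracy upward."""
    scores = accuracy_by_slice(rows, feature)
    return sorted(scores.items(), key=lambda item: item[1])[:top_n]


# Hypothetical prediction records (illustrative only).
rows = (
    [{"state": "CA", "actual": "fraud", "prediction": p} for p in ("fraud", "ok", "ok", "ok")]
    + [{"state": "NY", "actual": "ok", "prediction": p} for p in ("ok", "ok", "ok", "fraud")]
    + [{"state": "TX", "actual": "ok", "prediction": p} for p in ("ok", "ok")]
)

for value, accuracy in worst_slices(rows, "state"):
    print(f"state = {value}: accuracy {accuracy:.2f}")
```

Running the same ranking on a high-performing and a low-performing dataset and comparing the results mirrors the comparison workflow described above.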

While this model is healthy, this workflow can also easily identify areas to improve or monitor for future degradation. From here, it’s easy to export your data to begin retraining, or to update or revert your deployment with BentoML.

Explore More In the BentoML & Arize AI Communities

Build reliable, scalable, and high-performing models with BentoML and Arize AI. Use our purpose-built integration to scale your ML infrastructure stack for a seamless production model workflow.

If you enjoyed this article, please show your support by starring (⭐) the BentoML project on GitHub and joining both the Arize AI Slack Community and the BentoML Slack Community. Want to deliver and maintain better ML in production? Sign up for a free Arize account to quickly detect issues when they emerge, troubleshoot why they happened, and improve overall model performance across both structured and unstructured data. Searching for a great place to run your ML services? Check out BentoML Cloud for the easiest and fastest way to deploy your bento.