
What are prompts?

A prompt is the instruction set you send to an LLM. In Arize, a prompt is managed as a Prompt Object, which bundles messages, model settings, tools/functions, and response format into one versioned artifact.

Prompt template

System, user, and assistant messages with variables like {customer_input}.

Model settings

Model choice and invocation parameters such as temperature and max tokens.

Tools/functions

Optional tool definitions and function-calling behavior.

Response format

Output schema and structure your application expects.
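The four components above can be pictured as one immutable object. The sketch below is a hypothetical illustration, not the Arize SDK: `PromptObject` and its field names are invented for this example, the tool and response-format dictionaries follow OpenAI Chat Completions conventions as an assumed provider format, and the model name is a placeholder.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass(frozen=True)
class PromptObject:
    """Hypothetical stand-in for a Prompt Object; not an Arize SDK class."""

    messages: list[dict[str, str]]  # role/content pairs; content may contain {variables}
    model: str  # model choice (placeholder name below)
    invocation_params: dict[str, Any] = field(default_factory=dict)  # e.g. temperature, max_tokens
    tools: list[dict[str, Any]] = field(default_factory=list)  # optional tool/function definitions
    response_format: Optional[dict[str, Any]] = None  # output structure the app expects


support_prompt = PromptObject(
    messages=[
        {"role": "system", "content": "You are a customer support agent for returns."},
        {"role": "user", "content": "{customer_input}"},
    ],
    model="gpt-4o-mini",  # placeholder; use whichever provider/model you configured
    invocation_params={"temperature": 0.2, "max_tokens": 300},
    tools=[
        {  # OpenAI-style function definition (assumed format)
            "type": "function",
            "function": {
                "name": "lookup_order",  # hypothetical tool name
                "description": "Fetch order details by ID.",
                "parameters": {
                    "type": "object",
                    "properties": {"order_id": {"type": "string"}},
                    "required": ["order_id"],
                },
            },
        }
    ],
    response_format={"type": "json_object"},  # OpenAI JSON mode, as an example
)
```

Freezing the dataclass mirrors the idea that a saved version is a single artifact you replace rather than edit in place.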

Build and improve a prompt

Start in Playground with a draft prompt, then refine it for clarity, correctness, and brevity.

Create a prompt


Create a new playground

Navigate to Playgrounds and click New Playground. You can also start from the home page, and Alyx will create a playground for you.

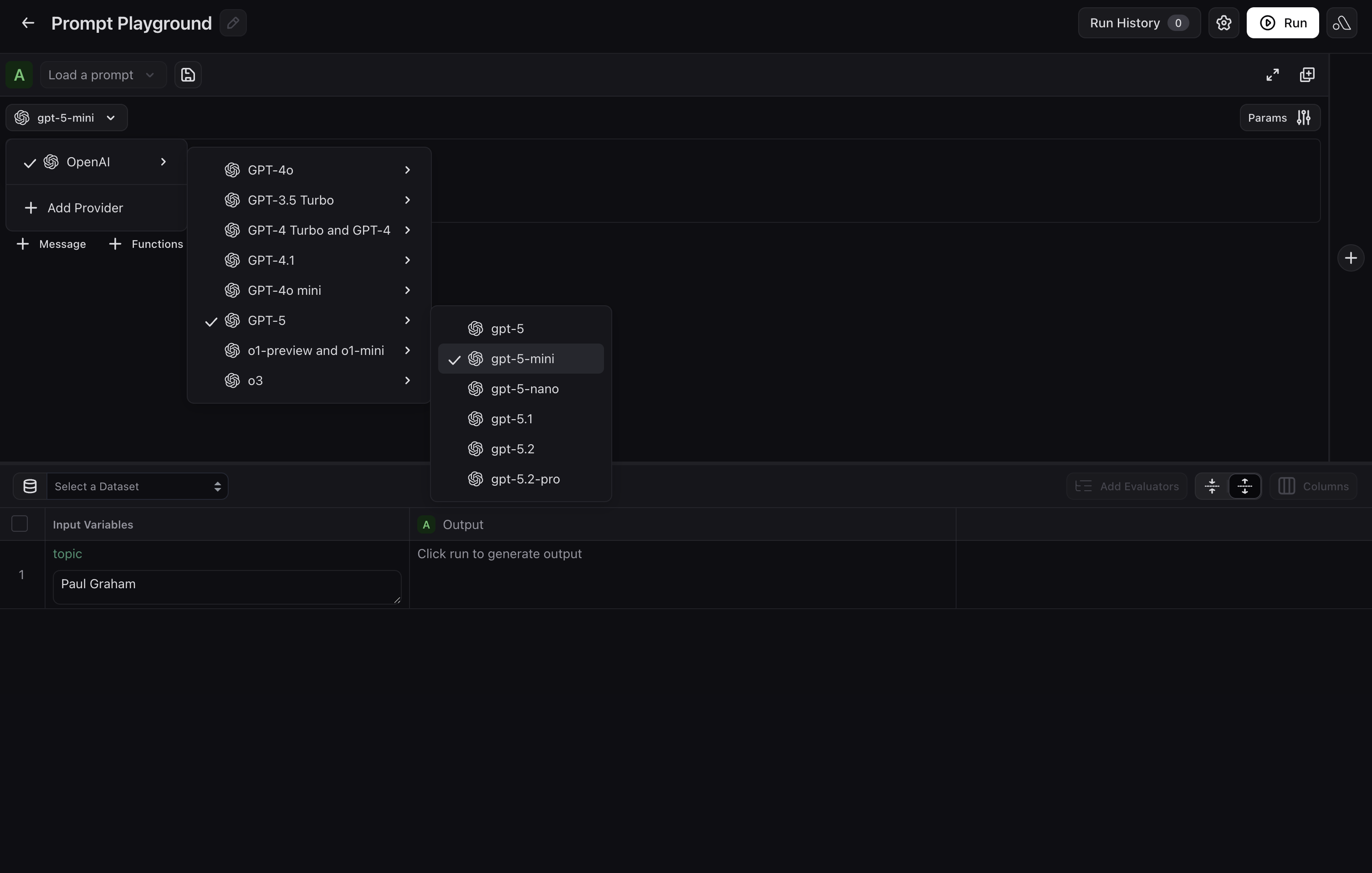

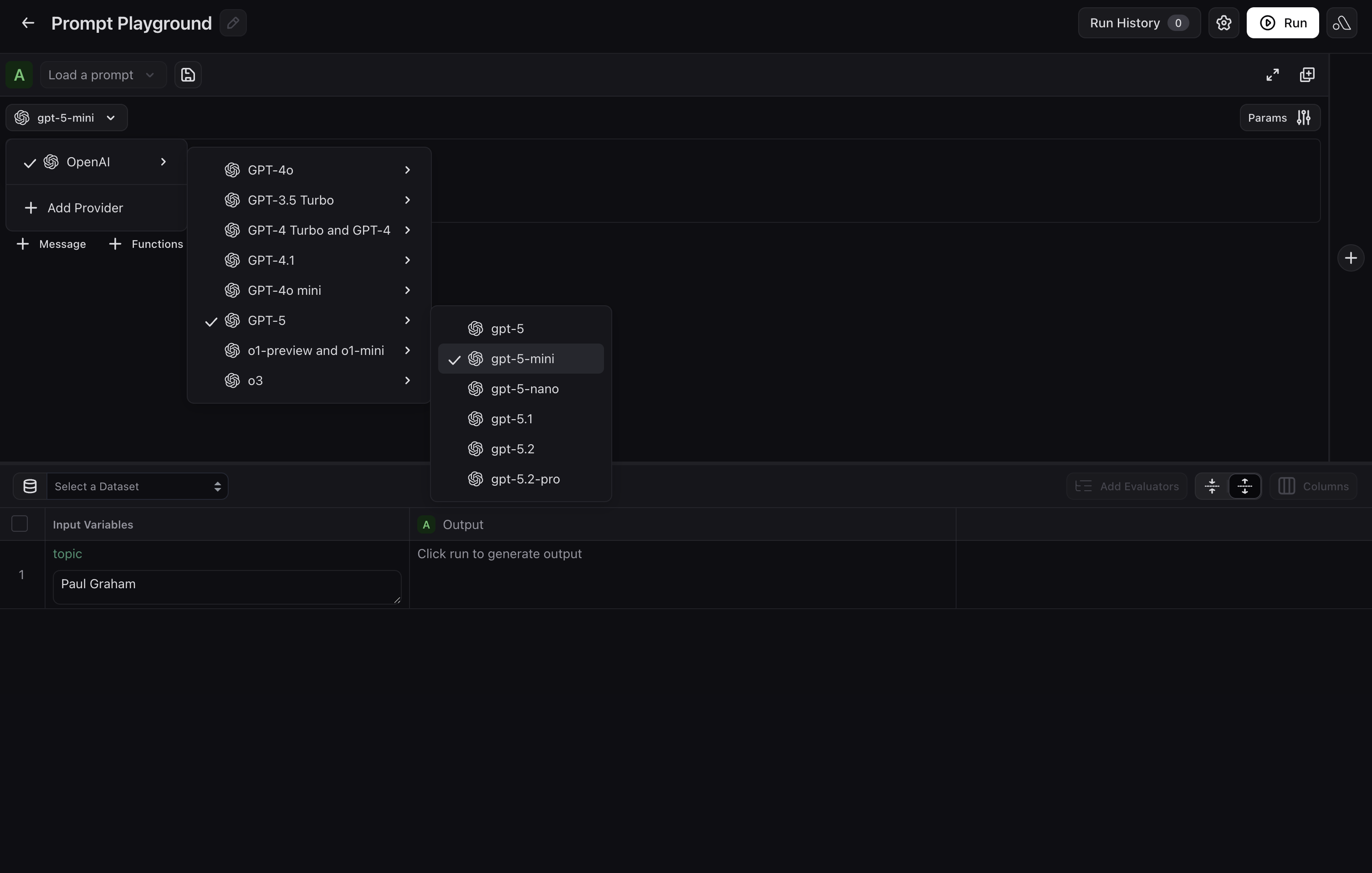

Choose a provider or model

If you have not already added one, select your LLM provider and model in the Playground before you draft or run. Options include OpenAI, Azure, Vertex AI, AWS Bedrock, and others.

Draft and refine your prompt with Alyx

Describe your use case in plain language in the Alyx panel on the right. Include details such as what the prompt should capture, the desired output format, tone, and length. If you name the variables you need, Alyx will wire them into the prompt automatically. For example, you could say:

Create a prompt for a customer support agent that handles returns and escalations. Use {customer_input} and {order_id} as variables.

Give feedback on Alyx’s drafts and keep iterating until you are satisfied with the prompt.
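Variables such as {customer_input} and {order_id} are named placeholders that get filled per request. The sketch below assumes they behave like Python `str.format` fields, which is an illustration rather than a description of how Arize renders templates; `template_variables` and `render` are hypothetical helper names.

```python
from string import Formatter


def template_variables(template: str) -> set[str]:
    """Return the {variable} names referenced in a template string."""
    return {name for _, name, _, _ in Formatter().parse(template) if name}


def render(template: str, values: dict[str, str]) -> str:
    """Fill every {variable}, raising KeyError if any value is missing."""
    missing = template_variables(template) - set(values)
    if missing:
        raise KeyError(f"missing values for: {sorted(missing)}")
    return template.format(**values)


user_message = "Order {order_id}: {customer_input}"
print(sorted(template_variables(user_message)))  # ['customer_input', 'order_id']
print(render(user_message, {"order_id": "A-1001", "customer_input": "My blender arrived cracked."}))
# Order A-1001: My blender arrived cracked.
```

Failing loudly on a missing value is a deliberate choice here: a silently empty variable tends to produce plausible but wrong outputs.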

Try the prompt in the Playground

If you added a dataset or test cases, run the prompt over its rows to check the outputs. If you skipped that step, exercise the prompt with manual inputs or keep iterating with Alyx. For a fuller testing workflow, see Test a prompt.
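Running a prompt over dataset rows amounts to rendering the template once per row and recording each output next to its inputs. This is a minimal sketch under stated assumptions: `run_over_rows` is a hypothetical helper, and `fake_model` is a stub standing in for a real provider call (OpenAI, Azure, Vertex AI, AWS Bedrock, or another).

```python
from typing import Callable


def run_over_rows(
    template: str,
    rows: list[dict[str, str]],
    call_model: Callable[[str], str],
) -> list[dict[str, str]]:
    """Render the template for each row and store the model output alongside the inputs."""
    results = []
    for row in rows:
        prompt_text = template.format(**row)  # fills {order_id}, {customer_input}, ...
        results.append({**row, "prompt": prompt_text, "output": call_model(prompt_text)})
    return results


def fake_model(prompt_text: str) -> str:
    # Hypothetical stand-in for a real provider call; swap in your own client.
    return f"[draft reply to: {prompt_text}]"


rows = [
    {"order_id": "A-1001", "customer_input": "The blender arrived cracked."},
    {"order_id": "B-2002", "customer_input": "I want to return unopened headphones."},
]
for result in run_over_rows("Order {order_id}: {customer_input}", rows, fake_model):
    print(result["order_id"], "->", result["output"])
```

Passing the model call in as a function keeps the loop independent of any particular provider SDK.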

Test a prompt

Test your prompt against real examples before shipping.


Load or generate data

Open Alyx and load your data, or ask it to generate examples from your use case.

Load your prompt

Ask Alyx to load a prompt from Prompt Hub and align its {variables} with your dataset columns.

Create an evaluator and run

Ask Alyx to draft an evaluator, then run the experiment.
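The two checks in this workflow, matching prompt variables to dataset columns and scoring outputs with an evaluator, can be sketched in plain Python. Everything here is hypothetical: `align_variables`, `action_matches`, and `pass_rate` are illustrative names, the `expected_action` column and JSON `"action"` field are invented for the example, and a deterministic check like this is only one kind of evaluator (the one Alyx drafts for you may differ).

```python
import json
from string import Formatter
from typing import Optional


def align_variables(
    template: str, columns: list[str], mapping: Optional[dict[str, str]] = None
) -> dict[str, str]:
    """Map each {variable} to a dataset column (same name unless `mapping` overrides it)."""
    mapping = mapping or {}
    variables = sorted({name for _, name, _, _ in Formatter().parse(template) if name})
    unmatched = [v for v in variables if mapping.get(v, v) not in columns]
    if unmatched:
        raise ValueError(f"no dataset column for: {unmatched}")
    return {v: mapping.get(v, v) for v in variables}


def action_matches(output: str, expected_action: str) -> bool:
    """Hypothetical evaluator: pass if the output is JSON whose "action" equals the expected label."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and parsed.get("action") == expected_action


def pass_rate(outputs: list[str], expected: list[str]) -> float:
    """Fraction of experiment rows that pass the evaluator."""
    if not outputs:
        return 0.0
    return sum(action_matches(o, e) for o, e in zip(outputs, expected)) / len(outputs)


columns = ["order_id", "customer_message", "expected_action"]
print(align_variables("Order {order_id}: {customer_input}", columns, {"customer_input": "customer_message"}))
# {'customer_input': 'customer_message', 'order_id': 'order_id'}

outputs = ['{"action": "refund"}', '{"action": "refund"}', "Sure, I can help with that!"]
expected = ["refund", "escalate", "escalate"]
print(pass_rate(outputs, expected))  # 0.3333333333333333
```

Checking alignment before running catches a renamed column up front instead of after a full experiment has produced empty or malformed prompts.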

Save and version prompts

When a variant wins, save it to Prompt Hub so your team can track and deploy it safely.


In the Prompt Playground, you can ask Alyx to persist the prompt you have been editing, for example:

Save this prompt to Prompt Hub as support-agent-v2 with a description that we tightened escalation rules.

Alyx can help you refine messages, parameters, and tools first. When you are ready, ask it to save (or click Save Prompt yourself); a new immutable version is then written to Prompt Hub with the name, description, and tags you specify.
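To illustrate what "a new immutable version" means in practice, here is a hypothetical in-memory registry in which every save appends a frozen version and earlier versions are never rewritten. It is not Prompt Hub's API or storage model; `PromptRegistry`, `PromptVersion`, `save`, `latest`, and `get` are invented names for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: int
    template: str
    description: str
    tags: tuple[str, ...]


class PromptRegistry:
    """Hypothetical append-only registry; each save creates a new frozen version."""

    def __init__(self) -> None:
        self._versions: dict[str, list[PromptVersion]] = {}

    def save(self, name: str, template: str, description: str = "", tags: tuple[str, ...] = ()) -> PromptVersion:
        history = self._versions.setdefault(name, [])
        version = PromptVersion(name, len(history) + 1, template, description, tuple(tags))
        history.append(version)  # earlier versions are never modified
        return version

    def latest(self, name: str) -> PromptVersion:
        return self._versions[name][-1]

    def get(self, name: str, version: int) -> PromptVersion:
        history = self._versions[name]
        if not 1 <= version <= len(history):
            raise KeyError(f"{name} has no version {version}")
        return history[version - 1]


registry = PromptRegistry()
registry.save("support-agent", "Order {order_id}: {customer_input}", "first draft")
v2 = registry.save(
    "support-agent",
    "Order {order_id}: {customer_input}\nEscalate if the customer asks for a manager.",
    "tightened escalation rules",
    tags=("returns", "escalations"),
)
print(v2.version, registry.get("support-agent", 1).description)  # 2 first draft
```

Because old versions stay addressable, a deployment can pin an exact version while the team keeps iterating on newer ones.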