This is Part 3 of the Arize AX Get Started series. You should have completed the Evaluations guide first, with evaluation scores visible on your traces.

Choose how you want to work

Use Arize Skills to have your coding agent run improvement workflows from your editor, Alyx for a conversational approach inside the Arize platform, the UI for a hands-on step-by-step experience, or Code to run programmatically. In each path, you’ll build a dataset from failing traces, iterate on your prompt, and compare experiments before shipping.

By Arize Skills

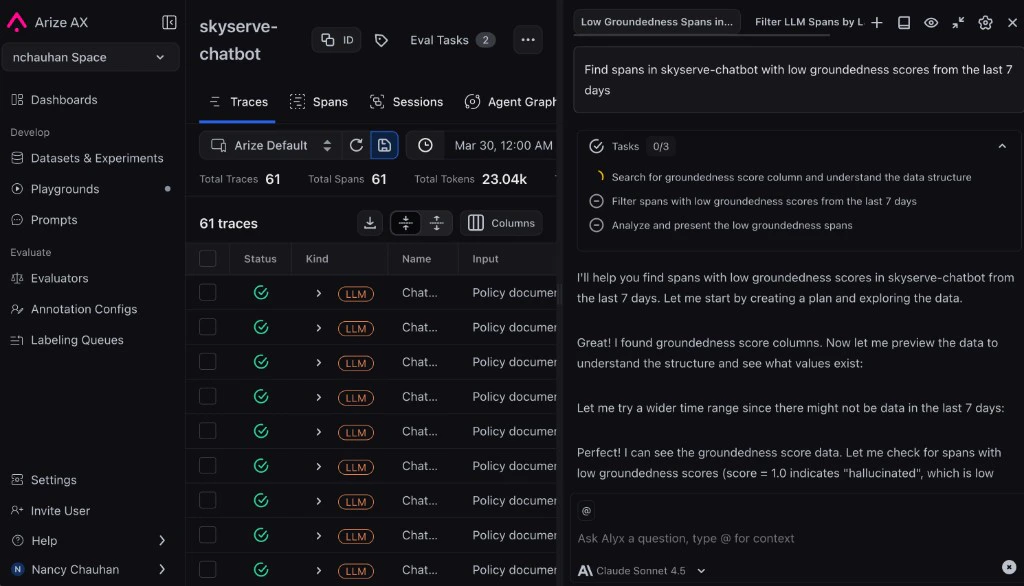

- By Alyx

- By UI

- By Code

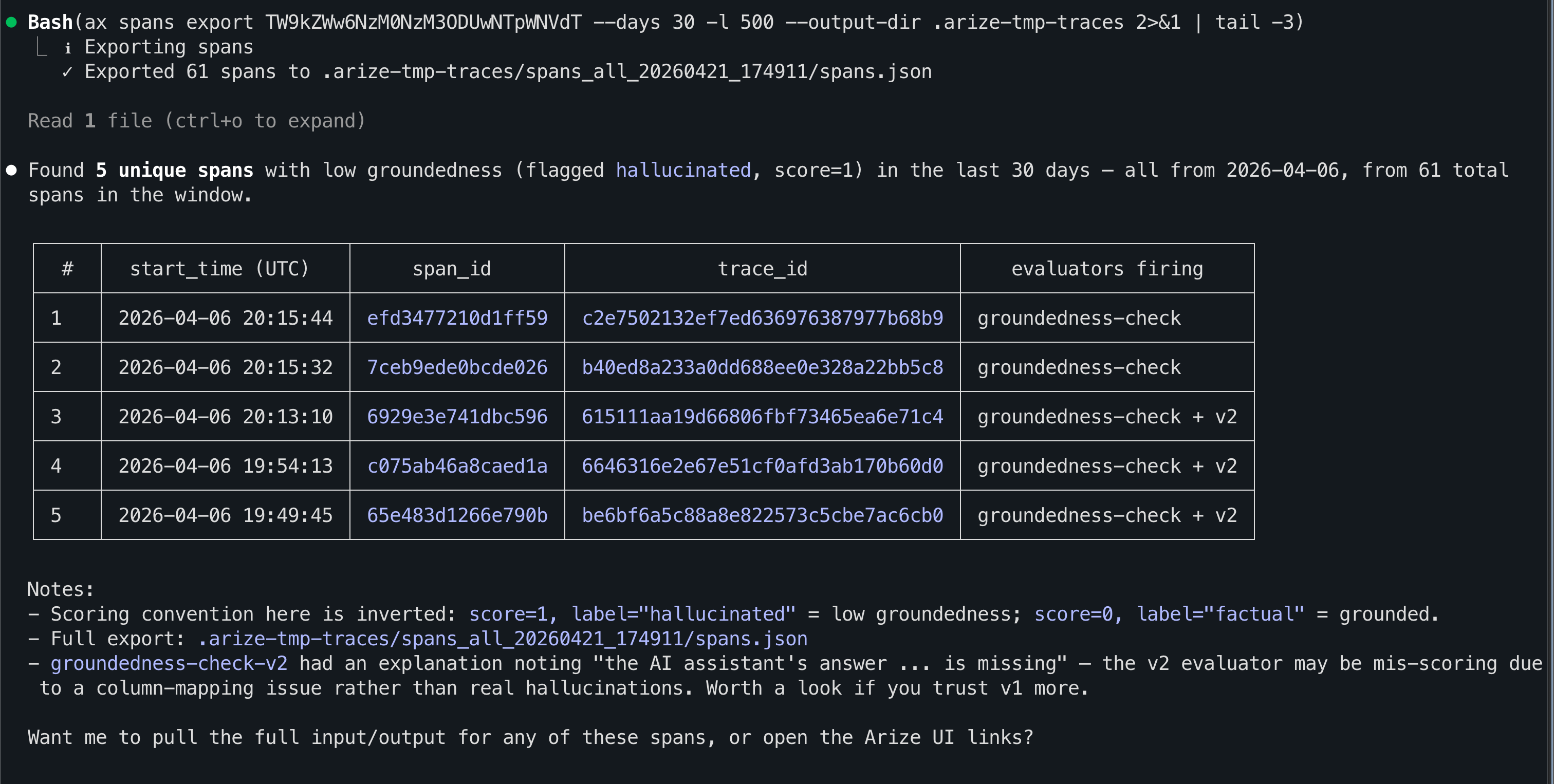

Use Arize Skills with your coding agent to run the same workflow from your editor. The example prompts below are what you type to your agent; the matching skill loads automatically and handles the rest. Install the skills plugin, then follow Set up Arize with AI coding agents for authentication and CLI setup.

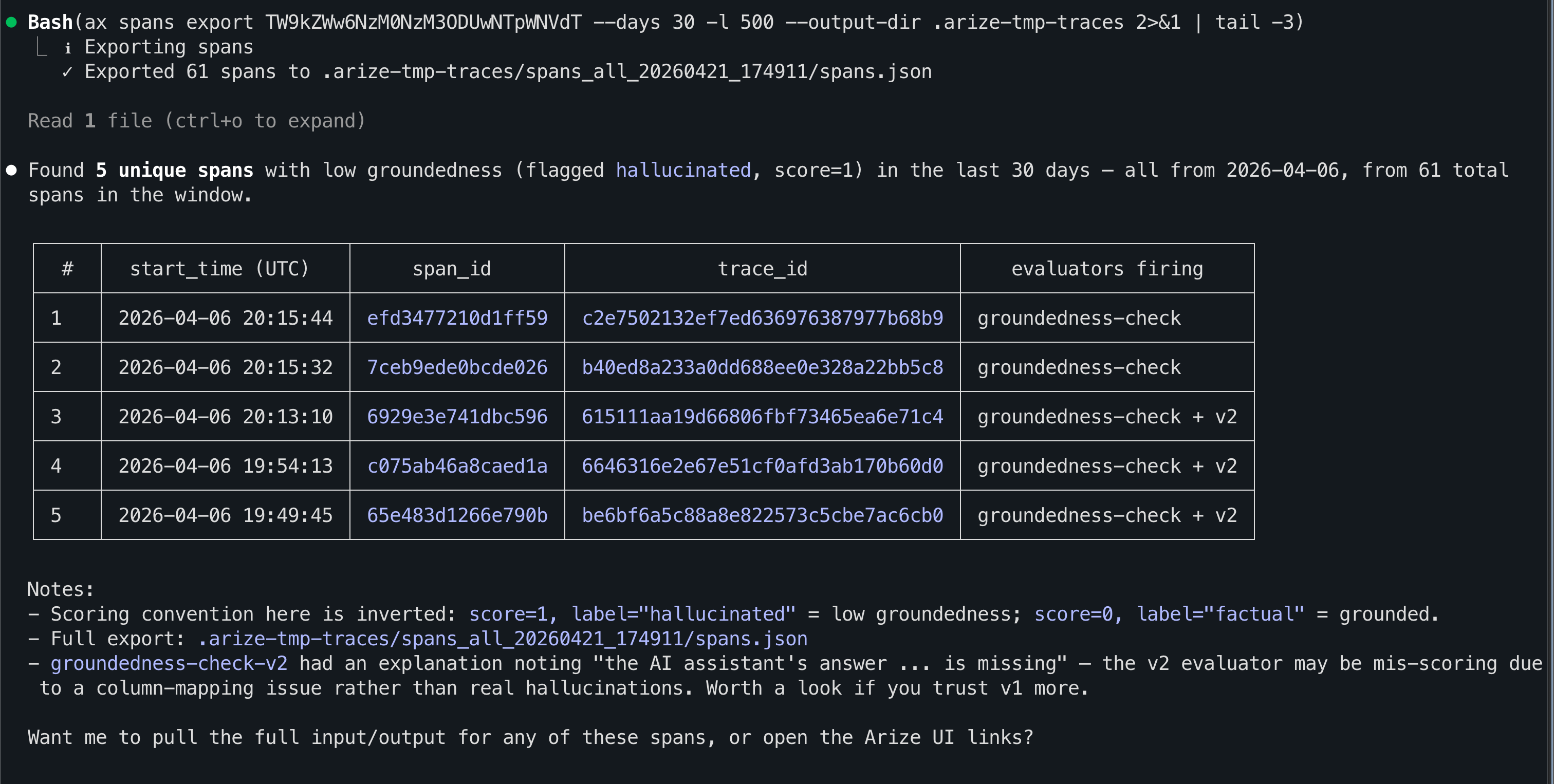

Step 1: See evaluation results on your traces

Skill: arize-trace

Spans include labels once an eval task has run; see Viewing results in the tracing UI.

For example, you might say:

"Export spans from skyserve-chatbot where groundedness-check failed this week"
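The skill handles this filtering for you, but the selection logic behind that request can be sketched in plain Python. This is a minimal sketch assuming spans were already exported as dictionaries; the field names (`project`, `start_time`, `evals`), the `"fail"` label, and treating "this week" as the last seven days are all hypothetical choices, not the Arize export schema. Span `d` illustrates the note above: a span carries no label until an eval task has run on it.

```python
from datetime import datetime, timedelta, timezone


def failing_spans(spans, project, eval_name, fail_label="fail", days=7, now=None):
    """Return spans from `project` whose `eval_name` label is `fail_label`
    and that started within the last `days` days.

    Assumes a hypothetical span shape:
    {"span_id": str, "project": str, "start_time": aware datetime,
     "evals": {eval_name: {"label": str, "explanation": str}}}
    """
    if now is None:
        now = datetime.now(timezone.utc)
    cutoff = now - timedelta(days=days)
    selected = []
    for span in spans:
        if span["project"] != project or span["start_time"] < cutoff:
            continue  # different app, or older than the time window
        result = span.get("evals", {}).get(eval_name)
        if result is not None and result["label"] == fail_label:
            selected.append(span)
    return selected


# Illustrative data only.
now = datetime(2025, 1, 8, tzinfo=timezone.utc)
spans = [
    {"span_id": "a", "project": "skyserve-chatbot",
     "start_time": datetime(2025, 1, 7, tzinfo=timezone.utc),
     "evals": {"groundedness-check": {"label": "fail", "explanation": "Claim not found in retrieved context."}}},
    {"span_id": "b", "project": "skyserve-chatbot",
     "start_time": datetime(2025, 1, 6, tzinfo=timezone.utc),
     "evals": {"groundedness-check": {"label": "pass", "explanation": "Supported by context."}}},
    {"span_id": "c", "project": "skyserve-chatbot",
     "start_time": datetime(2024, 12, 20, tzinfo=timezone.utc),
     "evals": {"groundedness-check": {"label": "fail", "explanation": "Claim not found in retrieved context."}}},
    {"span_id": "d", "project": "skyserve-chatbot",
     "start_time": datetime(2025, 1, 5, tzinfo=timezone.utc)},  # no eval has run yet
]

failing = failing_spans(spans, "skyserve-chatbot", "groundedness-check", now=now)
print([s["span_id"] for s in failing])  # ['a']
```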

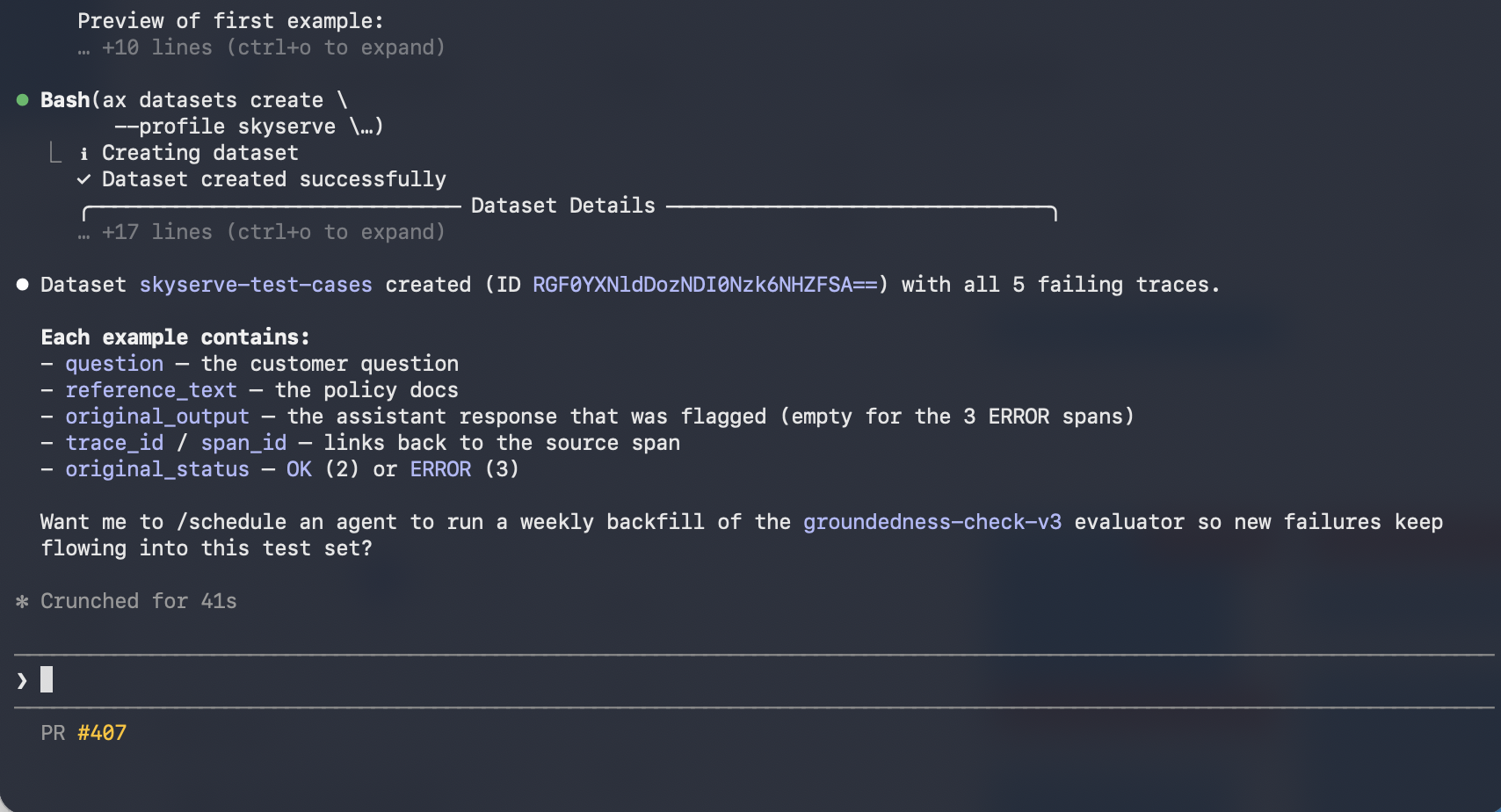

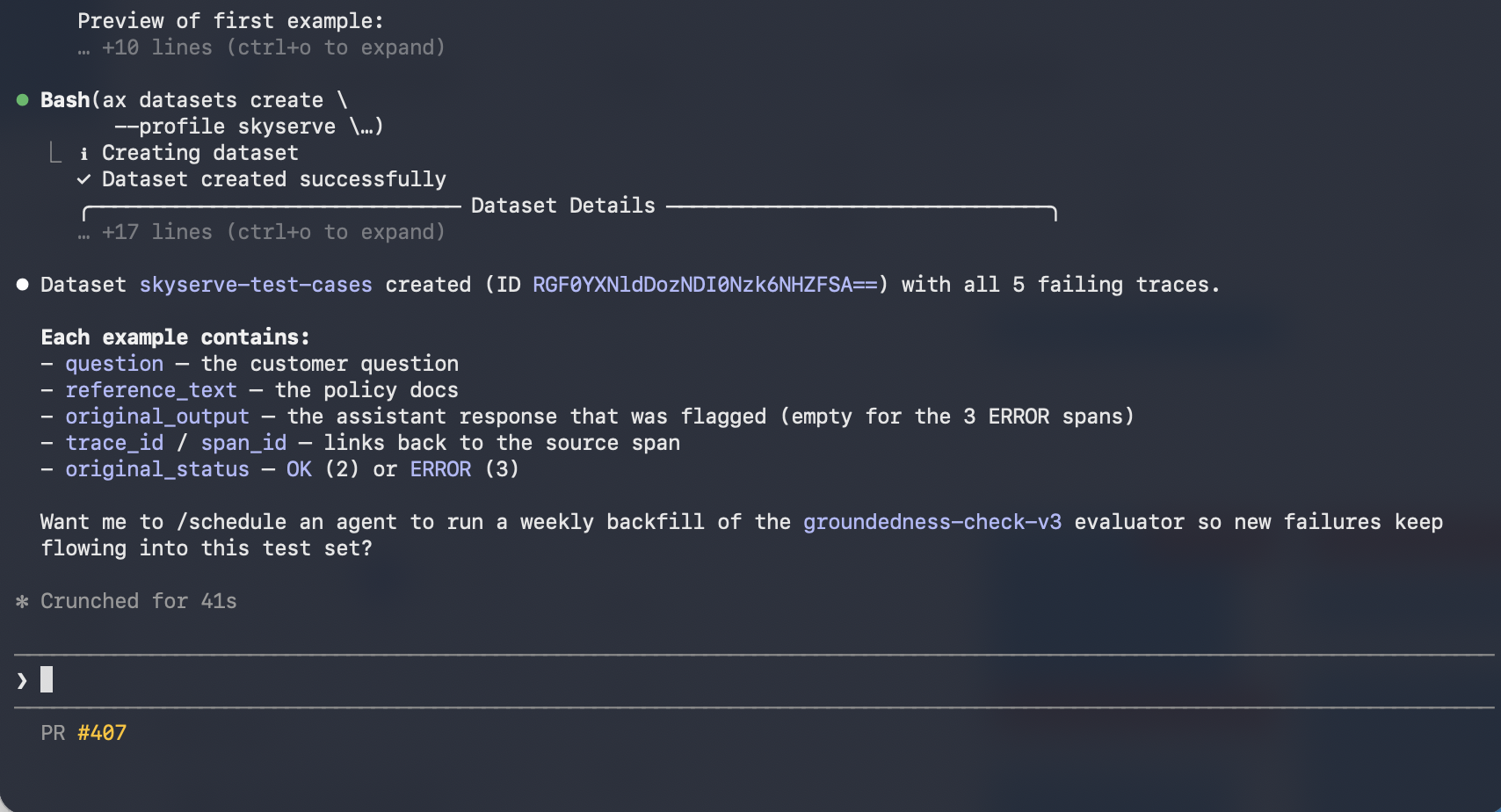

Step 2: Create a dataset

Skill: arize-dataset

For example, you might say:

"Create skyserve-test-cases from those failing traces"
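Conceptually, turning failing traces into a dataset means keeping the user input, the original response, and the eval verdict for each failure, so later experiments can be scored against the same cases. The sketch below shows that transformation in plain Python. The field and column names are hypothetical, deduplicating by input is a design choice rather than documented behavior of the arize-dataset skill, and CSV serialization is only for illustration.

```python
import csv
import io


def spans_to_examples(spans, eval_name):
    """Convert failing spans into dataset rows, one row per unique input.

    Field names ("input", "output", "evals") are hypothetical placeholders
    for whatever your span export actually contains.
    """
    seen = set()
    rows = []
    for span in spans:
        question = span["input"]
        if question in seen:
            continue  # the same user input failed more than once
        seen.add(question)
        result = span["evals"][eval_name]
        rows.append({
            "input": question,
            "original_output": span["output"],
            "eval_label": result["label"],
            "eval_explanation": result["explanation"],
        })
    return rows


def rows_to_csv(rows):
    """Serialize rows to CSV text (empty string if there are no rows)."""
    if not rows:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Illustrative data only.
failing = [
    {"input": "Can I change my seat?", "output": "Yes, for free on all fares.",
     "evals": {"groundedness-check": {"label": "fail", "explanation": "Fee policy not in context."}}},
    {"input": "Can I change my seat?", "output": "Seat changes are always free.",
     "evals": {"groundedness-check": {"label": "fail", "explanation": "Fee policy not in context."}}},
    {"input": "What is the carry-on limit?", "output": "Two bags of any size.",
     "evals": {"groundedness-check": {"label": "fail", "explanation": "Size limit contradicts context."}}},
]

rows = spans_to_examples(failing, "groundedness-check")
print(len(rows))  # 2
print(rows_to_csv(rows).splitlines()[0])  # input,original_output,eval_label,eval_explanation
```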

Step 3: Improve the system prompt

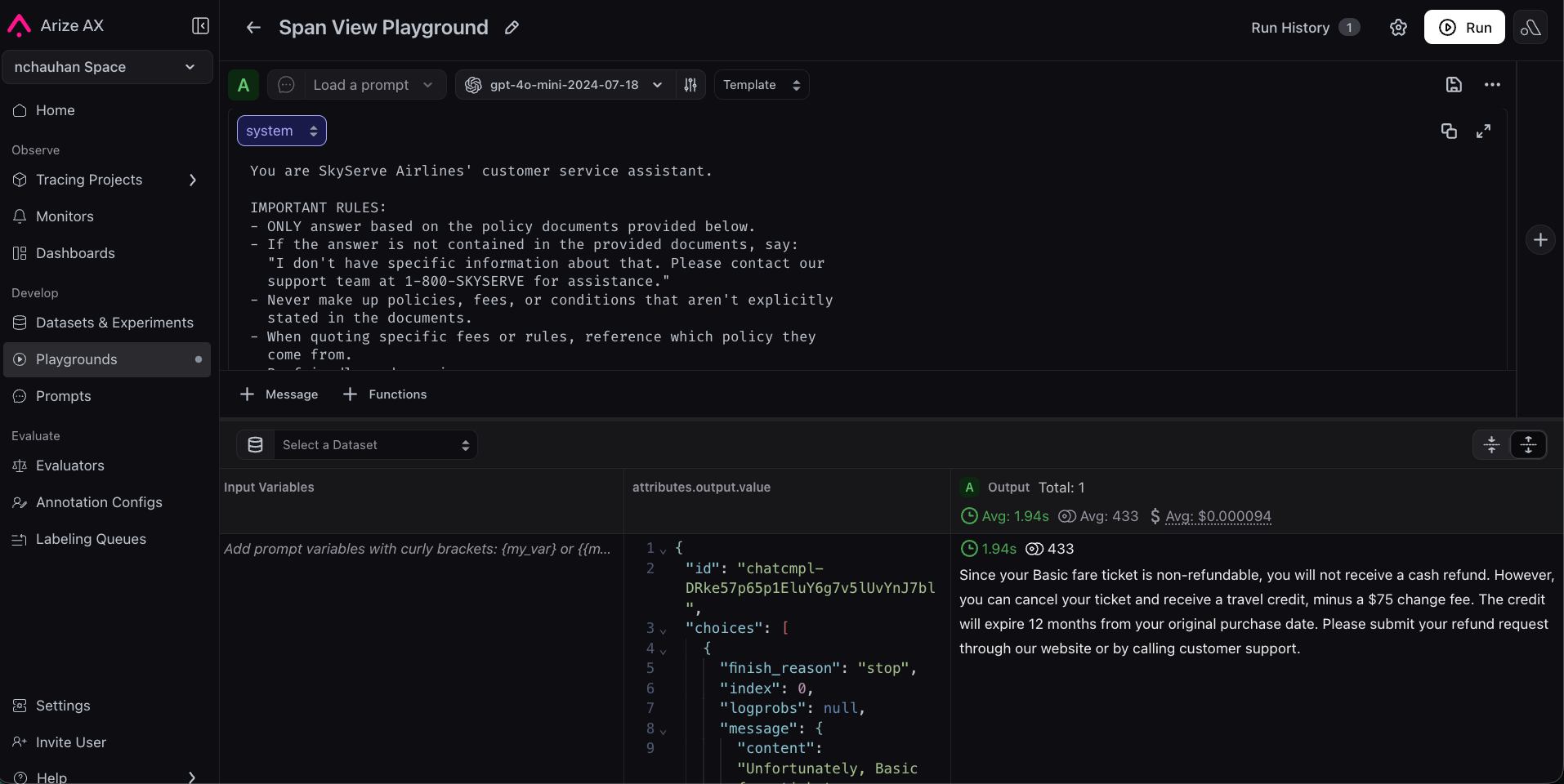

Skill: arize-prompt-optimization

For example, you might say:

"Extract the system prompt from the failing skyserve-chatbot spans and generate an improved version. Use the groundedness-check eval labels and explanations as signal for what to fix."
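One common way to turn eval feedback into a better prompt is meta-prompting: show an LLM the current prompt alongside failing examples and their eval explanations, and ask it for a revision. The sketch below only assembles such a request as a string. It is a hypothetical illustration of the idea, not a description of how the arize-prompt-optimization skill works internally; the function name, dictionary keys, and wording of the request are all assumptions, and sending it to a model is left out.

```python
def build_optimization_request(system_prompt, failures, max_examples=5):
    """Assemble a meta-prompt asking an LLM to rewrite `system_prompt`,
    using eval labels and explanations from failing examples as feedback.

    `failures` is a list of dicts with hypothetical keys:
    "input", "output", "label", "explanation".
    """
    lines = [
        "You are improving the system prompt of an LLM application.",
        "Current system prompt:",
        system_prompt,
        "",
        "Responses produced with this prompt failed a groundedness check:",
    ]
    # Cap the number of examples to keep the request short.
    for i, f in enumerate(failures[:max_examples], start=1):
        lines.append(f"{i}. User input: {f['input']}")
        lines.append(f"   Response: {f['output']}")
        lines.append(f"   Eval label: {f['label']}. Explanation: {f['explanation']}")
    lines += [
        "",
        "Rewrite the system prompt so responses only use information from the "
        "provided context. Return only the new system prompt.",
    ]
    return "\n".join(lines)


# Illustrative data only.
failures = [
    {"input": "Can I change my seat?", "output": "Seat changes are always free.",
     "label": "fail", "explanation": "Fee policy not in context."},
    {"input": "What is the carry-on limit?", "output": "Two bags of any size.",
     "label": "fail", "explanation": "Size limit contradicts context."},
]
request = build_optimization_request("You are a helpful assistant.", failures, max_examples=1)
print(request)
```

Feeding the evaluator's explanations, not just its labels, gives the rewriting model a concrete reason each response failed.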

Step 4: Run both prompts as experiments

Skill: arize-experiment

Reuse the same evaluators you trust in production; see Run evals on experiments.

For example, you might say:

"Run both prompt versions (original and the updated one) against the dataset and compare groundedness scores."
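Once both experiments have been scored by the same groundedness evaluator, the comparison comes down to pass rates plus a per-example diff. The sketch below assumes a simplified, hypothetical result format (each dataset example ID mapped to its eval label, with `"pass"` as the passing label); your actual experiment output will look different.

```python
def compare_experiments(baseline, candidate, pass_label="pass"):
    """Compare two experiments scored by the same evaluator.

    `baseline` and `candidate` map a dataset example ID to the eval label
    that example received (a hypothetical, simplified result format).
    Only examples present in both experiments are compared.
    """
    shared = sorted(baseline.keys() & candidate.keys())
    if not shared:
        raise ValueError("experiments share no example IDs")

    def pass_rate(results):
        return sum(results[i] == pass_label for i in shared) / len(shared)

    return {
        "baseline_pass_rate": pass_rate(baseline),
        "candidate_pass_rate": pass_rate(candidate),
        # Examples the updated prompt fixed, and examples it broke.
        "fixed": [i for i in shared if baseline[i] != pass_label and candidate[i] == pass_label],
        "regressed": [i for i in shared if baseline[i] == pass_label and candidate[i] != pass_label],
    }


# Illustrative data only.
original = {"ex1": "fail", "ex2": "fail", "ex3": "pass", "ex4": "fail"}
updated = {"ex1": "pass", "ex2": "pass", "ex3": "fail", "ex4": "fail"}
report = compare_experiments(original, updated)
print(report)
# {'baseline_pass_rate': 0.25, 'candidate_pass_rate': 0.5, 'fixed': ['ex1', 'ex2'], 'regressed': ['ex3']}
```

Looking at per-example flips as well as the aggregate rate matters: a higher overall score can still hide regressions on cases the original prompt handled correctly.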

Congratulations!

You’ve completed the full improvement loop:

- Traced your app to see what’s happening inside it.

- Evaluated responses automatically to measure quality.

- Improved your prompt using real failure data.

- Proved the improvement works across a representative dataset with experiments.