LoRA: Low-Rank Adaptation of Large Language Models Paper Reading and Discussion

Introduction

In this paper reading, we discuss LoRA, which freezes the pre-trained model weights and injects trainable rank decomposition matrices into each layer of the Transformer architecture, greatly reducing the number of trainable parameters for downstream tasks.

Join us every Wednesday as we discuss the latest technical papers, covering a range of topics including large language models (LLM), generative models, ChatGPT, and more. This recurring event offers an opportunity to collectively analyze and exchange insights on cutting-edge research in these areas and their broader implications.

Transcript

Harrison Chu, Engineering Leadership at Arize AI: Okay, so where we last left off, we wanted to contextualize fine-tuning relative to other techniques that are out there. I was sharing this diagram from Andrej Karpathy about where he situates fine-tuning relative to prompt engineering, and when you would use fine-tuning versus prompt engineering.

I think the other interesting thing is to contextualize it with what’s going on out there from a business perspective: fine-tuning versus prompt engineering plus foundational models are, I think, two paths that people are debating. And for those of you who’ve read the Google memo, LoRA, which is what we’re talking about today, features prominently in the memo because it drives a lot of these cheap fine-tuned models that we’re seeing everywhere.

I’ll give you a brief example, advancing a bit to this slide here. Vicuna, which is this open source version of, you could call it, ChatGPT, or at least something on par with ChatGPT, was fine-tuned on the open source Llama model for around 300 dollars. That is insane if you think about GPT-4 or ChatGPT reportedly taking hundreds of millions of dollars to train. And behind that 300-dollar training is fine-tuning, and more specifically parameter-efficient fine-tuning.

The last interesting bit is that in the Google internal memo there’s this other point about aspirations for Google to incorporate near-real-time information into these large language models. If you’re doing that, there’s no way you can do a full fine-tuning on top of a base model, and so that’s where LoRA comes in.

With that context, let’s jump back into the paper. To start, it restates the problem: a lot of these adaptations to multiple downstream applications are done via fine-tuning, but the problem is that this baseline fine-tuning of the existing model produces a new model that contains as many parameters as the original. So this is how I visualize it. You have this simplified view of the pre-trained weights on the left and the weight updates on the right, and the problem is that Delta W is massive. Now, fine-tuning is not a totally new concept, but the problem the authors point out is that existing techniques often add inference latency on top of the existing model and, more importantly, these methods usually produce a fine-tuned model that doesn’t compare as strongly against full baseline fine-tuning.

And a visualization of what they mean here: in these existing techniques you typically have an adapter, and this adapter is probably another fully connected feed-forward neural net on top of the previous pre-trained weights. If X, all the way at the bottom, is the input, then inference has to propagate serially from the existing pre-trained weights, to the adapter, and finally to the output. So the problem with this is additional latency and, more importantly, it’s not as good as full baseline fine-tuning.

Aparna Dhinakaran, Chief Product Officer and Co-Founder at Arize AI: Maybe I can ask you something to level set. Maybe even just to start: why would someone want to fine-tune? What is the value prop of fine-tuning an LLM?

Harrison Chu: Yeah, I think depending on who you ask, the answer might be different. If I’m, say, a solo entrepreneur or indie hacker, and I want to build an application that isn’t using one of the OpenAI models or Anthropic or whatever, I might want to take an off-the-shelf open source model, let’s say Flan-T5 or GPT-2. I have a very defined set of tasks I want it to do; let’s say I’m trying to build a company around translating human language to SQL. That’s a perfect example, where there’s a lot of data out there for you to train on. You can take something off the shelf where the weights are open source and fine-tune it for your very specific objective.

Aparna Dhinakaran: Got it. And in this case maybe you can help me understand. One of the things this paper is saying is that it’s freaking hard to fine-tune; it’s not feasible if you have something like GPT-3 with its 175 billion parameters. What’s phenomenal about this paper is that you don’t have to go and fine-tune those billions of parameters; you can do something kind of in between. So what’s hard about fine-tuning? Maybe just walk us through that.

Harrison Chu: Yeah, so the way I would say it is, let’s say the world exists without LoRA or any of this greater class of parameter-efficient fine-tuning methods. You’re choosing between two evils. One evil is this one: the pre-trained weights W are this 175-billion-parameter GPT-3 model on the left, and on the right you have your fine-tuning weight updates. Clearly these two boxes are the same size, so they’re both 175 billion. It’s extremely expensive to train, and even after you’ve trained it, it’s also hard to deploy because the artifact is the same size. I don’t know exactly how large GPT-3 is, I think it was like 400 to 500 gigabytes; it’s large, and in a software sense not a very deployable artifact.

And the other evil is to use another existing fine-tuning technique, but then you’re stacking latency on top of it. Again, you can see the flow of data from the bottom to the top; it strictly has to go through this new adapter neural network. And maybe I should have flipped the two problems, because the bigger problem is that it’s just not as performant as baseline fine-tuning, so neither is really an attractive option. And if we want to jump ahead a bit, the key insight is: what if this Delta W can be smaller?

Aparna Dhinakaran: Okay, that was really helpful, just to kind of set the context.

Harrison Chu: Those are great questions that I think help the reading. So, importantly, these methods fail to match the fine-tuning baselines. This is exactly what we just talked about, your trade-off between two really unattractive options if you’re trying to fine-tune. And this is the key insight of the entire paper; I think if as a group we understand what the authors are saying here, the rest of the paper follows. The key insight is that models typically reside in a low intrinsic dimension. What they’re saying is, what’s a model? It’s a bunch of matrices, in some sense, and these matrices have this characteristic of being intrinsically low dimensional. And the key is they’re saying, we think the same characteristic also applies to this Delta W here, the weight updates: that it’s intrinsically low dimensional. That is the hypothesis we will use to shrink the Delta W down. And again, if we go back to the original not-so-attractive option, this baseline fine-tuning, it’s going to work; the problem is that it’s really big. So what if it can work and it doesn’t have to be as big? The way the authors bridge that is by saying, we think it’s intrinsically low dimensional, therefore there is a way we can represent it that doesn’t take as much memory and as many weights.

Aparna Dhinakaran: I’m curious, how good did they get? If we were to represent it in that lower-dimensional space, how good is that? Obviously it’s not going to be as great as the final model, so is it on average 80 percent? Is it 70? Or does it depend? That’s really interesting.

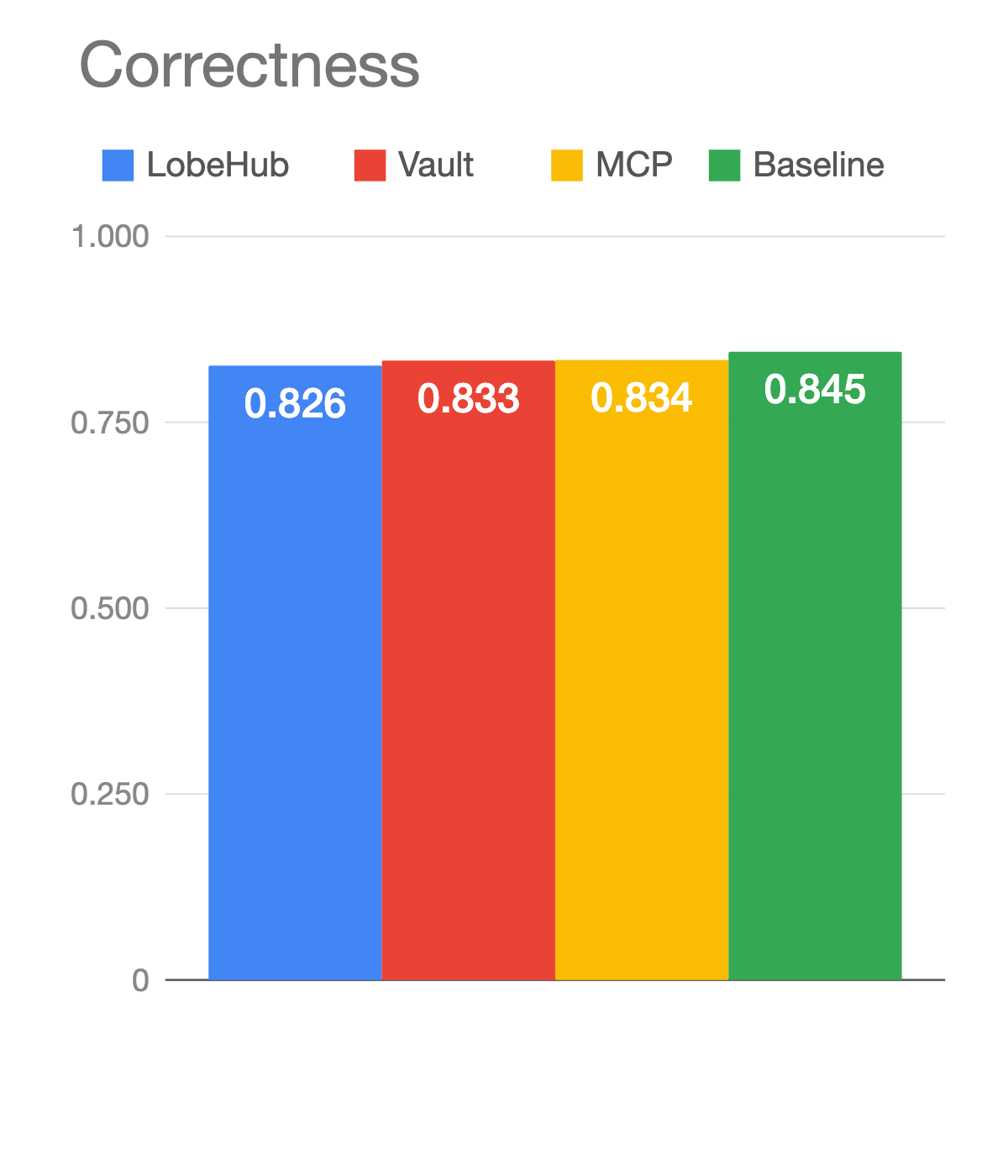

Harrison Chu: I mean, we can jump ahead a little bit; let’s go to the results. It’s actually astonishing. So there’s this dataset called WikiSQL, which is human language to structured SQL; that was the example we were giving earlier. In the paper, these rows are the baseline, the full fine-tuning, the really expensive one that takes a lot to train. And what you see is that using this smaller version, and if we have time we can get into it, this trick they found to represent the weight updates in something orders of magnitude smaller, insanely small, is actually better than the baseline. It’s one of those techniques and results in computer science that is just amazing: you don’t have to trade off anything. It’s even better than your baseline, and it costs orders of magnitude less time to train and deploy.

Aparna Dhinakaran: Do you predict you’re going to see more of this than fine-tuning, or what’s your take on that?

Harrison Chu: I think it depends on what you’re trying to do. Let’s take the solo entrepreneur thing. If I’m starting a company and I’m trying to build out a feature set, I probably wouldn’t reach for fine-tuning right away. Going back to that initial slide, I would probably be spending my time in the zero-shot and few-shot prompt area, and I think the graph is a useful way to contextualize fine-tuning versus prompt engineering and few-shot prompts. But I think this is actually kind of an exponential x-axis, right? It’s significantly harder to figure out fine-tuning than it is to write a few sentences, so if you’re a new company I would probably stick there.

If you’re a larger company, let’s say you’re an established player in internal dev tools or something, and you want to roll out something on your SaaS platform that translates customer queries, sorry, natural language into SQL queries, then you have customers that probably have privacy concerns and you say, okay, there’s no way I’m going to do this extra API call from where my product is deployed. You’re going to want to take something off the shelf and fine-tune it so you have control over it. That’s where I think this graph is not very applicable, because you’re not actually trading off task accuracy versus fine-tuning effort; you’re saying, I will go through that extra effort of fine-tuning, maybe I’ll use LoRA, but I’m doing that because I have control over the data and it doesn’t leak out over an API.

Aparna Dhinakaran: Yeah, and so do you feel like LoRA is kind of in between the yellow fine-tuning section here and the few-shot prompting? It’s like you’ve gotten as much as you can with prompting, you don’t want to go build a whole other model, and this is that middle ground that you can go try. Is that where you would place this, given that it’s missing from Andrej Karpathy’s image here?

Harrison Chu: Can you restate that? I’m not sure I quite understood the question.

Aparna Dhinakaran: Yeah, I guess where I see LoRA landing is in between prompting and full fine-tuning. For me, what was really interesting is that in the paper there are examples where LoRA actually did better than the base model, because it was optimized for a specific task, for a specific use case. And I’m trying to understand what it is giving up. Because it’s a smaller model, are there any trade-offs? What did it give up to optimize for X task? Did you get any of that sense from the paper, or is it just intuition?

Harrison Chu: So when we say baseline, we’re not talking about the baseline raw model. What the authors were comparing was LoRA against existing baseline fine-tuning, so I think we’re using baseline in two different ways. What I’m calling baseline is full fine-tuning, the classic case where I’m taking an off-the-shelf model and I’m updating all the weights, I’m backpropagating through everything. And what the authors were showing was that LoRA performs better than that existing method. Now, using baseline differently, as in the baseline foundational model: I’m assuming that, depending on what that model is, LoRA could perform better or worse. I don’t think the paper, unless I missed it, has a comparison there.

We’ve talked quite a bit about the implications, so what is it? How can you represent it smaller, and do that in a principled way? This is where I’m going to get a bit into the math, and I’ll try to keep it as simple as possible, but we’ll start with matrix decomposition. It’s very simple: what this graph is showing is that if you have a matrix A, you can express it as a product of the two matrices on the right being multiplied together. And if you see the K on the first matrix and the K on the second matrix, that’s how many columns the one has and how many rows the other has, and this is what in linear algebra terminology people call the rank of the matrix A. For the engineers and computer scientists here, you can think of K as a measure of how much information the matrix A contains. The larger the K, the more information that matrix actually contains.
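A minimal NumPy sketch of the factorization described above. The sizes (`d = 512`, `k = 4`) and the names `left` and `right` are made up for illustration and do not come from the talk or the paper. Strictly speaking, the inner dimension `k` is an upper bound on the rank of the product; with random factors the bound is met.

```python
import numpy as np

rng = np.random.default_rng(0)

d, k = 512, 4                          # hypothetical sizes, chosen for illustration
left = rng.standard_normal((d, k))     # tall, skinny factor
right = rng.standard_normal((k, d))    # short, wide factor

A = left @ right                       # full d x d matrix built from the two factors

full_entries = A.size                        # numbers needed to store A directly
factored_entries = left.size + right.size    # numbers needed to store the factors
rank = np.linalg.matrix_rank(A)              # cannot exceed the inner dimension k

print(full_entries, factored_entries, rank)
```

Storing the two factors takes `2 * d * k` numbers instead of `d * d`, which is where the memory saving discussed next comes from.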

Now sometimes I think it’s easier to think about it from right to left. On the right, I can imagine I have these two skinny matrices; if I multiply them together I get the matrix on the left. And for those of us in the audience who come more from a software engineering background, this should immediately stick out, because it takes way less memory to hold the information on the right, even though the resulting matrix on the left is exactly the same. That is going to be the trick. The key is that the update weights don’t start out looking like what’s on the right; what we have is the full matrix, and I want to get to this representation on the right that has a smaller memory footprint.

So we were talking about matrix multiplication going from the right to the left. Going from the left to the right is where we’ll take our second mathematical leap, and that’ll be the only one, I promise, which is the technique called singular value decomposition. What we’re seeing here is that you can take any arbitrary matrix, even our weight update matrix from this slide, and decompose it such that the middle matrix is zero everywhere except the diagonal. And the diagonal has a very special property, which is that the number of non-zero values on that diagonal tells you what this K value is. In other words, it tells you how much information that matrix contains, and the smaller that K value, the smaller you can make the representation on the right.
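As a rough illustration of the singular value decomposition step, the sketch below builds a small matrix that is rank 2 by construction (a hypothetical example, not the matrix on the slide), decomposes it with `numpy.linalg.svd`, and counts the singular values that are non-zero up to floating-point noise. The tolerance formula is one common convention, not something stated in the talk.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical 6 x 5 matrix built to have rank 2.
M = rng.standard_normal((6, 2)) @ rng.standard_normal((2, 5))

# M = U @ diag(s) @ Vt, with singular values s sorted from largest to smallest.
U, s, Vt = np.linalg.svd(M, full_matrices=False)

# Count the singular values that are non-zero up to floating-point noise.
tol = s.max() * max(M.shape) * np.finfo(M.dtype).eps
k = int(np.sum(s > tol))

# Keeping only the first k columns of U and rows of Vt rebuilds M (up to rounding).
M_rebuilt = (U[:, :k] * s[:k]) @ Vt[:k, :]

print(k, np.allclose(M, M_rebuilt))
```

The count `k` plays the role of the K value in the discussion: only the first `k` columns of `U` and rows of `Vt` are needed to reproduce the matrix.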

Any questions here before I move on to truly the final leap?

Aparna Dhinakaran: Yeah, this is great. Folks, feel free to drop questions or ask as well. This is great, Harrison.

Harrison Chu: So just going back to the paper, what I promised was that as long as we understand what they mean by low intrinsic rank, we’ll understand everything else. So the last mathematical leap is: okay, I get what a rank is, but what’s intrinsic rank? Let’s dive in. At the very top we have our initial decomposition. Remember, what I said was that the number of non-zero values in that middle matrix gives the rank K. More importantly, in this decomposition the singular values on the diagonal are sorted, and the larger the value, the more important the corresponding column is to the final matrix: the first column matters most, then the second, then the third, and so forth. Now in this example, in the top row where my cursor is, the yellow box is zero, so there’s no value there; this is K equals two. You can equivalently rewrite the matrix on the left by lopping off the last column without any loss in information. And that’s how I get to the third one, right? So even from the top row to the third row, we’ve already compressed our representation by a factor of two without any loss in information. Pretty cool, right? Now the final transition, from the third row to the final row, is what the authors mean by low intrinsic rank. If I notice that, say, the one on the bottom right is a low value, I can just pretend it’s zero and throw it away.

That means I can lop off the third column of my matrix and achieve an even smaller representation of my original matrix, with some loss in how closely it resembles the original; that’s why in the graph it’s M-bar instead of M. So to reiterate, in the first three stages you’re lopping things off but there’s no loss. At the final one you are taking a small value and throwing it away. It’s not the same matrix, but it’s a good enough approximation.

And this is the final graph, I really promise this time, just to build some intuition for why this should be true: that most singular values are very low, implying that the matrix is intrinsically low rank. If I treat this grayscale image as a matrix and apply the same technique, where I singular-value-decompose it and look at the singular values on the diagonal, that’s what this blue line represents. You can see it drops off really quickly, exponentially, around rank equals 10, but there are still 110 dimensions left. What this graph is saying is you can probably lop those off, recombine the matrix, and you’ll get something that really resembles the original image. Really, all I’m saying here is I’m taking a long detour to describe compression.
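The lossy step can be sketched with a truncated SVD. The matrix below is a synthetic stand-in for the grayscale image, not the image from the slide: it is 120 x 120 with singular values that decay exponentially (`sigma_i = 100 * 0.5**i`), an assumption chosen only to mimic the shape of the curve described.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 120

# Hypothetical stand-in for the image: orthogonal factors around a diagonal
# of exponentially decaying singular values.
Q1, _ = np.linalg.qr(rng.standard_normal((n, n)))
Q2, _ = np.linalg.qr(rng.standard_normal((n, n)))
sigma = 100.0 * 0.5 ** np.arange(n)
M = (Q1 * sigma) @ Q2.T

U, s, Vt = np.linalg.svd(M)

def truncate(r):
    """Rank-r approximation: keep the r largest singular values, drop the rest."""
    return (U[:, :r] * s[:r]) @ Vt[:r, :]

for r in (1, 5, 10):
    M_bar = truncate(r)
    rel_err = np.linalg.norm(M - M_bar) / np.linalg.norm(M)
    stored = r * (n + n + 1)   # r columns of U, r rows of Vt, r singular values
    print(f"rank {r:2d}: relative error {rel_err:.2e}, stores {stored} of {M.size} numbers")
```

With a spectrum that decays this fast, keeping 10 of the 120 directions leaves only a small relative error while storing a fraction of the numbers. How well this carries over to a real image or a real weight update depends on how quickly its singular values actually fall off.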

Aparna Dhinakaran: Yeah, I mean, sorry, go ahead. This seems like LoRA in one picture!

Harrison Chu: That’s it, that’s LoRA in one picture. We go through all these justifications for why we can do this transformation, and at the end of the day all the authors did was take the original weight update matrix and substitute these two skinny matrices that, multiplied together, give back the approximation. That’s really it. I’ll pause there. Any questions?
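A minimal NumPy sketch of that substitution, for a single linear layer. The class name `LoRALinear`, the layer sizes, and the `0.01` init scale are illustrative assumptions. The zero initialization of `B`, the random initialization of `A`, and the `alpha / r` scaling follow the description in the LoRA paper. There is no training loop here, only the forward pass and a parameter count.

```python
import numpy as np

class LoRALinear:
    """Frozen weight W0 plus a trainable low-rank update B @ A (illustrative only)."""

    def __init__(self, d_out, d_in, r, alpha=1.0, seed=0):
        rng = np.random.default_rng(seed)
        self.W0 = rng.standard_normal((d_out, d_in))     # pre-trained, kept frozen
        self.A = rng.standard_normal((r, d_in)) * 0.01   # trainable, random init
        self.B = np.zeros((d_out, r))                    # trainable, zero init
        self.scale = alpha / r

    def forward(self, x):
        # x has shape (batch, d_in); the frozen and low-rank branches are summed.
        return x @ self.W0.T + self.scale * (x @ self.A.T) @ self.B.T

    def trainable_params(self):
        return self.A.size + self.B.size

layer = LoRALinear(d_out=1024, d_in=1024, r=4)
x = np.ones((2, 1024))
print(layer.forward(x).shape)
print(layer.W0.size, layer.trainable_params())
```

Because `B` starts at zero, the layer initially behaves exactly like the frozen pre-trained layer, and only the `2 * 1024 * 4` entries of `A` and `B` would be updated during fine-tuning.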

Aparna Dhinakaran: I think one of the things that the paper started with, maybe I can start there: this looks really simple, this looks great, but it is actually pretty novel. Earlier in the paper they talk about how, prior to this, teams would add these adapter layers to LLMs, and the con with that is obviously that it introduces inference latency. So you have your LLM response and you have this adapter, almost another model, that takes the response and maybe makes it better in some language, and that adds to the inference latency downstream.

What you have here is actually pretty novel, though, because this approach doesn’t have that inference latency and it still gets you whatever optimization you’re trying to apply to the response. Where does this break? Where do you feel like this might not work? One of the questions I had was, well, great, why doesn’t everyone use this?

Harrison Chu: Yeah, there’s two very interesting things I’ll say about it. One is that in hindsight these things always look really obvious, and you’re like, oh, all you did was change the shape of a freaking thing, why didn’t I think of that, right? I think really big innovations will often seem obvious. Where I think it could break is that this is a very simplified view of what happens. If I double-click into that weight update thing, it’s not just one neat matrix; there’s this whole Transformer mechanism here, and there are actually multiple neural networks working together to produce an LLM. So where it gets challenging, and where it might break, is when you think about, well, where the hell do I put this adapter?

Aparna Dhinakaran: Can you pull up the paper, actually? Maybe we can dive in; I think there’s a section on practical benefits and limitations. I think it’s page five. Yeah, right above the empirical experiments. So that last paragraph says LoRA has its limitations: it’s not straightforward to batch inputs to different tasks with different A and B in a single forward pass. I didn’t totally understand that, and they didn’t add a lot of detail on it. Tell me if you agree, but my interpretation was that it’s about figuring out the A and B matrices that you’ve got to use. So if we started with an example, let’s say we’re trying to optimize the LLM for a specific language, probably one that they didn’t have a lot of data on, and you’re trying to answer back to people. Is it about getting these inputs and figuring that out in a single forward pass? I don’t know if you have an interpretation of this.

Harrison Chu: Say you fine-tuned your LLM on, let’s just use the SQL example again, and then maybe you have another one where I’ve also fine-tuned it to write pandas DataFrame code. Okay, so I have two fine-tuned versions. What you’re saying here is, well, each one I have to attach this adapter to, and I actually think we should get into the details of that a little bit after this. I think another practical limitation they’re describing is: okay, let’s say I figured out where to attach this adapter, but if I have a batch of inputs that has both the SQL example and the pandas DataFrame example, it doesn’t work. Each fine-tuned adapter can only do that one fine-tuning task; I can’t batch them together. And I’m going out on a limb here, I haven’t read the other papers for the existing adapters, but I think the existing adapters did have some sort of fusion thing going on, where you have multiple layers of adapters and there’s another thing that fuses them together.

I can’t help but view this from my background, which is mostly in distributed systems and data engineering. I tend to feel that for these problems, where your input is multiplexed and has to go to different models, there are probably easier levers to pull on the deployment side, with more traditional distributed systems engineering, than trying to solve this limitation they’re talking about here, which is that an adapter can only work on one task, and if you have an input batch that spans multiple tasks, it can’t multiplex. But you’re right, it is a limitation the authors call out.
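One way to read the batching limitation is sketched below. The task names, the sizes, and the per-example routing loop are all illustrative assumptions. The paper only notes that merged weights cannot serve a mixed batch, and that one can instead leave the weights unmerged and choose the LoRA module per sample when latency is not critical.

```python
import numpy as np

rng = np.random.default_rng(0)
d, r = 16, 2
W0 = rng.standard_normal((d, d))   # shared frozen base weight

# Two hypothetical task adapters, each stored as a (B, A) pair.
adapters = {
    "sql": (rng.standard_normal((d, r)), rng.standard_normal((r, d))),
    "pandas": (rng.standard_normal((d, r)), rng.standard_normal((r, d))),
}

def merged_weight(task):
    B, A = adapters[task]
    return W0 + B @ A              # one dense matrix per task, no extra latency

def forward_unmerged(x, tasks):
    """Mixed batch: shared W0 matmul, then a per-example low-rank correction."""
    out = x @ W0.T
    for i, task in enumerate(tasks):
        B, A = adapters[task]
        out[i] += B @ (A @ x[i])
    return out

x = rng.standard_normal((2, d))
mixed = forward_unmerged(x, ["sql", "pandas"])

# Each row matches what that task's merged model would produce...
assert np.allclose(mixed[0], merged_weight("sql") @ x[0])
assert np.allclose(mixed[1], merged_weight("pandas") @ x[1])
# ...but no single merged matrix serves both rows at once.
assert not np.allclose(mixed, x @ merged_weight("sql").T)
```

The unmerged path keeps both tasks in one batch at the cost of extra per-example work. Routing each task to its own merged copy, as suggested in the discussion, is the deployment-side alternative.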

The other limitation I wanted to go to is that we’re vastly oversimplifying here, right? If we look at what’s in the attention head: the existing adapter methods will have you adding these adapter matrices atop the feed-forward mechanisms, but what the authors are saying is, we don’t know where to attach it, so let’s just attach it to some of the attention heads and maybe not others. And here’s where things get super interesting.

Just give me one more second to try and find these results. So there’s this open question: which weight matrices in the Transformer should we apply this to? Is it the query, is it the key, is it the value? And you can see what they’ve tried here: what if we spent our entire parameter budget on the query matrix, or our entire parameter budget on the key matrix? In the end it turns out that if you just spread it evenly across the query, key, and value matrices, that does the best. I think one of the limitations is that it’s yet another hyperparameter I have to tune, which is maybe the annoying part. My intuition is people probably end up just spreading it evenly across all the matrices, but that additional hyperparameter is another annoying part of this methodology, if you ask me.
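The idea of a fixed parameter budget can be made concrete with a little arithmetic. In the sketch below, the hidden size (12288) and layer count (96) are the commonly cited GPT-3 175B shape and should be treated as assumptions, as should the option names. The point is only that a higher rank on fewer matrix types and a lower rank on more matrix types can cost the same number of trainable parameters.

```python
def lora_params(d_model, r, n_matrix_types, n_layers):
    """Trainable parameters when square d_model x d_model matrices get rank-r updates.

    Each adapted matrix adds B (d_model x r) and A (r x d_model).
    """
    return 2 * d_model * r * n_matrix_types * n_layers

# Assumed GPT-3 175B shape: hidden size 12288, 96 Transformer layers.
D_MODEL, N_LAYERS = 12288, 96

options = {
    "query only, r=8": lora_params(D_MODEL, 8, 1, N_LAYERS),
    "query + value, r=4": lora_params(D_MODEL, 4, 2, N_LAYERS),
    "query, key, value, output, r=2": lora_params(D_MODEL, 2, 4, N_LAYERS),
}
for name, count in options.items():
    print(f"{name}: {count:,} trainable parameters")
```

All three options come out to the same count, so the choice of where to spend the rank is a separate knob from how many parameters are trained, which is the extra hyperparameter being described.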

Aparna Dhinakaran: Got it, that’s super interesting. I’m really excited to see whether people actually deploy this and use it. There’s clearly some upside: I don’t have to retrain GPT-4, that’s a big one. But the last point, about different tasks and batching, is the one that stands out to me when I think about deploying this in a product. If you’re trying to balance two or three different things to fine-tune on, you’d have to be really specific about which task you want to optimize. And maybe, if you’re focusing on the pandas thing or on a specific language, that is a fine trade-off.

Harrison Chu: I think it is. I think the mixed-batch thing is, to me, more a question of how these AI businesses will evolve, and whether they are going to evolve to be more vertical. My personal bet is that the need for one model that is super generalizable, zero-shot across everything, is always going to live in the foundational layer. I don’t think you’re going to want to get into that fight as a business, assuming the thesis plays out, which is that you have a lot of these small businesses fine-tuning on top of these open source models.

The one last thing I want to make sure we get to is just how much smaller the weights eventually are. The one they used in their benchmarks was 35 megabytes. It doesn’t mean you can just download 35 megabytes and you have the model; it is, again, an adapter on top of an existing one. But zooming out from the mechanics of building models, it makes it far easier to host these and share these. It’s literally 10,000 times less the cost, and we’ve seen this play out in every sort of domain, right? When we lower the cost or the friction of something, you get this sort of thousand-flowers-bloom effect, and I think that is why this is super exciting.
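A back-of-the-envelope check on those sizes, under stated assumptions: 16-bit weights (2 bytes per parameter), a 175-billion-parameter base model, and a hypothetical adapter of rank 4 on two 12288 x 12288 matrices in each of 96 layers. These settings are picked to land near the figure quoted above and are not taken from the talk.

```python
def checkpoint_bytes(n_params, bytes_per_param=2):
    """Storage for n_params values; 2 bytes per value assumes 16-bit floats."""
    return n_params * bytes_per_param

full_model = checkpoint_bytes(175_000_000_000)   # every weight of a 175B model

# Assumed adapter: rank 4 on two 12288 x 12288 matrices in each of 96 layers.
adapter_params = 2 * 12288 * 4 * 2 * 96
adapter = checkpoint_bytes(adapter_params)

print(f"full model: {full_model / 1e9:.0f} GB")
print(f"adapter:    {adapter / 1e6:.1f} MB")
print(f"ratio:      {full_model / adapter:,.0f}x")
```

Under these assumptions the adapter lands in the tens of megabytes against hundreds of gigabytes for the full model, a gap of roughly four orders of magnitude, which is consistent with the 35 megabyte and 10,000x figures mentioned.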

Aparna Dhinakaran: Yeah. Do you feel like this is especially exciting for the open source set of LLMs? Is there any way, I guess, to use it with something like GPT-4? Where do you feel like we will see the biggest usage of something like LoRA?

Harrison Chu: The complement of LoRA is a reasonable foundational model. And I think the funniest thing, just going back to this Vicuna thing, is that it is very impressive, but you can make a reasonable claim that the complements are kind of stolen-ish, you know what I mean? Llama was leaked from Facebook and it doesn’t have a commercial license, and the data they trained it on using LoRA came from a site called ShareGPT, which is people posting their ChatGPT prompts and responses. I think the latter, where you get the data from, is probably less contentious; you’ll find things where there’s less of a gray area in terms of the legality of the data. I’m not completely up to date on the latest in open source, but I do think the complement, and whether or not this gets even more adoption from open source than it has already, is the existence of really good, or at least reasonable, foundation models to build on top of.

Aparna Dhinakaran: One last thing, and then I think we’re getting close to time. One thing we’re hearing out in the Twitterverse is that people are stacking LoRAs, so especially in the image space you can stack one style and another style. I think this is something Hugging Face is actually working on with StabilityAI. I’m not totally sure how that works in all the details, but any thoughts on that? Do you feel like this might be especially interesting for image or other applications?

Harrison Chu: To be honest, I don’t know. I’m nowhere near as familiar with these models as they are deployed in the image space. The authors do make this claim, actually, we can go back here. Maybe it’s not as impressive a thing, but the reason why there’s no additional inference latency is how the forward pass eventually ends up looking. It gets split: you have this other path, with the original embedding getting matrix-multiplied by A and B, and then combined. That’s why there’s no additional latency, because you can parallelize that part. Maybe I’ll open another window here so you can compare. What they’re saying is that because we’re using a different data path, you can also stack your original adapter on top. That’s what they mean by orthogonal to existing adapter methods. I don’t know if that’s going to be super useful if you’ve gone through all this trouble of making sure you can get this down to a reasonable size and then you use another adapter. I don’t know how the stacking will work in the image space; I’m just not familiar enough to have an opinion on it.
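The latency argument can be checked numerically. Beyond running the two branches in parallel, the LoRA paper points out that for deployment the product `B @ A` can be added into the frozen weight once, so inference is a single matrix multiply of the same shape as before. The sketch below uses made-up sizes and random stand-ins for trained factors to confirm the two views give the same output.

```python
import numpy as np

rng = np.random.default_rng(0)
d, r = 64, 4
W0 = rng.standard_normal((d, d))   # frozen pre-trained weight
B = rng.standard_normal((d, r))    # trained low-rank factors (random stand-ins here)
A = rng.standard_normal((r, d))
x = rng.standard_normal((8, d))    # batch of 8 inputs

# Training-time view: two parallel branches whose outputs are added.
h_branches = x @ W0.T + (x @ A.T) @ B.T

# Deployment-time view: fold the update into one matrix, then a single matmul.
W_merged = W0 + B @ A
h_merged = x @ W_merged.T

print(np.allclose(h_branches, h_merged))
print(W_merged.shape == W0.shape)
```

Since `W_merged` has the same shape as `W0`, the served model is no deeper and no wider than the original, unlike an adapter layer that inputs must pass through in sequence.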

Aparna Dhinakaran: I think we’re all excited to see how this actually gets used. Harrison, this was super helpful, thank you for breaking down the LoRA paper. Thank you everyone for joining. I feel like I walked away learning a lot about this paper, so thank you all. We’ll do this again next week with a different paper; drop some suggestions and we’ll see you there. Thanks, everybody.