Orca: Progressive Learning from Complex Explanation Traces of GPT-4 Paper Reading

Introduction

Recent research focuses on improving smaller models through imitation learning using outputs from large foundation models (LFMs). Challenges include limited imitation signals, homogeneous training data, and a lack of rigorous evaluation, leading to overestimation of small model capabilities. To address this, we introduce Orca, a 13-billion parameter model that learns to imitate LFMs’ reasoning process. Orca leverages rich signals from GPT-4, surpassing state-of-the-art models by over 100% in complex zero-shot reasoning benchmarks. It also shows competitive performance in professional and academic exams without CoT. Learning from step-by-step explanations, generated by humans or advanced AI models, enhances model capabilities and skills.

Join us every Wednesday as we discuss the latest technical papers, covering a range of topics including large language models (LLM), generative models, ChatGPT, and more. This recurring event offers an opportunity to collectively analyze and exchange insights on cutting-edge research in these areas and their broader implications.

Transcript

Jason Lopatecki, Co-Founder and CEO, Arize AI: We’ll kind of let people trickle in here. How’s life over at Harvey?

Brian Burns, Founder, AI Pub + Recruiter, Harvey AI: Oh, it’s quite good. It’s definitely hectic. There is a lot going on. For people on this call, I very recently joined to lead talent recruiting here, and it’s a lot of fun.

Jason Lopatecki: We’ll give folks a little bit more time to make their way in, and once they do, maybe we’ll give you a chance to re-intro.

So how did you start AI Pub originally?

Brian Burns: It was really random, actually. I took a leave of absence from a machine learning PhD in Seattle, and I wanted to do something small and entrepreneurial in the machine learning space. It actually started with a blog that I called Computer Vision Oasis. I thought I would publish articles on computer vision, and basically no one read it. Then I started posting on Twitter about computer vision. And then, with the whole foundation model paradigm shift over the last two years or so, I came to realize that it’s more interesting to tweet about machine learning broadly than narrowly about computer vision. So I started to do that, and then the Twitter account kind of blew up.

Jason Lopatecki: With all of the in-person meetups in the Bay Area, an AI explosion kind of started to occur, and you did some of those early meetups. When did that start happening?

Brian Burns: I definitely don’t want to peg myself as starting any of it. When I moved back here last summer from Seattle, there were already a lot of events. I think there’s a lot of VC and startup activity that really kicked up around the fall with ChatGPT. Even before that there was a lot going on, but after ChatGPT there were big events, popular events, funded events, and I think that started around October or something like that.

Jason Lopatecki: Yeah, I mean, it’s pretty insane right now.

Now that people have joined, maybe do an intro of yourself and then we can get started. Your background, where you started, where you are now.

Brian Burns: Sure, I’ll keep it short. I’m Brian. I dropped out of a machine learning PhD at the University of Washington, and I’m interested in computer vision. Last year I started a Twitter account called AI Pub, covering technical AI research topics. I ran a podcast for a while with Jason and Arize covering AI research papers, and this is kind of a variant of that. I also started a talent referral business for AI startups. I’m at Harvey, which builds language models, systems of language models, and generative AI software for law firms. I recruited for them for a really long time and just joined the team to lead talent recruiting a couple of weeks ago. So that’s what I’m up to now.

Jason Lopatecki: Awesome. And we hear about Harvey everywhere. It’s an amazing startup in the generative ecosystem, with a legal assistant as the focus.

Let’s hop in. I’m a Founder here at Arize, and what we’re going to do today is the Orca paper. And Brian, I’ll let you kick it off. Do you want to describe your take on the abstract–the big picture–and I can kind of do the same?

Brian Burns: Sure. Orca falls under this general topic of using a very large, very advanced foundation model like GPT-4 to train and fine-tune a much smaller language model of around 10 billion parameters. There are other examples of this trend, things like Vicuna, LLaMA, or whatever, and it’s actually very relevant to engineering. We do things like this at Harvey: using large, intelligent language models to fine-tune language models that are fast on given tasks. The topic of this paper is improving the training process for these small language models. Basically, by using techniques similar to step-by-step prompting or chain-of-thought prompting, they demonstrate that they can fine-tune small language models to perform much better, one, in general, and two, especially on complex reasoning tasks. That would be my sense of the paper at large.

Jason Lopatecki: One angle I would add is that there’s a theme in a lot of recent work around the quality of data, in both training and fine-tuning. I think both are going to matter. This one obviously focuses on the quality of data for fine-tuning. There’s one school of thought which is: just throw lots of data at it. The case here is more that the quality of the data you fine-tune on matters. There’s a recent paper from Facebook where a thousand samples of well-thought-through fine-tuning data beats Vicuna. So the goal of this felt like: can we beat Vicuna? Can we get close to ChatGPT with a very small model and very selective, thoughtful data? And then there’s how they produce that data from a large foundation model. There are some really unique ideas in this, too.

Brian Burns: I have a physical paper here so I’ll follow along.

Jason Lopatecki: I feel like there’s a generation that I’m still in, the paper generation. The early section here asks: can you use a large model to supervise and teach a small model? It’s what we’ve been talking about, teachers, and using outputs from a large language model. I think the first was probably Alpaca, which came out a couple of weeks after LLaMA dropped. Alpaca’s data set was largely generated from ChatGPT. So it was the very first example that turned LLaMA from not a great foundation model into something that felt pretty decent. It was that first leap. And then the question here becomes: how does that idea extend beyond this first incarnation?

And then I think Vicuna was the example where there’s a website of, you know, natural conversations with GPT-4, and they just used the chats people posted there. So both of these fit that pattern of using a foundation model’s outputs to train a smaller model. What can you do with that?

Brian Burns: Yeah, one thing that was also interesting in this section, and maybe it’s a little bit negative, is that they critique some of these other small language models. The authors of the other papers say that these models perform just as well as, say, ChatGPT. But really that’s only on a limited set of benchmarks, and if you extend the benchmarks or include more complicated reasoning tasks, ChatGPT actually ends up performing better than these smaller models. The sense I get is that’s part of what motivated this paper.

Jason Lopatecki: I think it was like 70,000 examples of shared chats with GPT on this website. And you would think, okay, it might catch up, it might get some of the general feeling of the responses. But is it really learning anything in that fine-tuning process? I think they’re hinting here that it’s not. When you look at reasoning or real tough problems, that’s just not enough from a fine-tuning perspective.

Brian Burns: If we’re chatting about these things, one thing I’ve been thinking about lately, and maybe this is kind of obvious, is just the extreme difficulty of general benchmarking of language models, especially on complex tasks. We’re getting to the point where these language models are better than humans in many domains. This is obviously true at Harvey, where we’re trying to benchmark language models for legal performance, both within specific legal domains and for legal work in general. It is really, really difficult to generically assess performance across a lot of benchmarks. And this is a theme among a lot of companies that are using language models to do complex human tasks. Take GitHub Copilot: I don’t know how the benchmarking works when they swap in a different model, but I assume it’s incredibly difficult. Or Jasper: how do you benchmark models for copywriting? What are the data sets for that? What are the various sub-skills? So it’s just very hard.

Jason Lopatecki: I agree. There’s this whole area of model evaluation, which is blowing up. If you look at OpenAI Evals, I was blown away by how many people have contributed an evaluation set to that. It’s an insane number now, but it’s still such an early field. There’s a write-up from Hugging Face on why their leaderboard’s results on the exact same eval set didn’t match the paper. It turned out the harness itself was adding little bits to the prompt template, and the harness was even measuring the output differently from one execution to another. Evals itself is such an early field. They touch on it a little in this paper, but one thing the paper does a good job of is running a really extensive eval. Part of the problem with Alpaca was that you had pretty simple evals, so you didn’t really see the holes or problems in the model itself. You have to run a lot more evals to actually see those holes.

So this section is on the challenges with existing models, like the Alpacas, and really it’s hinting at how limited their instruction and fine-tuning data sets were. This example is talking about human-contributed data, so maybe it’s talking about Vicuna. On content generation and information-seeking queries, the model got the style, but maybe not the reasoning, which makes sense.

Brian Burns: The one thing that was interesting for me here was the limited imitation signals. You train these things on query-response pairs from, say, a big model like GPT-4. The idea in part of this paper is that they wanted to enrich these signals, and the way the authors do it is basically via some kind of chain-of-thought or step-by-step, more elaborate prompting, where the model explains more of its reasoning process. But they also talk about other ways, at least theoretically, that you could get imitation signals, like logits or intermediate representations. You can’t do this with GPT-4 because these are not publicly exposed. But if and when there is some kind of open source version of GPT-4, some kind of mega model that’s open source, you could do something like this, and potentially the training could get much better because you get those intermediate representations. So I thought that was an interesting idea.

Jason Lopatecki: I hadn’t thought about this much; it’s an interesting angle. And then they do talk about evaluations here, so I think we talked a little bit about the challenges with evaluation. GPT-4 bias has been coming up quite a bit, I think. You see things like the long-text bias of GPT-4.

Brian Burns: They also said when you’re comparing two models, it actually just likes the first one a little bit better.

Jason Lopatecki: Okay, that’s funny. Yeah, there are probably a lot of subtle things to figure out. I’ve grown comfortable with it, but it feels like there are so many nuances that we all need to figure out. It feels like it’s the only way to really scale these evaluations, but there’s a lot to unpack in terms of the mistakes it can make. On to the key contributions. Did you have some thoughts on these?

Brian Burns: To me the main sauce of the paper, the main idea, at least personally, is augmenting this query-response process. What the previous papers, Vicuna, Alpaca, LLaMA, etc., have done is train on a set of queries to a language model and the responses from that language model. Basically, this whole paper adds one layer on top of that. Let’s look at an example. Previously, these models were trained on just these latter two pieces, the query and the response. The main idea of this paper, to me, is to add this first additional prompt, which is a system instruction. It says something like: you are an AI assistant, the user will give you a task, your goal is to complete the task as faithfully as you can, and while performing the task, think step by step and justify your answers. The authors have a total of something like 16 of these different system instructions. So the whole paper, in my mind, in a sentence, is augmenting the data with these system messages of various sorts to cause the language model to be more elaborate and give more step-by-step reasoning in the output. That’s to me the main idea of the paper.
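To make the contrast concrete, here is a minimal sketch of the two data formats described above. It is an illustration under stated assumptions, not the authors’ pipeline: the function names, the dictionary keys, and the `teacher` callable are hypothetical, the first system message paraphrases the one read out in the discussion, and the second is a made-up placeholder.

```python
import random

# The first message paraphrases the instruction read out in the discussion.
# The second is a made-up placeholder; the paper's full set of roughly 16
# messages is not reproduced here.
SYSTEM_MESSAGES = [
    "You are an AI assistant. The user will give you a task. Your goal is to "
    "complete the task as faithfully as you can. While performing the task, "
    "think step by step and justify your answers.",
    "You are a helpful assistant. Explain your reasoning before answering.",
]


def build_plain_example(query, teacher):
    """Earlier style: train on (query, response) pairs only."""
    return {"user": query, "assistant": teacher(None, query)}


def build_explained_example(query, teacher, rng=random):
    """Style discussed here: sample a system message, send it to the teacher
    together with the query, and keep all three pieces as training data."""
    system = rng.choice(SYSTEM_MESSAGES)
    return {
        "system": system,
        "user": query,
        "assistant": teacher(system, query),
    }
```

Here `teacher` stands in for a call to the large model: any function that takes a system message (or `None`) and a query and returns text. The only difference between the two builders is that the second one conditions the teacher on a system message and stores it with the pair.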

Jason Lopatecki: I think what’s fascinating is that it’s really about how to think through a problem, and fine-tuning on that is what makes the expression of reasoning better. That’s kind of the big idea.

Brian Burns: It is very interesting to think about LLM psychology. Andrej Karpathy posted about this on Twitter. He says that interfacing with a language model is like interfacing with a really smart person who has the mandate to produce the next word in the next second. With these language models, no matter how hard the thought you’re asking of them, they use the same amount of compute to produce the next token, whether it’s a really simple thought or a really complex one. So in a way these step-by-step instructions give these anxious language models a little more room to breathe when they have to think; you give them more time. I wonder if there’s been a paper or an experiment where you just somehow allow the language model to keep saying something over and over again, and then maybe, with that, it does better. So I think a lot of the step-by-step instruction basically gives the language model more time to think, because it’s programmed to just produce the next token.

Jason Lopatecki: I would say a lot of things have come out recently, like scratch pads and ways of inserting information into that. My take is that attention works by basing your next word on all the previous words. So by filling things out and having more context, you’re more likely to be applying more embeddings and information relevant to your subject, plus attention, to that next word. By expanding the reasoning out, you’re providing more information to that next word, which is what the scratch pads and some of the latest stuff do. It’s exactly that: you’re giving it more space to apply more of the same ideas before it generates that final answer. And then, in this case, you’re fine-tuning it to understand that reasoning better, based on the data from the other model.

So then they talk a little bit about data set construction. This is the system message stuff you were talking about, all the different variations that try to get the model to be slightly more verbose or thoughtful in the data it’s sharing, which is then going to be the fine-tuning data.

Brian Burns: One thing that I thought was interesting, and I think they actually talk about it in the next section or on the next page, is that they sampled something like five to one from ChatGPT versus GPT-4. And they said, if I remember correctly, that they got slightly better performance by first training on all the ChatGPT data and then later on the GPT-4 data, the idea being that there’s some kind of curriculum learning going on. First you learn the simpler reasoning from the simpler model. Then, once you’re smarter and you’ve almost graduated to the next level, you get to learn from GPT-4. Supposedly that kind of progressive learning trained the model somewhat better.
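The ordering described here can be sketched as a simple two-stage schedule. This is an assumption-laden illustration of the idea as recalled in the discussion, not the paper’s training code: the function name and stage labels are hypothetical, and the toy lists only mirror the roughly five-to-one ratio mentioned above.

```python
def progressive_schedule(chatgpt_examples, gpt4_examples):
    """Yield (stage, example) pairs: every ChatGPT-derived example first,
    then every GPT-4-derived example, rather than one shuffled mixture."""
    for example in chatgpt_examples:
        yield ("stage_1_chatgpt", example)
    for example in gpt4_examples:
        yield ("stage_2_gpt4", example)


# Toy data in roughly the 5:1 proportion mentioned in the discussion.
chatgpt_data = [f"chatgpt-{i}" for i in range(5)]
gpt4_data = ["gpt4-0"]

for stage, example in progressive_schedule(chatgpt_data, gpt4_data):
    print(stage, example)
```

The point of the sketch is only the ordering: the simpler teacher’s data is exhausted before the stronger teacher’s data is seen.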

Jason Lopatecki: Interesting. Well, let’s keep going through the data set side of this. So there are different system messages for different data sets. This is talking about, again, how they come up with their data sets for generating the fine-tuning data. Note that some of these they sample. They’re definitely trying to get a smaller data set, but it’s still bigger than, say, Alpaca’s or Vicuna’s. And it talks about the different data sets and types. I think it’s a lot more thoughtful in the fine-tuning data selection, clearly more thoughtful than the first versions, which is the whole idea of this: can we be more thoughtful in our generation of fine-tuning data and task sets? And this just gives examples from some of the data sets and how they sample. It’s kind of nice.

Comparing ChatGPT and GPT-4 responses, it’s pretty clear GPT-4 has more to say. I think it’s well known that you get these longer responses from it. It’s probably because of the way it’s fine-tuned or aligned, but I think they’re just trying to note it here.

So this gets into the baseline models and test sets. To me it felt like: can I get close to ChatGPT with a smaller size? And can I beat Vicuna now?

Brian Burns: There’s an interesting diagram, an eval diagram, later in the paper, where it shows you how Orca lines up against ChatGPT, against GPT-4, and against human performance.

Jason Lopatecki: Yeah, there are some really good pictures. We’ll keep going, but you’re right, there are some really good pictures that show how they did across this set of evals.

Brian Burns: I don’t think that was the main innovation of the paper. Again, I think the main thing is the system prompts for fine-tuning. But I do think that was another major element of the paper: extending the eval data set to include much harder reasoning tasks, to really see how these models match up against much larger models like ChatGPT or GPT-4.

Jason Lopatecki: Have you seen the Hugging Face leaderboard? I feel like everyone’s trying to get the top spots now. There are three or four evals on it that they use to sort the latest models, and everyone’s trying to get up there. But you realize that you potentially want a much broader, and more selective, set. I can imagine that as a new model comes out, folks at Harvey, or folks wherever, might want to know more than just how it’s doing on these three eval sets. This whole field of evals and leaderboards is still early in its incarnation. If you only ran three eval sets, you wouldn’t see all the holes. So how do you get a broad enough set, with enough detail, to know that this latest thing is going to be good for what you want it to work on? They did a really good job of building the test set here. There’s stuff on reasoning. There’s stuff on open-ended generation. They obviously compare against Vicuna, which is again very simple in generation.

Brian Burns: What I found interesting is how these evals actually work, because ultimately you have to come up with a number even though the response is completely open-ended. So they end up having to programmatically parse the model responses. I’m pretty sure there’s an intermediate language model. It says something like “among 0 through 3, the answer is,” and it converts the long-form response into basically a multiple-choice answer. I also thought that was kind of interesting.

Yeah, just the amount of processing that needs to be done to actually make these open ended evals numeric is…
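As a rough illustration of what “making an open-ended response numeric” involves, the sketch below pulls a choice index out of free-form text and scores it against a gold label. It deliberately swaps in a plain regular expression for the intermediate language model mentioned above, so it is a simplified stand-in rather than the paper’s method; the function names and the “answer is N” convention are assumptions.

```python
import re

# Matches phrases such as "the answer is 2" or "The answer is (2)".
_ANSWER_PATTERN = re.compile(r"answer is\s*\(?(\d+)\)?", re.IGNORECASE)


def extract_choice(response, num_choices=4):
    """Return the last stated choice index in a free-form response,
    or None if no valid index in [0, num_choices) is found."""
    matches = _ANSWER_PATTERN.findall(response)
    if not matches:
        return None
    choice = int(matches[-1])
    return choice if 0 <= choice < num_choices else None


def accuracy(responses, gold_labels):
    """Fraction of responses whose extracted choice equals the gold label.
    Responses that cannot be parsed count as wrong."""
    if not responses:
        return 0.0
    correct = sum(
        extract_choice(r) == g for r, g in zip(responses, gold_labels)
    )
    return correct / len(responses)
```

Even this toy version shows why harness details matter: an unparseable response is scored as wrong here, and a different harness could reasonably choose to skip it instead, which changes the final number.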

Jason Lopatecki: Yeah, I totally agree. And it can vary quite a bit even by the harness you choose for the same eval sets, which is what we’ve seen. For a lot of these, I think GPT-4 is the rater in the evaluation. They give some examples later on, like “pick a number between 0 and 10 to rate this.” So depending on your eval set, there are even different approaches to getting that number in the end, which I think is also the challenge in comparing things.

Any thoughts? In this section, it’s Orca versus ChatGPT, or versus GPT-4.

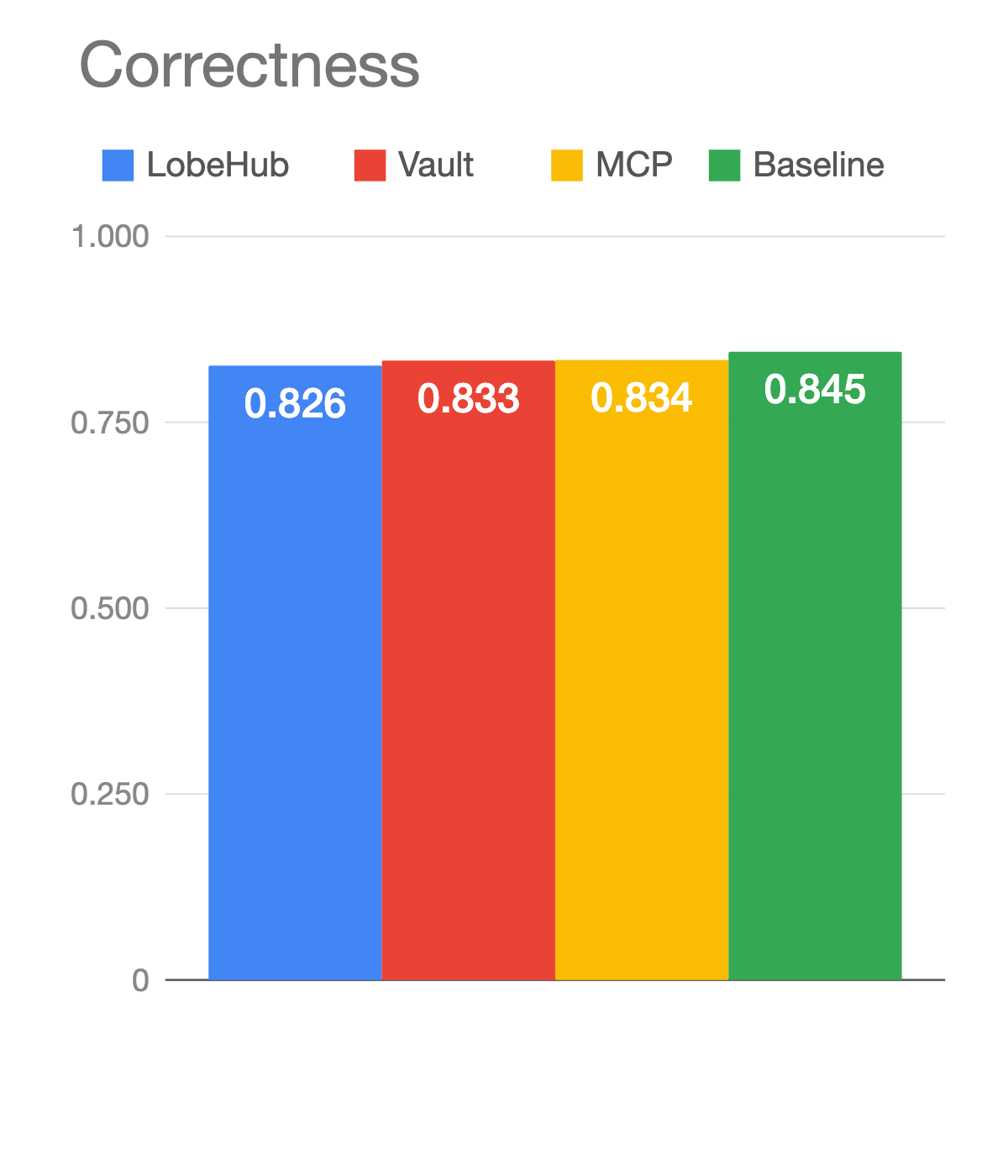

Brian Burns: I would say two things. One, and they go into this in the next section or so, across the board, across various metrics, they get something like a 10% relative improvement on the simpler language modeling benchmarks. But the interesting thing is that on the more complex tasks, compared to Vicuna for example, like formal fallacies, geometric shapes, or sports understanding, Orca ends up performing way, way better. They have a bunch of numbers here on a relative basis: 40% better, 400% better. So there’s this general theme where you get a moderate boost on the medium-difficulty tasks, but on the very difficult tasks that actually require more elaborate reasoning, Orca performs much better. And they have some data to back that up.
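For readers less used to “relative basis” figures, the arithmetic can be written out as below. The function name and the example scores are hypothetical and chosen only to reproduce the round percentages mentioned in the discussion; they are not numbers from the paper.

```python
def relative_improvement(new_score, baseline_score):
    """Percentage improvement of new_score over baseline_score."""
    if baseline_score == 0:
        raise ValueError("baseline score must be non-zero")
    return 100.0 * (new_score - baseline_score) / baseline_score


# Hypothetical accuracies, for illustration only.
print(relative_improvement(14.0, 10.0))  # a "40% better" case
print(relative_improvement(50.0, 10.0))  # a "400% better" case: 5x the baseline
```

Note that a large relative gain can come from a very low baseline, so relative and absolute scores are best read together.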

Jason Lopatecki: Oh, this was an interesting section. The subtle point on this one was: what if I only take the GPT-4 outputs, which are supposedly better, with better descriptions and better chains of reasoning, and fine-tune only on those? ChatGPT gives you more volume of fine-tuning data but less quality, and GPT-4 gives you great data but a smaller amount. It’s interesting that by adding the ChatGPT data in, you still do better. One question would be whether you only need the really thoughtful results. And the answer is that, if you’re going to fine-tune, you might actually want a bit more volume or diversity. There’s a quality-versus-quantity trade-off here, and it’s not clear that you only want one thing. I thought that was interesting in this section.

Long context: I feel like this has been coming up a lot, your context window, and how well things use the context window. I thought it was a fun point to make that GPT beats some of this stuff, possibly because of how well it uses the context window and how well it does with longer context. There have been lots of papers on this recently. I don’t know if you’ve seen some of the stuff I dropped in our community on context windows, but a lot of work is going on to understand what’s happening in the context window and to make better use of the data there. And these are the ones you were talking about. Do you want to describe what this looks like?

Brian Burns: Sure. It’s just the idea that there’s not one unified benchmark, but rather a lot of different ones. And you can see there’s actually quite a difference in performance. At least they claim, for example, that ChatGPT performs better than humans on some sections of the LSAT, but on other sections, humans perform better. So you have to evaluate models along different axes, and you can see how that plays out here. At least according to this very simple, eight-dimensional picture, Orca ends up being strictly worse than ChatGPT, but in many ways comparable, and in some dimensions, like SAT English, it evens out that performance. So I thought that was interesting. I just like the graph, being able to see a multi-dimensional evaluation in a very simple picture, like the ones later on across the different data sets.
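The “strictly worse, but level on some axes” reading of a multi-axis chart can be stated precisely as a dominance check. The sketch below is one way to express it; the function name, the benchmark labels, and every score in the example are hypothetical and are not taken from the paper’s figure.

```python
def dominates(scores_a, scores_b):
    """True if model A is at least as good as model B on every shared
    benchmark and strictly better on at least one of them."""
    shared = scores_a.keys() & scores_b.keys()
    if not shared:
        return False
    at_least_as_good = all(scores_a[k] >= scores_b[k] for k in shared)
    strictly_better = any(scores_a[k] > scores_b[k] for k in shared)
    return at_least_as_good and strictly_better


# Made-up scores on three axes, for illustration only.
large_model = {"lsat_lr": 60.0, "sat_math": 45.0, "sat_english": 80.0}
small_model = {"lsat_lr": 50.0, "sat_math": 35.0, "sat_english": 80.0}

print(dominates(large_model, small_model))  # True: ahead or tied on every axis
print(dominates(small_model, large_model))  # False
```

When neither model dominates the other, as with the human versus ChatGPT comparison on different LSAT sections described above, a single overall ranking hides the trade-off and the per-axis view is the informative one.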

Jason Lopatecki: I was wondering, what is the data set that humans crush GPT-4 on? I wanted to go look at it.

This just speaks to the depth of evaluation that this team did here, too. You look at just how much they’re tracking across everything here, and it’s impressive how much evaluation was done. But you can also see that by testing different areas, you see different holes. And I thought this was pretty good, pretty comparable, at least on this one.

Brian Burns: It’s also very interesting, just as a general commentary on language models and their current limitations, that none of them do well at tracking shuffled objects. So the spatial reasoning is weak. Obviously, GPT-4 is very powerful, very impressive, but still, the spatial and geometric reasoning is quite limited.

Jason Lopatecki: That’s interesting, yeah.

Brian Burns: I mean, yeah, if you look at the graph, there’s kind of a quarter circle here, and the top left quadrant is missing for everyone. Minor note.

Jason Lopatecki: Although this paper was designed for something else, I haven’t seen evals put in such a good form, in terms of looking at evals and comparing evals. It was well done.

So, TruthfulQA. By the way, this is one of the ones on the Hugging Face leaderboard. So they do use this one, but it’s one of a set of three or four evaluations. So this is the TruthfulQA one again.

Brian Burns: I honestly kind of skimmed this, the last bit on the toxicity and stuff like that.

Jason Lopatecki: Okay, yeah, we’re getting questions here. Are we comparing apples to apples when we’re talking billions of parameters? Is that the thing that differentiates the models? The parameter count, in billions, does define your cost a bit. It does define your inference latency. There are aspects of it that make it a nice metric for comparability. But you’re right, once you get a mixture of experts versus not, we’re comparing quite different things when we do that.

So yeah, this is toxicity again: how it does on toxicity versus GPT-4. And again, I think they’re trying to beat Vicuna; you’re probably not going to match your ChatGPT results. And again, it’s probably model size, plus the data sets you’re fine-tuned on, that get you there.

Brian Burns: Yeah, the last bit right here, before the paper ends. This is very minor, but I found it interesting. With these smaller, 10-billion-type models like Alpaca, Vicuna, or Orca, augmenting them with tools might be a very good future direction. You’re working with this constraint of fewer parameters to think with, and if you can free up some of that by giving them tools, then somehow you have more capacity to memorize other things. If you ask LLaMA, or sorry, Vicuna or Orca or whatever, to add two plus two and it comes back with 4, it’s actually doing something like 10 billion floating point operations to compute that, when it could just call a calculator or something like that. So I imagine these smaller models will get way better when there is an easy way to add tool use to them.
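The calculator point can be illustrated with a toy router that answers plain arithmetic with a tool and sends everything else to the model. This is a sketch of the general idea only: the paper does not describe a tool-use implementation in this discussion, and the names `calculator` and `answer` and the `model` callable are hypothetical.

```python
import ast
import operator

# Supported binary operators for the toy calculator.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator(expression):
    """Evaluate a basic arithmetic expression without using eval()."""
    def _eval(node):
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        raise ValueError("unsupported expression")

    return _eval(ast.parse(expression.strip(), mode="eval").body)


def answer(query, model):
    """Use the calculator when the query is plain arithmetic; otherwise
    fall back to the (hypothetical) language model callable."""
    try:
        return str(calculator(query))
    except (ValueError, SyntaxError):
        return model(query)
```

With this in place, `answer("2 + 2", model)` never touches the model, while a question like "What is Orca?" falls through to it. Real tool use also needs the model itself to decide when to call a tool, which this sketch sidesteps by trying the tool first.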

Jason Lopatecki: Yeah, I don’t know if you’ve gotten to play with Code Interpreter yet? I used it over the weekend and it’s magical for data analysis. You go into the beta, you pay for a month for access, and there’s a whole section for it in your settings. It’s slightly buggy, but if you manage the complexity of your ask and your data size, it’s pretty magical right now, including some of the analytical stuff it can do. So I think there’s big promise there in knowing when to call tools. Maybe those tools are code. And you’re right, I think in your earlier section, with the sorting stuff, or the places highlighted where the problems are, maybe the right thing to do is call out to tools. And the point on this one that I had highlighted is exactly what you’re saying: there’s probably a trade-off, in terms of parameters, between memorizing something and learning concepts. Right now, you’re using the model’s parameters to do all of it, learning concepts and memorizing. And the point is that it struggles to trade that off as you get to the smaller models.

I think we’re at the conclusion here. There’s a lot of potential. I think we’re definitely going to see fine-tuning, and we’ll definitely see models being built using other models’ outputs. It is worth noting one thing that is probably going to limit some of this. I don’t know if you noticed, but I think Google and OpenAI are both trying to put terms of service in place to limit this. It’s something that’s blowing up in the LLaMA and OSS communities, but there are going to be questions about how much people can use it, and about its limitations, like: where can I use it from a corporate perspective?

Brian Burns: Yeah, I like to be totally private with candidates, obviously, but I will note that this week I spoke with two candidates who are doing side projects, a variety of sketchy stuff that they told me about. They all have to do it with open source, because otherwise they would be using ChatGPT or commercial OCR software to do things that skirt copyright laws or things like that. There’s way sketchier stuff that I’m not going to talk about on Zoom. They’re trying to do this stuff as side projects, and what they do is use open source models. So I feel like this stuff is really going to explode whenever there’s an open source, very large foundation model like GPT-4. It also goes back to the other thing about intermediate representations. Again, why this stuff works is that you get a lot of this step-by-step reasoning. But what would be way better is if you got all of the neuron activations, for all of the, I don’t know, 10 trillion, multiple trillion, whatever parameters are inside GPT-4, and could actually use those as signals instead of just the output. So I do think a lot of this is going to get really interesting when the large foundation models become open source.

Jason Lopatecki: Yeah. I’ve been kind of hoping that Facebook, or Meta, would come up with a licensing model for LLaMA. My fingers were crossed, although I think the lawsuits flying around are probably going to hinder that. But maybe Harvey can help out with the lawsuits.

Brian Burns: Maybe!

Jason Lopatecki: Well, awesome. It was awesome to have everyone. Thanks, Brian, for joining us for this episode.

Brian Burns: Yeah, thank you.

Jason Lopatecki: And thanks to everyone for joining.