This post covers the organizational and regulatory patterns that shape AI platform decisions in banking, and why the Arize ecosystem aligns with how these institutions actually operate.

Federated Architectures

Large banks rarely operate as a single, centralized technology organization. Instead, they are structured as federated systems of business lines, each with its own priorities, budgets, and operational constraints.

A typical bank may have major business divisions such as retail banking, investment banking, asset management, and risk. Within each division sit multiple profit-and-loss (P&L) units responsible for their own products and revenue streams. Each of these units often builds and operates its own technology stack, including AI systems.

This structure creates several practical challenges for AI platforms.

Different levels of AI maturity

Some business units operate mature machine learning platforms with dedicated ML engineers and model governance processes. Others may be early in adoption, running a small number of models or relying heavily on vendor solutions. A centralized AI platform must therefore support teams across a wide spectrum of technical maturity without forcing a single rigid workflow.

Independent budgets and infrastructure

Because P&L units manage their own budgets, infrastructure decisions are frequently decentralized. Models may run in different cloud accounts, different regions, or even private datacenters. A monitoring and observability system must operate across these boundaries without requiring full infrastructure consolidation.

Complex networking and account isolation

Banks typically enforce strict isolation between environments. Business units often operate in separate cloud accounts or VPCs, with tightly controlled networking policies. Connectivity to central platform services may require:

- Private networking

- Cross-account permissions

- Secure gateways

- On-premise connectivity

As a result, simple centralized architectures often fail to deploy cleanly inside banking environments.

Platform teams serving multiple organizations

Central platform teams are responsible for providing shared infrastructure and tooling across the institution. However, they must do this while respecting the autonomy and isolation of individual business units.

This requires systems that can support:

- Multi-tenant deployments

- Cross-account integrations

- Flexible data routing

- Strict access controls

In practice, AI infrastructure in banks must function within a federated model, where central teams provide common capabilities while business units retain operational independence.

Platforms that assume a single unified environment tend to struggle in this structure. Solutions must instead be designed to operate across distributed infrastructure, heterogeneous teams, and independently managed environments.

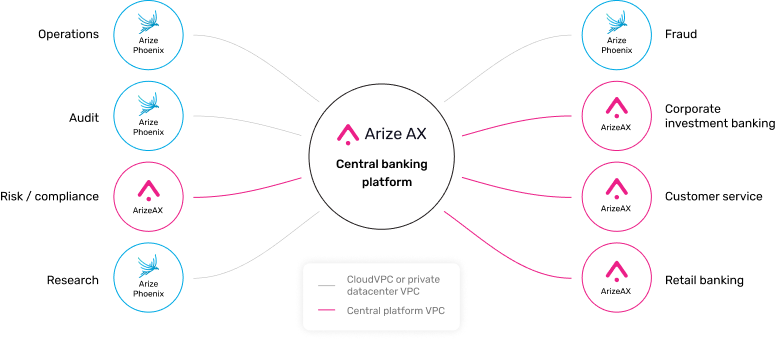

Why the Arize Ecosystem Works in Federated Environments

The Arize ecosystem aligns well with the federated structure common in banks. Arize Phoenix is open source and deploys as a single container, which significantly reduces internal friction. Teams can run it with approved infrastructure such as a standard PostgreSQL backend, making it accessible even for business units with limited budget, early AI maturity, or strict internal approval processes. This allows individual teams to start quickly and reach value without requiring centralized platform changes.

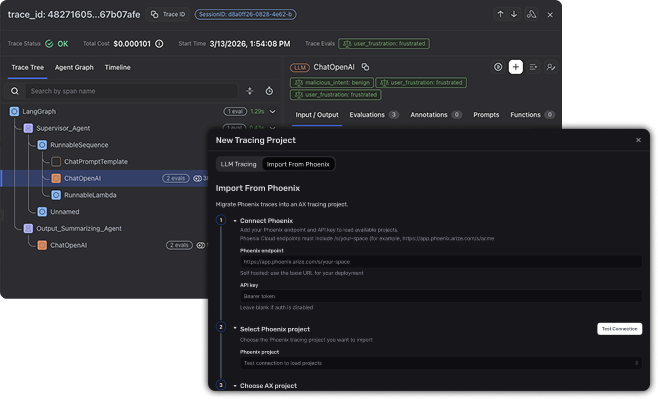

As teams scale or platform groups standardize observability across the organization, they can transition to Arize AX, which targets large-scale deployments and runs on a data store purpose-built for AI observability workloads (see the adb architecture post). Migration from Phoenix to AX is intentionally straightforward, with one-click data migration, so teams can move to enterprise infrastructure without rebuilding their workflows.

This progression (from lightweight Phoenix deployments within individual business units to scaled AX infrastructure managed by central platform teams) fits naturally within federated banking environments. Teams can start locally, mature independently, and converge onto a shared platform when scale, governance, or standardization requires it. This adaptability across organizational boundaries is a key reason many banks adopt the Arize ecosystem.

High Regulation, Compliance, and Risk Mitigation

Banks operate in one of the most heavily regulated technology environments. Financial institutions must satisfy oversight from multiple regulatory bodies while ensuring that internal risk, security, and governance standards are consistently enforced.

Arize works with banking customers across North America, Europe, APJ, and other regions. While each jurisdiction introduces its own requirements (from regional regulatory guidance to emerging frameworks like the EU Artificial Intelligence Act), the operational patterns banks need for AI governance are broadly consistent.

Auditability and reproducibility

Banks must be able to reconstruct how a model behaved at any point in time. This includes understanding what inputs were received, what system components were involved, and how outputs were generated. Observability primitives such as traces, spans, and session-level logs provide the technical foundation for this capability.

These records enable teams to:

- Audit model behavior after deployment

- Replay historical interactions for investigation

- Trace failures or unexpected model outputs

- Support internal governance and regulatory reviews

Without strong observability, it becomes difficult to demonstrate how AI systems operate in production environments.
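To make the audit use case concrete, here is a minimal sketch of how span records can be grouped into traces and sessions and then replayed in order. The `Span` fields, the helper names, and the sample data are illustrative assumptions, not the Arize or Phoenix data model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Span:
    """One recorded step of a request, such as a retrieval or an LLM call."""
    span_id: str
    trace_id: str      # groups the spans that belong to one request
    session_id: str    # groups the traces that belong to one conversation
    name: str
    start: datetime
    inputs: dict
    outputs: dict
    parent_id: Optional[str] = None


def reconstruct_trace(spans, trace_id):
    """Return the spans of one trace in chronological order for replay."""
    selected = [s for s in spans if s.trace_id == trace_id]
    return sorted(selected, key=lambda s: s.start)


def session_traces(spans, session_id):
    """List the trace ids of a session, ordered by each trace's earliest span."""
    first_seen = {}
    for s in spans:
        if s.session_id != session_id:
            continue
        if s.trace_id not in first_seen or s.start < first_seen[s.trace_id]:
            first_seen[s.trace_id] = s.start
    return sorted(first_seen, key=first_seen.get)


# Hypothetical log, deliberately stored out of order.
T0 = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
LOG = [
    Span("s2", "t1", "sess-a", "llm_call", T0 + timedelta(seconds=2),
         {"prompt": "summarize account terms"}, {"text": "summary"}, parent_id="s1"),
    Span("s3", "t2", "sess-a", "llm_call", T0 + timedelta(minutes=5),
         {"prompt": "follow-up question"}, {"text": "answer"}),
    Span("s1", "t1", "sess-a", "retrieval", T0,
         {"query": "account terms"}, {"documents": 3}),
]

replay = [s.span_id for s in reconstruct_trace(LOG, "t1")]
```

Because every span carries its inputs, outputs, and timestamps, an investigator can walk a single request step by step, or list every request in a customer session, without access to the running system.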

Evaluation as a governance mechanism

Evaluation is increasingly treated as part of risk management rather than purely model development. In regulated organizations, evaluations must support both technical validation and governance workflows.

This includes:

- Offline evaluations during development and testing

- Online evaluations monitoring live model behavior

- Span, trace, or session-level evaluations

- Workflows accessible to non-technical stakeholders, including risk, compliance, and product teams

Allowing non-technical teams to run or review evaluations is particularly important. Many governance reviews require domain experts rather than engineers to assess model outputs, policy adherence, and decision quality.
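As a rough illustration of span-level versus trace-level evaluation, the sketch below applies one policy check to individual span outputs and then rolls the results up per trace. The blocked terms, record layout, and function names are hypothetical; a production evaluator would more likely be an LLM-based or model-based judge than a keyword match.

```python
# Hypothetical phrases a compliance team might disallow in customer-facing output.
BLOCKED_TERMS = ("guaranteed return", "risk-free")


def policy_eval(output: str) -> bool:
    """Return True when the output contains none of the blocked phrases."""
    text = output.lower()
    return not any(term in text for term in BLOCKED_TERMS)


def evaluate_spans(records, evaluator):
    """Span-level evaluation: one pass/fail result per recorded output."""
    return [
        {
            "trace_id": r["trace_id"],
            "span_id": r["span_id"],
            "passed": evaluator(r["output"]),
        }
        for r in records
    ]


def rollup_to_traces(span_results):
    """Trace-level evaluation: a trace passes only if all of its spans pass."""
    by_trace = {}
    for r in span_results:
        by_trace[r["trace_id"]] = by_trace.get(r["trace_id"], True) and r["passed"]
    return by_trace


# Hypothetical records; the same function could run over a stored dataset
# (offline) or over spans as they arrive from production (online).
RECORDS = [
    {"trace_id": "t1", "span_id": "s1", "output": "Your balance is available in the app."},
    {"trace_id": "t1", "span_id": "s2", "output": "Fees are listed in the schedule."},
    {"trace_id": "t2", "span_id": "s3", "output": "This fund offers a Guaranteed Return."},
]

span_results = evaluate_spans(RECORDS, policy_eval)
trace_results = rollup_to_traces(span_results)
```

Keeping the evaluator a plain function of the output makes it reusable in both settings, and the per-span results give reviewers the exact step that caused a trace to fail.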

Security and AI risk controls

Financial institutions must also address emerging AI-specific risks, including adversarial behavior, prompt injection, and misuse of generative models in customer-facing systems. Industry guidance increasingly points toward resources such as the OWASP Top 10 for Large Language Model Applications and practices such as structured LLM red teaming to identify vulnerabilities before systems are widely deployed.

Banks therefore require platforms that help support:

- Security testing and red teaming workflows

- Monitoring of model behavior in production

- Guardrails that reduce exposure to unsafe or policy-violating outputs

- Clear audit trails for internal risk review

In practice, AI systems in banking must satisfy both technical reliability and regulatory accountability. Observability, evaluation, and security testing form the core operational capabilities needed to support these requirements.

How the Arize Ecosystem Helps with Compliance and Risk

Arize provides a unified platform that enables banks to operationalize these requirements at enterprise scale. Observability, evaluation, and governance capabilities are consolidated in a single system, allowing organizations to manage technical monitoring, compliance review, and risk mitigation workflows from one place.

This centralization is particularly valuable in banking environments where multiple teams (ML engineers, platform teams, risk, compliance, and business stakeholders) must collaborate on the same AI systems. Tracing, spans, and session-level visibility provide the auditability required for regulated environments, while evaluation workflows allow both technical and non-technical users to assess model behavior through offline and online evaluation pipelines.

By bringing these capabilities together, Arize helps banks reduce fragmentation across tools and processes. Instead of separate systems for monitoring, testing, governance, and review, organizations can manage these overlapping responsibilities within a single platform. This simplifies operational oversight, strengthens audit readiness, and enables institutions to scale AI deployments while maintaining strong risk controls.

Further reading:

- OWASP Top 10 for Agentic Applications Compliance Guide

- Quick Guide to the EU AI Act for AI Teams

- Guardrails Documentation

- LLM Red Teaming

Central Platform Team Requirements

In most banks, centralized platform teams are responsible for enabling AI capabilities across the institution. Their role is not to build every application directly, but to provide the infrastructure, tooling, and governance frameworks that allow business units to build safely and efficiently.

The challenge is the breadth and diversity of the organizations they support.

Platform teams must serve dozens (sometimes hundreds) of internal teams that vary widely in AI maturity, technical capability, budget, and operational needs. Some teams run sophisticated ML pipelines, while others may only be experimenting with early LLM applications. The platform must support both without introducing excessive operational burden.

Access control and organizational structure

Banks require strict control over who can see and interact with data, models, and evaluations. Platform teams therefore need robust role-based access control (RBAC) and flexible workspace structures that mirror internal organizational boundaries.

This allows institutions to enforce policies such as:

- Isolating data between business units

- Restricting sensitive model outputs

- Granting different access levels to engineers, analysts, and governance teams

- Supporting cross-team collaboration without breaking compliance boundaries

Without flexible access controls, platforms quickly become unusable in regulated environments.
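The policies above reduce to a deny-by-default check scoped to a workspace. The following is a minimal sketch under assumed role names, actions, and workspace labels; it is not the Arize AX RBAC model, which the source describes only at a high level.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    VIEWER = 1
    ANALYST = 2
    ADMIN = 3


# Hypothetical mapping from an action to the minimum role it requires.
REQUIRED_ROLE = {
    "view_traces": Role.VIEWER,
    "run_evaluation": Role.ANALYST,
    "manage_members": Role.ADMIN,
}


@dataclass(frozen=True)
class Grant:
    """A user's role inside one workspace (for example, one business unit)."""
    user: str
    workspace: str
    role: Role


def is_allowed(grants, user, workspace, action) -> bool:
    """Deny by default; allow only with a sufficient role in that workspace."""
    needed = REQUIRED_ROLE.get(action)
    if needed is None:
        return False  # unknown actions are never permitted
    return any(
        g.user == user and g.workspace == workspace and g.role.value >= needed.value
        for g in grants
    )


# Hypothetical grants: roles do not carry across workspaces.
GRANTS = [
    Grant("alice", "retail", Role.ANALYST),
    Grant("bob", "retail", Role.VIEWER),
    Grant("bob", "asset-mgmt", Role.ADMIN),
]
```

Scoping every grant to a workspace is what keeps business units isolated: a user with admin rights in one unit has no implicit access to another, and cross-team collaboration requires an explicit additional grant.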

Supporting both technical and non-technical users

AI systems in banks involve more than engineering teams. Product managers, risk officers, compliance teams, and domain experts all participate in model review and governance processes.

As a result, platform teams must support two very different modes of interaction:

- Programmatic workflows for engineers building automated pipelines and evaluations

- UI-based workflows for analysts, business stakeholders, and compliance teams

Platforms that only support one of these user groups tend to create bottlenecks in regulated organizations.

Customization and operational monitoring

Different teams require different metrics, dashboards, and alerting configurations depending on the type of model or application they operate. Platform teams must provide systems that allow teams to customize monitoring and evaluation views while still operating within a shared governance framework.

This flexibility is necessary to support the wide range of use cases across the bank.

Air-gapped deployments

Air-gapped deployments are common in banking environments where security policies prohibit systems from accessing the public internet. Many financial institutions operate sensitive workloads inside isolated networks to reduce exposure to external threats and to satisfy regulatory and internal security requirements. AI platforms deployed in these environments must therefore function entirely within controlled infrastructure, often inside private datacenters or restricted cloud networks. Supporting air-gapped deployments ensures that observability, evaluation, and governance tooling can operate without external connectivity while still meeting the security, compliance, and risk management standards required by regulated financial institutions.

Cost attribution and internal chargeback

Many banks operate internal chargeback models where platform usage is attributed back to the business units consuming the resources. AI platforms must therefore provide visibility into usage patterns and costs, allowing central teams to allocate expenses according to actual consumption of infrastructure and LLM workloads.

This ensures that AI adoption scales sustainably across multiple business lines.
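A chargeback calculation can be as simple as aggregating metered usage by business unit. The sketch below assumes usage is recorded as token counts tagged with a business unit and a model; the model names, prices, and units are placeholders, since real rates depend on the provider and the bank's contracts.

```python
from collections import defaultdict

# Hypothetical price per 1,000 tokens, in arbitrary cost units.
PRICE_PER_1K_TOKENS = {"model-small": 0.5, "model-large": 3.0}


def chargeback(usage):
    """Sum cost per business unit from (business_unit, model, tokens) rows."""
    totals = defaultdict(float)
    for unit, model, tokens in usage:
        totals[unit] += tokens / 1000 * PRICE_PER_1K_TOKENS[model]
    return dict(totals)


# Hypothetical usage rows for one billing period.
USAGE = [
    ("retail", "model-small", 4000),
    ("retail", "model-large", 1000),
    ("risk", "model-large", 2000),
]

costs = chargeback(USAGE)
```

The important design point is that the business-unit tag is captured when the usage is recorded, so attribution does not depend on reconstructing ownership after the fact.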

Self-service enablement

Finally, platform teams aim to reduce redundant engineering effort across the organization. Without shared infrastructure, individual teams often rebuild similar tooling independently, leading to duplicated work and inconsistent governance.

A well-designed platform enables self-service onboarding, allowing teams to deploy, monitor, and evaluate models using standardized enterprise patterns. This allows business units to move quickly while maintaining consistency across the broader organization.

For banks, the central platform team is effectively responsible for enabling AI across a federated institution. Supporting this mission requires platforms that can handle organizational complexity, governance requirements, and large-scale multi-team adoption.

How Arize AX Helps Central Teams with Their Biggest Platform Needs

Arize AX is designed to support the operational realities of central platform teams at large banks. Instead of requiring institutions to build and maintain their own observability infrastructure, AX provides a production-ready system that can integrate directly into existing internal platforms.

Through REST and GraphQL APIs, platform teams can programmatically interface with Arize while still maintaining their own internal developer portals or platform tooling. This enables banks to deliver a self-service AI observability layer to internal teams without forcing a rigid architecture. Teams can start generating value immediately while still integrating AX into broader internal systems and workflows.

Customization is another critical requirement for enterprise environments. Different business units often require different monitoring views, evaluation logic, and alerting behavior. Arize AX supports this through custom metrics, dashboards, alerts, and evaluation pipelines, allowing platform teams to provide flexible tooling without fragmenting governance practices across the organization.

Access control is also a core capability. RBAC in Arize AX is highly granular and configurable, enabling banks to define custom roles, policies, and workspace boundaries that match their internal governance models. Access can be controlled not only at the user and workspace level, but also at the model level, allowing institutions to specify which users or teams are permitted to interact with specific models or datasets.

Finally, Arize supports both code-first and UI-driven workflows, enabling collaboration across technical and non-technical users. Engineers can integrate observability and evaluations into automated pipelines, while analysts, risk teams, and business stakeholders can review model behavior and run evaluations directly through the interface.

Together, these capabilities allow central platform teams to deliver a scalable, compliant, and flexible AI observability layer across the bank, without needing to build and maintain the entire system themselves.

Technology vs. People

In many banks, the limiting factor for AI adoption is not the technology itself but the people and processes required to operationalize it.

Large institutions often have early AI adopters: teams or leaders pushing advanced capabilities into production. However, broader adoption across the organization moves at a different pace. Different teams must learn new tools, understand model behavior, adapt governance processes, and build internal confidence in AI systems. While the underlying technology evolves quickly, institutional change tends to move more slowly.

As a result, bottlenecks emerge around education, enablement, and operational best practices rather than infrastructure.

Bridging the Gap

Arize works closely with enterprise customers to provide training, guidance, and proven operational patterns. Having supported some of the largest banks globally, the team has experience with the organizational challenges that accompany large-scale AI adoption.

Beyond the technology platform, Arize helps institutions understand what successful AI platform teams do in practice (from governance models to rollout strategies) to help banks avoid common pitfalls and accelerate adoption across teams.

Conclusion

AI adoption in banking is shaped as much by organizational structure as by technology capability. Federated business lines, strict regulatory requirements, platform team constraints, and the pace of institutional change all influence what AI infrastructure can realistically succeed.

The Arize ecosystem is designed with these realities in mind. Phoenix provides an accessible starting point for individual teams operating within their own constraints. AX scales to enterprise requirements when platform teams standardize across the organization. Together, they offer a path that accommodates how banks actually work: distributed, regulated, and operating across teams with widely varying needs and maturity levels.

For banks evaluating AI observability and evaluation platforms, the question is not just whether a tool has the right features. It is whether the platform fits the way the institution operates.

See How Leading Banks Run AI Observability

Arize works with financial institutions ranging from global systemically important banks to regional leaders across retail, investment banking, asset management, and insurance. Our team understands the deployment realities of federated environments, air-gapped infrastructure, and the compliance requirements that shape AI platform decisions.

If your platform team is evaluating AI observability and evaluation infrastructure, we can walk you through how similar institutions have deployed the Arize ecosystem, from initial Phoenix adoption within individual business units to enterprise-wide AX rollouts.