If you prefer to use Terraform, jump to Applying Bucket Policy & Tag via Terraform

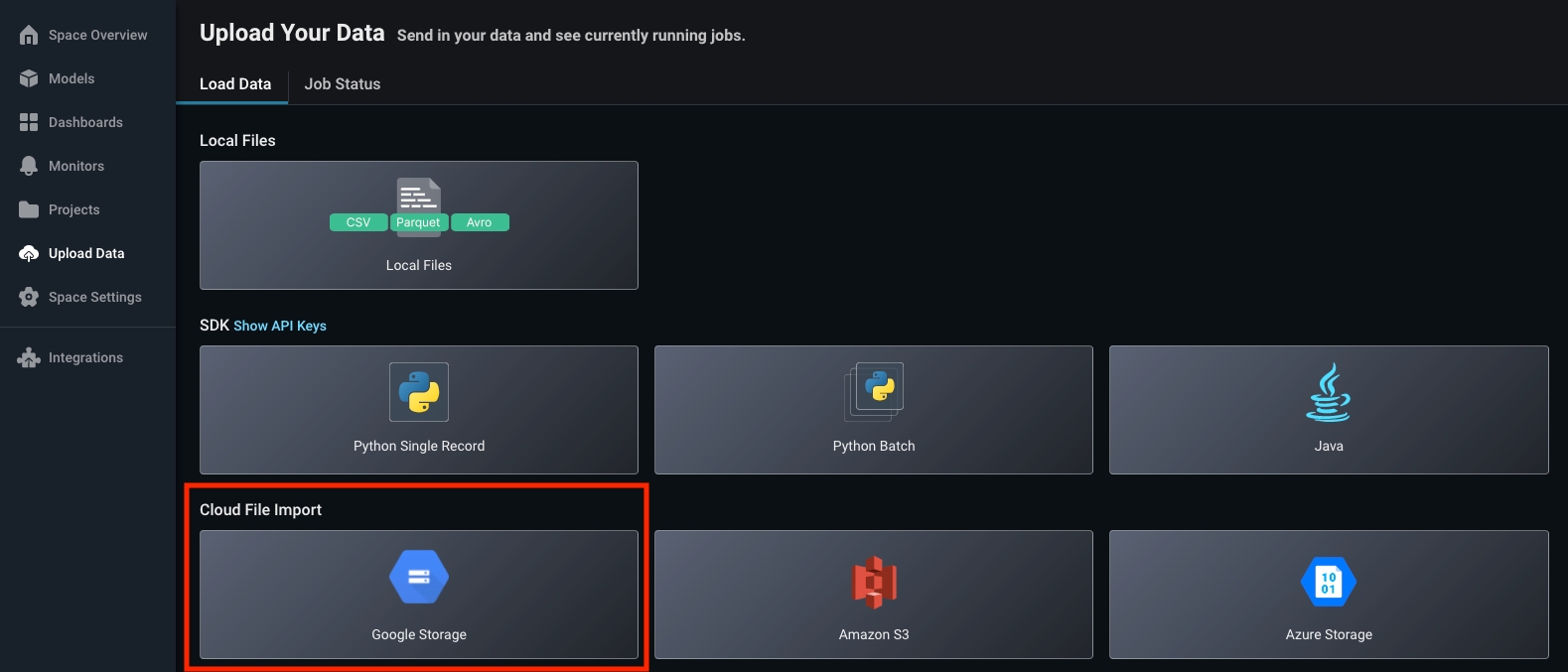

Step 1. Select Google Storage

Navigate to the ‘Upload Data’ page on the left navigation bar in the Arize platform. From there, select the ‘GCS’ card to begin a new file import job.

Step 2. Specify File Path

Get File Path In GCS: Within your project, navigate to the folder you wish to ingest and click on a file to easily copy your file path.

gs://

gs://docs-example-bucket/production/ that contains parquet files of your model inferences.

The file structure can take into consideration various model environments (training, production, etc) and locations of ground truth. In addition, GCS bucket import allows recursive operations. This means that it will include all nested subdirectories within the specified bucket prefix, regardless of the number or depth of these directories.

Arize supports up to three layers of wildcards

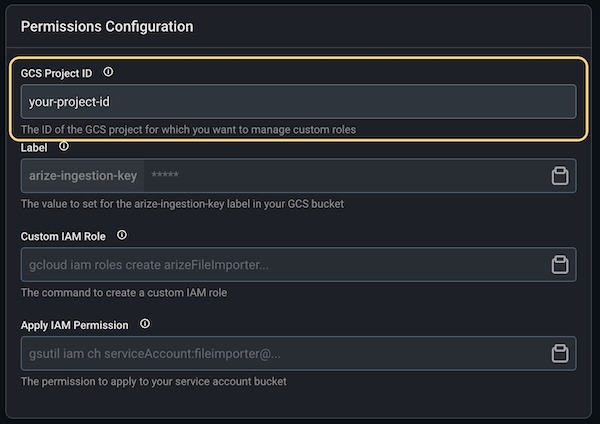

Step 3. Add GCS Project ID

The GCS Project ID is a unique identifier for a project. See GCS Docs for steps on how to retrieve this ID.

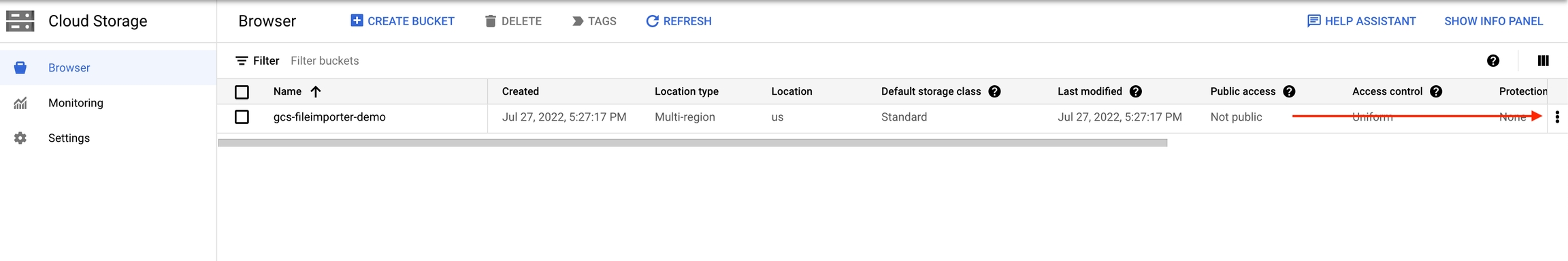

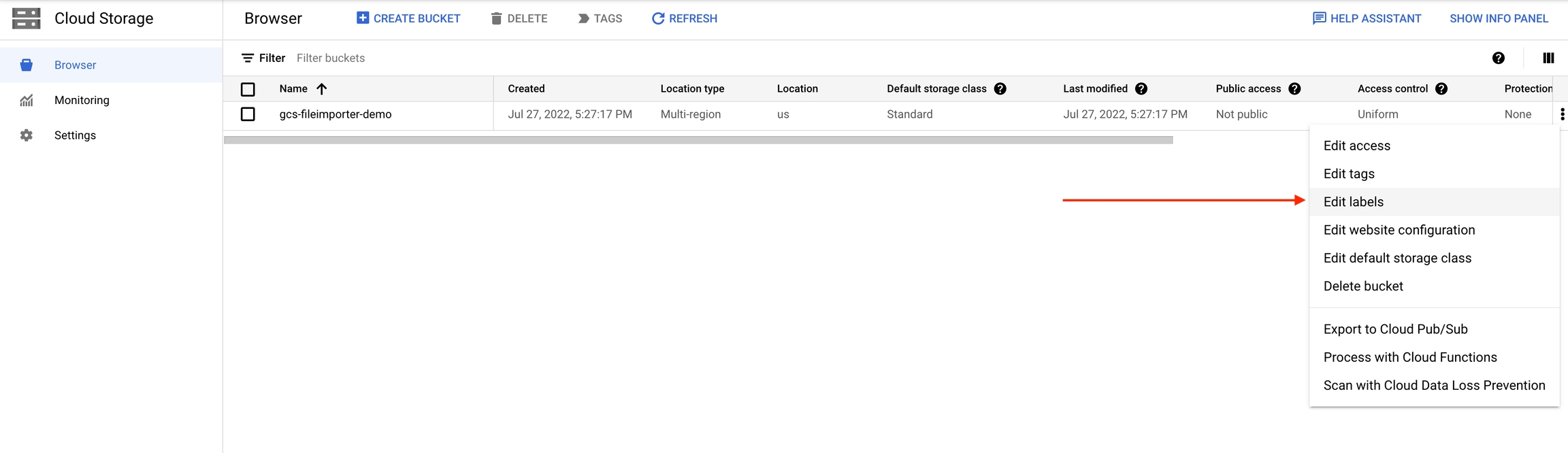

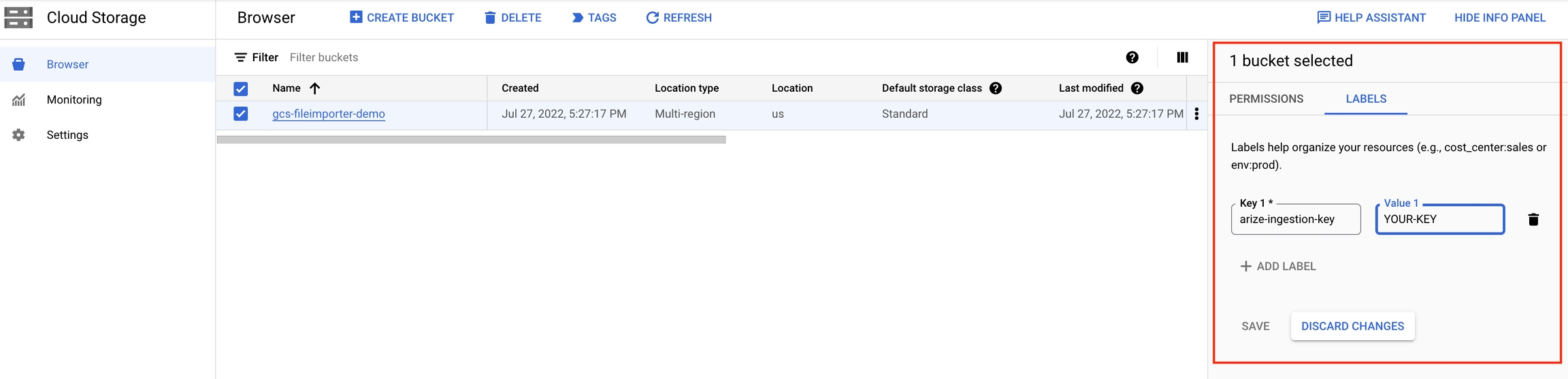

Step 4. Add Proof of Ownership To Your GCS Bucket

Tag your GCS bucket with the keyarize-ingestion-key and the provided tag value. For more details, see docs on Using Bucket Labels.

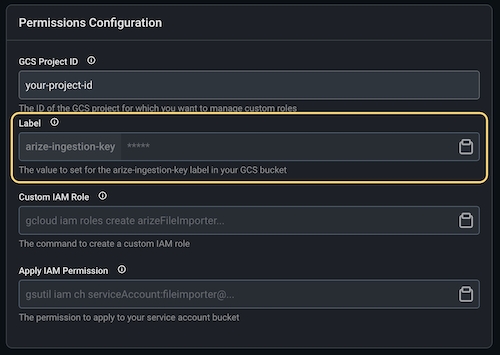

1) In Arize UI: Copy arize-ingestion-key value

- Key: arize-ingestion-key

- Value: arize-ingestion-key value from the Arize UI

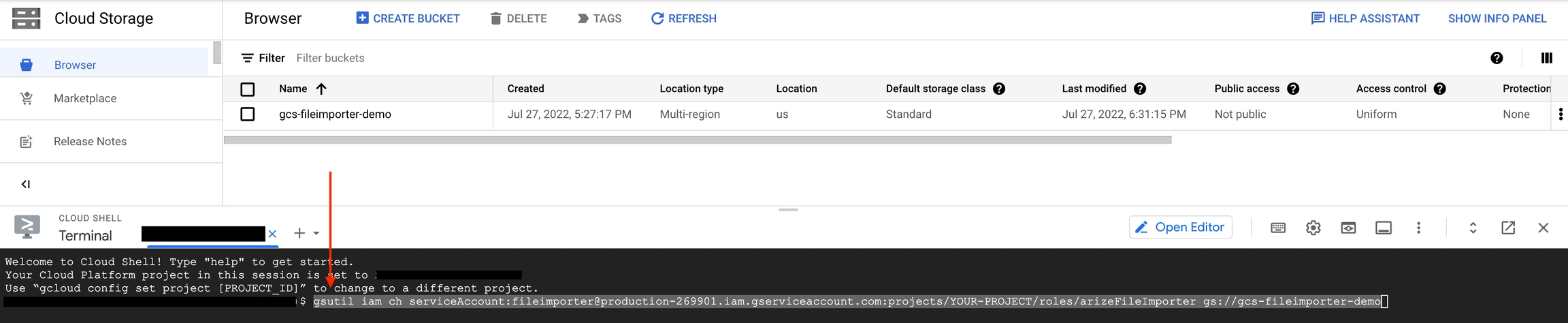

Step 5. Grant Arize Access Privileges

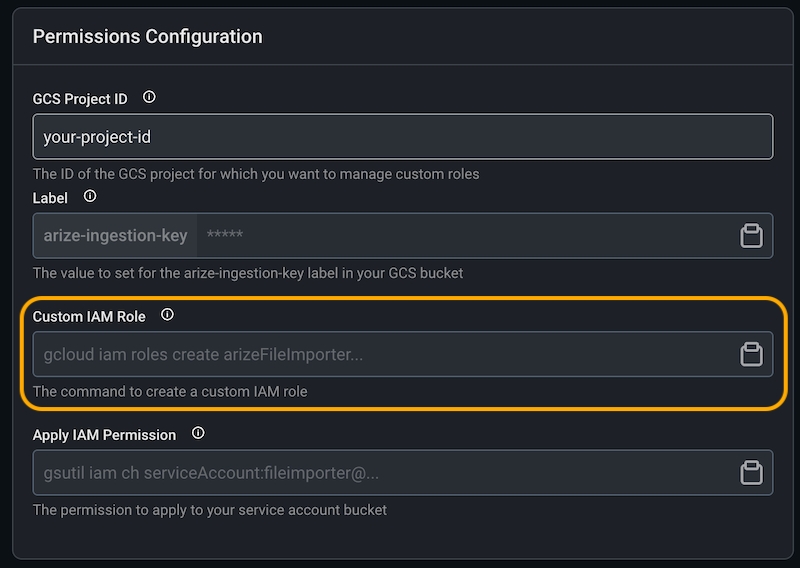

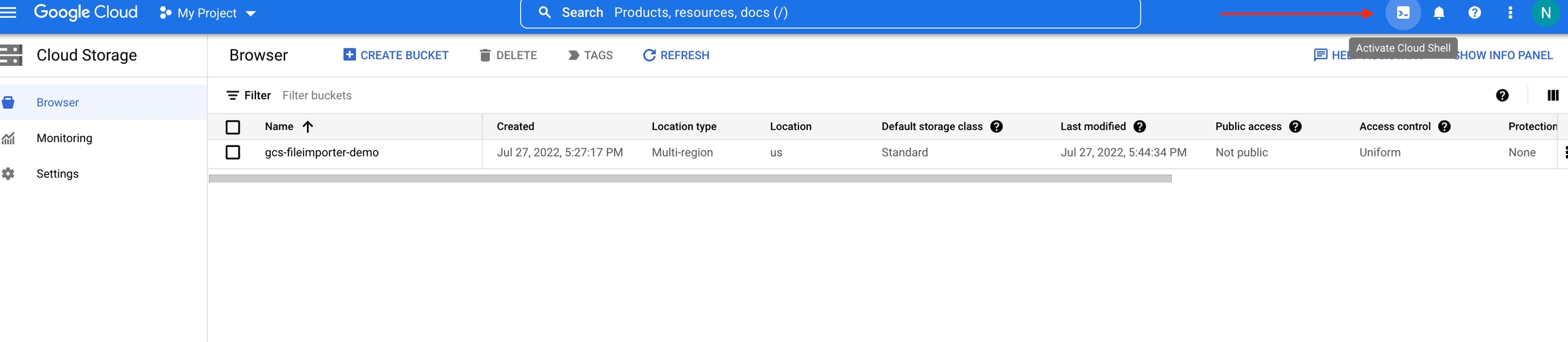

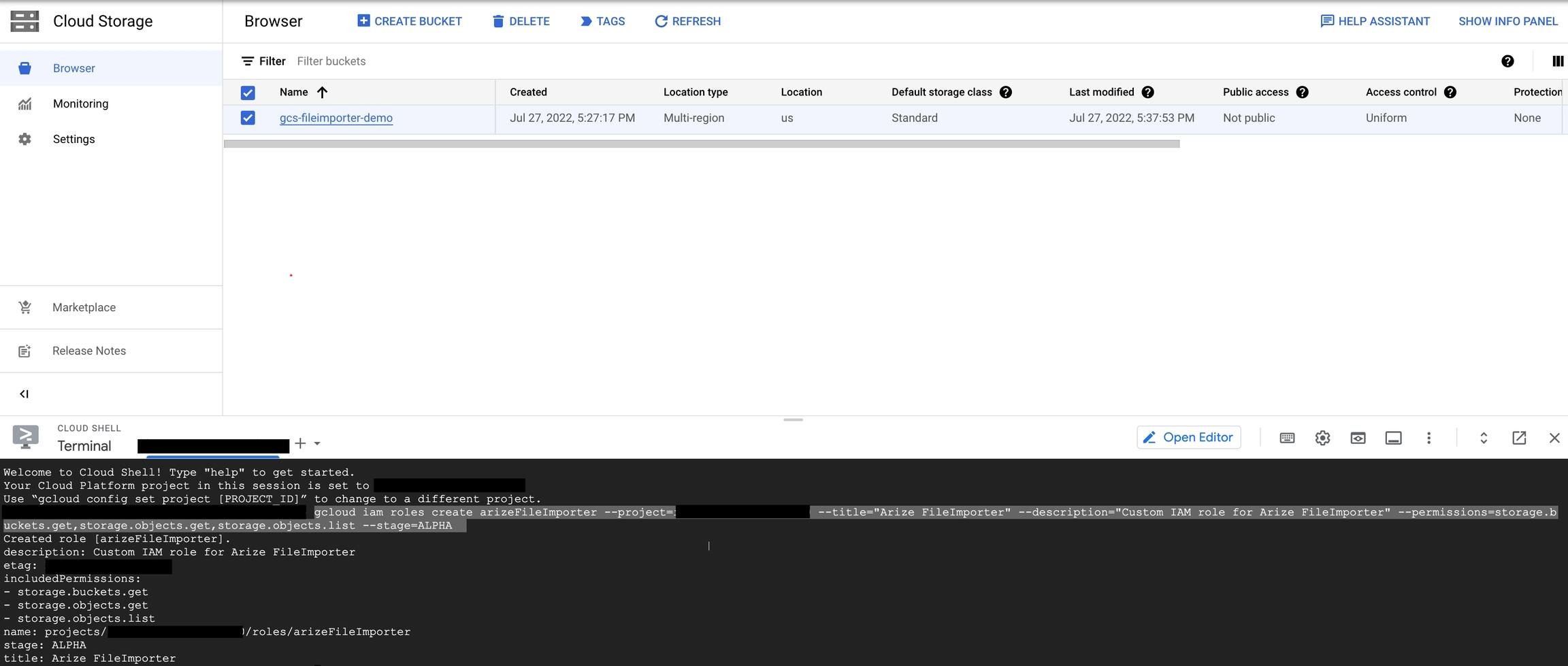

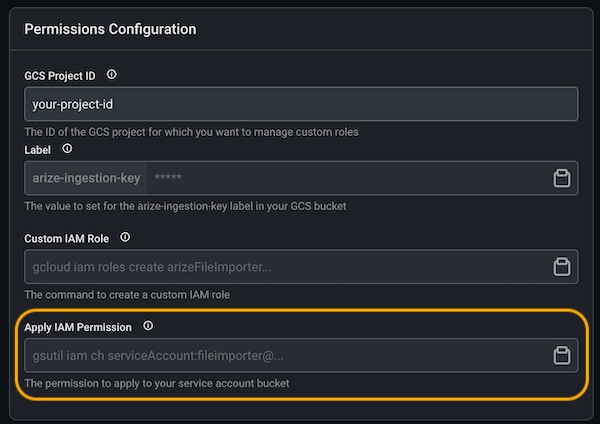

Create a custom role and copy the command from the Custom IAM Role field in Arize UI.

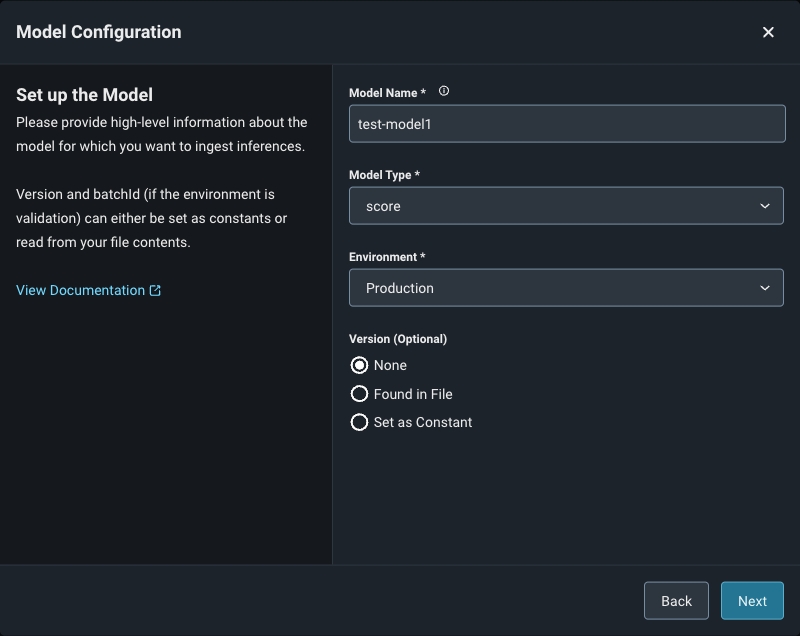

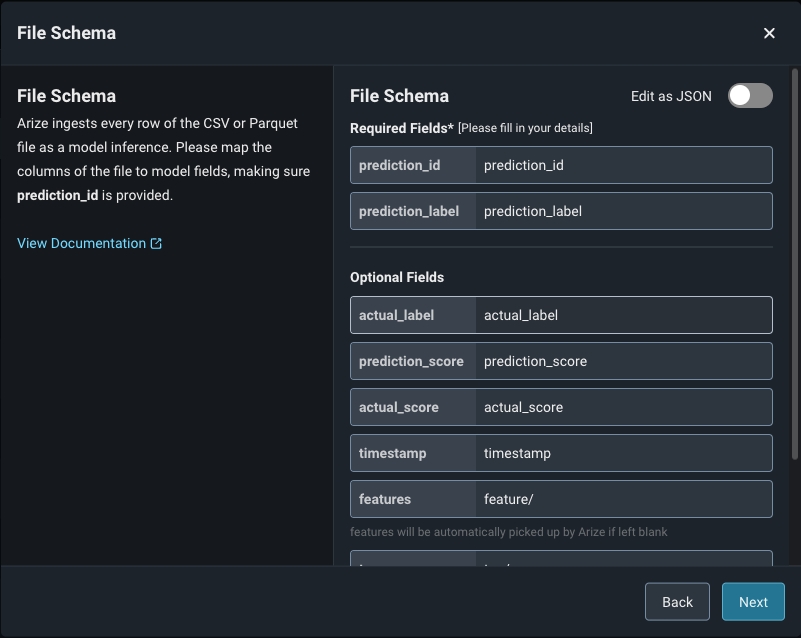

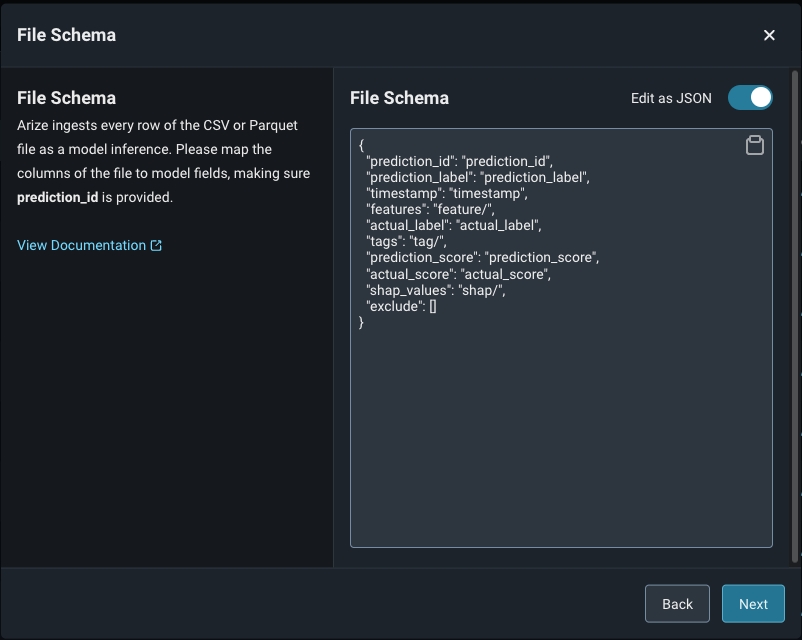

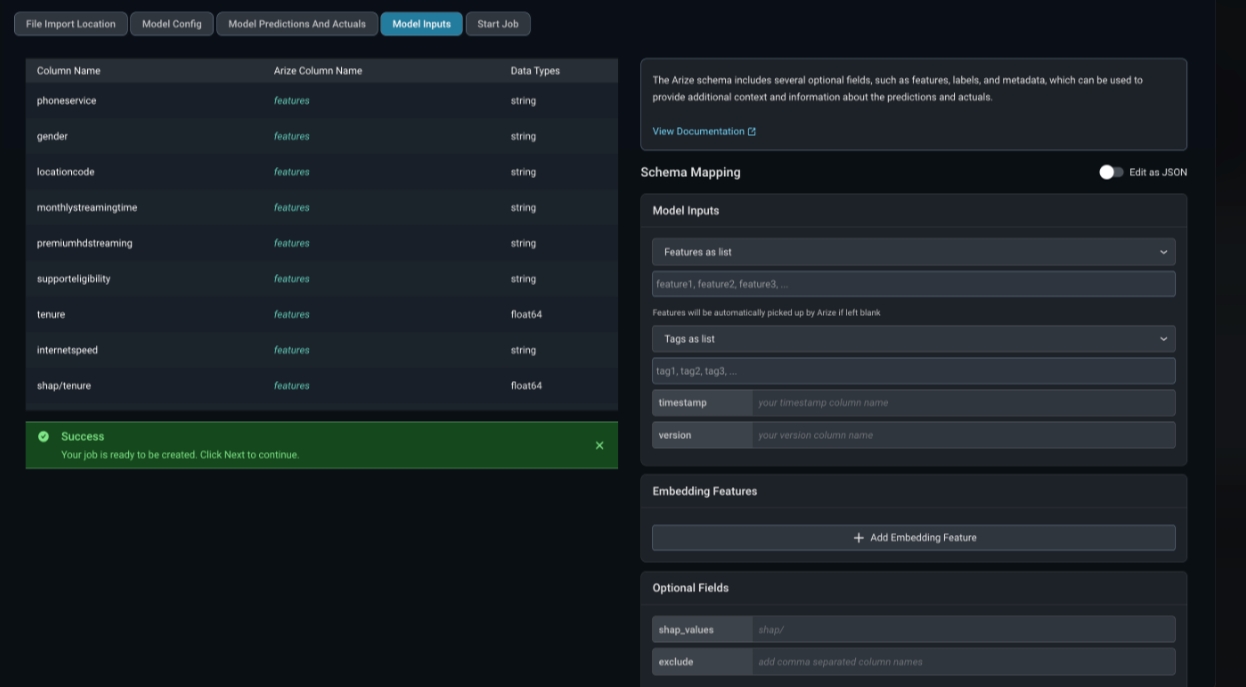

Step 6a. Define Your Model Schema

Model schema parameters are a way of organizing model inference data to ingest to Arize. When configuring your schema, be sure to match your data column headers with the model schema. Either use a form or a simple JSON-based schema to specify the column mapping. Arize supports CSV, Parquet, Avro, and Apache Arrow. Refer here for a list of the expected data types by input type.

6b. Validate Your Model Schema

Once you fill in your applicable predictions, actuals, and model inputs, click ‘Validate Schema’ to visualize your model schema in the Arize UI. Check that your column names and corresponding data match for a successful import job.

If your model receives delayed actuals, connect your predictions and actuals using the same prediction ID, which links your data together in the Arize platform. Arize regularly checks your data source for both predictions and actuals, and ingests them separately as they become available. Learn more here.

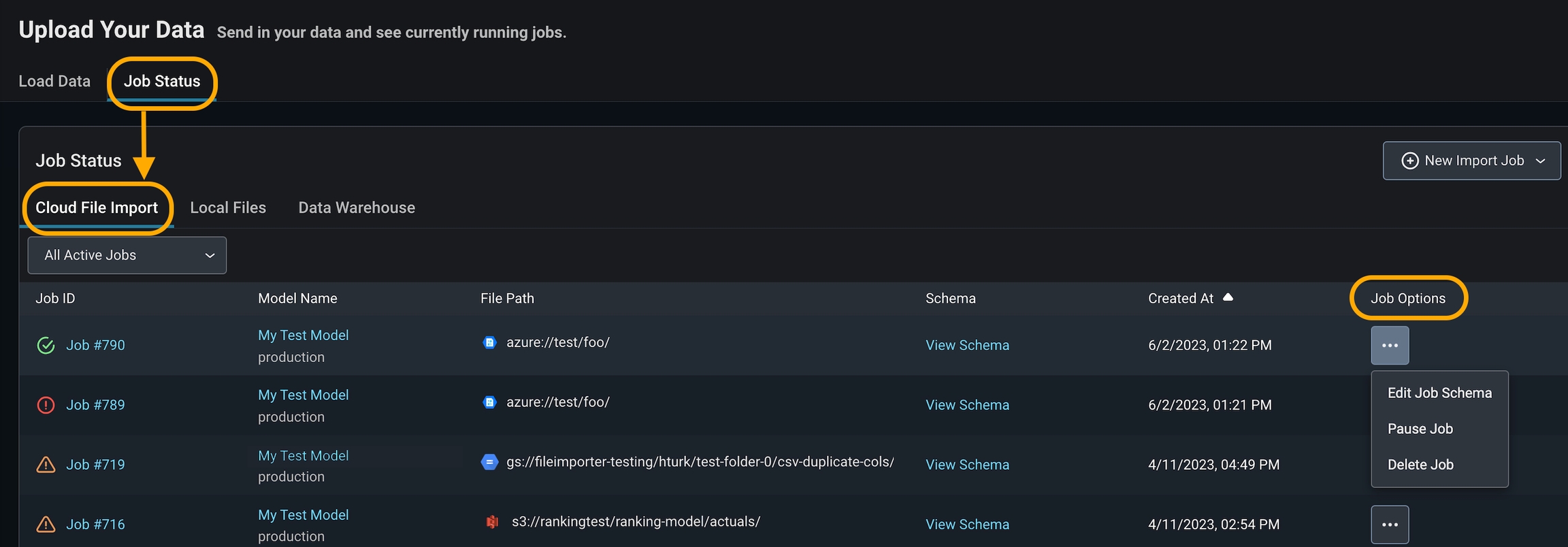

Step 7. Check Job Status

Arize will attempt a dry run to validate your job for any access, schema, or record-level errors. If the dry run is successful, you can proceed to create the import job. From there, you will be taken to the ‘Job Status’ tab. All active jobs will regularly sync new data from your data source with Arize. You can view the job details by clicking on the job ID, which reveals more information about the job.

- Delete a job if it is no longer needed or if you made an error connecting to the wrong bucket. This will set your job status as ‘deleted’ in Arize.

- Pause a job if you have a set cadence to update your table. This way, you can ‘start job’ when you know there will be new data to reduce query costs. This will set your job status as ‘inactive’ in Arize.

- Edit a file schema if you have added, renamed, or missed a column in the original schema declaration.

Step 8. Troubleshoot Import Job

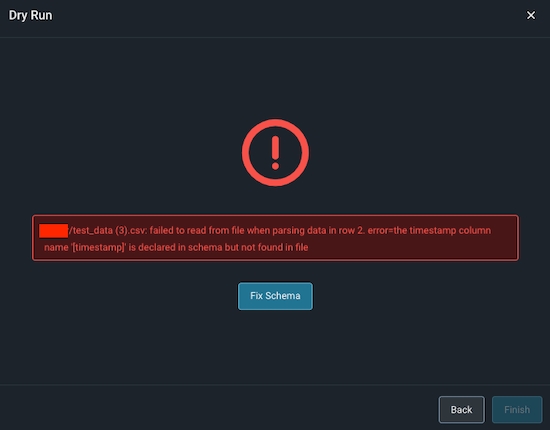

An import job may run into a few problems. Use the dry run and job details UI to troubleshoot and quickly resolve data ingestion issues.Validation Errors

If there is an error validating a file the model schema, Arize will surface an actionable error message. From there, click on the ‘Fix Schema’ button to adjust your model schema.

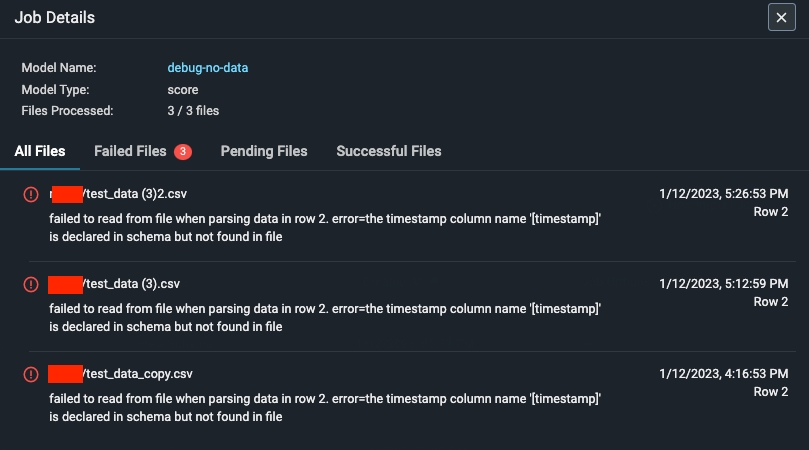

Dry Run File/Table Passes But The Job Fails

If your dry run is successful, but your job fails, click on the job ID to view the job details. This uncovers job details such as information about the file path or query id, the last import job, potential errors, and error locations.

Applying Bucket Policy & Tag via Terraform

An alternative to applying new IAM permissions via a binding (

google_storage_bucket_iam_binding), which is limited to the current role and could be lost in complex terraform deployments, is to modify the actual policy (google_storage_bucket_iam_policy). This is the most authoritative method of configuration and can be utilized for large enterprise deployments of Google Cloud services.More details: https://registry.terraform.io/providers/hashicorp/google/latest/docs/resources/storage_bucket_iamNote: If you elect to manage IAM privileges for the Arize user via a policy configuration, verify that all configurations are correct as it is a powerful enough configuration that users can mistakenly lock themselves out of their own projects.