Resource Hub

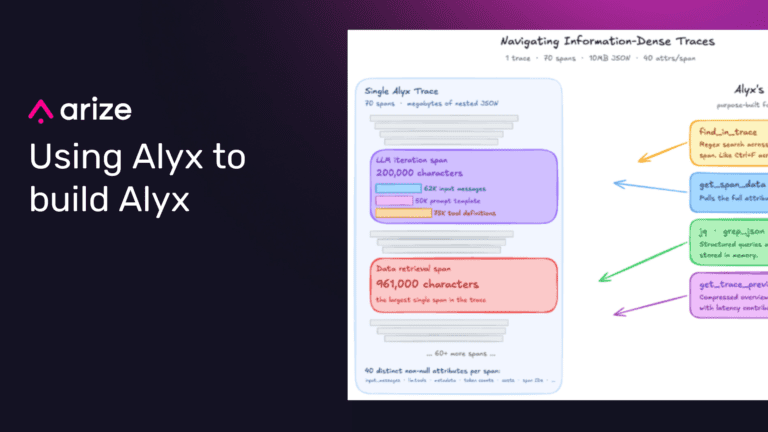

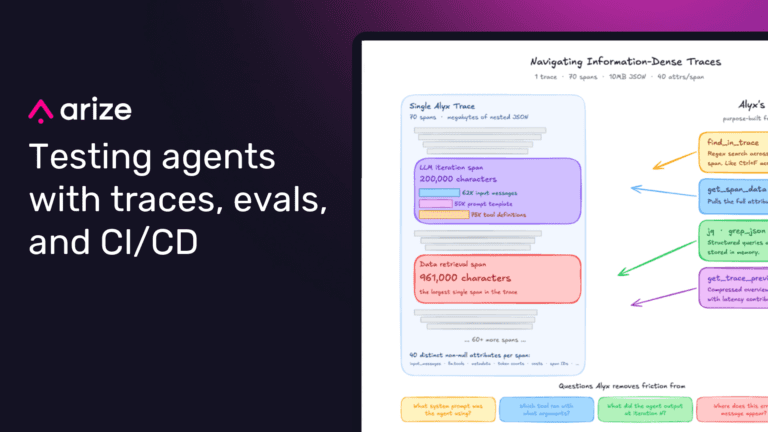

How we use Alyx to build Alyx: How to build an AI agent feedback loop

How Arize uses Alyx to debug Alyx: searching dense traces, aggregating failures, triaging dogfooding issues, and closing the AI engineering feedback loop.

AI agent analytics: A buyer’s guide

What to measure, how to evaluate, and what to require when buying analytics for production AI agents—spans, traces, sessions, and closed-loop engineering.

Agent observability: A buyer’s guide

Agent evaluation metrics: how to measure whether an agent works

Models got an order of magnitude better at following instructions in one year

A year ago, frontier models started losing track of instructions somewhere around 200–300 simultaneous constraints. With 2026 models, that ceiling is closer to 2,000 — an order-of-magnitude jump. We re-ran IFScale to see how, and how each model fails.

From observability to context: What’s next for Arize Phoenix

As agents start changing software, they need a way to verify their work that includes traces, evals, feedback, and APIs. This is where Phoenix goes next — not the next release, but what this product becomes.

Agent harnesses have an expiration date

A benchmark-driven look at why agent harnesses need adaptive finish logic as model behavior changes across Claude, GPT-4o, and Gemma.

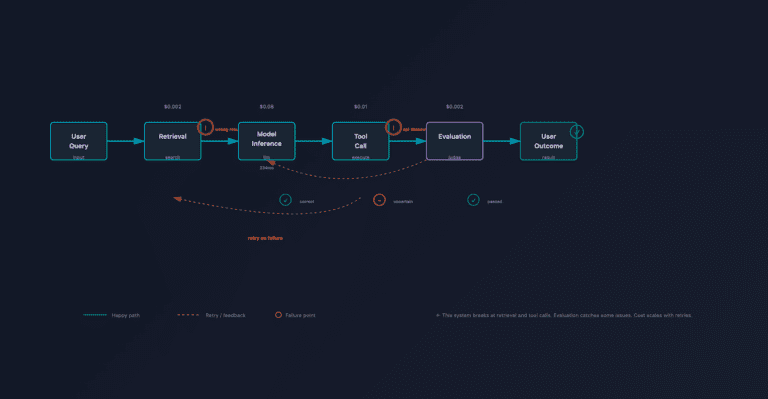

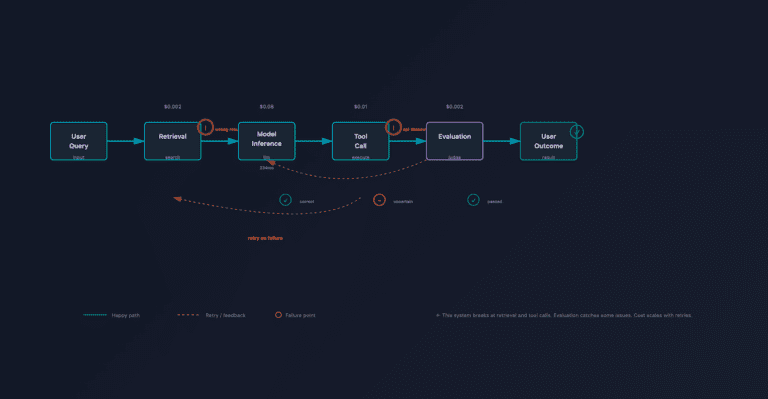

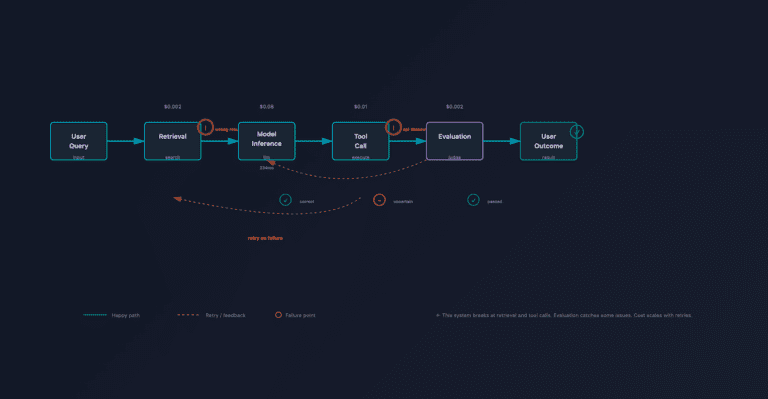

AI agent evaluation: How to test, debug, and improve agents in production

Lessons from building and shipping Alyx, our AI agent

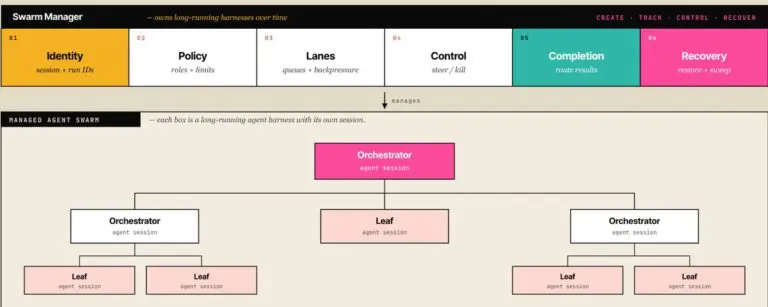

Swarm management in agent harnesses: owning long-running agents

As we have built our own harness management tools internally at Arize, and watched external systems like Devin @cognition start managing other Devins, managed agents at @AnthropicAI and long-running...

LLM observability & evals: Proving AI ROI

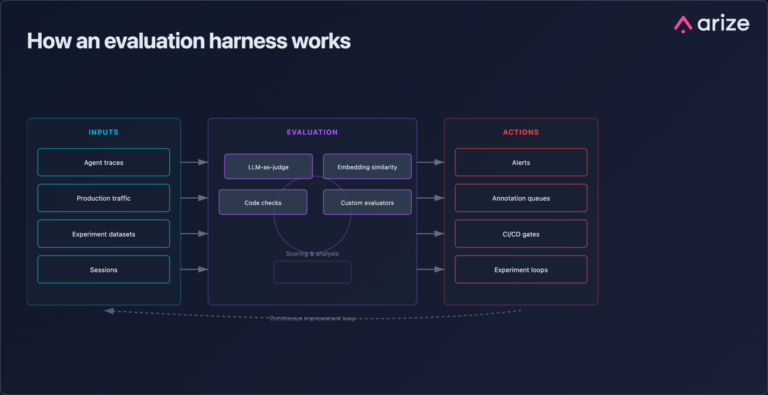

What is an evaluation harness?

An evaluation harness is the standardized infrastructure that decides what gets evaluated, runs the evaluation, and acts on the result.

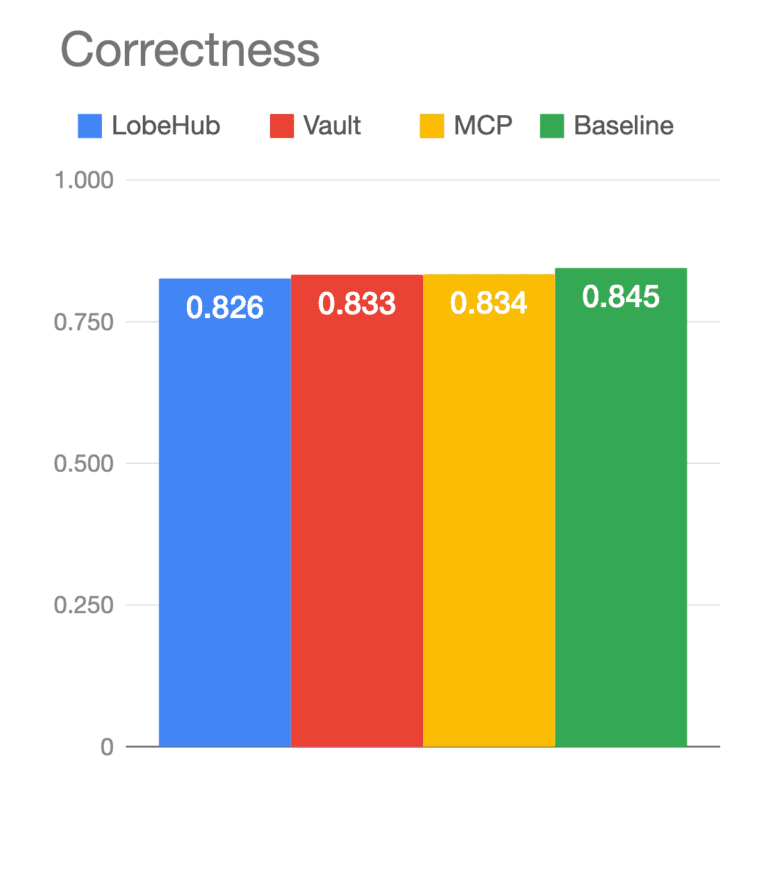

MCP vs. CLI Skills for agents: what our eval found (and which you should use)

Twitter said pick a side. The eval said the question was wrong. Six months ago, MCP (model context protocol) was the hot new thing: tool usage with a built-in discovery...
