Trace the Exponential

The open-source platform for agent development and evaluation

Used by AI engineers and Fortune 500 companies alike

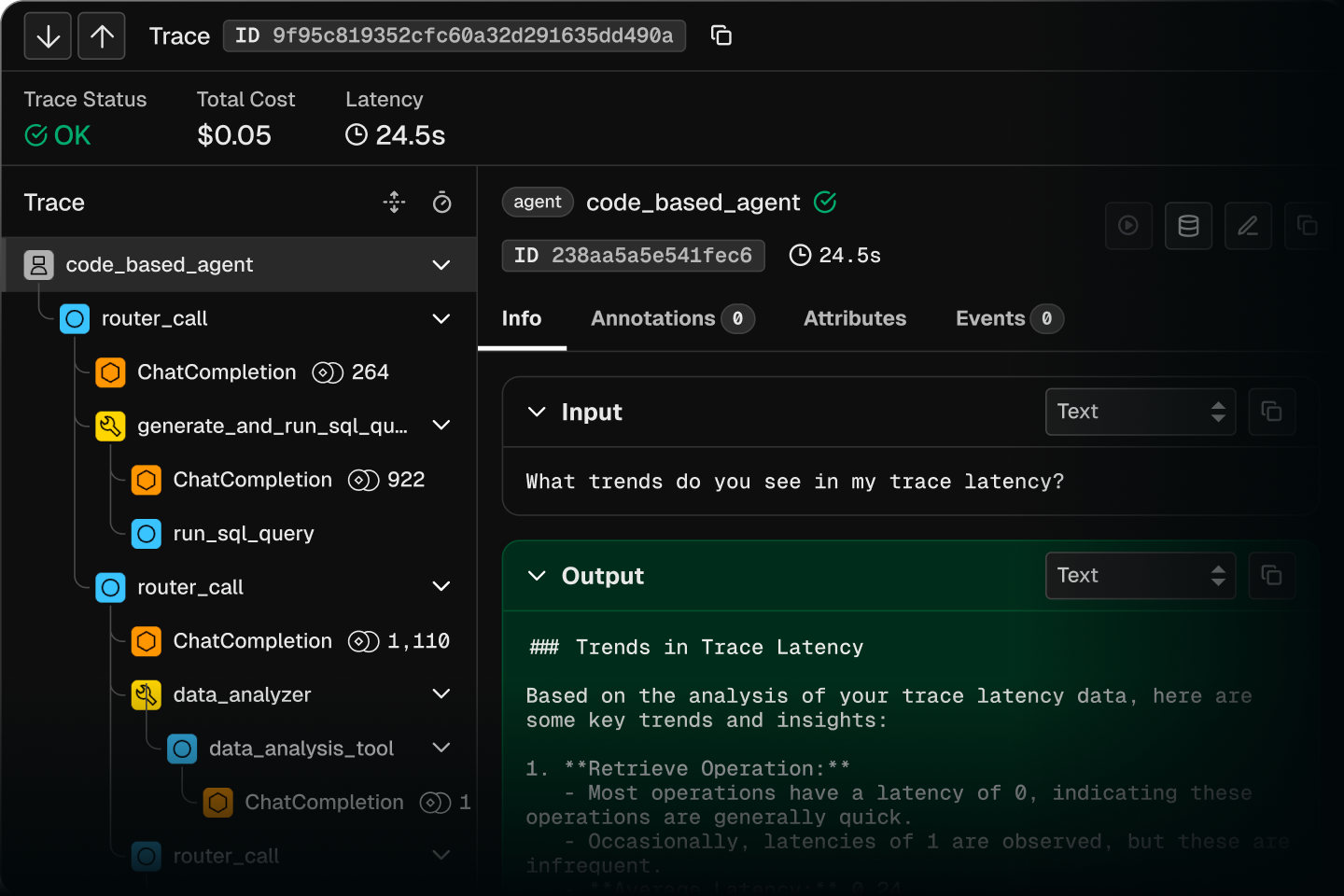

Get visibility into your agents

Without tracing, you’re flying blind. Phoenix shows every step your agent takes so you know what went wrong.
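To make "every step your agent takes" concrete, here is a minimal, stdlib-only sketch of what a trace records. Each step (an LLM call, a retrieval, a tool call) becomes a span with a parent, so one agent run forms a tree you can inspect. The `Tracer` and `Span` names are hypothetical illustrations, not Phoenix's API. Phoenix itself ingests OpenTelemetry spans.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    """One recorded step of an agent run (hypothetical shape, not Phoenix's schema)."""
    span_id: int
    parent_id: Optional[int]
    name: str
    attributes: dict = field(default_factory=dict)
    duration_s: float = 0.0


class Tracer:
    """Minimal in-memory tracer: nested `with` blocks become a tree of spans."""

    def __init__(self) -> None:
        self.spans: list[Span] = []
        self._open: list[int] = []  # stack of ids for spans that are still running

    @contextmanager
    def span(self, name: str, **attributes):
        parent = self._open[-1] if self._open else None
        s = Span(len(self.spans), parent, name, dict(attributes))
        self.spans.append(s)
        self._open.append(s.span_id)
        start = time.perf_counter()
        try:
            yield s  # the caller can attach outputs while the step runs
        finally:
            s.duration_s = time.perf_counter() - start
            self._open.pop()

    def render(self) -> str:
        """Return the span tree as indented text, children in recording order."""
        lines: list[str] = []

        def walk(parent: Optional[int], depth: int) -> None:
            for s in self.spans:
                if s.parent_id == parent:
                    lines.append("  " * depth + s.name)
                    walk(s.span_id, depth + 1)

        walk(None, 0)
        return "\n".join(lines)


if __name__ == "__main__":
    tracer = Tracer()
    with tracer.span("agent", input="What is 2 + 2?"):
        with tracer.span("llm.plan"):
            pass
        with tracer.span("tool.calculator", expression="2 + 2") as step:
            step.attributes["output"] = 4
        with tracer.span("llm.answer"):
            pass
    print(tracer.render())
```

Running it prints `agent` with `llm.plan`, `tool.calculator`, and `llm.answer` indented beneath it. When a response looks wrong, this parent/child structure is what lets you find the step that went wrong.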

Measure and improve agent quality

Build evals that score outputs and catch issues before they reach your users.
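An eval is a function that takes an output and returns a label or score. The sketch below is a hypothetical code-based evaluator that checks for required facts and a length budget. An LLM-as-judge evaluator would have the same shape but ask a model for the label. `EvalResult` and `heuristic_eval` are illustrative names, not Phoenix's API.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    label: str        # "pass" or "fail"
    score: float      # fraction of checks that passed, in [0, 1]
    explanation: str  # human-readable reason, useful when reviewing failures


def heuristic_eval(output: str, required: list[str], max_chars: int = 500) -> EvalResult:
    """Hypothetical evaluator: required facts are present and output is within a length budget."""
    text = output.lower()
    missing = [kw for kw in required if kw.lower() not in text]
    too_long = len(output) > max_chars
    # One check per required keyword, plus one for the length budget.
    checks = [kw not in missing for kw in required] + [not too_long]
    score = sum(checks) / len(checks)
    problems = []
    if missing:
        problems.append("missing: " + ", ".join(missing))
    if too_long:
        problems.append(f"too long ({len(output)} > {max_chars} chars)")
    label = "fail" if problems else "pass"
    return EvalResult(label, score, "; ".join(problems) or "all checks passed")


if __name__ == "__main__":
    print(heuristic_eval("Paris is the capital of France.", ["Paris", "France"]))
    print(heuristic_eval("The capital is Lyon.", ["Paris"]))
```

The first call passes with a score of 1.0. The second fails with a score of 0.5 and the explanation `missing: Paris`. Returning an explanation along with the score makes failures easier to triage.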

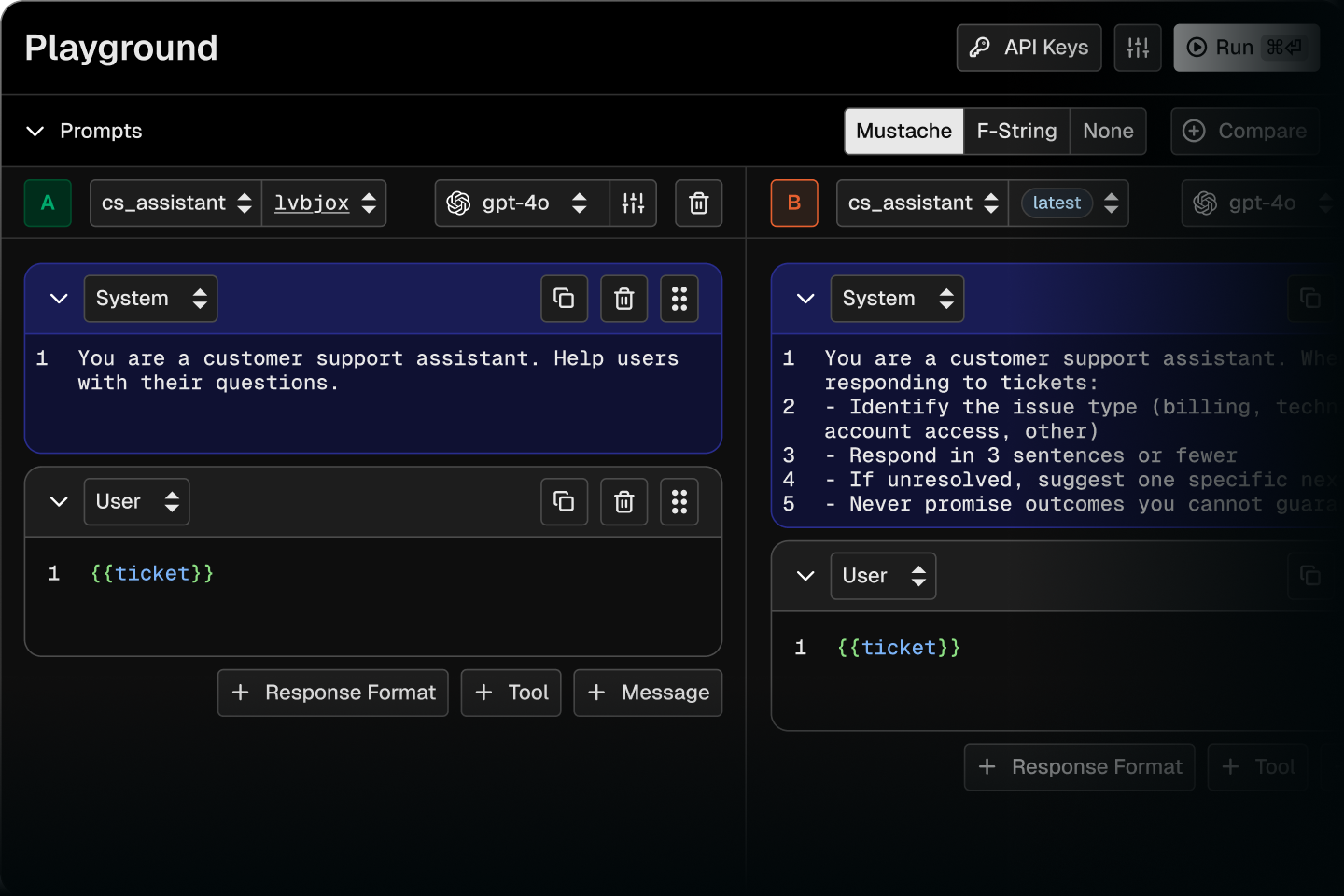

Test changes with evidence

Create datasets from traces, run experiments, and ship improvements.
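The traces-to-dataset-to-experiment loop can be sketched in plain Python. This sketch assumes each trace has been flattened to an input, an output, and a review label, and that someone has supplied corrected answers for the failures. `TraceRecord`, `dataset_from_traces`, and `run_experiment` are hypothetical names, not Phoenix's API. The two lookup tables stand in for a baseline agent and a changed agent.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class TraceRecord:
    """Hypothetical flattened trace: what the agent was asked, what it said, and a review label."""
    input: str
    output: str
    label: str  # e.g. "correct" / "incorrect", from human review or an LLM judge


@dataclass
class Example:
    input: str
    expected: str


def dataset_from_traces(traces: list[TraceRecord], corrections: dict[str, str]) -> list[Example]:
    """Keep traces labeled incorrect that have a corrected reference answer."""
    return [
        Example(t.input, corrections[t.input])
        for t in traces
        if t.label == "incorrect" and t.input in corrections
    ]


def run_experiment(
    dataset: list[Example],
    task: Callable[[str], str],
    evaluator: Callable[[str, str], bool],
) -> float:
    """Run `task` on every example and return the fraction the evaluator accepts."""
    if not dataset:
        return 0.0
    passed = sum(evaluator(task(ex.input), ex.expected) for ex in dataset)
    return passed / len(dataset)


def exact_match(output: str, expected: str) -> bool:
    return output.strip().lower() == expected.strip().lower()


if __name__ == "__main__":
    traces = [
        TraceRecord("Capital of France?", "Lyon", "incorrect"),
        TraceRecord("2 + 2?", "4", "correct"),
        TraceRecord("Capital of Italy?", "Milan", "incorrect"),
    ]
    corrections = {"Capital of France?": "Paris", "Capital of Italy?": "Rome"}
    dataset = dataset_from_traces(traces, corrections)

    # Stand-ins for the old and new agent; a real task would call your agent.
    old_agent = {"Capital of France?": "Lyon", "Capital of Italy?": "Milan"}
    new_agent = {"Capital of France?": "Paris", "Capital of Italy?": "Milan"}
    print(run_experiment(dataset, lambda q: old_agent.get(q, ""), exact_match))  # 0.0
    print(run_experiment(dataset, lambda q: new_agent.get(q, ""), exact_match))  # 0.5
```

The dataset contains only the two failures, so each experiment directly measures whether a change fixes known problems.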

Agent Native

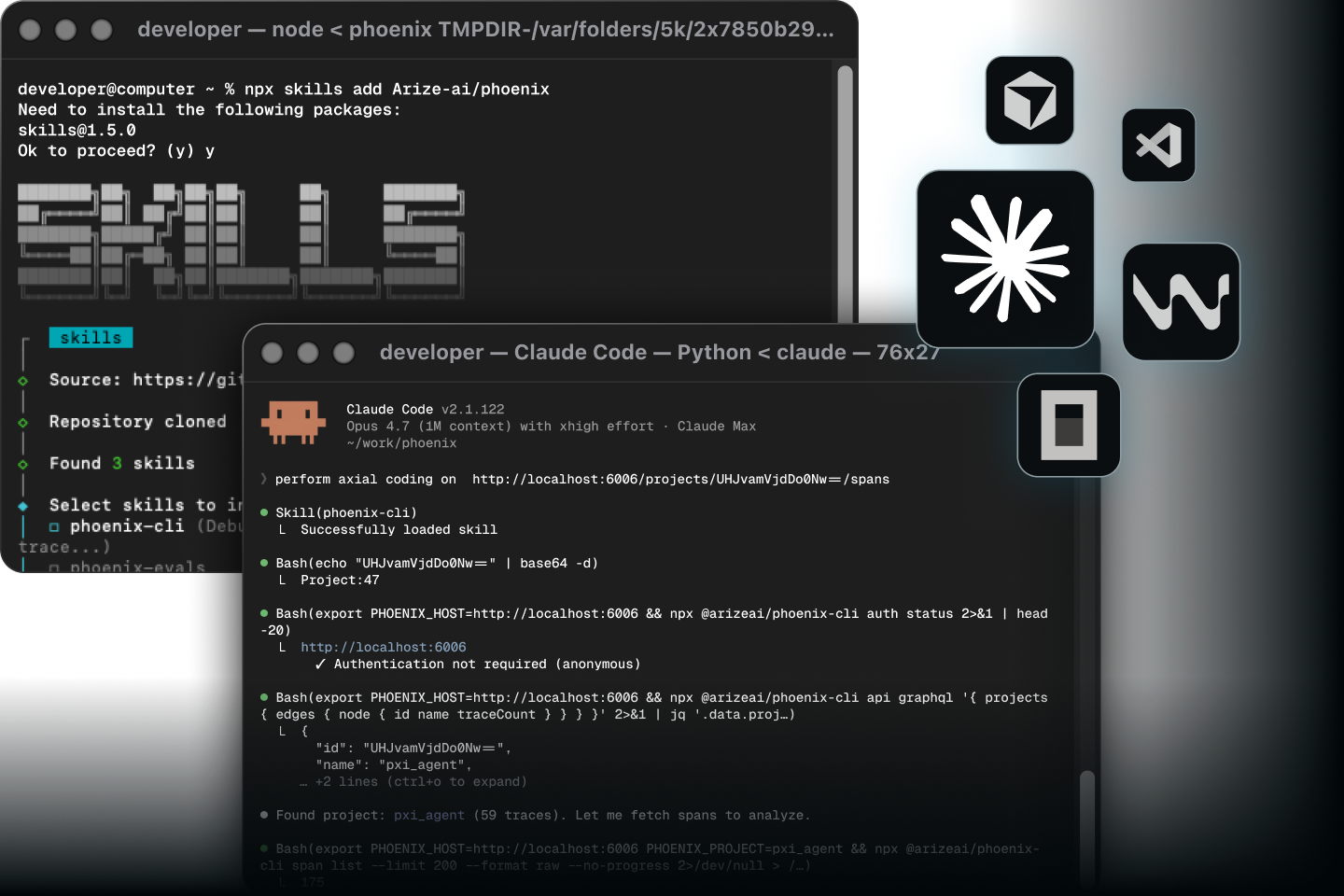

npx skills add Arize-ai/phoenix

Build automations using coding agents so you can focus on the things that matter.

A systematic way to improve AI quality

OBSERVE

1. Why did my agent respond like that? Trace every step: prompts, retrievals, tool calls, outputs.

ANNOTATE

2. Mark what worked. Flag what broke. Add labels with human review or LLM-as-judge.

HYPOTHESIZE

3. Find the pattern. Is it the prompt? The retrieval? The model? Form a hypothesis about what to change.

EXPERIMENT

4. Test your hypothesis. Make a change, run it under the same conditions, and benchmark performance.

MEASURE

5. Score outputs across cost, latency, and performance. Ship only what improves quality.
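The final step of this loop ends in a ship or no-ship decision. Here is a minimal sketch of that gate. It assumes each experiment run yields a quality score, a latency, and a cost per example. The candidate ships only if mean quality improves and latency and total cost stay within a relative budget. The `RunResult` fields and the 10% tolerances are hypothetical choices for illustration, not Phoenix defaults.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RunResult:
    """One scored output from an experiment run (hypothetical fields)."""
    score: float      # quality score in [0, 1]
    latency_s: float  # wall-clock time for this example
    cost_usd: float   # token or API cost for this example


def summarize(runs: list[RunResult]) -> dict[str, float]:
    return {
        "quality": mean(r.score for r in runs),
        "latency_s": mean(r.latency_s for r in runs),
        "cost_usd": sum(r.cost_usd for r in runs),
    }


def should_ship(
    baseline: list[RunResult],
    candidate: list[RunResult],
    max_latency_increase: float = 0.10,
    max_cost_increase: float = 0.10,
) -> bool:
    """Ship only if quality improves and latency/cost stay within a relative budget."""
    b, c = summarize(baseline), summarize(candidate)
    better = c["quality"] > b["quality"]
    latency_ok = c["latency_s"] <= b["latency_s"] * (1 + max_latency_increase)
    cost_ok = c["cost_usd"] <= b["cost_usd"] * (1 + max_cost_increase)
    return better and latency_ok and cost_ok


if __name__ == "__main__":
    baseline = [RunResult(0.6, 1.0, 0.010), RunResult(0.8, 1.2, 0.012)]
    candidate = [RunResult(0.9, 1.1, 0.011), RunResult(0.9, 1.1, 0.012)]
    pricey = [RunResult(0.95, 1.0, 0.05), RunResult(0.95, 1.0, 0.05)]
    print(should_ship(baseline, candidate))  # True: better quality, within budget
    print(should_ship(baseline, pricey))     # False: better quality, but cost blows the budget
```

Requiring all three conditions to hold prevents a quality gain from hiding a large regression in cost or latency.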

Built by engineers, for builders like you.

Own your AI stack

Privacy

Your traces stay in your environment. Self-host Phoenix and keep sensitive data on your own infrastructure.

OSS Community

Licensed under ELv2 (Elastic License 2.0). 9k+ GitHub stars. Built in the open with contributions from teams building production agents.

Built on Standards

Native OpenTelemetry support. Your traces work with the tools you already use. No proprietary lock-in.

Vendor Agnostic

Phoenix works with any model, framework, or language. No lock-in.

Deploy anywhere in seconds

Kubernetes

Deploy Phoenix with Helm. Manage, upgrade, and scale your Phoenix instance on Kubernetes.

Cloud

Get 2 Phoenix Cloud instances for free. Start building with no infrastructure setup required.

Trusted by Builders

Join thousands of developers who ship AI with confidence.

2.5M+ monthly downloads

9k+ GitHub stars

7k+ community members

2.4M+ monthly OpenTelemetry instrumentation downloads

Get started in seconds

Agent Mastery

Learn to build, debug, and evaluate AI agents

Docs

Quickstarts, API reference, and integration guides