Getting Started With Embeddings Is Easier Than You Think

A quick guide to understanding embeddings, including their real-world applications and how to compute them

Written in collaboration with Aparna Dhinakaran


Imagine you are an engineer at a promising chatbot startup aimed at helping people find the medical care they need quickly. You have millions of chat interactions between customers and medical personnel. You’re building a model to route questions to different departments in hospitals and clinics. Since machine learning is math, not magic, you need to somehow “explain” to your model that “sprained ankle” and “swollen foot” may be similar queries. How do you do that?

Enter embeddings.

What Are Embeddings?

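Embeddings are dense, lower-dimensional vector representations of high-dimensional data such as text, images, or audio. Because inputs with similar meaning map to nearby vectors, embeddings are a powerful tool for representing input data, compressing it, and facilitating collaboration across teams.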
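To make "nearby vectors" concrete, here is a minimal sketch using cosine similarity, one common way to compare embeddings. The three 4-dimensional vectors are hypothetical and hand-picked for illustration; a real model would produce learned vectors with many more dimensions.

```python
import numpy as np

# Hypothetical 4-dimensional embeddings, hand-picked for illustration only.
# A real embedding model would output learned vectors with many more dimensions.
embeddings = {
    "sprained ankle": np.array([0.9, 0.1, 0.8, 0.0]),
    "swollen foot": np.array([0.8, 0.2, 0.9, 0.1]),
    "billing question": np.array([0.0, 0.9, 0.1, 0.8]),
}


def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    """Cosine of the angle between two vectors: 1.0 means identical direction."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


ankle_vs_foot = cosine_similarity(embeddings["sprained ankle"], embeddings["swollen foot"])
ankle_vs_billing = cosine_similarity(embeddings["sprained ankle"], embeddings["billing question"])

print(f"sprained ankle vs swollen foot:     {ankle_vs_foot:.3f}")
print(f"sprained ankle vs billing question: {ankle_vs_billing:.3f}")
```

With these toy values, the two medical queries score close to 1.0 while the billing query scores far lower, which is the kind of signal a routing model can use.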

Embeddings can be generated in many ways, but engineers need to weigh the size, accuracy, and usability of the resulting representation. Balancing these trade-offs is a recurring problem in machine learning, and because embeddings change whenever the model that produces them changes, versioning them properly is both challenging and critical.
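As one illustration of the size/accuracy trade-off, the sketch below uses truncated SVD on a word-by-document count matrix (the idea behind latent semantic analysis). This is only one of many generation methods and not necessarily what any particular product uses; the vocabulary and counts are hypothetical. The embedding size `k` is the knob: a smaller `k` gives more compact vectors but loses more information.

```python
import numpy as np

# Hypothetical word-by-document count matrix: rows are words, columns are
# chat transcripts. All values are made up for illustration.
vocab = ["ankle", "foot", "swollen", "sprained", "invoice", "payment"]
counts = np.array([
    [3, 2, 0, 0],  # ankle
    [1, 3, 0, 0],  # foot
    [2, 2, 0, 1],  # swollen
    [3, 1, 0, 0],  # sprained
    [0, 0, 4, 2],  # invoice
    [0, 0, 3, 3],  # payment
], dtype=float)


def svd_embeddings(matrix: np.ndarray, k: int) -> np.ndarray:
    """Return a k-dimensional embedding per row via truncated SVD (as in LSA)."""
    u, s, _vt = np.linalg.svd(matrix, full_matrices=False)
    return u[:, :k] * s[:k]  # scale each kept left singular vector by its singular value


def reconstruction_error(matrix: np.ndarray, k: int) -> float:
    """Frobenius-norm error of the rank-k approximation: lower means less information lost."""
    u, s, vt = np.linalg.svd(matrix, full_matrices=False)
    approx = (u[:, :k] * s[:k]) @ vt[:k, :]
    return float(np.linalg.norm(matrix - approx))


for k in (1, 2, 3, 4):
    emb = svd_embeddings(counts, k)
    print(f"k={k}: embedding shape {emb.shape}, error {reconstruction_error(counts, k):.3f}")
```

The error can only stay the same or shrink as `k` grows, so choosing `k` is a direct trade between how compact the embeddings are and how faithfully they preserve the original data.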

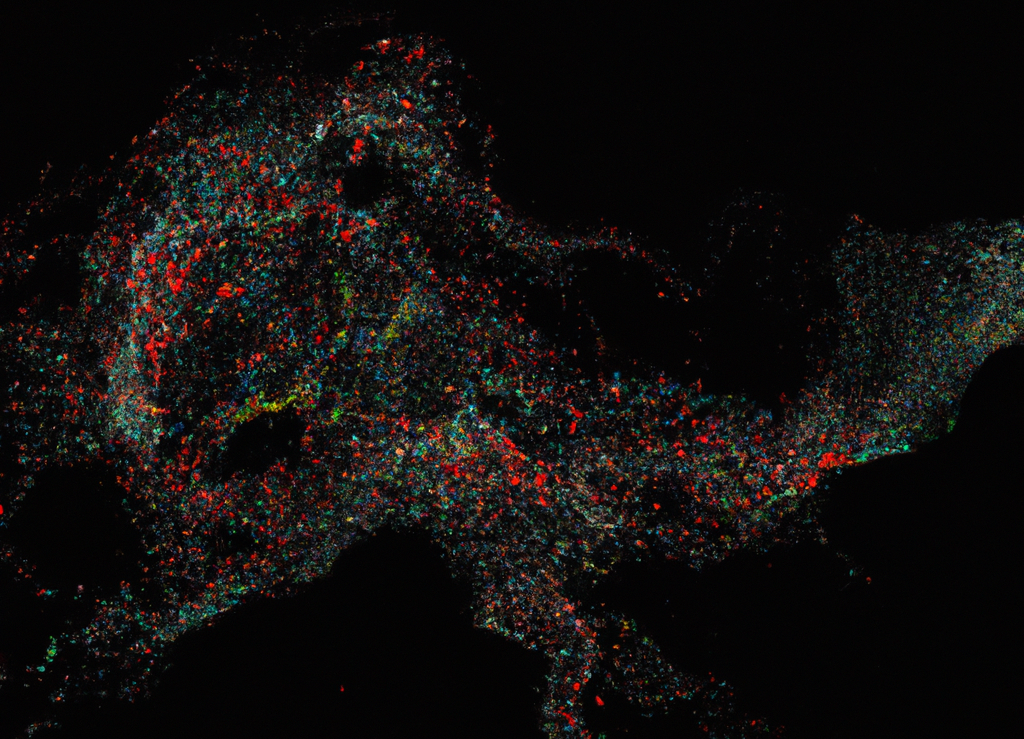

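Although embeddings drastically reduce the dimensionality of input features, they often still have hundreds of dimensions, which makes them hard to inspect directly. Additional dimensionality reduction techniques such as UMAP project them down to two or three dimensions so they can be visualized.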
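A minimal sketch of projecting embeddings to 2D for plotting is below. To keep it dependency-free, it swaps in PCA (a linear method computed with NumPy's SVD) rather than UMAP, which is nonlinear and tends to better preserve local neighborhoods. The input is hypothetical: random 768-dimensional vectors scattered around two cluster centers, standing in for real model output. With the `umap-learn` package installed, `umap.UMAP(n_components=2).fit_transform(vectors)` would fill the same role as `pca_2d` here.

```python
import numpy as np


def pca_2d(embeddings: np.ndarray) -> np.ndarray:
    """Project an (n, d) array of embeddings onto its top two principal components."""
    centered = embeddings - embeddings.mean(axis=0)
    # Rows of vt are principal directions, ordered by decreasing explained variance.
    _u, _s, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:2].T


# Hypothetical stand-in for model output: 100 vectors of 768 dimensions,
# each drawn near one of two random cluster centers.
rng = np.random.default_rng(seed=0)
centers = rng.normal(size=(2, 768))
labels = rng.integers(0, 2, size=100)
vectors = centers[labels] + 0.1 * rng.normal(size=(100, 768))

points = pca_2d(vectors)
print(points.shape)  # (100, 2)
```

The resulting `points` can be passed to any scatter-plot tool; in this toy data the two clusters land on opposite sides of the first axis.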