Production scale from day one. Stable architecture with the benchmarks to prove it. Agent-first workflows. An AI engineering agent that closes the loop.

Architecture that matches the claim.

Marketed for production. Built for dev.

Strong prompt iteration. Real dev-loop value. But when you’re running multiple agents at scale, costs climb fast and stability matters. That’s where the architecture shows its limits.

What AI builders are saying

from field interviews

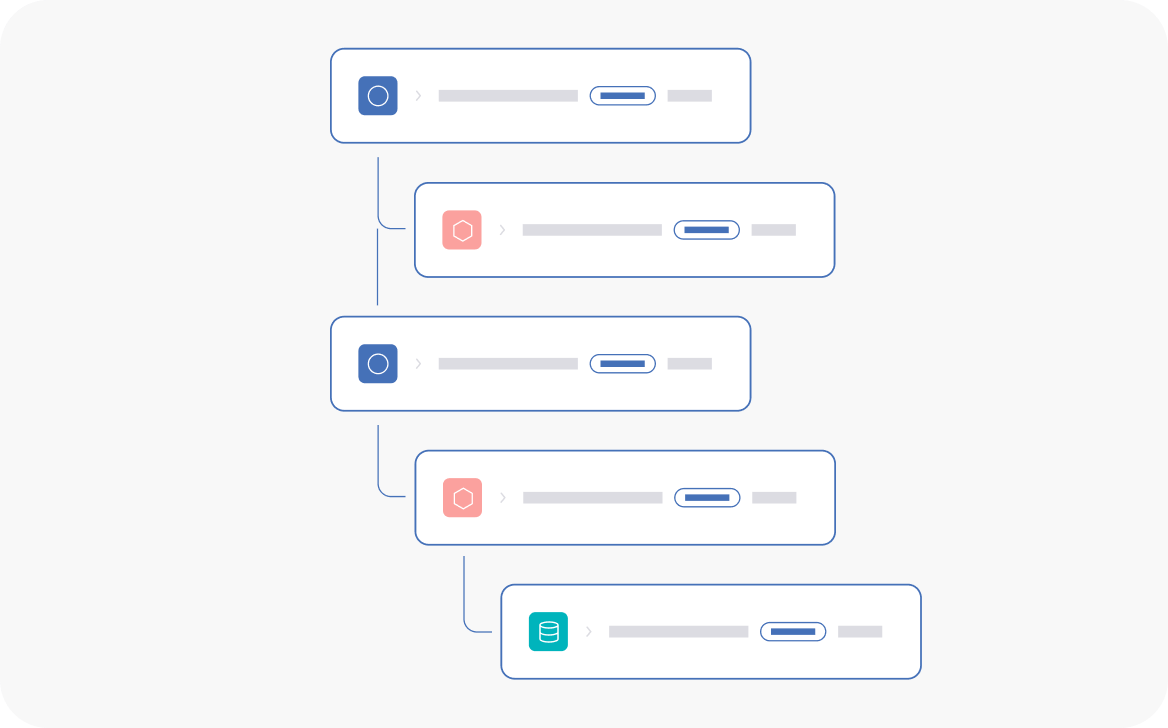

Deeper observability

“The granularity of the agent traces and the way we are able to see each step – like the agent graph and the agent timeline, the decision path and all agent tool-calling paths – that’s exciting and stands out.”

Proven architecture

“Arize is just further ahead across the board. We had 200 people on Braintrust with 16 LLM applications. We moved away due to runaway costs and server stability issues at scale.”

Built for production agents

“We needed a solution that works across experimental workflows straight through to production – Arize has a much more robust production suite once you’re running multiple agents.”

Where the architecture diverges

Shallow roots show at scale

Retrofitted for production

Braintrust’s schema is fixed: input, output, metadata. All your trace and span context gets dumped into those buckets. Query something specific? Parse it out yourself.
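To make "parse it out yourself" concrete, here is a minimal sketch. It assumes a hypothetical span record with only the three top-level fields named above; the nested `tool_calls` layout, the field names inside it, and the `tool_latency` helper are illustrative assumptions, not any vendor's actual format or API.

```python
# Hypothetical span record: only input, output, and metadata at the top level.
# The shape of "metadata" below is an assumption made for illustration.
span = {
    "input": "What is the refund policy?",
    "output": "Refunds are available within 30 days.",
    "metadata": {
        "tool_calls": [
            {"name": "search_docs", "latency_ms": 120},
            {"name": "rank", "latency_ms": 45},
        ]
    },
}


def tool_latency(span, tool_name):
    """Sum latency for one tool by walking the nested metadata by hand."""
    calls = span.get("metadata", {}).get("tool_calls", [])
    return sum(c.get("latency_ms", 0) for c in calls if c.get("name") == tool_name)


print(tool_latency(span, "search_docs"))
```

Every question about a specific tool, step, or attribute needs its own hand-written walk like this when that context is not a first-class, queryable field.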

A purpose-built datastore for agent eval telemetry is a no-brainer. They built Brainstore to catch up. We built adb to handle the schema complexity of real agent production traces.

If it’s useful, we can run a quick ingestion + query test on one of your largest datasets.

Surface-level agent evaluation

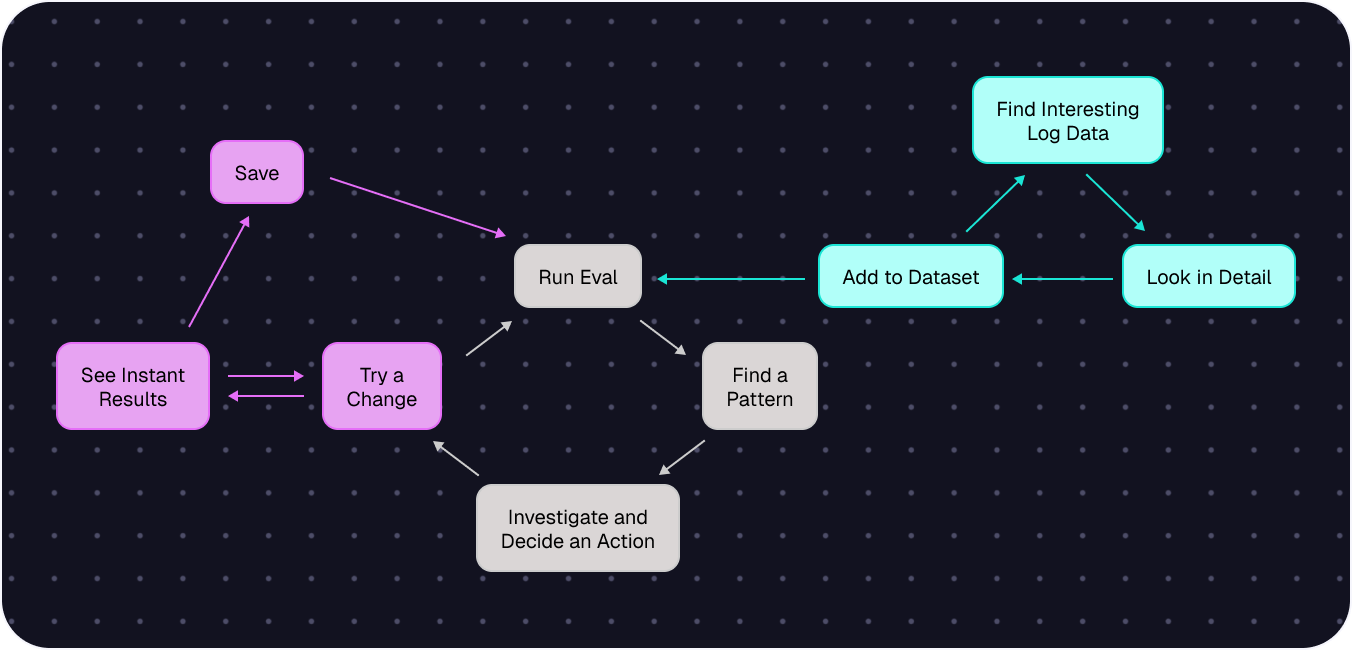

Braintrust traces agent runs, but Arize AX goes deeper.

Path evals measure if your agent took the optimal route. Convergence evals catch loops and unnecessary backtracking. Session evals track coherence across multi-turn interactions.

Braintrust captures what happened; for teams debugging why an agent made a specific choice, the eval depth isn’t there.
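As a rough illustration of what path and convergence evals measure, here is a minimal sketch. It is not Arize AX's implementation; the function names, the step labels, and the idea of a known reference path length are assumptions made for the example.

```python
from collections import Counter


def path_efficiency(steps, optimal_length):
    """Ratio of the reference path length to the steps actually taken (1.0 = optimal)."""
    if not steps:
        return 0.0
    return min(1.0, optimal_length / len(steps))


def repeated_steps(steps):
    """Return steps that occur more than once, a rough signal of loops or backtracking."""
    counts = Counter(steps)
    return {step: n for step, n in counts.items() if n > 1}


# Hypothetical agent run: the agent searched and read twice before answering.
run = ["plan", "search", "read", "search", "read", "answer"]
print(path_efficiency(run, optimal_length=4))
print(repeated_steps(run))
```

A score well below 1.0, or a non-empty set of repeated steps, flags a run worth inspecting; a real evaluator would also need to tell legitimate retries apart from wasted work.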

The VPC compromise

The whole point of VPC is control. Braintrust’s hybrid model defeats that: control plane and auth live in their cloud, and version conflicts force you onto their update schedule.

Fall behind and your deployment breaks. Arize AX deploys to one Kubernetes cluster. No cloud dependencies. No forced cadence. Your data, your rules.

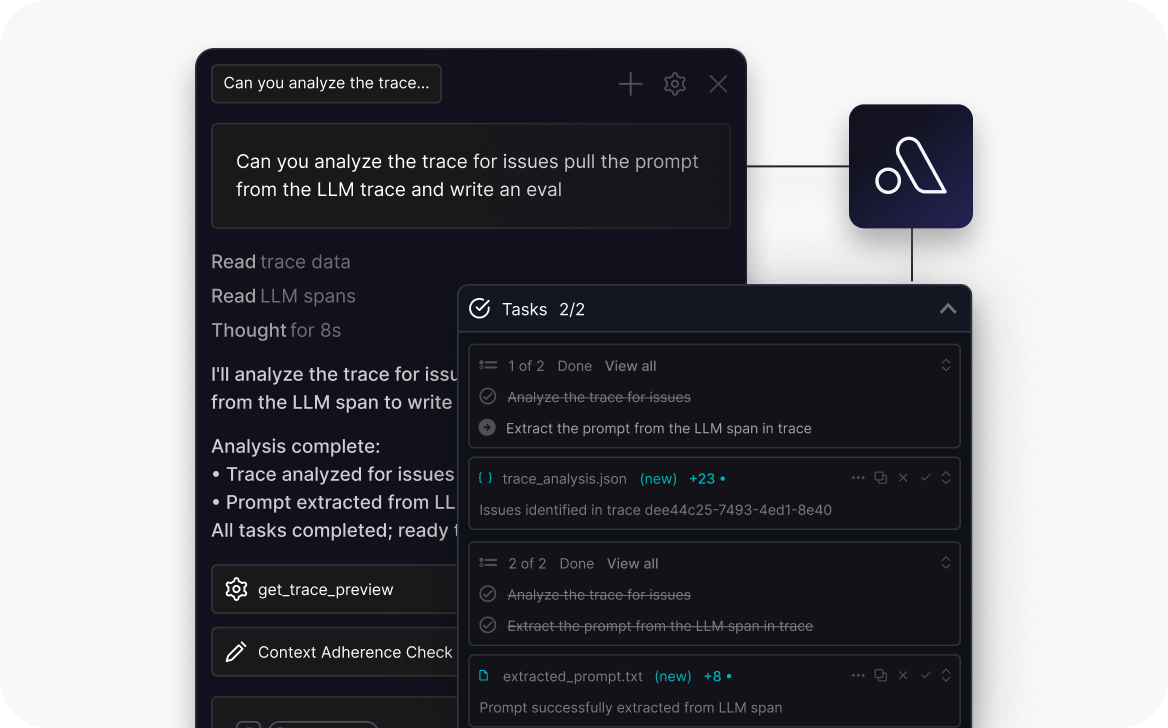

Following at a distance

Braintrust’s Loop iterates only on what you point it at. We shipped the first AI engineering agent for observability, then wrote the guide on how to build one.

Alyx finds (and fixes) what you didn’t know to look for.

When Braintrust is the right call

If your team is focused on prompt iteration and offline evaluation (building loops), and you’re not yet running agents in production at scale, Braintrust’s dev-loop tooling is solid.

Where Arize AX stands out

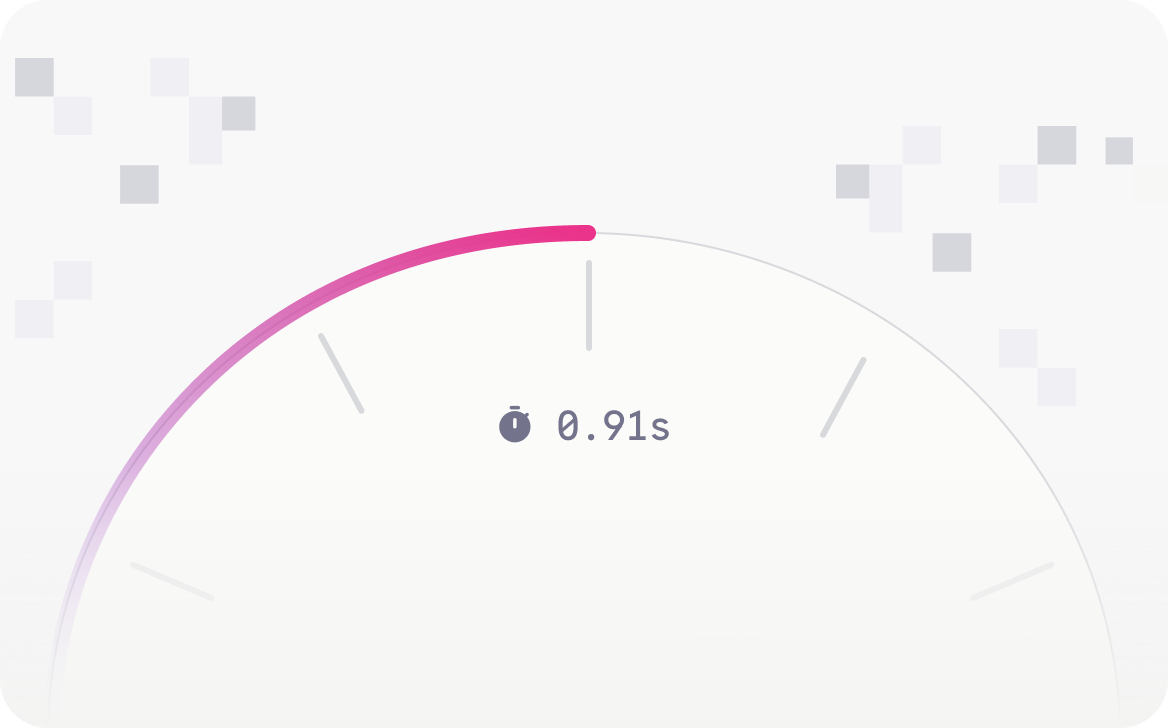

Trillions of data points. No tradeoffs.

First trace to full production visibility

Find what you didn’t know to look for

One deployment. Total control.

No split-brain risk

Predictable costs

Simpler operations

AI evolved from ML. So did we.

We’re Jason and Aparna.

We built the foundational ML infrastructure at Uber, Apple, and TubeMogul.

Before LLMs existed, we watched models break in production with no tools to fix them. So we started Arize to build those tools.

Our mission since 2020: make AI work.

ML first. Then LLMs. We shipped the first open-source library for LLM evaluation: Phoenix.

Now agents.

That’s Arize AX — the Agent Experience.