Overview

OpenLLMetry is an open-source observability package for LLM applications that provides automatic instrumentation for popular LLM frameworks and providers. This integration enables you to send OpenLLMetry traces to Arize using OpenInference semantic conventions.

Integration Type

- Tracing Integration

Key Features

- Automatic instrumentation for 20+ LLM providers and frameworks

- Seamless conversion to OpenInference semantic conventions

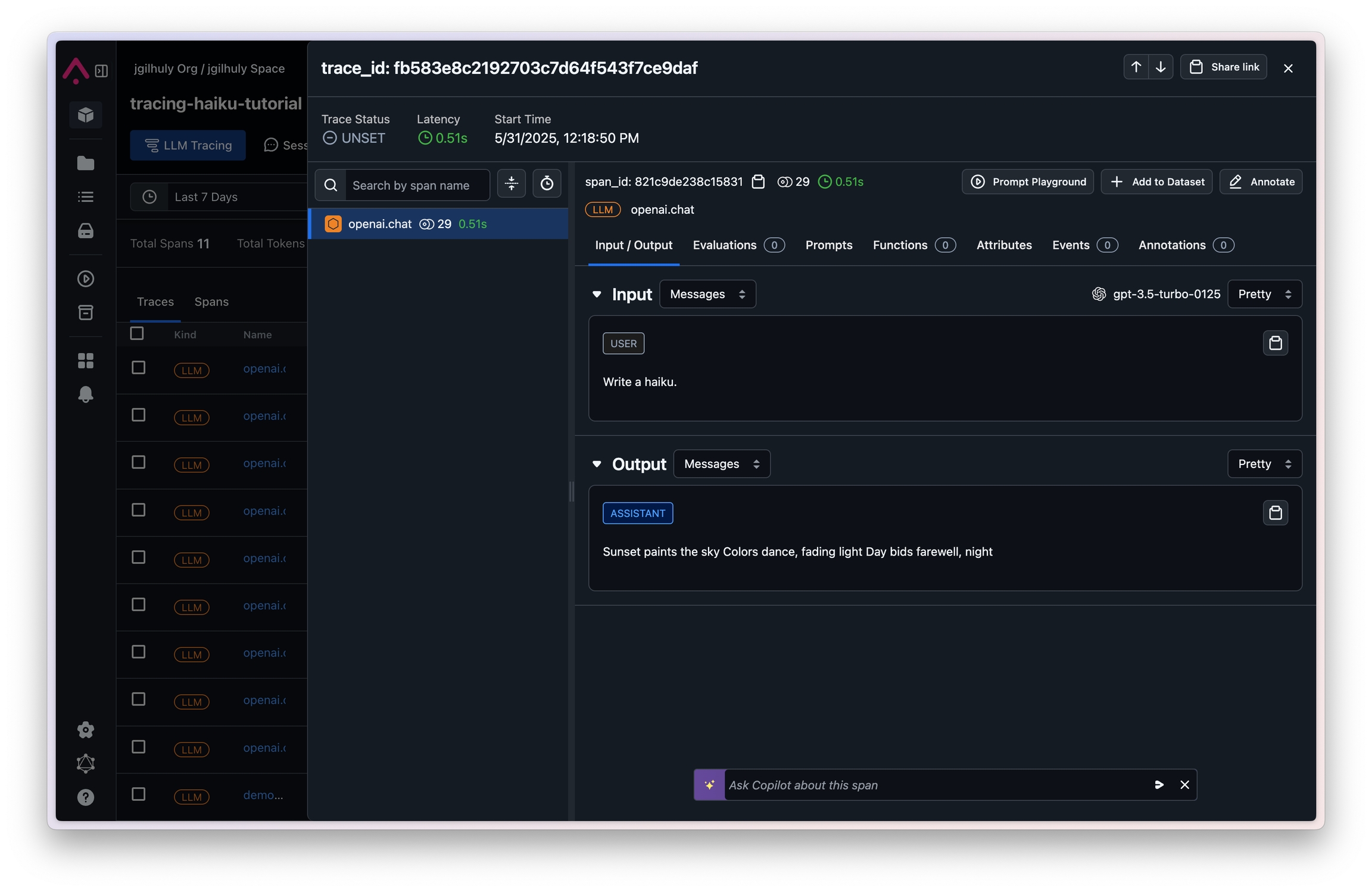

- Real-time trace collection and analysis in Arize

- Support for complex LLM workflows and chains
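
To make the conversion feature concrete, the sketch below renames a handful of span attributes from the `gen_ai.*` style that OpenLLMetry emits to their OpenInference counterparts. It is an illustration only, not the integration's actual converter: the mapping table, the `to_openinference` helper, and the sample values are assumptions chosen for this example, and a real conversion covers many more attributes.

```python
# Illustrative subset only; a real converter handles many more attributes.
# Keys: attribute names in the style OpenLLMetry emits (assumed for this sketch).
# Values: the corresponding OpenInference attribute names.
GEN_AI_TO_OPENINFERENCE = {
    "gen_ai.request.model": "llm.model_name",
    "gen_ai.usage.prompt_tokens": "llm.token_count.prompt",
    "gen_ai.usage.completion_tokens": "llm.token_count.completion",
    "llm.usage.total_tokens": "llm.token_count.total",
}


def to_openinference(attributes: dict) -> dict:
    """Return a new dict with known keys renamed and the span kind set to LLM."""
    converted = {"openinference.span.kind": "LLM"}
    for key, value in attributes.items():
        # Unknown keys are kept under their original name.
        converted[GEN_AI_TO_OPENINFERENCE.get(key, key)] = value
    return converted


# Hypothetical attributes from a single chat completion span.
example = to_openinference({
    "gen_ai.request.model": "gpt-4o-mini",
    "gen_ai.usage.prompt_tokens": 12,
    "gen_ai.usage.completion_tokens": 30,
})
print(example)
```

Keys that are not in the table pass through unchanged, so nothing is dropped in this sketch.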

Prerequisites

- Arize account with Space ID and API Key

- OpenLLMetry and OpenTelemetry packages

- Target LLM provider credentials (e.g., OpenAI API key)
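
One way to check these prerequisites before any tracing code runs is to read the credentials from environment variables and fail early if one is missing. This is a minimal sketch: `load_credentials` is a hypothetical helper, and the names `ARIZE_SPACE_ID` and `ARIZE_API_KEY` are assumptions made for the example (`OPENAI_API_KEY` is the variable the OpenAI Python client reads by default).

```python
import os


def load_credentials(env=os.environ) -> dict:
    """Collect the prerequisite credentials, raising if any is missing or empty."""
    # ARIZE_* names are assumptions for this sketch, not required names.
    required = ("ARIZE_SPACE_ID", "ARIZE_API_KEY", "OPENAI_API_KEY")
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {name: env[name] for name in required}


# Demonstration with placeholder values instead of the real environment.
demo = load_credentials({
    "ARIZE_SPACE_ID": "space-123",
    "ARIZE_API_KEY": "arize-key",
    "OPENAI_API_KEY": "openai-key",
})
print(sorted(demo))
```

In an application you would call `load_credentials()` with no arguments so that it reads from the process environment.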

Installation

Quickstart

This quickstart shows you how to view your OpenLLMetry traces in Phoenix. Install the required packages.

This example:

- Uses the OpenLLMetry instrumentor to instrument the application.

- Defines a simple OpenAI model and runs a query.

- Exports the resulting traces to Arize using a span processor.
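
The three steps above can be sketched roughly as follows. This is not the official quickstart code: the endpoint `https://otlp.arize.com/v1`, the `space_id` and `api_key` header names, the model name, and the helper functions are all assumptions made for illustration, and the step that converts spans to OpenInference conventions is left out because its API is not shown in this section. The sketch assumes the `opentelemetry-sdk`, `opentelemetry-exporter-otlp`, `opentelemetry-instrumentation-openai`, and `openai` packages are installed.

```python
def arize_headers(space_id: str, api_key: str) -> dict:
    """Build exporter authentication headers (header names are assumed)."""
    return {"space_id": space_id, "api_key": api_key}


def configure_tracing(space_id: str, api_key: str,
                      endpoint: str = "https://otlp.arize.com/v1"):
    """Instrument OpenAI calls with OpenLLMetry and export spans over OTLP."""
    # Imported lazily so this module can be loaded before the packages are installed.
    from opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporter
    from opentelemetry.instrumentation.openai import OpenAIInstrumentor
    from opentelemetry.sdk.trace import TracerProvider
    from opentelemetry.sdk.trace.export import BatchSpanProcessor

    provider = TracerProvider()
    exporter = OTLPSpanExporter(endpoint=endpoint,
                                headers=arize_headers(space_id, api_key))
    # The span processor batches finished spans and hands them to the exporter.
    provider.add_span_processor(BatchSpanProcessor(exporter))
    # OpenLLMetry's OpenAI instrumentor records a span for each OpenAI call.
    OpenAIInstrumentor().instrument(tracer_provider=provider)
    return provider


def run_query(prompt: str) -> str:
    """Send one chat completion request; the instrumentor traces it."""
    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model="gpt-4o-mini",  # placeholder model name
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content
```

Call `configure_tracing(...)` once at startup with your Space ID and API Key, then call `run_query(...)`. Instrumentation has to be in place before the first OpenAI request, otherwise that request is not traced.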

View your traces in Phoenix at http://localhost:6006.